A First Look at Flink

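Apache Flink is an open-source platform for distributed stream and batch data processing. Flink's core is a streaming dataflow engine that provides data distribution, communication, and fault tolerance for distributed computations over data streams. Flink builds batch processing on top of the streaming engine, overlaying native iteration support, managed memory, and program optimization.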

1. Downloading, installing, and starting Flink

Setup: download and start Flink

Flink runs on Linux, Mac OS X, and Windows. The only requirement for running Flink is a working Java 8.x installation. Windows users should consult the Flink on Windows guide, which describes how to run Flink locally on Windows.

You can check that Java is installed correctly by issuing the following command:

    java -version

If you have Java 8, the output will look something like this:

    java version "1.8.0_111"
    Java(TM) SE Runtime Environment (build 1.8.0_111-b14)
    Java HotSpot(TM) 64-Bit Server VM (build 25.111-b14, mixed mode)
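If several JDKs are installed, it can help to check the version from inside the JVM that will actually run Flink. The following is a minimal sketch, not part of Flink; the class name JavaVersionCheck is hypothetical, and it relies only on the standard java.specification.version system property, which Java 8 reports as "1.8" (later releases report "9", "11", and so on):

```java
// Hypothetical helper class, not part of Flink:
// reports whether the running JVM implements the Java 8 specification.
final class JavaVersionCheck {

    // Java 8 reports its specification version as "1.8"; Java 9+ report "9", "11", ...
    static boolean isJava8(String specVersion) {
        return "1.8".equals(specVersion);
    }

    public static void main(String[] args) {
        String spec = System.getProperty("java.specification.version");
        System.out.println("java.specification.version = " + spec);
        System.out.println(isJava8(spec) ? "Java 8 detected" : "Not Java 8");
    }
}
```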

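Next, download a Flink binary release (a flink-*.tgz archive; this walkthrough uses Flink 1.7.0) and unpack it:

    $ cd ~/Downloads        # Go to download directory
    $ tar xzf flink-*.tgz   # Unpack the downloaded archive
    $ cd flink-1.7.0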

2. Start a local Flink cluster

    $ ./bin/start-cluster.sh  # Start Flink

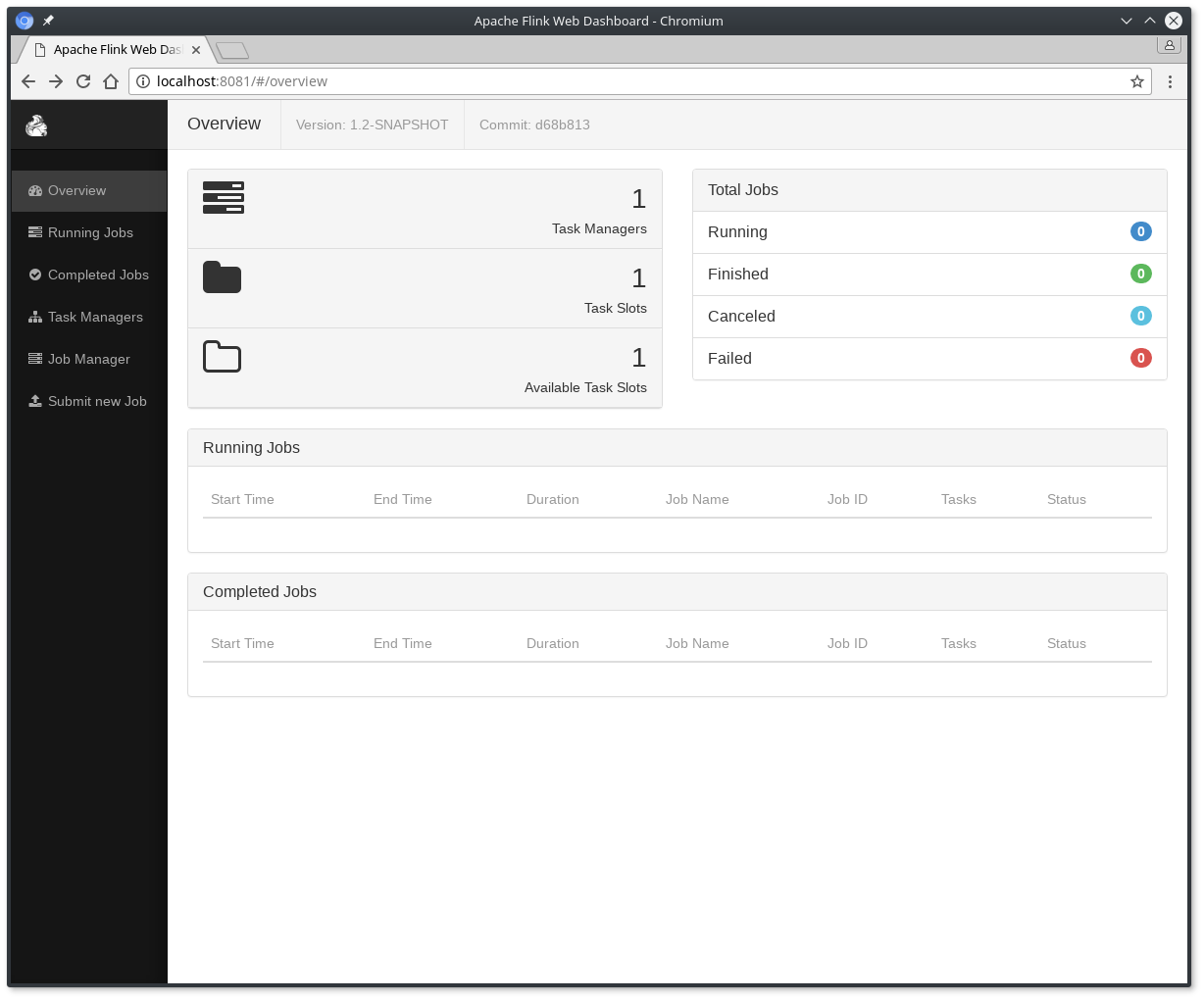

Check the web frontend at http://localhost:8081 and make sure everything is up and running. The web frontend should report a single available TaskManager instance.
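The same check can be scripted against the cluster's REST API, which is served on the same port as the web frontend. The sketch below is a hypothetical helper (ClusterOverview is not a Flink class). It assumes that the /overview endpoint of Flink's REST API returns JSON containing a taskmanagers field, as described in the Flink REST API documentation, so verify the field name against your version. It uses HttpURLConnection so that it also runs on Java 8, and a regular expression instead of a JSON library to stay dependency-free:

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.net.HttpURLConnection;
import java.net.URL;
import java.nio.charset.StandardCharsets;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper class, not part of Flink.
final class ClusterOverview {

    // Extracts the integer value of the (assumed) "taskmanagers" field from the /overview JSON.
    // A regex keeps the sketch dependency-free; real code should use a JSON library.
    static int taskManagerCount(String json) {
        Matcher m = Pattern.compile("\"taskmanagers\"\\s*:\\s*(\\d+)").matcher(json);
        if (!m.find()) {
            throw new IllegalArgumentException("no \"taskmanagers\" field in: " + json);
        }
        return Integer.parseInt(m.group(1));
    }

    // Fetches the body of GET <baseUrl>/overview using only Java 8 APIs.
    static String fetchOverview(String baseUrl) throws IOException {
        HttpURLConnection conn = (HttpURLConnection) new URL(baseUrl + "/overview").openConnection();
        conn.setConnectTimeout(2000);
        conn.setReadTimeout(2000);
        try (InputStream in = conn.getInputStream()) {
            ByteArrayOutputStream buf = new ByteArrayOutputStream();
            byte[] chunk = new byte[4096];
            int n;
            while ((n = in.read(chunk)) != -1) {
                buf.write(chunk, 0, n);
            }
            return new String(buf.toByteArray(), StandardCharsets.UTF_8);
        } finally {
            conn.disconnect();
        }
    }

    public static void main(String[] args) throws IOException {
        String json = fetchOverview("http://localhost:8081");
        System.out.println("TaskManagers registered: " + taskManagerCount(json));
    }
}
```

Run it after start-cluster.sh; on the single-machine setup described here it should report one TaskManager.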

You can also verify that the system is running by checking the log files in the log directory:

    $ tail log/flink-*-standalonesession-*.log
    INFO ... - Rest endpoint listening at localhost:8081
    INFO ... - http://localhost:8081 was granted leadership ...
    INFO ... - Web frontend listening at http://localhost:8081.
    INFO ... - Starting RPC endpoint for StandaloneResourceManager at akka://flink/user/resourcemanager .
    INFO ... - Starting RPC endpoint for StandaloneDispatcher at akka://flink/user/dispatcher .
    INFO ... - ResourceManager akka.tcp://flink@localhost:6123/user/resourcemanager was granted leadership ...
    INFO ... - Starting the SlotManager.
    INFO ... - Dispatcher akka.tcp://flink@localhost:6123/user/dispatcher was granted leadership ...
    INFO ... - Recovering all persisted jobs.
    INFO ... - Registering TaskManager ... under ... at the SlotManager.
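Checking the log can be automated in the same spirit. This hypothetical helper (StartupLogCheck, not part of Flink) reads a log file and reports which of a few marker strings, copied from the log lines above, are missing. The choice of markers is an assumption, and message texts may change between Flink versions:

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper class, not part of Flink.
final class StartupLogCheck {

    // Marker substrings taken from the standalonesession log lines shown above.
    static final List<String> MARKERS = Arrays.asList(
            "Rest endpoint listening at",
            "Starting the SlotManager.",
            "Registering TaskManager");

    // Returns the markers (in MARKERS order) that occur in none of the given log lines.
    static List<String> missingMarkers(List<String> logLines) {
        List<String> missing = new ArrayList<>();
        for (String marker : MARKERS) {
            if (logLines.stream().noneMatch(line -> line.contains(marker))) {
                missing.add(marker);
            }
        }
        return missing;
    }

    public static void main(String[] args) throws IOException {
        // args[0]: path of a file matching log/flink-*-standalonesession-*.log
        Path log = Paths.get(args[0]);
        List<String> missing = missingMarkers(Files.readAllLines(log, StandardCharsets.UTF_8));
        System.out.println(missing.isEmpty() ? "Startup looks complete" : "Missing: " + missing);
    }
}
```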

3. Reading the code

You can find the complete source code of this SocketWindowWordCount example in Scala and Java on GitHub.

- Scala

    import org.apache.flink.api.java.utils.ParameterTool
    import org.apache.flink.streaming.api.scala._
    import org.apache.flink.streaming.api.windowing.time.Time

    object SocketWindowWordCount {

      def main(args: Array[String]) : Unit = {

        // the port to connect to
        val port: Int = try {
          ParameterTool.fromArgs(args).getInt("port")
        } catch {
          case e: Exception => {
            System.err.println("No port specified. Please run 'SocketWindowWordCount --port <port>'")
            return
          }
        }

        // get the execution environment
        val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment

        // get input data by connecting to the socket
        val text = env.socketTextStream("localhost", port, '\n')

        // parse the data, group it, window it, and aggregate the counts
        val windowCounts = text
          .flatMap { w => w.split("\\s") }
          .map { w => WordWithCount(w, 1) }
          .keyBy("word")
          .timeWindow(Time.seconds(5), Time.seconds(1))
          .sum("count")

        // print the results with a single thread, rather than in parallel
        windowCounts.print().setParallelism(1)

        env.execute("Socket Window WordCount")
      }

      // Data type for words with count
      case class WordWithCount(word: String, count: Long)
    }

- Java

    import org.apache.flink.api.common.functions.FlatMapFunction;
    import org.apache.flink.api.common.functions.ReduceFunction;
    import org.apache.flink.api.java.utils.ParameterTool;
    import org.apache.flink.streaming.api.datastream.DataStream;
    import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
    import org.apache.flink.streaming.api.windowing.time.Time;
    import org.apache.flink.util.Collector;

    public class SocketWindowWordCount {

        public static void main(String[] args) throws Exception {

            // the port to connect to
            final int port;
            try {
                final ParameterTool params = ParameterTool.fromArgs(args);
                port = params.getInt("port");
            } catch (Exception e) {
                System.err.println("No port specified. Please run 'SocketWindowWordCount --port <port>'");
                return;
            }

            // get the execution environment
            final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

            // get input data by connecting to the socket
            DataStream<String> text = env.socketTextStream("localhost", port, "\n");

            // parse the data, group it, window it, and aggregate the counts
            DataStream<WordWithCount> windowCounts = text
                .flatMap(new FlatMapFunction<String, WordWithCount>() {
                    @Override
                    public void flatMap(String value, Collector<WordWithCount> out) {
                        for (String word : value.split("\\s")) {
                            out.collect(new WordWithCount(word, 1L));
                        }
                    }
                })
                .keyBy("word")
                .timeWindow(Time.seconds(5), Time.seconds(1))
                .reduce(new ReduceFunction<WordWithCount>() {
                    @Override
                    public WordWithCount reduce(WordWithCount a, WordWithCount b) {
                        return new WordWithCount(a.word, a.count + b.count);
                    }
                });

            // print the results with a single thread, rather than in parallel
            windowCounts.print().setParallelism(1);

            env.execute("Socket Window WordCount");
        }

        // Data type for words with count
        public static class WordWithCount {

            public String word;
            public long count;

            public WordWithCount() {}

            public WordWithCount(String word, long count) {
                this.word = word;
                this.count = count;
            }

            @Override
            public String toString() {
                return word + " : " + count;
            }
        }
    }
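To see what the pipeline computes without starting a cluster, the following Flink-free sketch reproduces the flatMap, keyBy("word"), and reduce steps for the lines that fall into one window. It is an illustration only (WindowWordCountSketch is a hypothetical name): it ignores parallelism, window timing, and the socket source, and it splits on the same "\\s" pattern as the listing, so consecutive whitespace would yield empty-string "words" just as in the job:

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical, Flink-free illustration of what the job computes for ONE window.
final class WindowWordCountSketch {

    // flatMap: split each line on single whitespace characters (same regex as the listing);
    // keyBy("word") + reduce: add up the per-word counts.
    static Map<String, Long> countWindow(List<String> linesInWindow) {
        Map<String, Long> counts = new TreeMap<>();
        for (String line : linesInWindow) {
            for (String word : line.split("\\s")) {
                counts.merge(word, 1L, Long::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        Map<String, Long> counts = countWindow(
                Arrays.asList("lorem ipsum", "ipsum ipsum ipsum", "bye"));
        // Same "word : count" format as WordWithCount.toString()
        counts.forEach((word, count) -> System.out.println(word + " : " + count));
    }
}
```

Fed the three lines typed into nc in the run below, it prints bye : 1, ipsum : 4, and lorem : 1. The TreeMap sorts the words alphabetically, whereas Flink prints them in no particular order.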

4. Running the example

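Now we will run this Flink application. It reads text from a socket and, every 5 seconds, prints the number of occurrences of each distinct word during the previous 5 seconds, i.e. a tumbling window of processing time, as long as words keep arriving. Note that the listing above calls timeWindow(Time.seconds(5), Time.seconds(1)), which defines a sliding window (5 seconds long, evaluated every second); the tumbling behaviour described here corresponds to the single-argument form timeWindow(Time.seconds(5)).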
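The listing's timeWindow call takes a window size and, optionally, a slide: with only a size the windows tumble, and with a smaller slide they overlap, so each element belongs to several windows. The sketch below is a simplified model, not Flink code, of the usual start-time arithmetic for such windows, assuming a window offset of 0 and non-negative millisecond timestamps; WindowAssignmentSketch is a hypothetical name:

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch (not Flink code) of assigning a timestamp to time windows.
final class WindowAssignmentSketch {

    // Returns the start times of all windows [start, start + size) that contain ts.
    // A tumbling window is the special case slide == size.
    static List<Long> windowStarts(long ts, long size, long slide) {
        List<Long> starts = new ArrayList<>();
        long lastStart = ts - (ts % slide); // latest window start at or before ts (ts >= 0)
        for (long start = lastStart; start > ts - size; start -= slide) {
            starts.add(start);
        }
        return starts;
    }

    public static void main(String[] args) {
        long ts = 7_000; // an element arriving at t = 7 s, in milliseconds
        System.out.println("tumbling 5 s:      " + windowStarts(ts, 5_000, 5_000)); // [5000]
        System.out.println("sliding 5 s / 1 s: " + windowStarts(ts, 5_000, 1_000)); // [7000, 6000, 5000, 4000, 3000]
    }
}
```

An element arriving at 7 s falls into exactly one tumbling window but into five overlapping sliding windows, which is why a 5 s / 1 s sliding job reports each word's count up to five times.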

- First, we use netcat to start a local server:

    $ nc -l 9000
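netcat acts as the server here: socketTextStream in the job connects to it as a client and reads newline-delimited text. On a machine without nc, a few lines of Java can play the same role. This is a hypothetical stand-in (LineServer is not part of Flink or netcat) that accepts a single connection and forwards each line read from standard input, terminated with a newline to match the "\n" delimiter used in the listing:

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.io.OutputStreamWriter;
import java.io.Writer;
import java.net.ServerSocket;
import java.net.Socket;
import java.nio.charset.StandardCharsets;

// Hypothetical stand-in for "nc -l 9000" (not part of Flink):
// accepts ONE client (the Flink socket source) and forwards every input line to it.
final class LineServer {

    static void serve(ServerSocket server, BufferedReader input) throws IOException {
        try (Socket client = server.accept();
             Writer out = new OutputStreamWriter(client.getOutputStream(), StandardCharsets.UTF_8)) {
            String line;
            while ((line = input.readLine()) != null) {
                out.write(line);
                out.write('\n'); // the job splits the stream on '\n'
                out.flush();     // send each line immediately, as nc does after Enter
            }
        }
    }

    public static void main(String[] args) throws IOException {
        try (ServerSocket server = new ServerSocket(9000)) {
            serve(server, new BufferedReader(new InputStreamReader(System.in, StandardCharsets.UTF_8)));
        }
    }
}
```

After compiling it with javac, start it with java LineServer instead of nc -l 9000 and type lines as in the run below.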

- Submit the Flink program:

    $ ./bin/flink run examples/streaming/SocketWindowWordCount.jar --port 9000
    Starting execution of program
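The --port 9000 argument is what ParameterTool.fromArgs(args).getInt("port") in the listing reads; if it is absent, getInt fails with an exception and the catch block prints the "No port specified" message and returns. The simplified parser below (ArgsSketch, a hypothetical name, not Flink's ParameterTool implementation) only illustrates that --key value convention:

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical, simplified stand-in for ParameterTool (NOT Flink's implementation):
// turns "--key value" pairs into a map.
final class ArgsSketch {

    static Map<String, String> parse(String[] args) {
        Map<String, String> params = new HashMap<>();
        for (int i = 0; i < args.length; i++) {
            if (!args[i].startsWith("--")) {
                throw new IllegalArgumentException("expected --key, got: " + args[i]);
            }
            String key = args[i].substring(2);
            // A key followed by another "--key" (or by nothing) is stored without a value.
            boolean hasValue = i + 1 < args.length && !args[i + 1].startsWith("--");
            params.put(key, hasValue ? args[++i] : null);
        }
        return params;
    }

    // Fails if the key is missing, has no value, or is not an integer.
    static int getInt(Map<String, String> params, String key) {
        String value = params.get(key);
        if (value == null) {
            throw new IllegalArgumentException("missing value for key '" + key + "'");
        }
        return Integer.parseInt(value);
    }

    public static void main(String[] args) {
        System.out.println(getInt(parse(new String[] {"--port", "9000"}), "port"));
    }
}
```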

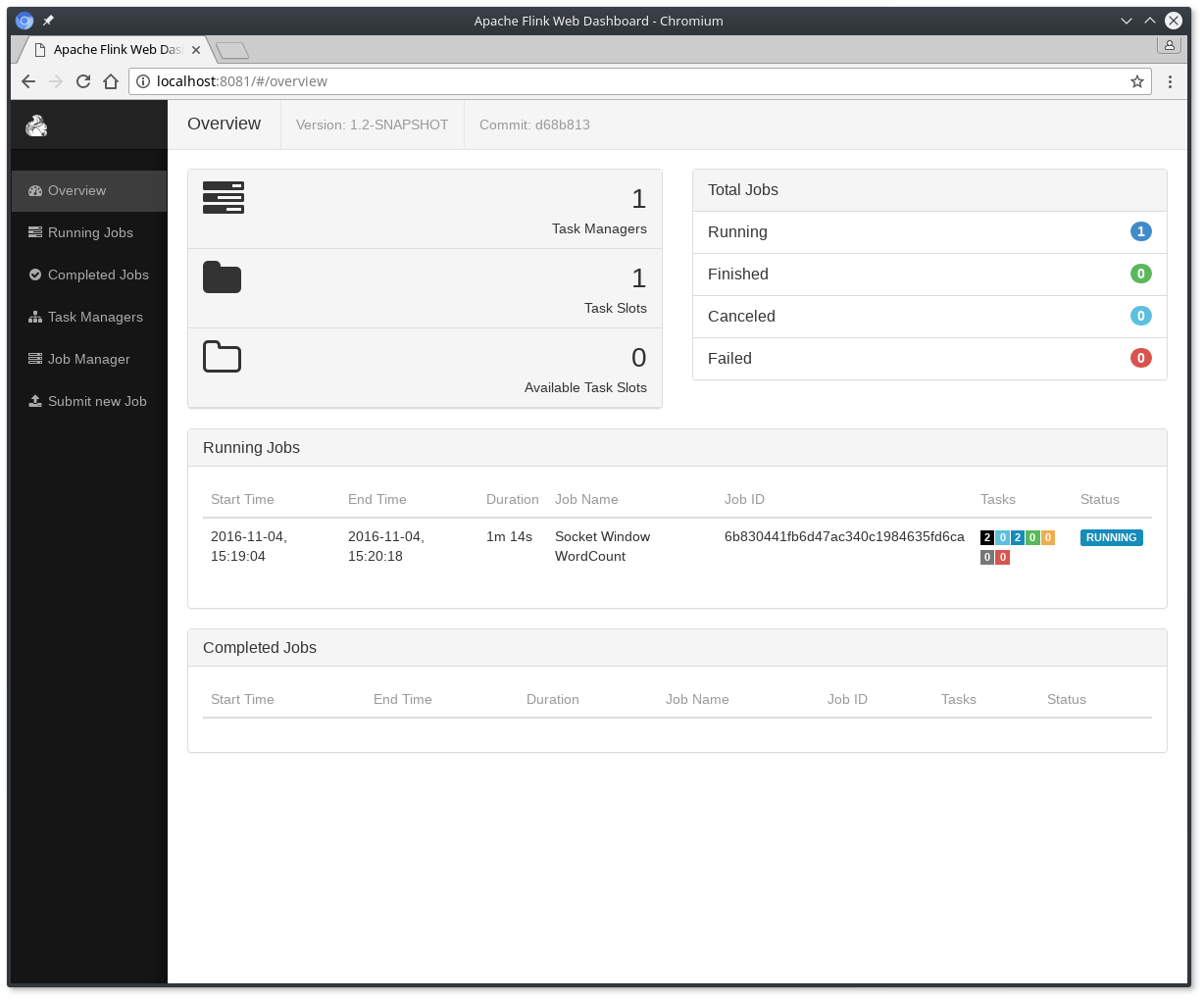

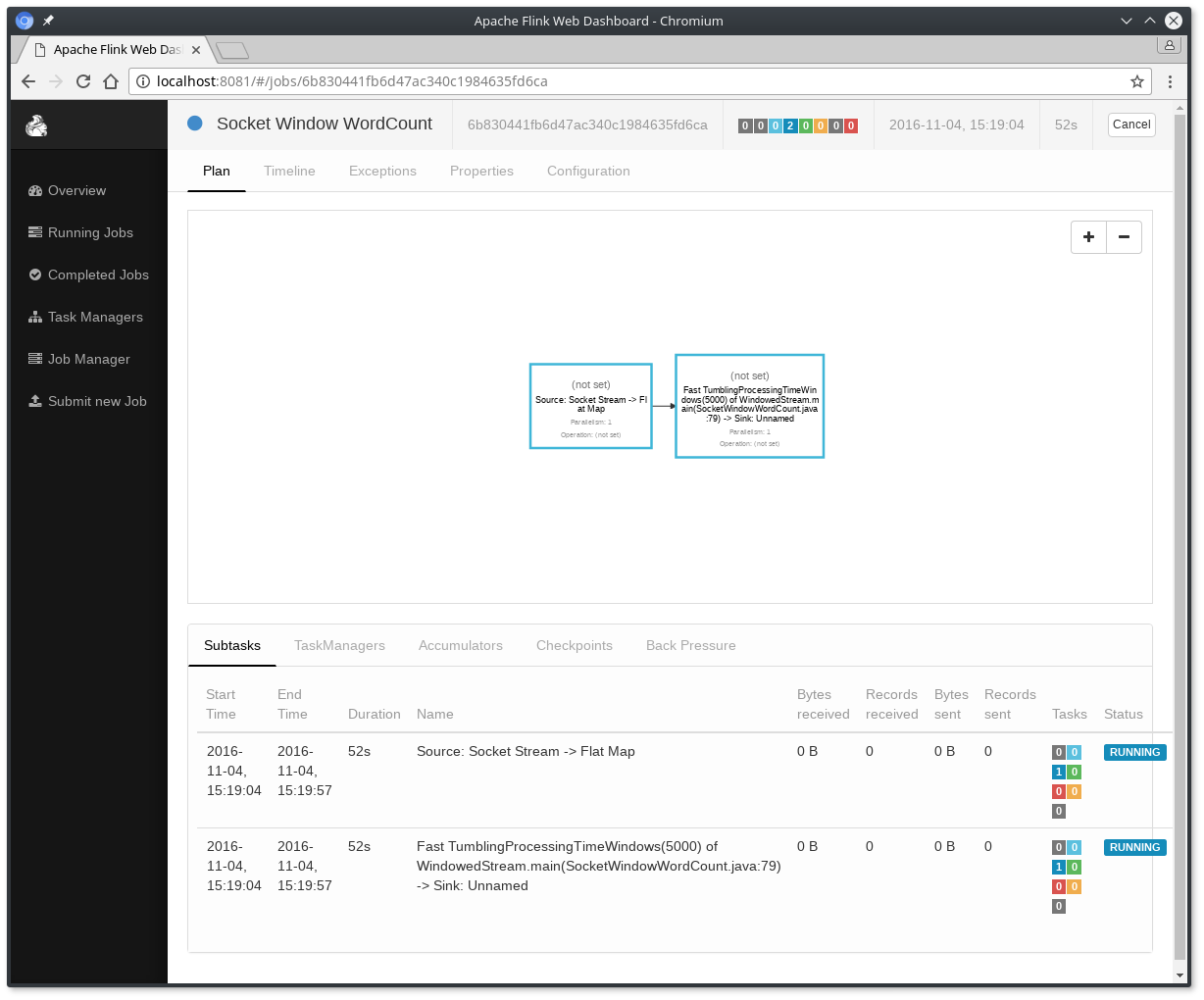

The program connects to the socket and waits for input. You can check the web interface to verify that the job is running as expected:

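- Words are counted in time windows of 5 seconds (processing time, tumbling windows) and printed to stdout. Monitor the TaskManager's output file and write some text in nc (input is sent to Flink line by line after you press Enter):

    $ nc -l 9000
    lorem ipsum
    ipsum ipsum ipsum
    bye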

The .out file prints the counts at the end of each time window, as long as words keep arriving, for example:

    $ tail -f log/flink-*-taskexecutor-*.out
    lorem : 1
    bye : 1
    ipsum : 4

To stop Flink, run:

    $ ./bin/stop-cluster.sh

Source: blog.csdn.net, author: 血煞风雨城2018. Copyright belongs to the original author; please contact the author before reposting.

Original link: blog.csdn.net/qq_31905135/article/details/86649409
