VOLO in Practice: Image Classification with VOLO (Part 1)

Abstract

I. About the Paper

Summary of the VOLO Backbone

Introduction

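- Overview: The paper examines the performance gap between convolutional neural networks (CNNs) and Vision Transformers (ViTs) in visual recognition, proposes a new outlook attention mechanism, and builds the Vision Outlooker (VOLO) backbone on top of it.

- Background: Visual recognition has long been dominated by CNNs, while ViTs use self-attention to model long-range dependencies more flexibly. However, when no extra training data is used, ViTs still trail state-of-the-art CNNs on ImageNet classification.

- Goal: The paper aims to close the gap between ViTs and CNNs and to show that attention-based models can outperform CNNs.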

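Contributions

- Outlook attention: a new, simple, and lightweight attention mechanism, the Outlooker, that efficiently enriches token representations with fine-level information.

- VOLO architecture: a simple, general architecture built on outlook attention, called Vision Outlooker (VOLO), for visual recognition tasks.

- Efficient encoding: the Outlooker infers the weights for aggregating surrounding tokens directly from the anchor token's features through a cheap linear projection, avoiding the expensive dot-product attention computation.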

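Method

- Architecture: VOLO has two stages. The first stage uses a stack of Outlookers to produce fine-grained token representations; the second stage uses a stack of Transformer blocks to aggregate global information.

- Outlooker structure: an Outlooker consists of an outlook attention layer for spatial information encoding and a multi-layer perceptron (MLP) for inter-channel information interaction.

- Variants: VOLO is built on the LV-ViT model and comes in five versions (VOLO-D1 to VOLO-D5); the detailed hyperparameters are given in Table 2 of the paper.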
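To make the cost of outlook attention concrete, the following sketch works through the tensor sizes of a single stage-1 Outlooker layer. It only does arithmetic; the numbers are taken from the volo_d1 configuration and the VOLO defaults in the code listed later in this post (224 input, patch size 8, embedding dimension 192, 6 heads, 3x3 window, stride 2).

```python
# Shape bookkeeping for one Outlooker layer (values from the volo_d1 config in this post).
img_size = 224        # input resolution
patch_size = 8        # patch embedding stride
embed_dim = 192       # stage-1 embedding dimension of volo_d1
num_heads = 6         # stage-1 number of heads of volo_d1
kernel_size = 3       # outlook window K
stride = 2            # outlook stride (out_stride)

# Token grid produced by the patch embedding.
grid = img_size // patch_size                    # 28 tokens per side

# Anchor positions left after average pooling with the outlook stride.
anchors_per_side = -(-grid // stride)            # ceil division
num_anchors = anchors_per_side ** 2

# Each anchor predicts one (K*K) x (K*K) weight matrix per head
# with a single linear layer, instead of computing query-key dot products.
weights_per_anchor = kernel_size ** 4 * num_heads

# Parameters of that linear layer (weight matrix plus bias).
attn_linear_params = embed_dim * weights_per_anchor + weights_per_anchor

print(grid, num_anchors, weights_per_anchor, attn_linear_params)
```

The point of the exercise is that the attention weights come from one linear projection per anchor token, so their number depends on the window size and head count rather than on the total number of tokens.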

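Role of each module

- Outlooker: changes how the attention used for token aggregation is generated, letting the model encode fine-level information efficiently, which matters for strong visual recognition performance.

- Transformer: in VOLO's second stage, Transformer blocks aggregate global information and complement the Outlookers.

- Overall architecture: by combining the strengths of the Outlooker and the Transformer, VOLO achieves strong results on ImageNet classification.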

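Results

- ImageNet classification: VOLO reaches over 87% top-1 accuracy on ImageNet-1K without extra training data, which was a new state of the art when the paper was published.

- Model scaling: increasing model size and image resolution improves VOLO noticeably. From VOLO-D1 to VOLO-D5, accuracy rises by nearly 2 percentage points.

- Semantic segmentation: VOLO also performs well on Cityscapes and ADE20K. On ADE20K, with VOLO-D5 as the backbone, the mIoU reaches 54.3, the best reported result at the time without extra pretraining data.

Summary: The paper introduces the Vision Outlooker (VOLO) backbone, which combines outlook attention with a two-stage architecture to achieve strong ImageNet classification accuracy, and it also performs well on semantic segmentation.

This post uses VOLO for an image classification task. The model is volo_d1, and it reaches an accuracy of over 85% on the plant seedlings classification task.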

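After working through this post, you will be familiar with the following skills and topics:

- Data augmentation strategies: basic augmentation with PyTorch's transforms library, plus more advanced techniques such as CutOut, MixUp, and CutMix, which can noticeably improve generalization.

- Training VOLO: how to build and train VOLO (or another deep learning model) from scratch, covering model definition, data loading, and the training loop.

- Mixed-precision training: using PyTorch's built-in mixed-precision support to speed up training and reduce memory use.

- Gradient clipping: applying gradient clipping to prevent exploding gradients and keep training stable.

- Multi-GPU data-parallel (DP) training: using PyTorch's data-parallel support to speed up training on multiple GPUs.

- Visualizing training: plotting the loss and accuracy curves to monitor how the model is learning.

- Evaluation and reporting: evaluating the model on the validation set and generating a detailed report, including ACC and other metrics.

- Test script: writing a test script that predicts on the test set to assess real-world performance.

- Learning-rate scheduling: applying cosine annealing to adjust the learning rate dynamically.

- Custom statistics helpers: using the AverageMeter class or similar tools to track ACC, loss, and other key metrics during training.

- ACC1 and ACC5: what Top-1 and Top-5 accuracy mean in image classification and how they are computed.

- Exponential moving average (EMA): applying EMA during training to further improve performance on the test set.

If any of these topics is new to you and hard to follow, I recommend my column "经典主干网络精讲与实战" (Classic Backbone Networks: Explanation and Practice), which covers all of them step by step from the ground up.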
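As a small illustration of the ACC1 and ACC5 metrics listed above, here is a minimal pure-Python sketch. The function name `topk_accuracy` and the scores are hypothetical and are not taken from the training script of this series; a sample counts as correct at k if its true label is among the k highest-scoring classes.

```python
def topk_accuracy(scores, targets, ks=(1, 5)):
    """Return {k: accuracy}; a sample is a hit at k if its true label
    is among the k classes with the highest scores."""
    hits = {k: 0 for k in ks}
    for row, target in zip(scores, targets):
        # Class indices sorted by descending score.
        ranked = sorted(range(len(row)), key=lambda c: row[c], reverse=True)
        for k in ks:
            if target in ranked[:k]:
                hits[k] += 1
    return {k: hits[k] / len(targets) for k in ks}


# Hypothetical scores for 3 samples over 6 classes.
scores = [
    [0.10, 0.50, 0.20, 0.10, 0.05, 0.05],  # highest score: class 1
    [0.30, 0.10, 0.25, 0.20, 0.10, 0.05],  # highest score: class 0
    [0.05, 0.10, 0.15, 0.20, 0.20, 0.30],  # highest score: class 5
]
targets = [1, 2, 0]

acc = topk_accuracy(scores, targets)
print(acc)
```

Here the first sample is a Top-1 hit, the second is only a Top-5 hit, and the third misses both, because its true class has the lowest of the six scores.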

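Installing packages

Install timm

Use pip:

pip install timm

timm is used for the Mixup augmentation and for EMA.

Install einops:

pip install einops

Data augmentation: Cutout and Mixup

To improve generalization and performance, I add two augmentation techniques to the preprocessing stage: Cutout and Mixup. Cutout randomly masks part of an image, forcing the model to learn more robust features; Mixup blends two images and their labels to create new training samples, increasing the diversity of the data. The Cutout implementation used here comes from torchtoolbox (Mixup comes from timm). Install it with:

pip install torchtoolbox

Cutout is applied inside the transforms pipeline: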

from torchvision import transforms
from torchtoolbox.transform import Cutout

# Data preprocessing
transform = transforms.Compose([
    transforms.Resize((224, 224)),
    Cutout(),
    transforms.ToTensor(),
    transforms.Normalize([0.5, 0.5, 0.5], [0.5, 0.5, 0.5])
])
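The idea behind Cutout can be shown without any image library. The sketch below is an illustration of the general technique only, not torchtoolbox's implementation: the function `cutout`, its `size` parameter, and the nested-list image are assumptions made for the example.

```python
import random


def cutout(image, size, rng=random):
    """Return a copy of `image` (a list of pixel rows) with one square of
    side `size` set to 0. The square is centred on a random pixel and
    clipped at the image borders, so fewer pixels may be erased near edges."""
    h, w = len(image), len(image[0])
    cy, cx = rng.randrange(h), rng.randrange(w)
    y1, y2 = max(0, cy - size // 2), min(h, cy + size // 2)
    x1, x2 = max(0, cx - size // 2), min(w, cx + size // 2)
    out = [row[:] for row in image]  # copy so the input stays untouched
    for y in range(y1, y2):
        for x in range(x1, x2):
            out[y][x] = 0
    return out


random.seed(0)
image = [[1] * 8 for _ in range(8)]   # hypothetical 8x8 single-channel image
masked = cutout(image, size=4)
erased = sum(row.count(0) for row in masked)
print(erased)
```

Because the masked region moves from sample to sample, the model cannot rely on any single image region to make its prediction.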

Mixup is imported from timm (from timm.data.mixup import Mixup), together with the SoftTargetCrossEntropy loss. Define both:

from timm.data.mixup import Mixup
from timm.loss import SoftTargetCrossEntropy

mixup_fn = Mixup(
    mixup_alpha=0.8, cutmix_alpha=1.0, cutmix_minmax=None,
    prob=0.1, switch_prob=0.5, mode='batch',
    label_smoothing=0.1, num_classes=12)
criterion_train = SoftTargetCrossEntropy()

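Mixup is a data augmentation technique commonly used in image classification. It creates new samples and labels by linearly combining two images and their corresponding labels.

Parameters:

mixup_alpha (float): mixup alpha value; mixup is active if > 0.

cutmix_alpha (float): cutmix alpha value; cutmix is active if > 0.

cutmix_minmax (List[float]): cutmix min/max image ratio; if not None, cutmix is active and uses this range instead of alpha.

If cutmix_minmax is set, cutmix_alpha is forced to 1.0.

prob (float): probability of applying mixup or cutmix per batch or per element.

switch_prob (float): probability of switching to cutmix instead of mixup when both are active.

mode (str): how to apply the mixup/cutmix parameters: per 'batch', per 'pair' (pair of elements), or per 'elem' (element).

correct_lam (bool): apply lambda correction when the cutmix bbox is clipped by the image borders.

label_smoothing (float): label smoothing applied to the mixed target tensor.

num_classes (int): number of target classes.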
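To show why Mixup needs a soft-target loss, here is a minimal pure-Python sketch of mixing one pair of samples. It is an illustration of the technique rather than timm's code: the helper names are hypothetical, the mixing weight `lam` is fixed instead of being drawn from a Beta(mixup_alpha, mixup_alpha) distribution, and the "images" are two-element lists.

```python
import math


def smooth_one_hot(label, num_classes, smoothing):
    """One-hot vector with label smoothing: every class gets
    smoothing / num_classes, the true class additionally gets 1 - smoothing."""
    off = smoothing / num_classes
    return [off + (1.0 - smoothing if c == label else 0.0)
            for c in range(num_classes)]


def mixup_pair(x_a, y_a, x_b, y_b, lam, num_classes, smoothing=0.1):
    """Blend two samples and their soft labels with the same weight lam."""
    x = [lam * a + (1.0 - lam) * b for a, b in zip(x_a, x_b)]
    t_a = smooth_one_hot(y_a, num_classes, smoothing)
    t_b = smooth_one_hot(y_b, num_classes, smoothing)
    target = [lam * a + (1.0 - lam) * b for a, b in zip(t_a, t_b)]
    return x, target


def soft_target_cross_entropy(logits, target):
    """Cross entropy against a probability vector: -sum(t * log_softmax(z))."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return -sum(t * (v - log_z) for t, v in zip(target, logits))


# Two hypothetical samples of classes 3 and 7, mixed 70/30, 12 classes.
x, target = mixup_pair([1.0, 0.0], 3, [0.0, 1.0], 7, lam=0.7, num_classes=12)
loss = soft_target_cross_entropy([0.0] * 12, target)
print(round(target[3], 4), round(target[7], 4), round(loss, 4))
```

The mixed target is no longer a single class index, which is why the training loss is switched to SoftTargetCrossEntropy when Mixup is enabled.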

EMA

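EMA (Exponential Moving Average) is a technique for smoothing model parameters in deep learning: it maintains an exponential moving average of the parameters over the course of training. This helps stability and generalization, especially late in training. A summary follows.

EMA overview

EMA is a weighted moving average in which each new average is a weighted sum of the previous average and the current value. In deep learning it is applied to model parameters to damp their rapid fluctuations during training, which gives smoother and more stable model behavior.

How it works

During training, a separate copy of EMA parameters is kept alongside the current model parameters. At every training step (or every few steps), the EMA parameters are updated from the current model parameters with exponential decay. The update rule is typically:

$$\theta_{\mathrm{EMA}} \leftarrow \mathrm{decay} \cdot \theta_{\mathrm{EMA}} + (1 - \mathrm{decay}) \cdot \theta_{\mathrm{model}}$$

Here decay is a hyperparameter between 0 and 1 that controls how the weight is split between the old EMA value and the new model parameter value. A larger decay makes each update rely more on the old value, so the smoothing is stronger.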
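The effect of decay is easy to see on a single scalar. The sketch below is a toy illustration with a hypothetical helper `ema_update`: the "model weight" jumps to 1.0 and stays there, and the EMA value, which starts at 0.0, follows it gradually.

```python
def ema_update(ema_value, model_value, decay):
    """One EMA step: keep `decay` of the old average, take the rest from the new value."""
    return decay * ema_value + (1.0 - decay) * model_value


decay = 0.9
ema = 0.0                      # EMA value before the weight changed
for step in range(50):
    ema = ema_update(ema, 1.0, decay)   # the model weight is constant at 1.0

# After n steps the gap to the target has shrunk by decay**n.
closed_form = 1.0 - decay ** 50
print(ema, closed_form)
```

With decay=0.9999, the default in the ModelEma class used in this post, the same gap shrinks by a factor of 0.9999 per step, so the EMA weights only catch up with the model after many thousands of updates.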

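Benefits

- Stability: by smoothing parameter updates, EMA reduces fluctuations during training and makes the model more stable.

- Generalization: because the EMA parameters are a smoothed version of the parameter history, they tend to capture the overall trend of training, so evaluating with the EMA parameters often generalizes better.

- Faster convergence: EMA does not directly speed up training, but by stabilizing the parameters it may indirectly help the model converge to a better solution sooner.

When to use it

EMA is widely used in deep learning, especially in tasks that need high stability and good generalization, such as image classification and object detection. It is particularly useful when training large models, where it can help reduce the risk of overfitting and improve performance on unseen data.

The implementation is as follows: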

import logging
from collections import OrderedDict
from copy import deepcopy

import torch
import torch.nn as nn

_logger = logging.getLogger(__name__)


class ModelEma:
    def __init__(self, model, decay=0.9999, device='', resume=''):
        # make a copy of the model for accumulating moving average of weights
        self.ema = deepcopy(model)
        self.ema.eval()
        self.decay = decay
        self.device = device  # perform ema on different device from model if set
        if device:
            self.ema.to(device=device)
        self.ema_has_module = hasattr(self.ema, 'module')
        if resume:
            self._load_checkpoint(resume)
        for p in self.ema.parameters():
            p.requires_grad_(False)

    def _load_checkpoint(self, checkpoint_path):
        checkpoint = torch.load(checkpoint_path, map_location='cpu')
        assert isinstance(checkpoint, dict)
        if 'state_dict_ema' in checkpoint:
            new_state_dict = OrderedDict()
            for k, v in checkpoint['state_dict_ema'].items():
                # ema model may have been wrapped by DataParallel, and need module prefix
                if self.ema_has_module:
                    name = 'module.' + k if not k.startswith('module') else k
                else:
                    name = k
                new_state_dict[name] = v
            self.ema.load_state_dict(new_state_dict)
            _logger.info("Loaded state_dict_ema")
        else:
            _logger.warning("Failed to find state_dict_ema, starting from loaded model weights")

    def update(self, model):
        # correct a mismatch in state dict keys
        needs_module = hasattr(model, 'module') and not self.ema_has_module
        with torch.no_grad():
            msd = model.state_dict()
            for k, ema_v in self.ema.state_dict().items():
                if needs_module:
                    k = 'module.' + k
                model_v = msd[k].detach()
                if self.device:
                    model_v = model_v.to(device=self.device)
                ema_v.copy_(ema_v * self.decay + (1. - self.decay) * model_v)

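Wire it into training:

# Initialization
model_ema = None
if use_ema:
    model_ema = ModelEma(
        model_ft,
        decay=model_ema_decay,
        device='cpu',
        resume=resume)

# During training, update the shadow weights right after each parameter update
def train():
    # (forward pass and loss.backward() omitted)
    optimizer.step()
    if model_ema is not None:
        model_ema.update(model_ft)

# Pass the EMA model to the validation function
val(model_ema.ema, DEVICE, test_loader)

Note that for models trained without pretrained weights, EMA often fails to improve accuracy.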

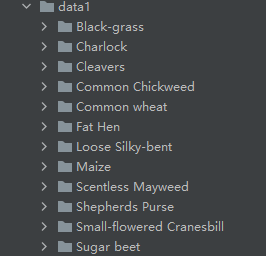

Project structure

VOLO_Demo

├─data1

│ ├─Black-grass

│ ├─Charlock

│ ├─Cleavers

│ ├─Common Chickweed

│ ├─Common wheat

│ ├─Fat Hen

│ ├─Loose Silky-bent

│ ├─Maize

│ ├─Scentless Mayweed

│ ├─Shepherds Purse

│ ├─Small-flowered Cranesbill

│ └─Sugar beet

├─models

│ └─volo.py

├─mean_std.py

├─makedata.py

├─train.py

└─test.py

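mean_std.py: computes the mean and std values.

makedata.py: generates the dataset.

train.py: trains the VOLO model defined under the models folder.

test.py: runs prediction on the test set.

models: taken from the official code.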

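Computing mean and std

In deep learning, and in image processing in particular, computing the mean and standard deviation (std) of the data and normalizing with them is an important step for speeding up convergence and improving model performance. This section explains both statistics and how they help the model learn.

Mean

The mean is the sum of all values divided by their count. For images it is usually computed separately for each color channel (for example, the three channels of an RGB image): over a dataset of many images, we average all R-channel pixel values, and do the same for the G and B channels.

Standard deviation (std)

The standard deviation measures how spread out the data is, that is, how far values deviate from the mean. It is also computed per color channel. A channel with a large std has widely varying pixel values, while a channel with a small std is comparatively stable.

Normalization

Normalization rescales the data proportionally so that it falls into a small, specific range, commonly [0, 1] or [-1, 1]. For images, we typically normalize each channel with the computed mean and std:

$$x_{\mathrm{norm}} = \frac{x - \mathrm{mean}}{\mathrm{std}}$$

After this step each channel has approximately zero mean and unit standard deviation.

Note that in some cases, to simplify computation and keep the data non-negative, the data is instead scaled to [0, 1] with min-max normalization rather than mean/std normalization. Here we focus on mean/std normalization, because it preserves the shape of the data distribution.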
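As a quick worked example of mean/std normalization, the sketch below applies the formula to a single RGB pixel. The statistics are the ones that mean_std.py prints for this dataset in this post; the helper `normalize_pixel` and the mid-gray pixel are made up for the illustration.

```python
# Per-channel statistics printed by mean_std.py in this post.
mean = [0.3281186, 0.28937867, 0.20702125]
std = [0.09407319, 0.09732835, 0.106712654]


def normalize_pixel(rgb, mean, std):
    """Apply (x - mean) / std to each channel of one pixel in [0, 1]."""
    return [(v - m) / s for v, m, s in zip(rgb, mean, std)]


pixel = [0.5, 0.5, 0.5]                      # hypothetical mid-gray pixel
normalized = normalize_pixel(pixel, mean, std)
print([round(v, 3) for v in normalized])

# A pixel equal to the dataset mean maps to exactly zero in every channel.
print(normalize_pixel(mean, mean, std))
```

Since this dataset is fairly dark (all channel means are below 0.33), a mid-gray pixel ends up well above zero after normalization.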

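Why normalize?

- Faster convergence: normalized data has a similar scale across features, which helps gradient descent find a good solution faster. Gradient updates for different features are of the same order of magnitude, which avoids slow training or vanishing/exploding gradients caused by features that are too large or too small.

- Better accuracy: normalization can improve generalization, because the model more easily learns the relative relationships between features instead of being dominated by their absolute magnitudes.

- Stability: normalized data reduces fluctuations during training and helps the model converge more steadily.

How to compute and use mean and std

- Compute global mean and std: compute them over the whole dataset, usually before training starts, and use the same values to normalize the training, validation, and test sets.

- Use library functions: many deep learning frameworks (such as PyTorch and TensorFlow) provide convenient functions for computing the mean and std and for applying them to normalize a dataset.

- Update dynamically: when the dataset is very large or keeps changing, the mean and std may need to be computed on the fly, typically by updating them during training with a moving average (such as EMA).

Computing the mean and std of the data and normalizing with them is a basic but important preprocessing step that speeds up convergence and improves performance and stability. Create mean_std.py with the following code: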

from torchvision.datasets import ImageFolder
import torch
from torchvision import transforms


def get_mean_and_std(train_data):
    # batch_size=1 so that images of different sizes can be loaded one at a time
    train_loader = torch.utils.data.DataLoader(
        train_data, batch_size=1, shuffle=False, num_workers=0,
        pin_memory=True)
    mean = torch.zeros(3)
    std = torch.zeros(3)
    for X, _ in train_loader:
        for d in range(3):
            # accumulate the per-image mean and std of channel d
            mean[d] += X[:, d, :, :].mean()
            std[d] += X[:, d, :, :].std()
    # average the per-image statistics over the dataset
    mean.div_(len(train_data))
    std.div_(len(train_data))
    return list(mean.numpy()), list(std.numpy())


if __name__ == '__main__':
    train_dataset = ImageFolder(root=r'data1', transform=transforms.ToTensor())
    print(get_mean_and_std(train_dataset))
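One detail worth knowing: the script above averages the per-image std values, which is a common shortcut but is not the same as the std of all pixels pooled together. The sketch below is an alternative under stated assumptions, not what this post uses: a hypothetical helper `channel_mean_std` accumulates the sum and the sum of squares for one channel and computes the pooled (population) statistics.

```python
import math


def channel_mean_std(images):
    """Pooled mean and population std of one channel.
    `images` is an iterable of flat pixel lists with values in [0, 1]."""
    n = 0
    total = 0.0
    total_sq = 0.0
    for pixels in images:
        n += len(pixels)
        total += sum(pixels)
        total_sq += sum(p * p for p in pixels)
    mean = total / n
    var = total_sq / n - mean * mean      # E[x^2] - E[x]^2
    return mean, math.sqrt(max(var, 0.0))


# Two hypothetical 4-pixel images: one all black, one all white.
images = [[0.0, 0.0, 0.0, 0.0], [1.0, 1.0, 1.0, 1.0]]
mean, std = channel_mean_std(images)
print(mean, std)
```

In this extreme example every image has a per-image std of 0, so averaging per-image values would report 0, while the pooled std is 0.5. On real photographs the difference is usually smaller, and either set of values can be passed to transforms.Normalize.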

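Dataset layout: the data1 directory, with one subfolder per class (see the project structure above).

Output:

([0.3281186, 0.28937867, 0.20702125], [0.09407319, 0.09732835, 0.106712654])

Record this result; it is needed later!

Generating the dataset

The image classification dataset we have organized looks like this: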

data1

├─Black-grass

├─Charlock

├─Cleavers

├─Common Chickweed

├─Common wheat

├─Fat Hen

├─Loose Silky-bent

├─Maize

├─Scentless Mayweed

├─Shepherds Purse

├─Small-flowered Cranesbill

└─Sugar beet

The default loaders in PyTorch and Keras expect the ImageNet-style directory layout, which looks like this:

├─data
│  ├─val
│  │   ├─Black-grass
│  │   ├─Charlock
│  │   ├─Cleavers
│  │   ├─Common Chickweed
│  │   ├─Common wheat
│  │   ├─Fat Hen
│  │   ├─Loose Silky-bent
│  │   ├─Maize
│  │   ├─Scentless Mayweed
│  │   ├─Shepherds Purse
│  │   ├─Small-flowered Cranesbill
│  │   └─Sugar beet
│  └─train
│      ├─Black-grass
│      ├─Charlock
│      ├─Cleavers
│      ├─Common Chickweed
│      ├─Common wheat
│      ├─Fat Hen
│      ├─Loose Silky-bent
│      ├─Maize
│      ├─Scentless Mayweed
│      ├─Shepherds Purse
│      ├─Small-flowered Cranesbill
│      └─Sugar beet
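With this layout, torchvision's ImageFolder derives the label of each image from its folder: class folder names are sorted and numbered from 0. The sketch below reproduces that mapping with the standard library only, on a temporary directory with a few of the class names from this post; `find_classes` here is a local helper written for the illustration.

```python
import os
import tempfile


def find_classes(root):
    """Map each class folder under `root` to an index, in sorted name order."""
    classes = sorted(e.name for e in os.scandir(root) if e.is_dir())
    return {name: idx for idx, name in enumerate(classes)}


with tempfile.TemporaryDirectory() as tmp:
    train_root = os.path.join(tmp, "data", "train")
    # Create a few empty class folders in arbitrary order.
    for name in ["Maize", "Charlock", "Black-grass", "Sugar beet"]:
        os.makedirs(os.path.join(train_root, name))
    class_to_idx = find_classes(train_root)

print(class_to_idx)
```

Because the mapping depends only on the folder names, train and val must contain the same set of class folders for their labels to agree.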

Create the format conversion script makedata.py with the following code:

import glob
import os
import shutil

from sklearn.model_selection import train_test_split

# Collect every PNG under data1/<class_name>/
image_list = glob.glob('data1/*/*.png')
print(image_list)

# Recreate the output directory from scratch
file_dir = 'data'
if os.path.exists(file_dir):
    print('true')
    shutil.rmtree(file_dir)  # delete, then recreate
os.makedirs(file_dir)

# 70% training / 30% validation, with a fixed seed for reproducibility
trainval_files, val_files = train_test_split(image_list, test_size=0.3, random_state=42)

train_root = os.path.join(file_dir, 'train')
val_root = os.path.join(file_dir, 'val')


def copy_files(files, root):
    """Copy each image into root/<class_name>/, creating folders as needed."""
    for file in files:
        parts = file.replace("\\", "/").split('/')
        class_dir = os.path.join(root, parts[-2])
        os.makedirs(class_dir, exist_ok=True)
        shutil.copy(file, os.path.join(class_dir, parts[-1]))


copy_files(trainval_files, train_root)
copy_files(val_files, val_root)
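The script splits the full file list at random, so small classes can end up slightly over- or under-represented in the validation set. One option, shown here as a standard-library sketch and not as part of the original script, is to split each class separately (scikit-learn's train_test_split offers a stratify argument for the same purpose). The helper name `stratified_split` and the file paths are hypothetical.

```python
import random
from collections import defaultdict


def stratified_split(paths, val_ratio=0.3, seed=42):
    """Split paths of the form .../<class_name>/<file> so that every class
    contributes about val_ratio of its files to the validation set."""
    by_class = defaultdict(list)
    for p in paths:
        by_class[p.replace("\\", "/").split("/")[-2]].append(p)

    rng = random.Random(seed)
    train, val = [], []
    for cls in sorted(by_class):
        files = sorted(by_class[cls])
        rng.shuffle(files)
        # At least one validation file per class; a class with a single
        # file therefore ends up with no training sample.
        n_val = max(1, round(len(files) * val_ratio))
        val.extend(files[:n_val])
        train.extend(files[n_val:])
    return train, val


# Hypothetical file list: two classes with ten images each.
paths = [f"data1/{c}/{i}.png" for c in ("Maize", "Charlock") for i in range(10)]
train_files, val_files = stratified_split(paths)
print(len(train_files), len(val_files))
```

The returned lists can be copied into data/train and data/val in the same way as in makedata.py.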

With the steps above complete, training and testing can begin.

VOLO code (models/volo.py)

# Copyright 2021 Sea Limited.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

"""

Vision OutLOoker (VOLO) implementation

"""

import torch

import torch.nn as nn

import torch.nn.functional as F

from timm.data import IMAGENET_DEFAULT_MEAN, IMAGENET_DEFAULT_STD

from timm.models.layers import DropPath, to_2tuple, trunc_normal_

from timm.models.registry import register_model

import math

import numpy as np

def _cfg(url='', **kwargs):
    return {
        'url': url,
        'num_classes': 1000, 'input_size': (3, 224, 224), 'pool_size': None,
        'crop_pct': .96, 'interpolation': 'bicubic',
        'mean': IMAGENET_DEFAULT_MEAN, 'std': IMAGENET_DEFAULT_STD,
        'first_conv': 'patch_embed.proj', 'classifier': 'head',
        **kwargs
    }


default_cfgs = {
    'volo': _cfg(crop_pct=0.96),
    'volo_large': _cfg(crop_pct=1.15),
}

class OutlookAttention(nn.Module):
    """
    Implementation of outlook attention
    --dim: hidden dim
    --num_heads: number of heads
    --kernel_size: kernel size in each window for outlook attention
    return: token features after outlook attention
    """

    def __init__(self, dim, num_heads, kernel_size=3, padding=1, stride=1,
                 qkv_bias=False, qk_scale=None, attn_drop=0., proj_drop=0.):
        super().__init__()
        head_dim = dim // num_heads
        self.num_heads = num_heads
        self.kernel_size = kernel_size
        self.padding = padding
        self.stride = stride
        self.scale = qk_scale or head_dim**-0.5

        self.v = nn.Linear(dim, dim, bias=qkv_bias)
        self.attn = nn.Linear(dim, kernel_size**4 * num_heads)

        self.attn_drop = nn.Dropout(attn_drop)
        self.proj = nn.Linear(dim, dim)
        self.proj_drop = nn.Dropout(proj_drop)

        self.unfold = nn.Unfold(kernel_size=kernel_size, padding=padding, stride=stride)
        self.pool = nn.AvgPool2d(kernel_size=stride, stride=stride, ceil_mode=True)

    def forward(self, x):
        B, H, W, C = x.shape

        v = self.v(x).permute(0, 3, 1, 2)  # B, C, H, W

        h, w = math.ceil(H / self.stride), math.ceil(W / self.stride)
        v = self.unfold(v).reshape(B, self.num_heads, C // self.num_heads,
                                   self.kernel_size * self.kernel_size,
                                   h * w).permute(0, 1, 4, 3, 2)  # B,H,N,kxk,C/H

        attn = self.pool(x.permute(0, 3, 1, 2)).permute(0, 2, 3, 1)
        attn = self.attn(attn).reshape(
            B, h * w, self.num_heads, self.kernel_size * self.kernel_size,
            self.kernel_size * self.kernel_size).permute(0, 2, 1, 3, 4)  # B,H,N,kxk,kxk
        attn = attn * self.scale
        attn = attn.softmax(dim=-1)
        attn = self.attn_drop(attn)

        x = (attn @ v).permute(0, 1, 4, 3, 2).reshape(
            B, C * self.kernel_size * self.kernel_size, h * w)
        x = F.fold(x, output_size=(H, W), kernel_size=self.kernel_size,
                   padding=self.padding, stride=self.stride)

        x = self.proj(x.permute(0, 2, 3, 1))
        x = self.proj_drop(x)

        return x

class Outlooker(nn.Module):
    """
    Implementation of outlooker layer: which includes outlook attention + MLP
    Outlooker is the first stage in our VOLO
    --dim: hidden dim
    --num_heads: number of heads
    --mlp_ratio: mlp ratio
    --kernel_size: kernel size in each window for outlook attention
    return: outlooker layer
    """

    def __init__(self, dim, kernel_size, padding, stride=1,
                 num_heads=1, mlp_ratio=3., attn_drop=0.,
                 drop_path=0., act_layer=nn.GELU,
                 norm_layer=nn.LayerNorm, qkv_bias=False,
                 qk_scale=None):
        super().__init__()
        self.norm1 = norm_layer(dim)
        self.attn = OutlookAttention(dim, num_heads, kernel_size=kernel_size,
                                     padding=padding, stride=stride,
                                     qkv_bias=qkv_bias, qk_scale=qk_scale,
                                     attn_drop=attn_drop)

        self.drop_path = DropPath(
            drop_path) if drop_path > 0. else nn.Identity()

        self.norm2 = norm_layer(dim)
        mlp_hidden_dim = int(dim * mlp_ratio)
        self.mlp = Mlp(in_features=dim,
                       hidden_features=mlp_hidden_dim,
                       act_layer=act_layer)

    def forward(self, x):
        x = x + self.drop_path(self.attn(self.norm1(x)))
        x = x + self.drop_path(self.mlp(self.norm2(x)))
        return x

class Mlp(nn.Module):
    "Implementation of MLP"

    def __init__(self, in_features, hidden_features=None,
                 out_features=None, act_layer=nn.GELU,
                 drop=0.):
        super().__init__()
        out_features = out_features or in_features
        hidden_features = hidden_features or in_features
        self.fc1 = nn.Linear(in_features, hidden_features)
        self.act = act_layer()
        self.fc2 = nn.Linear(hidden_features, out_features)
        self.drop = nn.Dropout(drop)

    def forward(self, x):
        x = self.fc1(x)
        x = self.act(x)
        x = self.drop(x)
        x = self.fc2(x)
        x = self.drop(x)
        return x

class Attention(nn.Module):
    "Implementation of self-attention"

    def __init__(self, dim, num_heads=8, qkv_bias=False,
                 qk_scale=None, attn_drop=0., proj_drop=0.):
        super().__init__()
        self.num_heads = num_heads
        head_dim = dim // num_heads
        self.scale = qk_scale or head_dim**-0.5

        self.qkv = nn.Linear(dim, dim * 3, bias=qkv_bias)
        self.attn_drop = nn.Dropout(attn_drop)
        self.proj = nn.Linear(dim, dim)
        self.proj_drop = nn.Dropout(proj_drop)

    def forward(self, x):
        B, H, W, C = x.shape

        qkv = self.qkv(x).reshape(B, H * W, 3, self.num_heads,
                                  C // self.num_heads).permute(2, 0, 3, 1, 4)
        # make torchscript happy (cannot use tensor as tuple)
        q, k, v = qkv[0], qkv[1], qkv[2]

        attn = (q @ k.transpose(-2, -1)) * self.scale
        attn = attn.softmax(dim=-1)
        attn = self.attn_drop(attn)

        x = (attn @ v).transpose(1, 2).reshape(B, H, W, C)
        x = self.proj(x)
        x = self.proj_drop(x)

        return x

class Transformer(nn.Module):
    """
    Implementation of Transformer,
    Transformer is the second stage in our VOLO
    """

    def __init__(self, dim, num_heads, mlp_ratio=4., qkv_bias=False,
                 qk_scale=None, attn_drop=0., drop_path=0.,
                 act_layer=nn.GELU, norm_layer=nn.LayerNorm):
        super().__init__()
        self.norm1 = norm_layer(dim)
        self.attn = Attention(dim, num_heads=num_heads, qkv_bias=qkv_bias,
                              qk_scale=qk_scale, attn_drop=attn_drop)

        # NOTE: drop path for stochastic depth, we shall see if this is better than dropout here
        self.drop_path = DropPath(
            drop_path) if drop_path > 0. else nn.Identity()

        self.norm2 = norm_layer(dim)
        mlp_hidden_dim = int(dim * mlp_ratio)
        self.mlp = Mlp(in_features=dim,
                       hidden_features=mlp_hidden_dim,
                       act_layer=act_layer)

    def forward(self, x):
        x = x + self.drop_path(self.attn(self.norm1(x)))
        x = x + self.drop_path(self.mlp(self.norm2(x)))
        return x

class ClassAttention(nn.Module):
    """
    Class attention layer from CaiT, see details in CaiT
    Class attention is the post stage in our VOLO, which is optional.
    """

    def __init__(self, dim, num_heads=8, head_dim=None, qkv_bias=False,
                 qk_scale=None, attn_drop=0., proj_drop=0.):
        super().__init__()
        self.num_heads = num_heads
        if head_dim is not None:
            self.head_dim = head_dim
        else:
            head_dim = dim // num_heads
            self.head_dim = head_dim
        self.scale = qk_scale or head_dim**-0.5

        self.kv = nn.Linear(dim,
                            self.head_dim * self.num_heads * 2,
                            bias=qkv_bias)
        self.q = nn.Linear(dim, self.head_dim * self.num_heads, bias=qkv_bias)
        self.attn_drop = nn.Dropout(attn_drop)
        self.proj = nn.Linear(self.head_dim * self.num_heads, dim)
        self.proj_drop = nn.Dropout(proj_drop)

    def forward(self, x):
        B, N, C = x.shape

        kv = self.kv(x).reshape(B, N, 2, self.num_heads,
                                self.head_dim).permute(2, 0, 3, 1, 4)
        # make torchscript happy (cannot use tensor as tuple)
        k, v = kv[0], kv[1]
        q = self.q(x[:, :1, :]).reshape(B, self.num_heads, 1, self.head_dim)
        attn = ((q * self.scale) @ k.transpose(-2, -1))
        attn = attn.softmax(dim=-1)
        attn = self.attn_drop(attn)

        cls_embed = (attn @ v).transpose(1, 2).reshape(
            B, 1, self.head_dim * self.num_heads)
        cls_embed = self.proj(cls_embed)
        cls_embed = self.proj_drop(cls_embed)
        return cls_embed

class ClassBlock(nn.Module):
    """
    Class attention block from CaiT, see details in CaiT
    We use two-layers class attention in our VOLO, which is optional.
    """

    def __init__(self, dim, num_heads, head_dim=None, mlp_ratio=4.,
                 qkv_bias=False, qk_scale=None, drop=0., attn_drop=0.,
                 drop_path=0., act_layer=nn.GELU, norm_layer=nn.LayerNorm):
        super().__init__()
        self.norm1 = norm_layer(dim)
        self.attn = ClassAttention(
            dim, num_heads=num_heads, head_dim=head_dim, qkv_bias=qkv_bias,
            qk_scale=qk_scale, attn_drop=attn_drop, proj_drop=drop)
        # NOTE: drop path for stochastic depth
        self.drop_path = DropPath(
            drop_path) if drop_path > 0. else nn.Identity()
        self.norm2 = norm_layer(dim)
        mlp_hidden_dim = int(dim * mlp_ratio)
        self.mlp = Mlp(in_features=dim,
                       hidden_features=mlp_hidden_dim,
                       act_layer=act_layer,
                       drop=drop)

    def forward(self, x):
        cls_embed = x[:, :1]
        cls_embed = cls_embed + self.drop_path(self.attn(self.norm1(x)))
        cls_embed = cls_embed + self.drop_path(self.mlp(self.norm2(cls_embed)))
        return torch.cat([cls_embed, x[:, 1:]], dim=1)

def get_block(block_type, **kargs):
    """
    get block by name, specifically for class attention block in here
    """
    if block_type == 'ca':
        return ClassBlock(**kargs)

def rand_bbox(size, lam, scale=1):
    """
    get bounding box as token labeling (https://github.com/zihangJiang/TokenLabeling)
    return: bounding box
    """
    W = size[1] // scale
    H = size[2] // scale
    cut_rat = np.sqrt(1. - lam)
    # np.int was removed in NumPy 1.24; the built-in int is equivalent here
    cut_w = int(W * cut_rat)
    cut_h = int(H * cut_rat)

    # uniform
    cx = np.random.randint(W)
    cy = np.random.randint(H)

    bbx1 = np.clip(cx - cut_w // 2, 0, W)
    bby1 = np.clip(cy - cut_h // 2, 0, H)
    bbx2 = np.clip(cx + cut_w // 2, 0, W)
    bby2 = np.clip(cy + cut_h // 2, 0, H)

    return bbx1, bby1, bbx2, bby2

class PatchEmbed(nn.Module):
    """
    Image to Patch Embedding.
    Different with ViT use 1 conv layer, we use 4 conv layers to do patch embedding
    """

    def __init__(self, img_size=224, stem_conv=False, stem_stride=1,
                 patch_size=8, in_chans=3, hidden_dim=64, embed_dim=384):
        super().__init__()
        assert patch_size in [4, 8, 16]

        self.stem_conv = stem_conv
        if stem_conv:
            self.conv = nn.Sequential(
                nn.Conv2d(in_chans, hidden_dim, kernel_size=7, stride=stem_stride,
                          padding=3, bias=False),  # 112x112
                nn.BatchNorm2d(hidden_dim),
                nn.ReLU(inplace=True),
                nn.Conv2d(hidden_dim, hidden_dim, kernel_size=3, stride=1,
                          padding=1, bias=False),  # 112x112
                nn.BatchNorm2d(hidden_dim),
                nn.ReLU(inplace=True),
                nn.Conv2d(hidden_dim, hidden_dim, kernel_size=3, stride=1,
                          padding=1, bias=False),  # 112x112
                nn.BatchNorm2d(hidden_dim),
                nn.ReLU(inplace=True),
            )

        self.proj = nn.Conv2d(hidden_dim,
                              embed_dim,
                              kernel_size=patch_size // stem_stride,
                              stride=patch_size // stem_stride)
        self.num_patches = (img_size // patch_size) * (img_size // patch_size)

    def forward(self, x):
        if self.stem_conv:
            x = self.conv(x)
        x = self.proj(x)  # B, C, H, W
        return x

class Downsample(nn.Module):
    """
    Image to Patch Embedding, downsampling between stage1 and stage2
    """

    def __init__(self, in_embed_dim, out_embed_dim, patch_size):
        super().__init__()
        self.proj = nn.Conv2d(in_embed_dim, out_embed_dim,
                              kernel_size=patch_size, stride=patch_size)

    def forward(self, x):
        x = x.permute(0, 3, 1, 2)
        x = self.proj(x)  # B, C, H, W
        x = x.permute(0, 2, 3, 1)
        return x

def outlooker_blocks(block_fn, index, dim, layers, num_heads=1, kernel_size=3,
                     padding=1, stride=1, mlp_ratio=3., qkv_bias=False, qk_scale=None,
                     attn_drop=0, drop_path_rate=0., **kwargs):
    """
    generate outlooker layer in stage1
    return: outlooker layers
    """
    blocks = []
    for block_idx in range(layers[index]):
        block_dpr = drop_path_rate * (block_idx +
                                      sum(layers[:index])) / (sum(layers) - 1)
        blocks.append(block_fn(dim, kernel_size=kernel_size, padding=padding,
                               stride=stride, num_heads=num_heads, mlp_ratio=mlp_ratio,
                               qkv_bias=qkv_bias, qk_scale=qk_scale, attn_drop=attn_drop,
                               drop_path=block_dpr))

    blocks = nn.Sequential(*blocks)

    return blocks

def transformer_blocks(block_fn, index, dim, layers, num_heads, mlp_ratio=3.,
                       qkv_bias=False, qk_scale=None, attn_drop=0,
                       drop_path_rate=0., **kwargs):
    """
    generate transformer layers in stage2
    return: transformer layers
    """
    blocks = []
    for block_idx in range(layers[index]):
        block_dpr = drop_path_rate * (block_idx +
                                      sum(layers[:index])) / (sum(layers) - 1)
        blocks.append(
            block_fn(dim, num_heads,
                     mlp_ratio=mlp_ratio,
                     qkv_bias=qkv_bias,
                     qk_scale=qk_scale,
                     attn_drop=attn_drop,
                     drop_path=block_dpr))

    blocks = nn.Sequential(*blocks)

    return blocks

class VOLO(nn.Module):
    """
    Vision Outlooker, the main class of our model
    --layers: [x,x,x,x], four blocks in two stages, the first block is outlooker, the
              other three are transformer, we set four blocks, which are easily
              applied to downstream tasks
    --img_size, --in_chans, --num_classes: these three are very easy to understand
    --patch_size: patch_size in outlook attention
    --stem_hidden_dim: hidden dim of patch embedding, d1-d4 is 64, d5 is 128
    --embed_dims, --num_heads: embedding dim, number of heads in each block
    --downsamples: flags to apply downsampling or not
    --outlook_attention: flags to apply outlook attention or not
    --mlp_ratios, --qkv_bias, --qk_scale, --drop_rate: easy to understand
    --attn_drop_rate, --drop_path_rate, --norm_layer: easy to understand
    --post_layers: post layers like two class attention layers using [ca, ca],
                   if yes, return_mean=False
    --return_mean: use mean of all feature tokens for classification, if yes, no class token
    --return_dense: use token labeling, details are here:
                    https://github.com/zihangJiang/TokenLabeling
    --mix_token: mixing tokens as token labeling, details are here:
                 https://github.com/zihangJiang/TokenLabeling
    --pooling_scale: pooling_scale=2 means we downsample 2x
    --out_kernel, --out_stride, --out_padding: kernel size,
                                               stride, and padding for outlook attention
    """

    def __init__(self, layers, img_size=224, in_chans=3, num_classes=1000, patch_size=8,
                 stem_hidden_dim=64, embed_dims=None, num_heads=None, downsamples=None,
                 outlook_attention=None, mlp_ratios=None, qkv_bias=False, qk_scale=None,
                 drop_rate=0., attn_drop_rate=0., drop_path_rate=0., norm_layer=nn.LayerNorm,
                 post_layers=None, return_mean=False, return_dense=True, mix_token=True,
                 pooling_scale=2, out_kernel=3, out_stride=2, out_padding=1):
        super().__init__()
        self.num_classes = num_classes
        self.patch_embed = PatchEmbed(stem_conv=True, stem_stride=2, patch_size=patch_size,
                                      in_chans=in_chans, hidden_dim=stem_hidden_dim,
                                      embed_dim=embed_dims[0])

        # initial positional encoding, we add positional encoding after outlooker blocks
        self.pos_embed = nn.Parameter(
            torch.zeros(1, img_size // patch_size // pooling_scale,
                        img_size // patch_size // pooling_scale,
                        embed_dims[-1]))
        self.pos_drop = nn.Dropout(p=drop_rate)

        # set the main block in network
        network = []
        for i in range(len(layers)):
            if outlook_attention[i]:
                # stage 1
                stage = outlooker_blocks(Outlooker, i, embed_dims[i], layers,
                                         downsample=downsamples[i], num_heads=num_heads[i],
                                         kernel_size=out_kernel, stride=out_stride,
                                         padding=out_padding, mlp_ratio=mlp_ratios[i],
                                         qkv_bias=qkv_bias, qk_scale=qk_scale,
                                         attn_drop=attn_drop_rate, norm_layer=norm_layer)
                network.append(stage)
            else:
                # stage 2
                stage = transformer_blocks(Transformer, i, embed_dims[i], layers,
                                           num_heads[i], mlp_ratio=mlp_ratios[i],
                                           qkv_bias=qkv_bias, qk_scale=qk_scale,
                                           drop_path_rate=drop_path_rate,
                                           attn_drop=attn_drop_rate,
                                           norm_layer=norm_layer)
                network.append(stage)

            if downsamples[i]:
                # downsampling between two stages
                network.append(Downsample(embed_dims[i], embed_dims[i + 1], 2))

        self.network = nn.ModuleList(network)

        # set post block, for example, class attention layers
        self.post_network = None
        if post_layers is not None:
            self.post_network = nn.ModuleList([
                get_block(post_layers[i],
                          dim=embed_dims[-1],
                          num_heads=num_heads[-1],
                          mlp_ratio=mlp_ratios[-1],
                          qkv_bias=qkv_bias,
                          qk_scale=qk_scale,
                          attn_drop=attn_drop_rate,
                          drop_path=0.,
                          norm_layer=norm_layer)
                for i in range(len(post_layers))
            ])
            self.cls_token = nn.Parameter(torch.zeros(1, 1, embed_dims[-1]))
            trunc_normal_(self.cls_token, std=.02)

        # set output type
        self.return_mean = return_mean  # if yes, return mean, not use class token
        self.return_dense = return_dense  # if yes, return class token and all feature tokens
        if return_dense:
            assert not return_mean, "cannot return both mean and dense"
        self.mix_token = mix_token
        self.pooling_scale = pooling_scale
        if mix_token:  # enable token mixing, see token labeling for details.
            self.beta = 1.0
            assert return_dense, "return all tokens if mix_token is enabled"
        if return_dense:
            self.aux_head = nn.Linear(
                embed_dims[-1],
                num_classes) if num_classes > 0 else nn.Identity()
        self.norm = norm_layer(embed_dims[-1])

        # Classifier head
        self.head = nn.Linear(
            embed_dims[-1], num_classes) if num_classes > 0 else nn.Identity()

        trunc_normal_(self.pos_embed, std=.02)
        self.apply(self._init_weights)

    def _init_weights(self, m):
        if isinstance(m, nn.Linear):
            trunc_normal_(m.weight, std=.02)
            if isinstance(m, nn.Linear) and m.bias is not None:
                nn.init.constant_(m.bias, 0)
        elif isinstance(m, nn.LayerNorm):
            nn.init.constant_(m.bias, 0)
            nn.init.constant_(m.weight, 1.0)

    @torch.jit.ignore
    def no_weight_decay(self):
        return {'pos_embed', 'cls_token'}

    def get_classifier(self):
        return self.head

    def forward_embeddings(self, x):
        # patch embedding
        x = self.patch_embed(x)
        # B,C,H,W-> B,H,W,C
        x = x.permute(0, 2, 3, 1)
        return x

    def forward_tokens(self, x):
        for idx, block in enumerate(self.network):
            if idx == 2:  # add positional encoding after outlooker blocks
                x = x + self.pos_embed
                x = self.pos_drop(x)
            x = block(x)

        B, H, W, C = x.shape
        x = x.reshape(B, -1, C)
        return x

    def forward_cls(self, x):
        B, N, C = x.shape
        cls_tokens = self.cls_token.expand(B, -1, -1)
        x = torch.cat((cls_tokens, x), dim=1)
        for block in self.post_network:
            x = block(x)
        return x

    def forward(self, x):
        # step1: patch embedding
        x = self.forward_embeddings(x)

        # mix token, see token labeling for details.
        if self.mix_token and self.training:
            lam = np.random.beta(self.beta, self.beta)
            patch_h = x.shape[1] // self.pooling_scale
            patch_w = x.shape[2] // self.pooling_scale
            bbx1, bby1, bbx2, bby2 = rand_bbox(x.size(), lam, scale=self.pooling_scale)
            temp_x = x.clone()
            sbbx1, sbby1, sbbx2, sbby2 = self.pooling_scale * bbx1, self.pooling_scale * bby1, \
                self.pooling_scale * bbx2, self.pooling_scale * bby2
            temp_x[:, sbbx1:sbbx2, sbby1:sbby2, :] = x.flip(0)[:, sbbx1:sbbx2, sbby1:sbby2, :]
            x = temp_x
        else:
            bbx1, bby1, bbx2, bby2 = 0, 0, 0, 0

        # step2: tokens learning in the two stages
        x = self.forward_tokens(x)

        # step3: post network, apply class attention or not
        if self.post_network is not None:
            x = self.forward_cls(x)
        x = self.norm(x)

        if self.return_mean:  # if no class token, return mean
            return self.head(x.mean(1))

        x_cls = self.head(x[:, 0])
        if not self.return_dense:
            return x_cls

        # generate classes in all feature tokens, see token labeling
        x_aux = self.aux_head(x[:, 1:])

        if not self.training:
            return x_cls + 0.5 * x_aux.max(1)[0]

        if self.mix_token and self.training:  # reverse "mix token", see token labeling for details.
            x_aux = x_aux.reshape(x_aux.shape[0], patch_h, patch_w, x_aux.shape[-1])

            temp_x = x_aux.clone()
            temp_x[:, bbx1:bbx2, bby1:bby2, :] = x_aux.flip(0)[:, bbx1:bbx2, bby1:bby2, :]
            x_aux = temp_x

            x_aux = x_aux.reshape(x_aux.shape[0], patch_h * patch_w, x_aux.shape[-1])

        # return these: 1. class token, 2. classes from all feature tokens, 3. bounding box
        return x_cls, x_aux, (bbx1, bby1, bbx2, bby2)

@register_model
def volo_d1(pretrained=False, **kwargs):
    """
    VOLO-D1 model, Params: 27M
    --layers: [x,x,x,x], four blocks in two stages, the first stage(block) is outlooker,
              the other three blocks are transformer, we set four blocks, which are easily
              applied to downstream tasks
    --embed_dims, --num_heads,: embedding dim, number of heads in each block
    --downsamples: flags to apply downsampling or not in four blocks
    --outlook_attention: flags to apply outlook attention or not
    --mlp_ratios: mlp ratio in four blocks
    --post_layers: post layers like two class attention layers using [ca, ca]
    See detail for all args in the class VOLO()
    """
    layers = [4, 4, 8, 2]  # num of layers in the four blocks
    embed_dims = [192, 384, 384, 384]
    num_heads = [6, 12, 12, 12]
    mlp_ratios = [3, 3, 3, 3]
    downsamples = [True, False, False, False]  # do downsampling after first block
    outlook_attention = [True, False, False, False]
    # first block is outlooker (stage1), the other three are transformer (stage2)
    model = VOLO(layers,
                 embed_dims=embed_dims,
                 num_heads=num_heads,
                 mlp_ratios=mlp_ratios,
                 downsamples=downsamples,
                 outlook_attention=outlook_attention,
                 post_layers=['ca', 'ca'],
                 **kwargs)
    model.default_cfg = default_cfgs['volo']
    return model

@register_model
def volo_d2(pretrained=False, **kwargs):
    """
    VOLO-D2 model, Params: 59M
    """
    layers = [6, 4, 10, 4]
    embed_dims = [256, 512, 512, 512]
    num_heads = [8, 16, 16, 16]
    mlp_ratios = [3, 3, 3, 3]
    downsamples = [True, False, False, False]
    outlook_attention = [True, False, False, False]
    model = VOLO(layers,
                 embed_dims=embed_dims,
                 num_heads=num_heads,
                 mlp_ratios=mlp_ratios,
                 downsamples=downsamples,
                 outlook_attention=outlook_attention,
                 post_layers=['ca', 'ca'],
                 **kwargs)
    model.default_cfg = default_cfgs['volo']
    return model

@register_model
def volo_d3(pretrained=False, **kwargs):
    """
    VOLO-D3 model, Params: 86M
    """
    layers = [8, 8, 16, 4]
    embed_dims = [256, 512, 512, 512]
    num_heads = [8, 16, 16, 16]
    mlp_ratios = [3, 3, 3, 3]
    downsamples = [True, False, False, False]
    outlook_attention = [True, False, False, False]
    model = VOLO(layers,
                 embed_dims=embed_dims,
                 num_heads=num_heads,
                 mlp_ratios=mlp_ratios,
                 downsamples=downsamples,
                 outlook_attention=outlook_attention,
                 post_layers=['ca', 'ca'],
                 **kwargs)
    model.default_cfg = default_cfgs['volo']
    return model

@register_model
def volo_d4(pretrained=False, **kwargs):
    """
    VOLO-D4 model, Params: 193M
    """
    layers = [8, 8, 16, 4]
    embed_dims = [384, 768, 768, 768]
    num_heads = [12, 16, 16, 16]
    mlp_ratios = [3, 3, 3, 3]
    downsamples = [True, False, False, False]
    outlook_attention = [True, False, False, False]
    model = VOLO(layers,
                 embed_dims=embed_dims,
                 num_heads=num_heads,
                 mlp_ratios=mlp_ratios,
                 downsamples=downsamples,
                 outlook_attention=outlook_attention,
                 post_layers=['ca', 'ca'],
                 **kwargs)
    model.default_cfg = default_cfgs['volo_large']
    return model

@register_model
def volo_d5(pretrained=False, **kwargs):
    """
    VOLO-D5 model, Params: 296M
    stem_hidden_dim=128, the dim in patch embedding is 128 for VOLO-D5
    """
    layers = [12, 12, 20, 4]
    embed_dims = [384, 768, 768, 768]
    num_heads = [12, 16, 16, 16]
    mlp_ratios = [4, 4, 4, 4]
    downsamples = [True, False, False, False]
    outlook_attention = [True, False, False, False]
    model = VOLO(layers,
                 embed_dims=embed_dims,
                 num_heads=num_heads,
                 mlp_ratios=mlp_ratios,
                 downsamples=downsamples,
                 outlook_attention=outlook_attention,
                 post_layers=['ca', 'ca'],
                 stem_hidden_dim=128,
                 **kwargs)
    model.default_cfg = default_cfgs['volo_large']
    return model
