常用摄像头一些点云深度矫正ROS程序(ZED & kinect v2 & D415)

ZED摄像头

获得中心点深度,未考虑RGB与深度映射(可参考下面D415)

#include <iostream>

#include <fstream>

#include <sstream>

#include <algorithm>

#include <dirent.h>

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <ros/ros.h>

#include <sensor_msgs/Image.h>

#include <cv_bridge/cv_bridge.h>

#include <image_transport/image_transport.h>

#include <sensor_msgs/image_encodings.h>

#include <opencv2/imgproc/imgproc.hpp>

void depthCallback(const sensor_msgs::Image::ConstPtr& msg)

{

// Get a pointer to the depth values casting the data

// pointer to floating point

float* depths = (float*)(&msg->data[0]);

// Image coordinates of the center pixel

int u = msg->width / 2;

int v = msg->height / 2;

// Linear index of the center pixel

int centerIdx = u + msg->width * v;

// Output the measure

ROS_INFO("Center distance : %g m", depths[centerIdx]);

}

static const std::string OPENCV_WINDOW = "Image window";

class ImageConverter

{

ros::NodeHandle nh_;

image_transport::ImageTransport it_;

image_transport::Subscriber image_sub_;

image_transport::Publisher image_pub_; public:

ImageConverter()

: it_(nh_)

{ // Subscrive to input video feed and publish output video feed

image_sub_ = it_.subscribe("/zed/right/image_raw_color", 1,

&ImageConverter::imageCb, this);

image_pub_ = it_.advertise("/image_converter/output_video", 1);

cv::namedWindow(OPENCV_WINDOW);

}

~ImageConverter()

{

cv::destroyWindow(OPENCV_WINDOW);

}

void imageCb(const sensor_msgs::ImageConstPtr& msg)

{

cv_bridge::CvImagePtr cv_ptr;

try

{

cv_ptr = cv_bridge::toCvCopy(msg, sensor_msgs::image_encodings::BGR8);

}

catch (cv_bridge::Exception& e)

{

ROS_ERROR("cv_bridge exception: %s", e.what());

return;

}

// Draw an example circle on the video stream

if (cv_ptr->image.rows > 60 && cv_ptr->image.cols > 60)

cv::circle(cv_ptr->image, cv::Point(50, 50), 10, CV_RGB(255,0,0));

// Update GUI Window

cv::imshow(OPENCV_WINDOW, cv_ptr->image);

cv::waitKey(3);

// Output modified video stream

image_pub_.publish(cv_ptr->toImageMsg());

}

};

//blog.csdn.net/qq_29985391/article/details/80247724

int main(int argc, char** argv) {

ros::init(argc, argv, "zed_video_subscriber");

/*

* NodeHandle is the main access point to communications with the ROS system.

* The first NodeHandle constructed will fully initialize this node, and the last

* NodeHandle destructed will close down the node.

*/

ros::NodeHandle n;

ros::Subscriber subDepth = n.subscribe("/zed/depth/depth_registered", 10, depthCallback);

//--只需要声明一个对象即可------------------

ImageConverter ic;//定义一个对象

//----------------------------------------

ros::spin();

return 0;

}

CMakeList.txt书写如下:

cmake_minimum_required(VERSION 2.8.3)

project(zed_depth_sub_tutorial)

# if CMAKE_BUILD_TYPE is not specified, take 'Release' as default

IF(NOT CMAKE_BUILD_TYPE)

SET(CMAKE_BUILD_TYPE Release)

ENDIF(NOT CMAKE_BUILD_TYPE)

## Find catkin and any catkin packages

find_package(catkin REQUIRED COMPONENTS

cv_bridge

image_transport

roscpp

sensor_msgs

std_msgs

geometry_msgs

)

find_package(OpenCV REQUIRED)

if(NOT WIN32)

ADD_DEFINITIONS("-std=c++0x -O3")

endif(NOT WIN32)

## Declare a catkin package

catkin_package()

include_directories(include ${catkin_INCLUDE_DIRS})

include_directories ( ${OpenCV_INCLUDE_DIRS} )

## Build

add_executable(zed_depth_sub src/zed_depth_sub_tutorial.cpp)

##target_link_libraries(zed_depth_sub ${catkin_LIBRARIES})

target_link_libraries( zed_depth_sub ${OpenCV_LIBRARIES} ${OpenCV_LIBS} ${catkin_LIBRARIES})

xml文件

<package>

<name>zed_depth_sub_tutorial</name>

<version>2.7.0</version>

<description>

This package is a tutorial showing how to subscribe to ZED depth streams

</description>

<maintainer email="support@stereolabs.com">STEREOLABS</maintainer>

<license>MIT</license>

<url type="website">http://stereolabs.com/</url>

<url type="repository">https://github.com/stereolabs/zed-ros-wrapper</url>

<url type="bugtracker">https://github.com/stereolabs/zed-ros-wrapper/issues</url>

<buildtool_depend>catkin</buildtool_depend>

<build_depend>cv_bridge</build_depend>

<build_depend>image_transport</build_depend>

<build_depend>opencv2</build_depend>

<build_depend>roscpp</build_depend>

<build_depend>sensor_msgs</build_depend>

<build_depend>std_msgs</build_depend>

<run_depend>cv_bridge</run_depend>

<run_depend>image_transport</run_depend>

<run_depend>opencv2</run_depend>

<run_depend>roscpp</run_depend>

<run_depend>sensor_msgs</run_depend>

<run_depend>std_msgs</run_depend>

</package>

报错了,怎是编译不了,这时如果大家用Qt进行编译发现报错,但是不指明在哪里报错,这时候可以到工作空间下,(我的工作空间是open_cv_ws),进行$catkin_make,这时候上面显示 format="2"应该去掉,去掉之后重新编译一下就可以了。

总结

话题/zed/right/image_raw_color可订阅ZED颜色图像,写在一个类里面,主程序直接定义一个对象就可以了

知道怎么去添加依赖,在CMakeList.txt中添加find_package(catkin REQUIRED COMPONENTS cv_bridge image_transport roscpp sensor_msgs std_msgs geometry_msgs ) find_package(OpenCV REQUIRED)

Kinect v2

ROS

做机器人相关的工作的同学, 同时对该部分也不会陌生吧。 机器人操作系统(ROS), 应用非常广泛, 并且有很多开源库, 包可以使用。 同时, 主流的传感器在ROS中也都有支持。 当然, Kinect2也是能够支持的。 ROS安装于Ubuntu之上, 我使用的版本是Ubuntu14.04 + ROS indigo。 关于ROS的安装问题, 可以查看官网相关指导 。 很简单的步骤。 依次输入下列命令:

sudo sh -c 'echo "deb http://packages.ros.org/ros/ubuntu $(lsb_release -sc) main" > /etc/apt/sources.list.d/ros-latest.list'

sudo apt-key adv --keyserver hkp://ha.pool.sks-keyservers.net --recv-key 0xB01FA116

sudo apt-get update

sudo apt-get install ros-indigo-desktop-full

sudo rosdep init

rosdep update

echo "source /opt/ros/indigo/setup.bash" >> ~/.bashrc

source ~/.bashrc

sudo apt-get install python-rosinstall

上述指令执行完毕之后, ROS也就安装完成了。 当然, 紧接着还需要建立自己的工作空间。 执行下述代码:

mkdir -p ~/catkin_ws/src

cd ~/catkin_ws/src

catkin_init_workspace

cd ..

catkin_make

驱动节点配置

在ROS环境里使用Kinect2, 主要依靠iai-kinect2这个包。 Github地址: https://github.com/code-iai/iai_kinect2 。

首先, 需要安装其libfreenect2, 步骤如下(以下默认以ubuntu14.04或更新的版本是系统版本, 别的系统, 参见原始网站说明):

libfreenect2

下载 libfreenect2 源码

git clone https://github.com/OpenKinect/libfreenect2.git

cd libfreenect2

下载upgrade deb 文件

cd depends; ./download_debs_trusty.sh

安装编译工具

sudo apt-get install build-essential cmake pkg-config

安装 libusb. 版本需求 >= 1.0.20.

sudo dpkg -i debs/libusb*deb

安装 TurboJPEG

sudo apt-get install libturbojpeg libjpeg-turbo8-dev

安装 OpenGL

sudo dpkg -i debs/libglfw3*deb

sudo apt-get install -f

sudo apt-get install libgl1-mesa-dri-lts-vivid

(If the last command conflicts with other packages, don’t do it.) 原文如上提示, 我在安装的时候, 第三条指令确实出现了错误, 就直接忽略第三条指令了。

----安装 OpenCL (可选)

-----------Intel GPU:

sudo apt-add-repository ppa:floe/beignet

sudo apt-get update

sudo apt-get install beignet-dev

sudo dpkg -i debs/ocl-icd*deb

-----------AMD GPU: apt-get install opencl-headers

-----------验证安装: clinfo

----安装 CUDA (可选, Nvidia only):

-----------(Ubuntu 14.04 only) Download cuda-repo-ubuntu1404…*.deb (“deb (network)”) from Nvidia website, follow their installation instructions, including apt-get install cuda which installs Nvidia graphics driver.

-----------(Jetson TK1) It is preloaded.

-----------(Nvidia/Intel dual GPUs) After apt-get install cuda, use sudo prime-select intel to use Intel GPU for desktop.

-----------(Other) Follow Nvidia website’s instructions.

----安装 VAAPI (可选, Intel only)sudo dpkg -i debs/{libva,i965}*deb; sudo apt-get install

----安装 OpenNI2 (可选)

powershell

sudo apt-add-repository ppa:deb-rob/ros-trusty && sudo apt-get update

sudo apt-get install libopenni2-dev

----Build

powershell

cd ..

mkdir build && cd build

cmake .. -DCMAKE_INSTALL_PREFIX=$HOME/freenect2 -DENABLE_CXX11=ON

make

make install

针对上面cmake命令的说明, 第一个参数, 是特别指定安装的位置, 你也可以指定别的你觉得高兴的地方, 但一般标准的路径是上述示例路径或者/usr/local。 第二个参数是增加C++11的支持。

----设定udev rules: sudo cp ../platform/linux/udev/90-kinect2.rules /etc/udev/rules.d/, 然后重新插拔Kinect2.

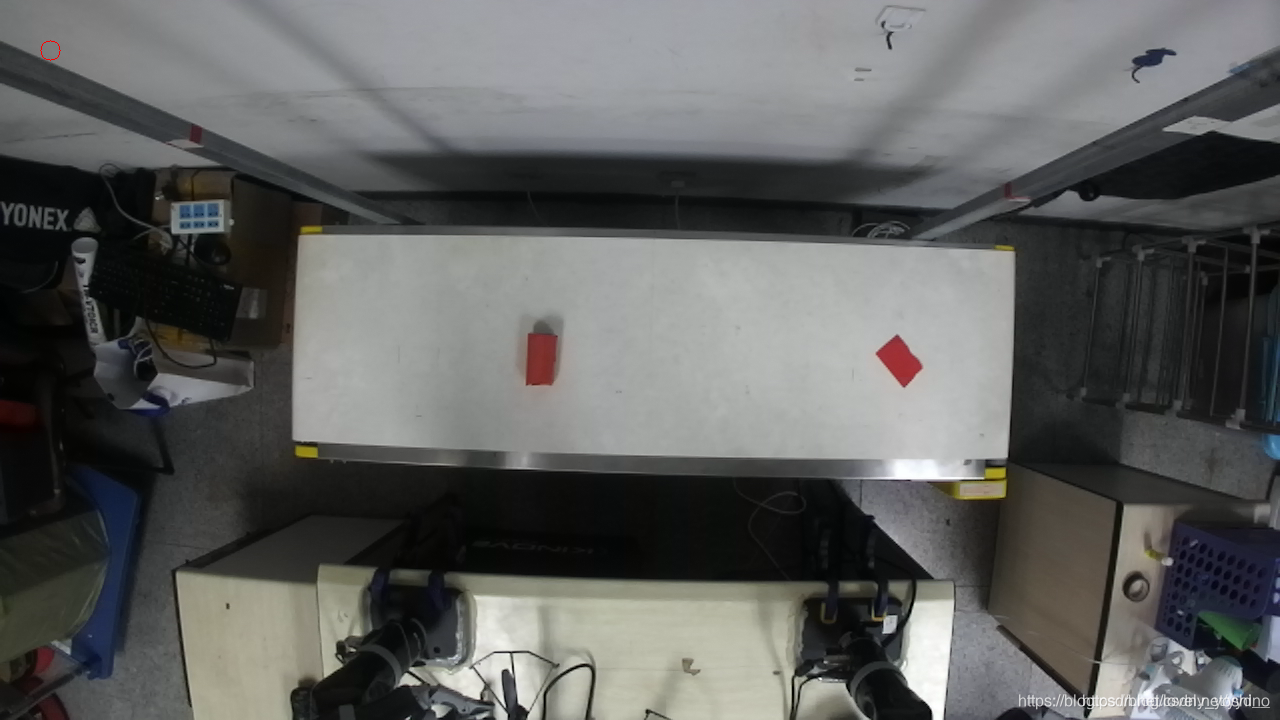

----一切搞定, 现在可以尝试运行Demo程序: ./bin/Protonect, 不出意外, 应该能够看到如下效果:

iai-kinect2

利用命令行从Github上面下载工程源码到工作空间内src文件夹内:

cd ~/catkin_ws/src/

git clone https://github.com/code-iai/iai_kinect2.git

cd iai_kinect2

rosdep install -r --from-paths .

cd ~/catkin_ws

catkin_make -DCMAKE_BUILD_TYPE="Release"

针对于上述命令中最后一行指令, 需要说明的是, 如果前面libfreenect2你安装的位置不是标准的两个路径下, 需要提供参数指定libfreenect2所在路径:

catkin_make -Dfreenect2_DIR=path_to_freenect2/lib/cmake/freenect2 -DCMAKE_BUILD_TYPE="Release"

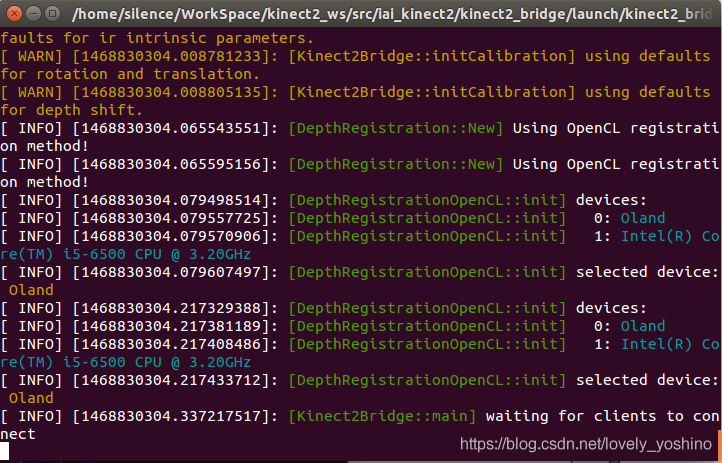

编译结束, 一切OK的话, 会看到如下提示:

最后就是激动人心的时刻了, 在ROS中获取Kinect2的数据。

PS: 很多同学在运行下属命令时,时常会遇到不能执行的问题,大部分情况是没有source对应的目录。应该先执行source ~/catkin_ws/devel/setup.bash,若对应已经写入~/.bashrc文件的同学,可以忽略。

roslaunch kinect2_bridge kinect2_bridge.launch

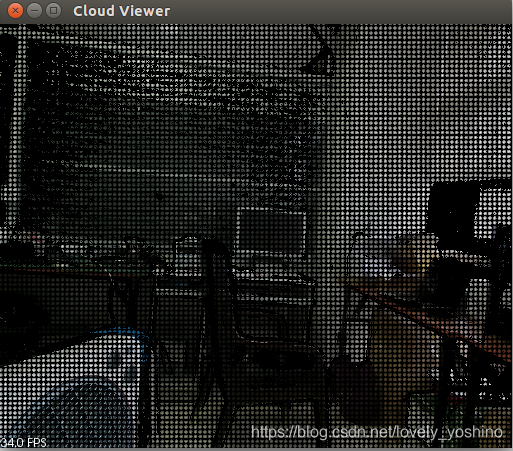

使用roslaunch发起kinect2相关节点, 可以看到如下结果, 在另外一个命令行中, 输入rostopic list, 可以查看到该节点发布出来的Topic, 还可以输入rosrun kinect2_viewer kinect2_viewer sd cloud, 来开启一个Viewer查看数据。 结果如下图所示:

简单运用

很久没有留意这篇博客了, 上面的内容, 是之前一个工作中需要用到Kinect2, 所以试着弄了一下, 将其整理下来形成这篇博客的.

今天突然有一个同学在站内给我私信, 问我这篇博客的内容. 分享出来的东西帮助到了别人, 确实很高兴! 关于这位同学问到的问题, 其实在前面的工作中, 我也实现过. 下面试着回忆整理一下.

保存图片

其实目的就一个, 将Kinect2的RGB图保存下来. 在前面的叙述中, 输入rosrun kinect2_viewer kinect2_viewer sd cloud来查看显示效果. 这句命令实质就是运行kinect2_viewer包中的kinect2_viewer节点, 给定两个参数sd 和 cloud. 进入这个包的路径, 是可以看到这个节点源码. 源码中主函数摘录如下:

int main(int argc, char **argv) {

... ... // 省略了部分代码

topicColor = "/" + ns + topicColor;

topicDepth = "/" + ns + topicDepth;

OUT_INFO("topic color: " FG_CYAN << topicColor << NO_COLOR);

OUT_INFO("topic depth: " FG_CYAN << topicDepth << NO_COLOR);

// Receiver是一个类, 也定义在该文件中.useExact(true), useCompressed(false)

Receiver receiver(topicColor, topicDepth, useExact, useCompressed);

OUT_INFO("starting receiver...");

// 运行时给出参数"cloud", 则mode = Receiver::CLOUD

// 运行时给出参数"image", 则mode = Receiver::IMAGE

// 运行时给出参数"both", 则mode = Receiver::BOTH

receiver.run(mode);

ros::shutdown();

return 0;

}

Receiver类的实现也不算太复杂. 其中用于显示的主循环在imageViewer()或cloudViewer()中. 依据给的参数的不同, 将会调用不同的函数. 对应关系如下所示:

switch(mode) {

case CLOUD:

cloudViewer();

break;

case IMAGE:

imageViewer();

break;

case BOTH:

imageViewerThread = std::thread(&Receiver::imageViewer, this);

cloudViewer();

break;

}

BOTH选项, 将会出现两个窗口来显示图像. 上面两个函数都已经实现了图片保存的功能.代码摘录如下, 两个函数实现类似, 故只是摘录了imageViewer()的内容:

int key = cv::waitKey(1);

switch(key & 0xFF) {

case 27:

case 'q':

running = false;

break;

case ' ':

case 's':

if(mode == IMAGE) {

createCloud(depth, color, cloud);

saveCloudAndImages(cloud, color, depth, depthDisp);

} else {

save = true;

}

break;

}

其中关键函数saveCloudAndImages()的实现如下:

void saveCloudAndImages(

const pcl::PointCloud<pcl::PointXYZRGBA>::ConstPtr cloud,

const cv::Mat &color, const cv::Mat &depth, const cv::Mat &depthColored) {

oss.str("");

oss << "./" << std::setfill('0') << std::setw(4) << frame;

// 所有文件都保存在当前路径下

const std::string baseName = oss.str();

const std::string cloudName = baseName + "_cloud.pcd";

const std::string colorName = baseName + "_color.jpg";

const std::string depthName = baseName + "_depth.png";

const std::string depthColoredName = baseName + "_depth_colored.png";

OUT_INFO("saving cloud: " << cloudName);

// writer是该类的pcl::PCDWriter类型的成员变量

writer.writeBinary(cloudName, *cloud);

OUT_INFO("saving color: " << colorName);

cv::imwrite(colorName, color, params);

OUT_INFO("saving depth: " << depthName);

cv::imwrite(depthName, depth, params);

OUT_INFO("saving depth: " << depthColoredName);

cv::imwrite(depthColoredName, depthColored, params);

OUT_INFO("saving complete!");

++frame;

}

从上面代码中可以看出来, 如果想要保存图片, 只需要选中显示图片的窗口, 然后输入单击键盘s键或者空格键就OK, 保存的图片就在当前目录.

如果有一些特别的需求, 在上面源码的基础上来进行实现, 将会事半功倍. 下面就是一个小小的例子.

保存图片序列

如果想要保存一个图片序列, 仅仅控制开始结束, 例如, 按键B(Begin)开始保存, 按键E(End)结束保存.

完成这样一个功能, 在源码的基础上进行适当更改就可以满足要求. 首选, 需要每一次对按键B和E的判断, 需要新增到上面摘录的switch(key & 0xFF)块中. 需要连续保存的话, 简单的设定一个标志位即可.

首先, 复制vewer.cpp文件, 命名为save_seq.cpp. 修改save_seq.cpp文件, 在Receiver类中bool save变量下面添加一个新的成员变量, bool save_seq. 在类的构造函数的初始化列表中新增该变量的初始化save_seq(false).

定位到void imageViewer()函数, 修改对应的switch(key & 0xFF)块如下:

int key = cv::waitKey(1);

switch(key & 0xFF) {

case 27:

case 'q':

running = false;

break;

case 'b': save_seq = true; save = true; break;

case 'e': save_seq = false; save = false; break;

case ' ':

case 's':

if (save_seq) break;

if(mode == IMAGE) {

createCloud(depth, color, cloud);

saveCloudAndImages(cloud, color, depth, depthDisp);

} else {

save = true;

}

break;

}

if (save_seq) {

createCloud(depth, color, cloud);

saveCloudAndImages(cloud, color, depth, depthDisp);

}

定位到void cloudViewer()函数, 修改对应的if (save)块如下:

if(save || save_seq) {

save = false;

cv::Mat depthDisp;

dispDepth(depth, depthDisp, 12000.0f);

saveCloudAndImages(cloud, color, depth, depthDisp);

}

定位到void keyboardEvent(const pcl::visualization::KeyboardEvent &event, void *)函数, 修改源码如下:

if(event.keyUp()) {

switch(event.getKeyCode()) {

case 27:

case 'q':

running = false;

break;

case ' ':

case 's':

save = true;

break;

case 'b':

save_seq = true;

break;

case 'e':

save_seq = false;

break;

}

}

在CMakeList.txt的最后, 添加如下指令:

add_executable(save_seq src/save_seq.cpp)

target_link_libraries(save_seq

${catkin_LIBRARIES}

${OpenCV_LIBRARIES}

${PCL_LIBRARIES}

${kinect2_bridge_LIBRARIES}

)

返回到catkin主目录, 运行catkin_make, 编译, 链接没有问题的话, 就可以查看效果了. 当然了, 首先是需要启动kinect_bride的. 依次运行下述指令:

roslaunch kinect2_bridge kinect2_bridge.launch

rosrun kinect2_viewer save_seq hd cloud

选中弹出的窗口, 按键B 开始, 按键E结束保存. Terminal输出结果如下:

点击点云图获取坐标

主要想法是, 鼠标在点云图中移动时, 实时显示当前鼠标所指的点的三维坐标, 点击鼠标时, 获取该坐标发送出去.

这样的话, 首先就需要对鼠标有一个回调函数, 当鼠标状态改变时, 做出对应的变化. 鼠标变化可以简化为三种情况:

----鼠标移动

----鼠标左键点击

----鼠标右键点击

因为涉及到回调函数, 而且也只是一个小功能, 代码实现很简单. 直接使用了三个全局变量代表这三个状态(代码需要支持C++11, 不想那么麻烦的话, 可以将类型更改为volatile int), 以及一个全局的普通函数:

std::atomic_int mouseX;

std::atomic_int mouseY;

std::atomic_int mouseBtnType;

void onMouse(int event, int x, int y, int flags, void* ustc) {

// std::cout << "onMouse: " << x << " " << y << std::endl;

mouseX = x;

mouseY = y;

mouseBtnType = event;

}

在初始化中添加两个ros::Publisher, 分别对应鼠标左键和右键压下应该发布数据到达的话题. 如下所示:

ros::Publisher leftBtnPointPub =

nh.advertise<geometry_msgs::PointStamped>("/kinect2/click_point/left", 1);

ros::Publisher rightBtnPointPub =

nh.advertise<geometry_msgs::PointStamped>("/kinect2/click_point/right", 1);

其中消息格式是geometry_msgs/PointStamped, ROS自带的消息, 在源码头部需要包含头文件, #include <geometry_msgs/PointStamped.h>, 具体定义如下:

std_msgs/Header header

uint32 seq

time stamp

string frame_id

geometry_msgs/Point point

float64 x

float64 y

float64 z

然后再重写cloudViewer()函数如下:

void cloudViewer() {

cv::Mat color, depth;

std::chrono::time_point<std::chrono::high_resolution_clock> start, now;

double fps = 0;

size_t frameCount = 0;

std::ostringstream oss;

std::ostringstream ossXYZ; // 新增一个string流

const cv::Point pos(5, 15);

const cv::Scalar colorText = CV_RGB(255, 0, 0);

const double sizeText = 0.5;

const int lineText = 1;

const int font = cv::FONT_HERSHEY_SIMPLEX;

// 从全局变量获取当前鼠标坐标

int img_x = mouseX;

int img_y = mouseY;

geometry_msgs::PointStamped ptMsg;

ptMsg.header.frame_id = "kinect_link";

lock.lock();

color = this->color;

depth = this->depth;

updateCloud = false;

lock.unlock();

const std::string window_name = "color viewer";

cv::namedWindow(window_name);

// 注册鼠标回调函数, 第三个参数是C++11中的关键字, 若不支持C++11, 替换成NULL

cv::setMouseCallback(window_name, onMouse, nullptr);

createCloud(depth, color, cloud);

for(; running && ros::ok();) {

if(updateCloud) {

lock.lock();

color = this->color;

depth = this->depth;

updateCloud = false;

lock.unlock();

createCloud(depth, color, cloud);

img_x = mouseX;

img_y = mouseY;

const pcl::PointXYZRGBA& pt = cloud->points[img_y * depth.cols + img_x];

ptMsg.point.x = pt.x;

ptMsg.point.y = pt.y;

ptMsg.point.z = pt.z;

// 根据鼠标左键压下或右键压下, 分别发布三维坐标到不同的话题上去

switch (mouseBtnType) {

case cv::EVENT_LBUTTONUP:

ptMsg.header.stamp = ros::Time::now();

leftBtnPointPub.publish(ptMsg);

ros::spinOnce();

break;

case cv::EVENT_RBUTTONUP:

ptMsg.header.stamp = ros::Time::now();

rightBtnPointPub.publish(ptMsg);

ros::spinOnce();

break;

default:

break;

}

mouseBtnType = cv::EVENT_MOUSEMOVE;

++frameCount;

now = std::chrono::high_resolution_clock::now();

double elapsed =

std::chrono::duration_cast<std::chrono::milliseconds>(now - start).count() / 1000.0;

if(elapsed >= 1.0) {

fps = frameCount / elapsed;

oss.str("");

oss << "fps: " << fps << " ( " << elapsed / frameCount * 1000.0 << " ms)";

start = now;

frameCount = 0;

}

cv::putText(color, oss.str(), pos, font, sizeText, colorText, lineText, CV_AA);

ossXYZ.str("");

ossXYZ << "( " << ptMsg.point.x << ", " << ptMsg.point.y

<< ", " << ptMsg.point.z << " )";

cv::putText(color, ossXYZ.str(), cv::Point(img_x, img_y), font, 1, colorText, 3, CV_AA);

// cv::circle(color, cv::Point(mouseX, mouseY), 5, cv::Scalar(0, 0, 255), -1);

cv::imshow(window_name, color);

// cv::imshow(window_name, depth);

cv::waitKey(1);

}

}

cv::destroyAllWindows();

cv::waitKey(100);

}

最后, 稍微改写一下主函数就OK了, 整个主函数摘录如下, 其中去掉了多余的参数解析, 让使用更加固定, 简单.

int main(int argc, char **argv) {

#if EXTENDED_OUTPUT

ROSCONSOLE_AUTOINIT;

if(!getenv("ROSCONSOLE_FORMAT"))

{

ros::console::g_formatter.tokens_.clear();

ros::console::g_formatter.init("[${severity}] ${message}");

}

#endif

ros::init(argc, argv, "kinect2_viewer", ros::init_options::AnonymousName);

if(!ros::ok()) {

return 0;

}

std::string ns = K2_DEFAULT_NS;

std::string topicColor = K2_TOPIC_HD K2_TOPIC_IMAGE_COLOR K2_TOPIC_IMAGE_RECT;

std::string topicDepth = K2_TOPIC_HD K2_TOPIC_IMAGE_DEPTH K2_TOPIC_IMAGE_RECT;

bool useExact = true;

bool useCompressed = false;

Receiver::Mode mode = Receiver::CLOUD;

topicColor = "/" + ns + topicColor;

topicDepth = "/" + ns + topicDepth;

OUT_INFO("topic color: " FG_CYAN << topicColor << NO_COLOR);

OUT_INFO("topic depth: " FG_CYAN << topicDepth << NO_COLOR);

Receiver receiver(topicColor, topicDepth, useExact, useCompressed);

OUT_INFO("starting receiver...");

OUT_INFO("Please click mouse in color viewer...");

receiver.run(mode);

ros::shutdown();

return 0;

}

在CMakeList.txt中加入下面两段话, 然后make就OK.

add_executable(click_rgb src/click_rgb.cpp)

target_link_libraries(click_rgb

${catkin_LIBRARIES}

${OpenCV_LIBRARIES}

${PCL_LIBRARIES}

${kinect2_bridge_LIBRARIES}

)

install(TARGETS click_rgb

RUNTIME DESTINATION ${CATKIN_PACKAGE_BIN_DESTINATION}

)

运行的话, 输入rosrun kinect2_viewer click_rgb就OK. 效果如下图所示, 图中红色坐标位置, 实际是鼠标所在位置, 截图时, 鼠标截不下来.

基于深度相机D415的彩色与深度图像匹配

环境配置可以参考考另一篇博文

- 最近在用D435做物体识别抓取的时候发现二维坐标到三维坐标没有一个直接能用的从二维像素点坐标转换到三维坐标的ROS节点,自己之前写过的kinect V2的坐标映射的通用性太差,所以这次写了一个节点,利用深度相机ROS节点发布的深度与彩色对齐的图像话题和图像信息内外参话题,将二维坐标映射到三维坐标系。

- 我挂上来的这个节点是将鼠标指向的二维坐标转换到三维坐标系下,并发布话题可视化到了rviz中,因为我自己的物体识别发布的像素坐标话题是一个自己写的消息文件,这次就不放出来了,需要用的把我这里的鼠标反馈的坐标改成你自己的坐标就行了。

- 原理:深度相机会发布一个裁剪对齐好的深度图像或者彩色图像话题,以D415为例,当我们输入:

roslaunch realsense2_camera rs_camera.launch后就可以启动程序。并看到它的ROS节点发布了/camera/aligned_depth_to_color/image_raw(即深度对齐到彩色的话题),这样只需要找到彩色图像的坐标影色到它的坐标读取一下深度,再通过内参矩阵计算就行了,而内参矩阵也通过了/camera/aligned_depth_to_color/camera_info话题发布出来,直接读取即可。 - 效果图:(ps.为了满足gif尺寸限制只能牺牲下帧率了,左边的鼠标映射到右边的粉色的球)

代码:

coordinate_map.cpp

/**********************

coordinate_map.cpp

author: wxw

email: wangxianwei1994@foxmail.com

time: 2019-7-29

**********************/

#include <iostream>

#include <string>

#include <opencv2/opencv.hpp>

#include <ros/ros.h>

#include <cv_bridge/cv_bridge.h>

#include <geometry_msgs/PointStamped.h>

#include <image_transport/image_transport.h>

#include <sensor_msgs/CameraInfo.h>

#include <sensor_msgs/Image.h>

using namespace cv;

using namespace std;

class ImageConverter {

private:

ros::NodeHandle nh_;

image_transport::ImageTransport it_;

image_transport::Subscriber image_sub_color;//接收彩色图像

image_transport::Subscriber image_sub_depth;//接收深度图像

ros::Subscriber camera_info_sub_;//接收深度图像对应的相机参数话题

ros::Publisher arm_point_pub_;//发布一个三维坐标点,可用于可视化

sensor_msgs::CameraInfo camera_info;

geometry_msgs::PointStamped output_point;

/* Mat depthImage,colorImage; */

Mat colorImage;

Mat depthImage = Mat::zeros( 480, 640, CV_16UC1 );//注意这里要修改为你接收的深度图像尺寸

Point mousepos = Point( 0, 0 ); /* mousepoint to be map */

public:

//获取鼠标的坐标,通过param指针传出到类成员Point mousepos

static void on_mouse( int event, int x, int y, int flags, void *param )

{

switch ( event )

{

case CV_EVENT_MOUSEMOVE: /* move mouse */

{

Point &tmppoint = *(cv::Point *) param;

tmppoint = Point( x, y );

} break;

}

}

ImageConverter() : it_( nh_ )

{

//topic sub:

image_sub_depth = it_.subscribe( "/camera/aligned_depth_to_color/image_raw",

1, &ImageConverter::imageDepthCb, this );

image_sub_color = it_.subscribe( "/camera/color/image_raw", 1,

&ImageConverter::imageColorCb, this );

camera_info_sub_ =

nh_.subscribe( "/camera/aligned_depth_to_color/camera_info", 1,

&ImageConverter::cameraInfoCb, this );

//topic pub:

arm_point_pub_ =

nh_.advertise<geometry_msgs::PointStamped>( "/mouse_point", 10 );

cv::namedWindow( "colorImage" );

setMouseCallback( "colorImage", &ImageConverter::on_mouse,

(void *) &mousepos );

}

~ImageConverter()

{

cv::destroyWindow( "colorImage" );

}

void cameraInfoCb( const sensor_msgs::CameraInfo &msg )

{

camera_info = msg;

}

void imageDepthCb( const sensor_msgs::ImageConstPtr &msg )

{

cv_bridge::CvImagePtr cv_ptr;

try {

cv_ptr =

cv_bridge::toCvCopy( msg, sensor_msgs::image_encodings::TYPE_16UC1 );

depthImage = cv_ptr->image;

} catch ( cv_bridge::Exception &e ) {

ROS_ERROR( "cv_bridge exception: %s", e.what() );

return;

}

}

void imageColorCb( const sensor_msgs::ImageConstPtr &msg )

{

cv_bridge::CvImagePtr cv_ptr;

try {

cv_ptr = cv_bridge::toCvCopy( msg, sensor_msgs::image_encodings::BGR8 );

colorImage = cv_ptr->image;

} catch ( cv_bridge::Exception &e ) {

ROS_ERROR( "cv_bridge exception: %s", e.what() );

return;

}

//先查询对齐的深度图像的深度信息,根据读取的camera info内参矩阵求解对应三维坐标

float real_z = 0.001 * depthImage.at<u_int16_t>( mousepos.y, mousepos.x );

float real_x =

(mousepos.x - camera_info.K.at( 2 ) ) / camera_info.K.at( 0 ) * real_z;

float real_y =

(mousepos.y - camera_info.K.at( 5 ) ) / camera_info.K.at( 4 ) * real_z;

char tam[100];

sprintf( tam, "(%0.2f,%0.2f,%0.2f)", real_x, real_y, real_z );

putText( colorImage, tam, mousepos, FONT_HERSHEY_SIMPLEX, 0.6,

cvScalar( 0, 0, 255 ), 1 );//打印到屏幕上

circle( colorImage, mousepos, 2, Scalar( 255, 0, 0 ) );

output_point.header.frame_id = "/camera_depth_optical_frame";

output_point.header.stamp = ros::Time::now();

output_point.point.x = real_x;

output_point.point.y = real_y;

output_point.point.z = real_z;

arm_point_pub_.publish( output_point );

cv::imshow( "colorImage", colorImage );

cv::waitKey( 1 );

}

};

int main( int argc, char **argv )

{

ros::init( argc, argv, "coordinate_map" );

ImageConverter imageconverter;

ros::spin();

return(0);

}

CMakeList.txt

cmake_minimum_required(VERSION 2.8.3)

project(coordinate_map)

## Compile as C++11, supported in ROS Kinetic and newer

add_compile_options(-std=c++11)

find_package(catkin REQUIRED COMPONENTS roscpp rostime std_msgs sensor_msgs message_filters cv_bridge image_transport

compressed_image_transport tf compressed_depth_image_transport geometry_msgs )

## System dependencies are found with CMake's conventions

find_package(OpenCV REQUIRED)

catkin_package(

)

include_directories(include

${catkin_INCLUDE_DIRS}

${OpenCV_INCLUDE_DIRS}

)

add_executable(coordinate_map src/coordinate_map.cpp)

target_link_libraries(coordinate_map

${catkin_LIBRARIES}

${OpenCV_LIBRARIES}

)

package.xml

<?xml version="1.0"?>

<package>

<name>coordinate_map</name>

<version>1.0.0</version>

<description>coordinate_map package</description>

<maintainer email="wangxianwei1994@foxmail.com">Wangxianwei</maintainer>

<license>BSD</license>

<buildtool_depend>catkin</buildtool_depend>

<build_depend>roscpp</build_depend>

<build_depend>rostime</build_depend>

<build_depend>std_msgs</build_depend>

<build_depend>sensor_msgs</build_depend>

<build_depend>message_filters</build_depend>

<build_depend>image_transport</build_depend>

<build_depend>compressed_image_transport</build_depend>

<build_depend>compressed_depth_image_transport</build_depend>

<build_depend>cv_bridge</build_depend>

<build_depend>tf</build_depend>

<build_depend>nav_msgs</build_depend>

<run_depend>message_runtime</run_depend>

<run_depend>roscpp</run_depend>

<run_depend>rostime</run_depend>

<run_depend>std_msgs</run_depend>

<run_depend>sensor_msgs</run_depend>

<run_depend>message_filters</run_depend>

<run_depend>image_transport</run_depend>

<run_depend>compressed_image_transport</run_depend>

<run_depend>compressed_depth_image_transport</run_depend>

<run_depend>cv_bridge</run_depend>

<run_depend>tf</run_depend>

<run_depend>nav_msgs</run_depend>

<export>

</export>

</package>

- 点赞

- 收藏

- 关注作者

评论(0)