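Building a Linux + Docker + Ansible + Kubernetes learning environment from scratch (1 Master + 3 Nodes)

I have long wanted to learn K8s but never had an environment for it, and K8s itself is fairly heavyweight. Before going back to school I rented an Alibaba Cloud ECS instance (single core, 2 GB). A single-machine K8s barely fits on it, a multi-node setup is out of the question, and the demos in the book cannot be followed. Since several nodes are needed and that means operating on multiple machines, I am also taking the chance to brush up on Ansible.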

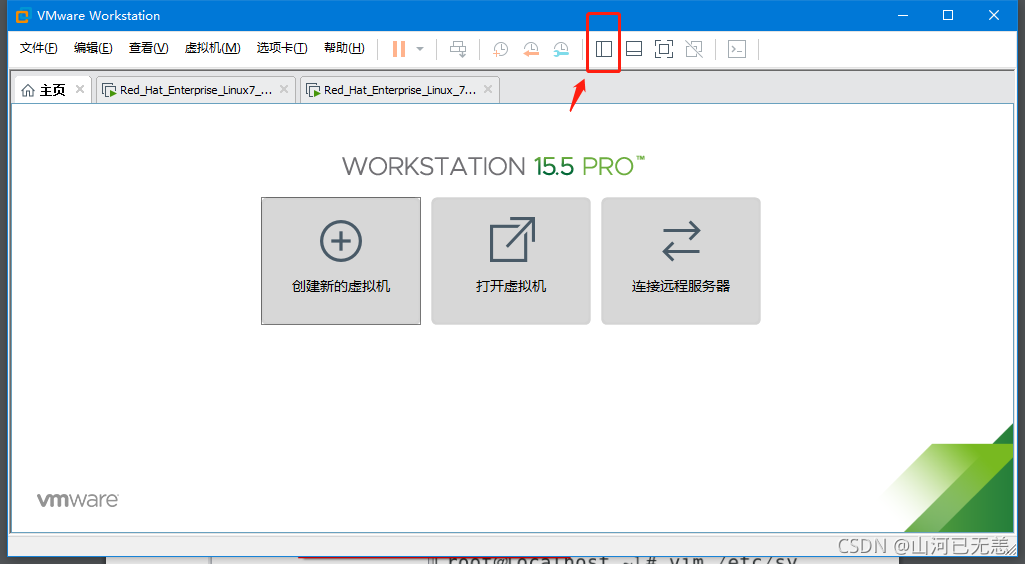

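This is a tutorial on building a learning environment from scratch on Win10. It covers:

- Installing four Linux virtual machines with VMware Workstation: one Master (management) node and three Node (compute) nodes.
- Using bridged mode so the VMs can reach the internet and can be reached over ssh from the Win10 physical machine.
- Passwordless ssh login from the Master node to any Node machine.
- Configuring Ansible with the Master node as the controller, using a role to set up time synchronization and playbooks to install and configure Docker, K8s, and so on.
- Installing the Docker and K8s cluster packages, network configuration, and so on.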

This tutorial assumes you already have VMware Workstation and a Linux ISO image, and that VMware Workstation is already installed. If not, both can be downloaded online.

"All I ever wanted was to live out what was trying to emerge from within me. Why was that so very difficult?" (Hermann Hesse, Demian)

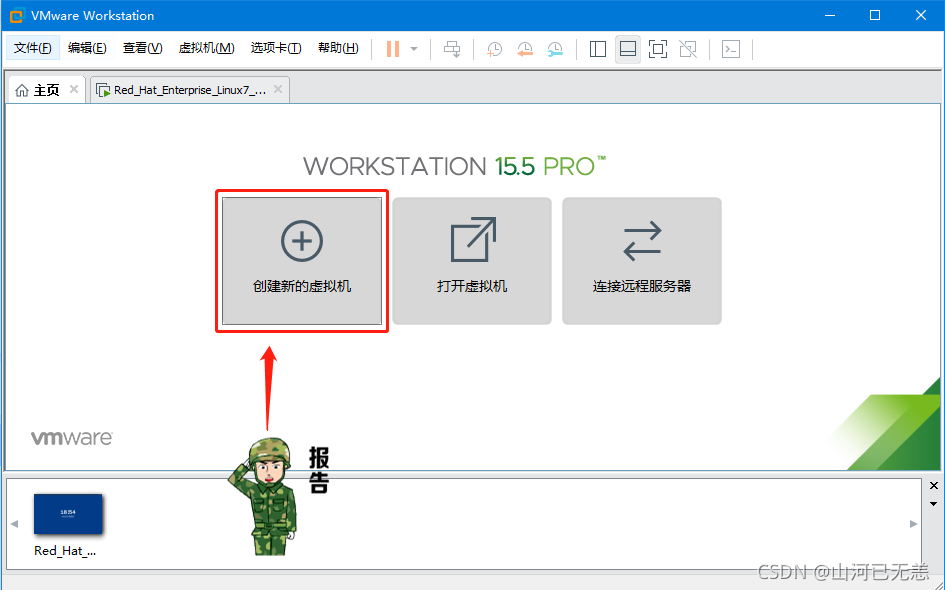

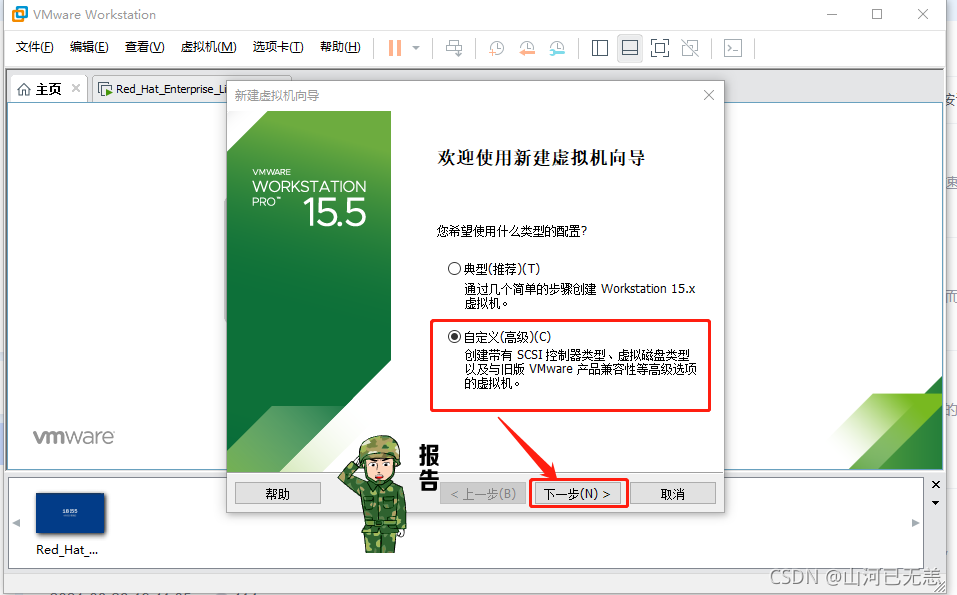

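This assumes you have already installed VMware Workstation (VMware-workstation-full-15.5.6-16341506.exe) and prepared a Linux installation disc image (CentOS-7-x86_64-DVD-1810.iso). The versions in parentheses are the ones I used. Our approach:

First install one Node machine, then clone it to get the other two Node machines and the Master machine.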

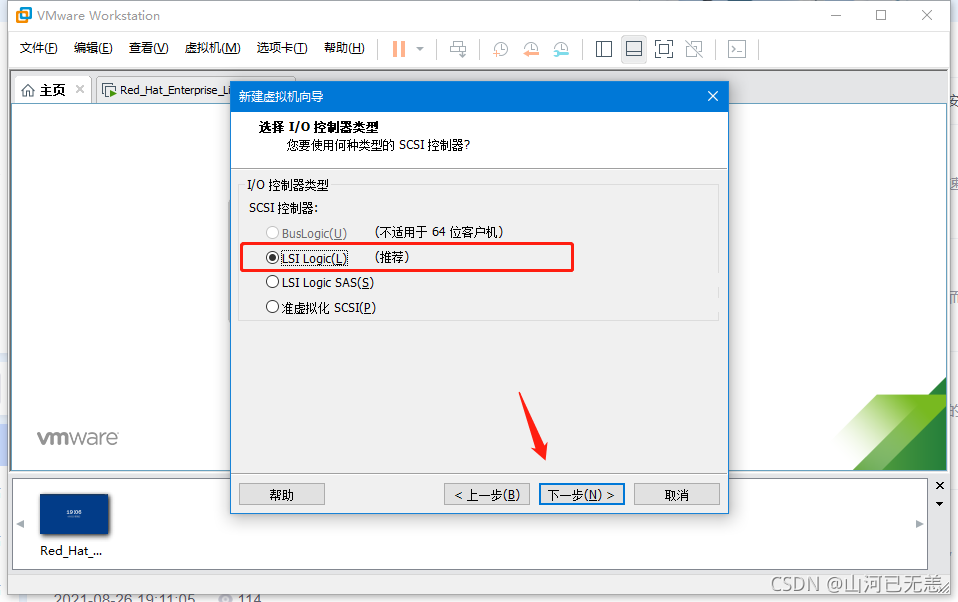

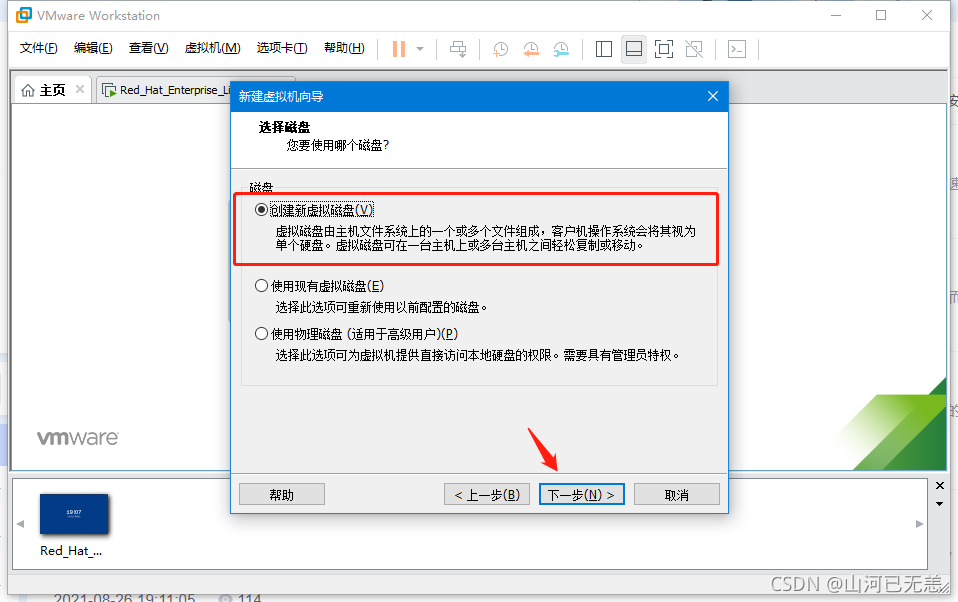

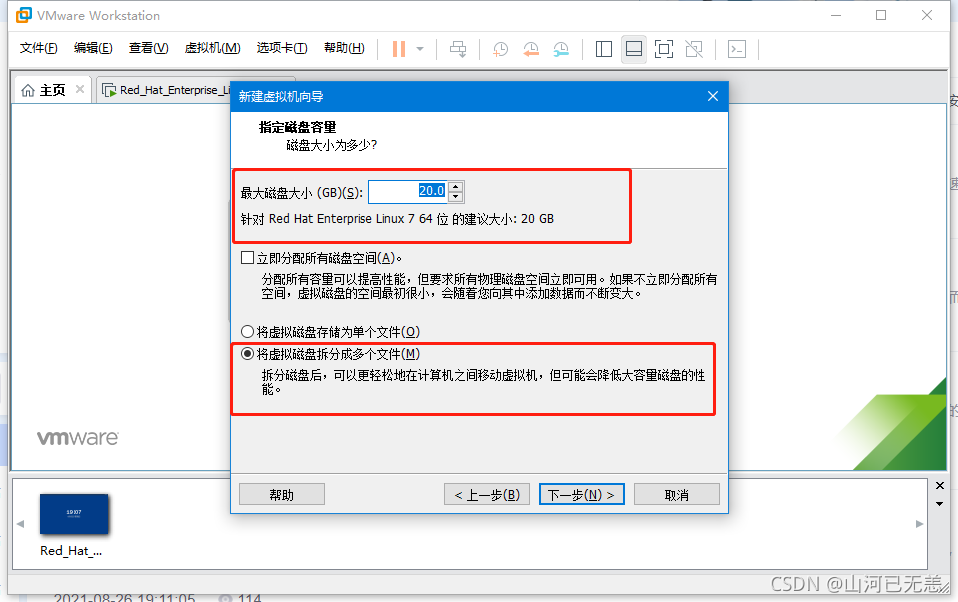

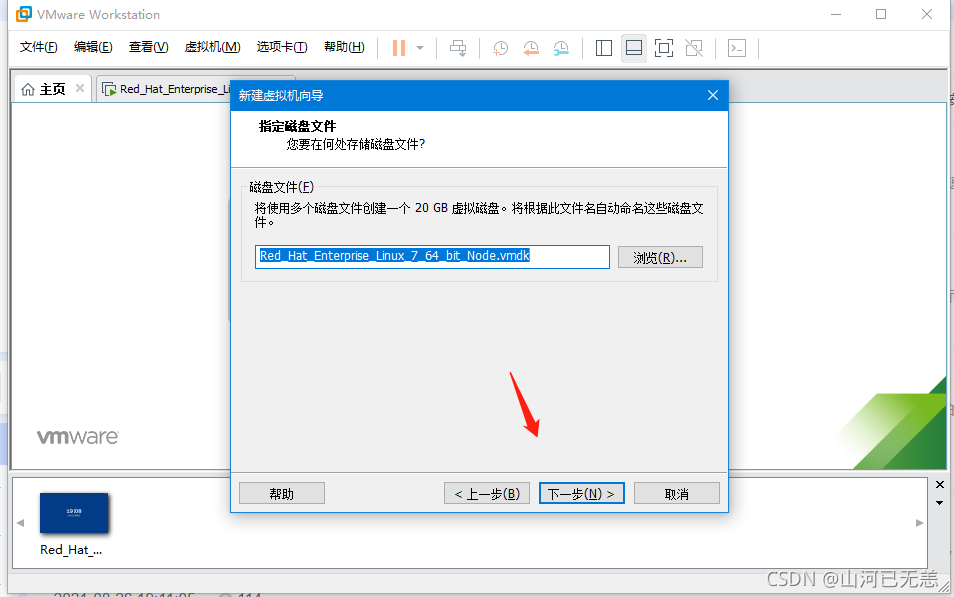

| Installation steps |
|---|

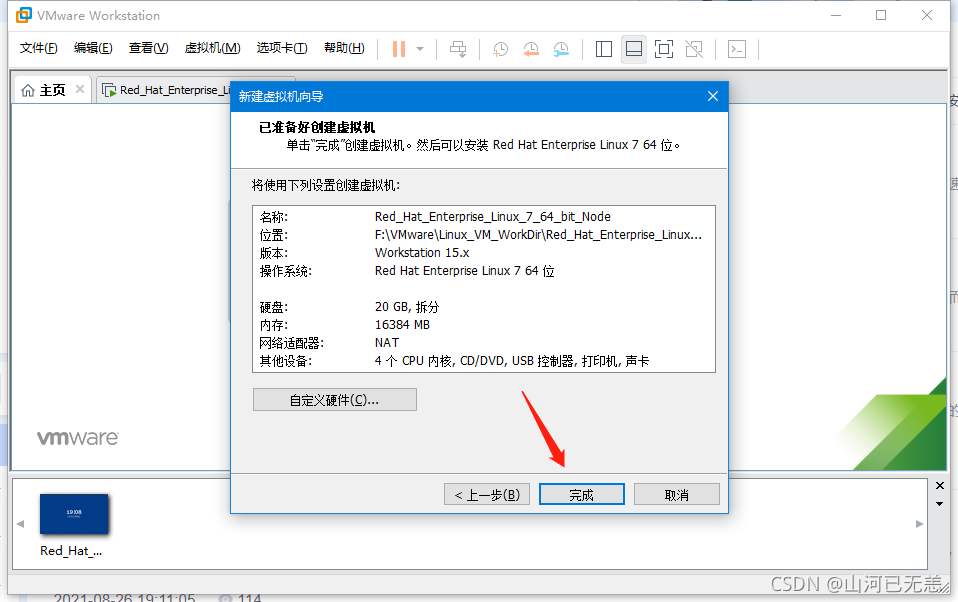

| Give the virtual machine a name and choose where it is stored. |

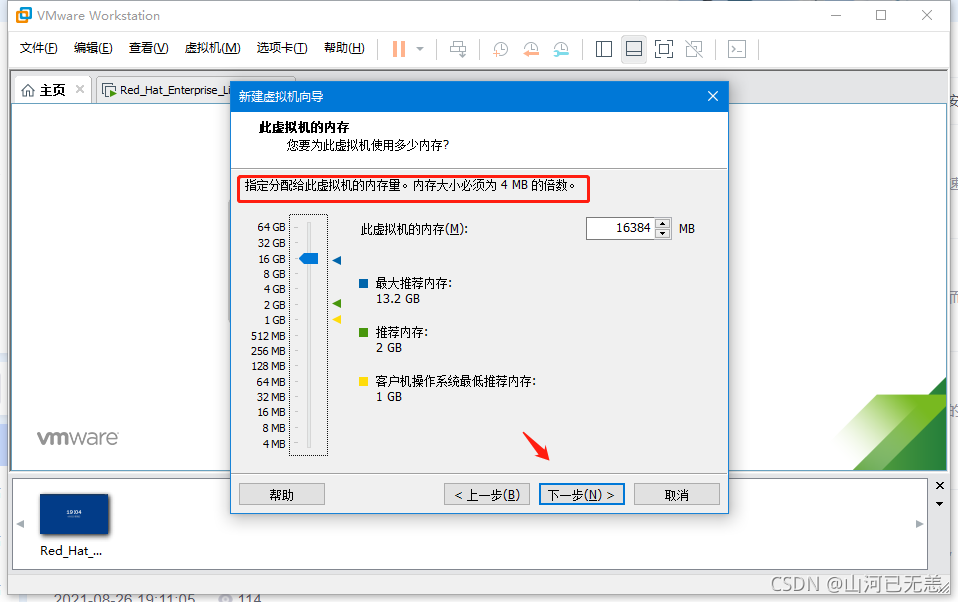

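| Set the memory according to your own machine: with 8 GB of RAM, 2 GB is suggested; with 16 GB, 4 GB; with 32 GB, 8 GB. |

| Put the disc image stored on your system into the virtual optical drive (use "Browse" to find it). |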

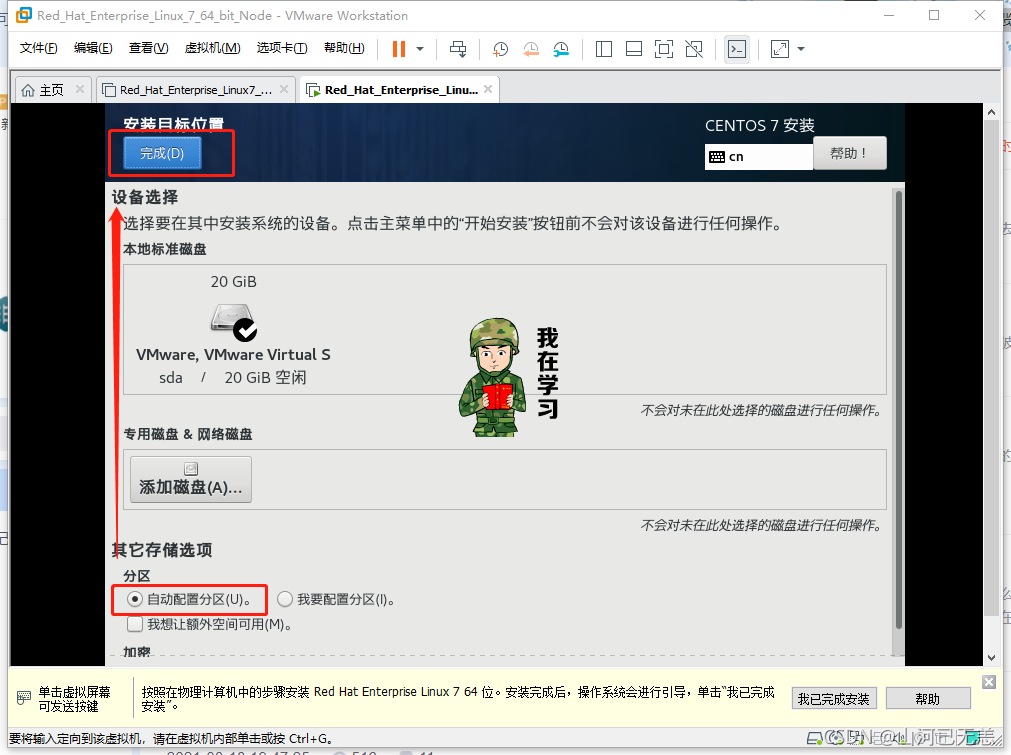

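| If the VM reports that the memory is too large and will not boot, reduce the memory accordingly. |

| Click inside the screen so the cursor enters the guest system, then use the up/down keys to select the first entry. |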

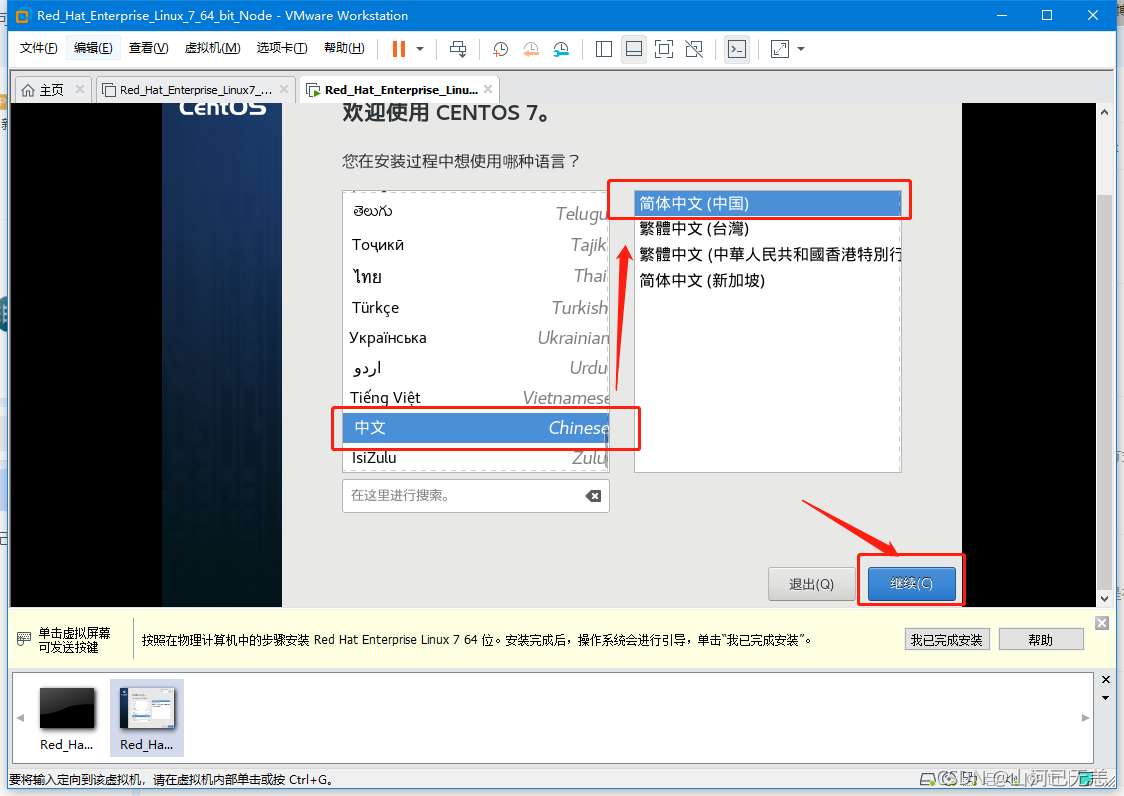

| Beginners are advised to choose "Simplified Chinese (China)" and then click "Continue". |

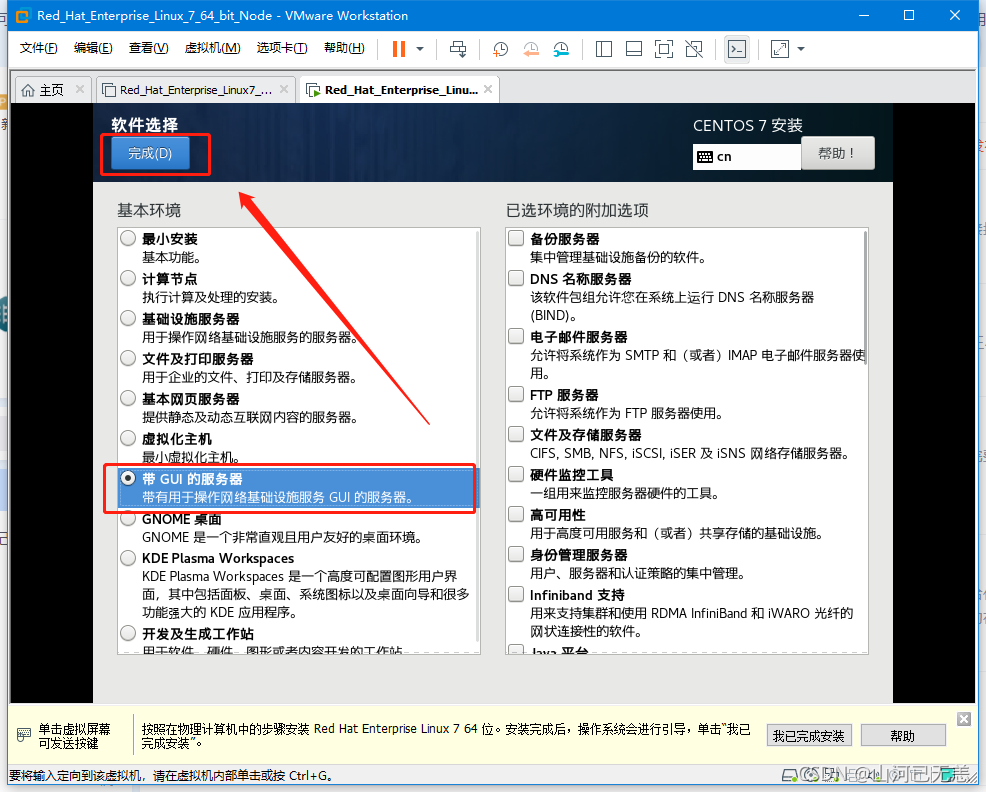

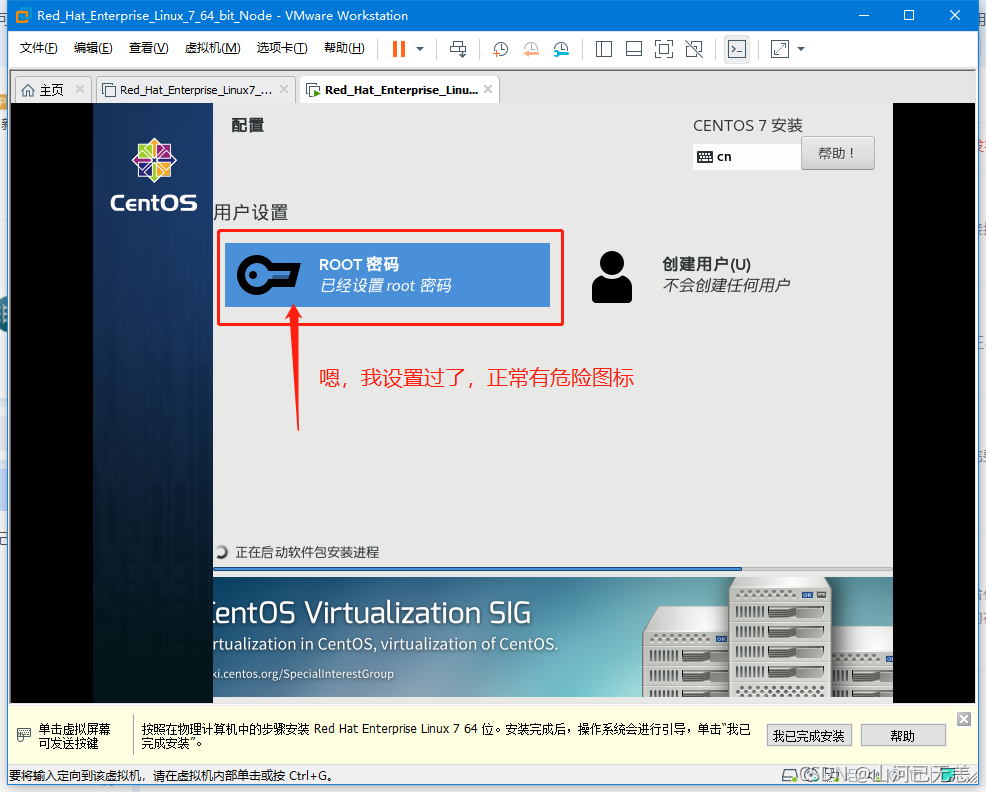

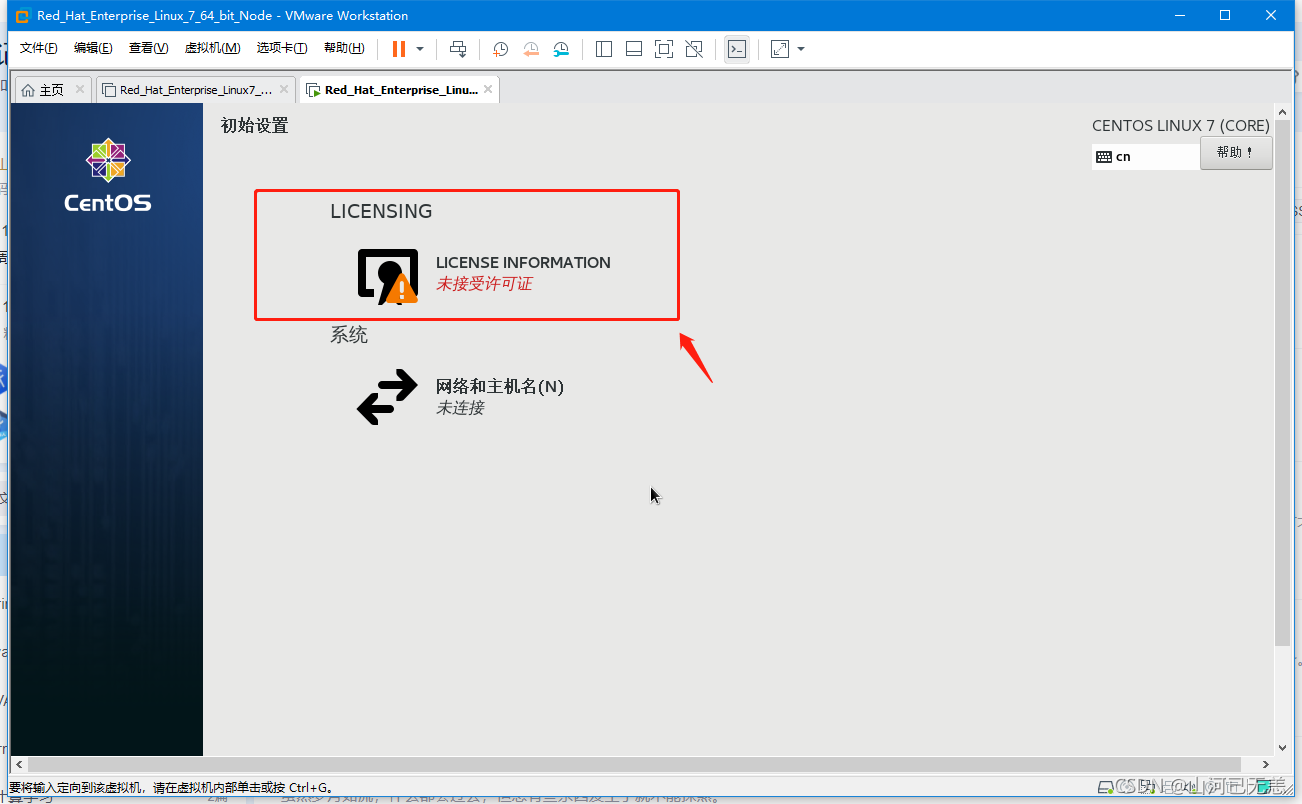

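| Check the "Installation Summary" screen and make sure every item marked with an exclamation mark has been completed, then click "Begin Installation" at the bottom right to start the actual installation. |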

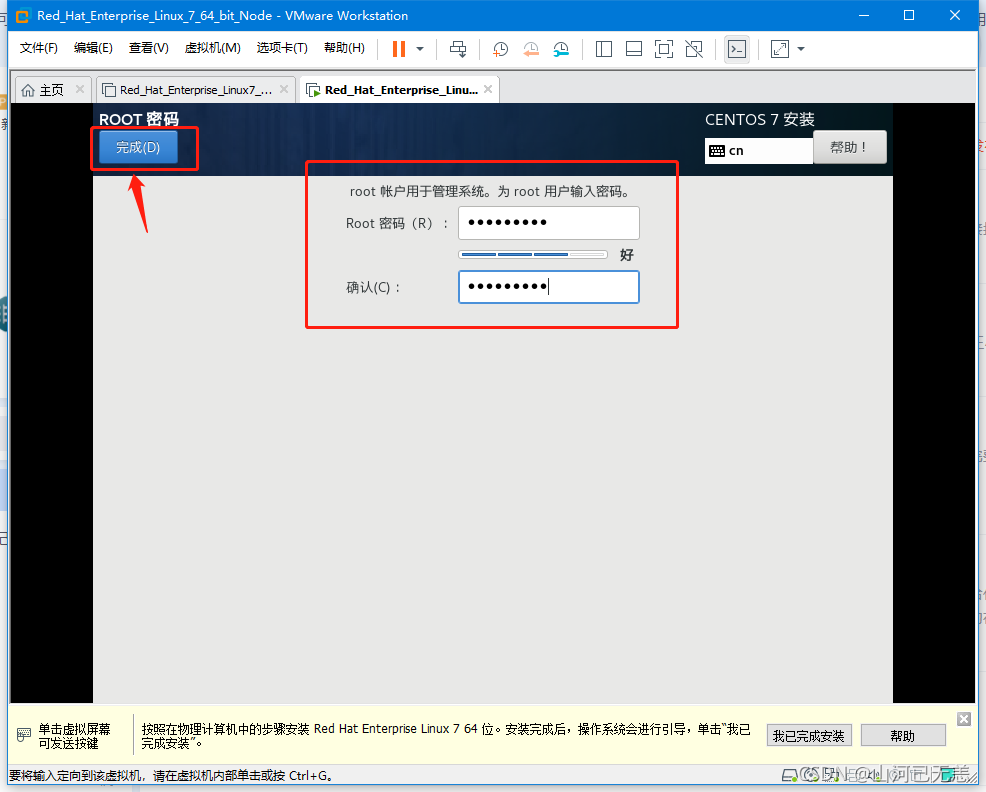

| If the password is too simple, you need to click "Done" twice. |

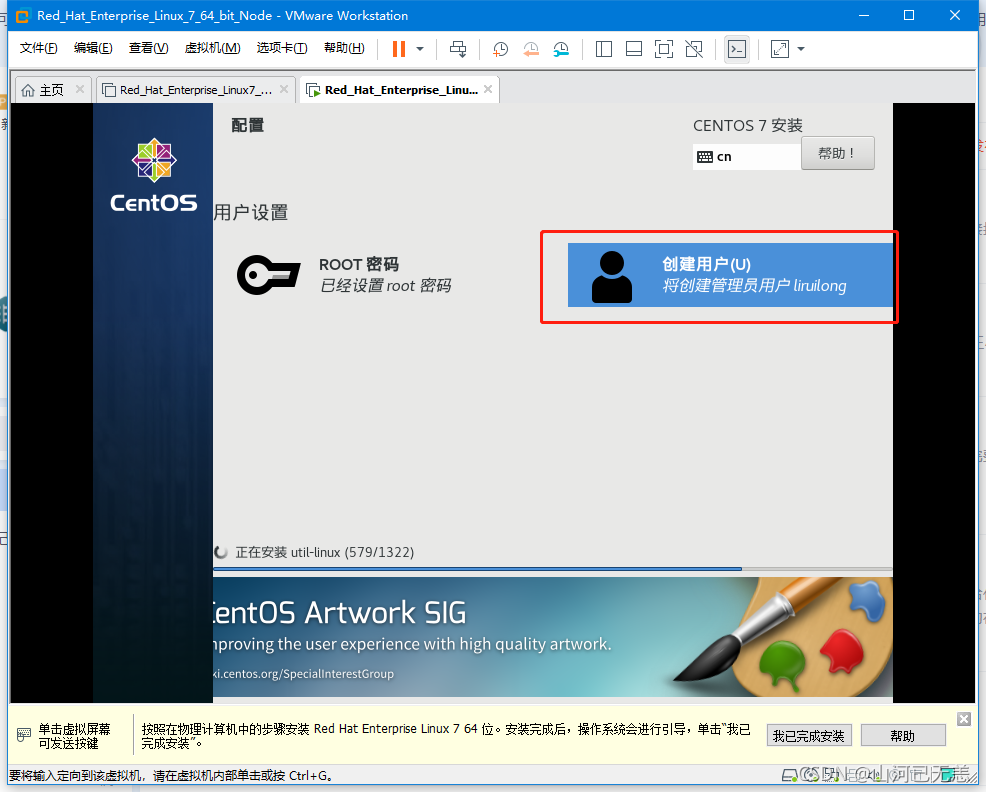

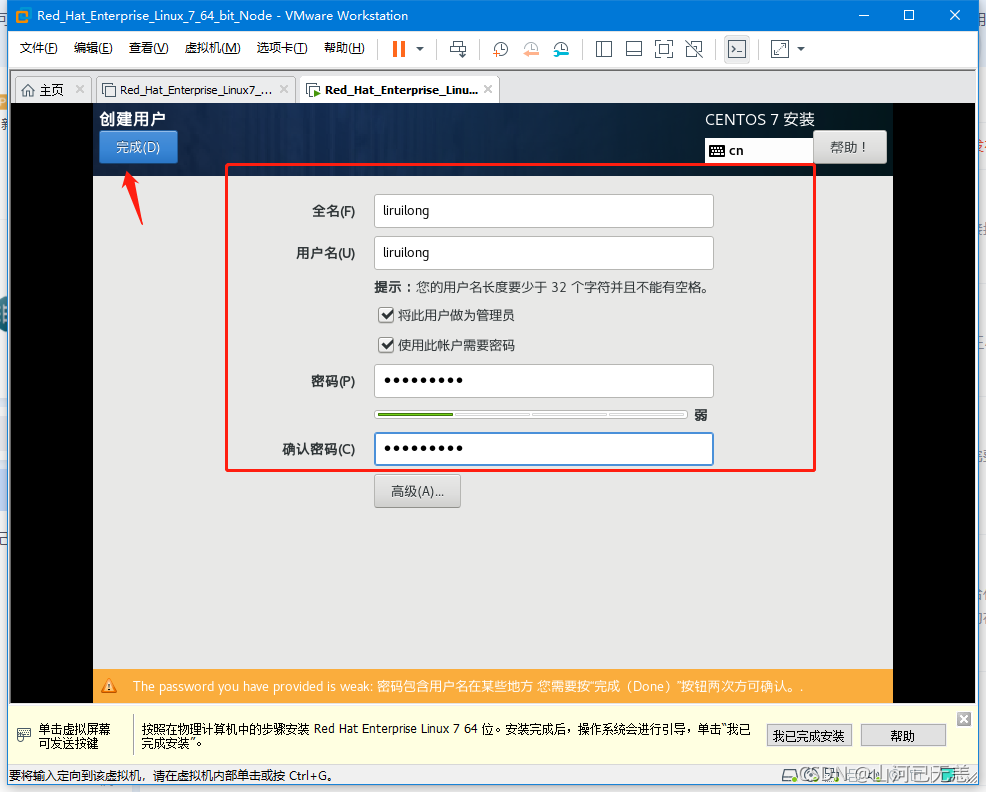

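| Create a user (user name and password are up to you). After filling in the form, click "Done" twice. |

| This takes a while, so you can do something else in the meantime. When installation finishes, a Reboot button appears; just reboot. |

| Boot the system. This takes some time, so be patient. |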

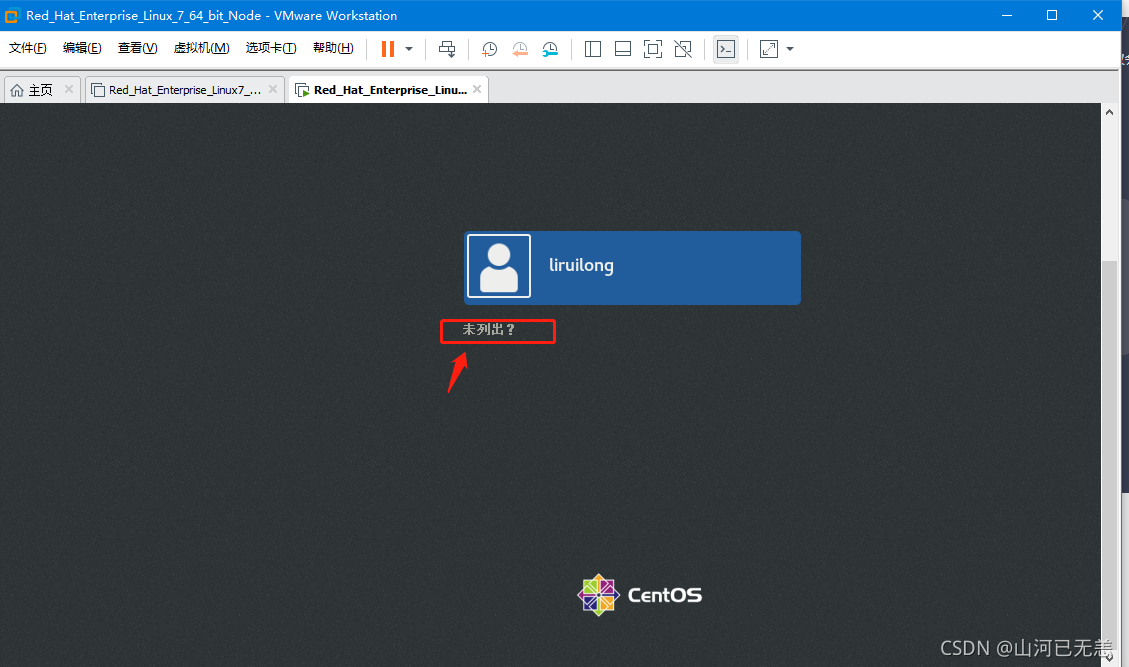

| Click "Not listed?" and log in as root. The guide pages that follow can simply be clicked through. |

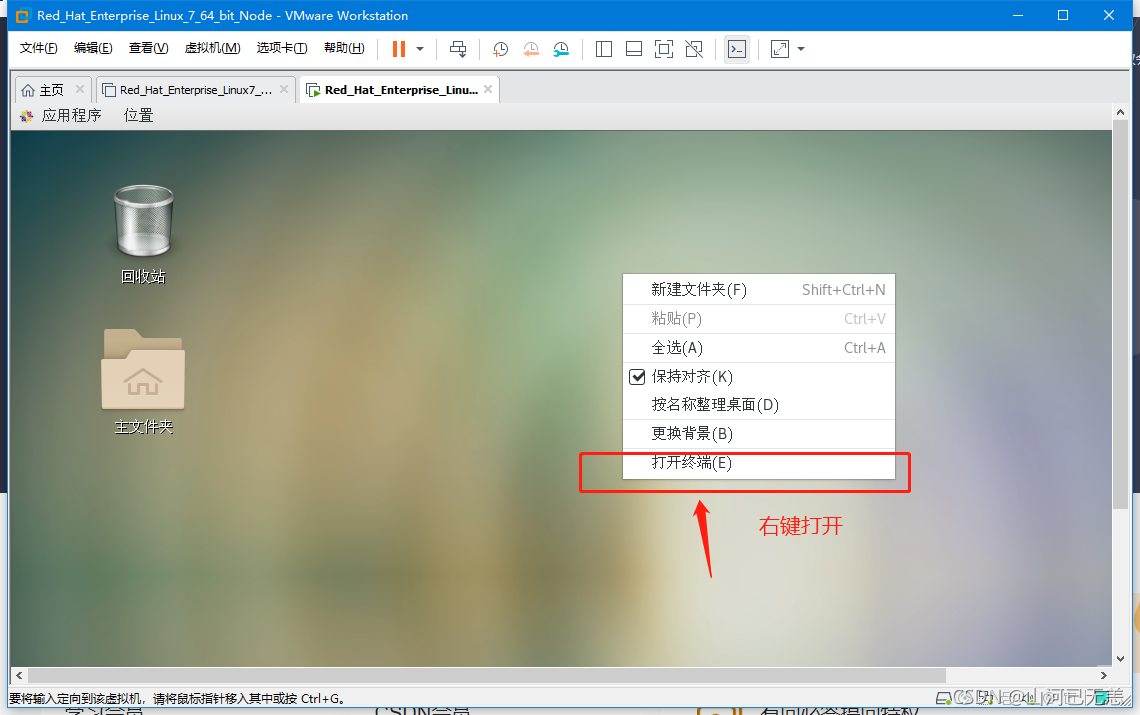

Next, tweak the command prompt so it looks a little nicer to work in. Type this directly: PS1="\[\033[1;32m\]┌──[\[\033[1;34m\]\u@\H\[\033[1;32m\]]-[\[\033[0;1m\]\w\[\033[1;32m\]] \n\[\033[1;32m\]└─\[\033[1;34m\]\$\[\033[0m\] " or put it in ~/.bashrc (vi ~/.bashrc).

| Network configuration steps |
|---|

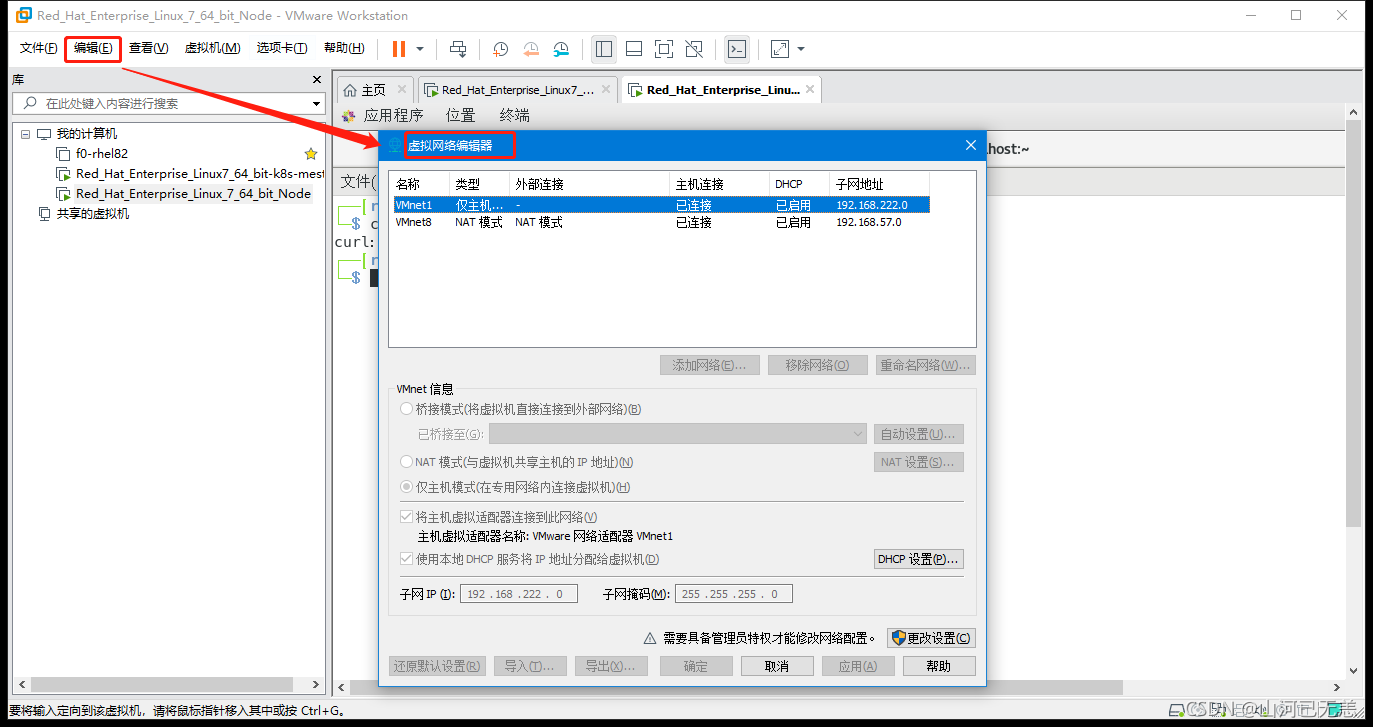

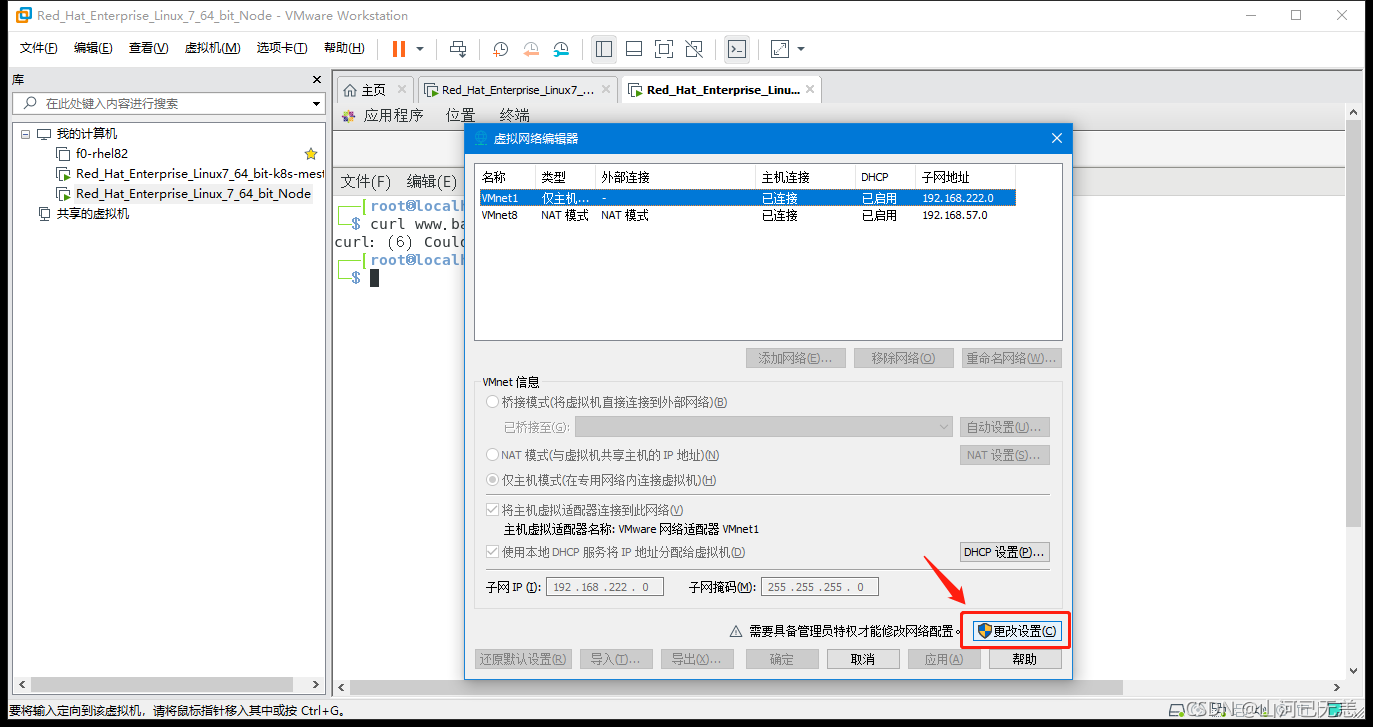

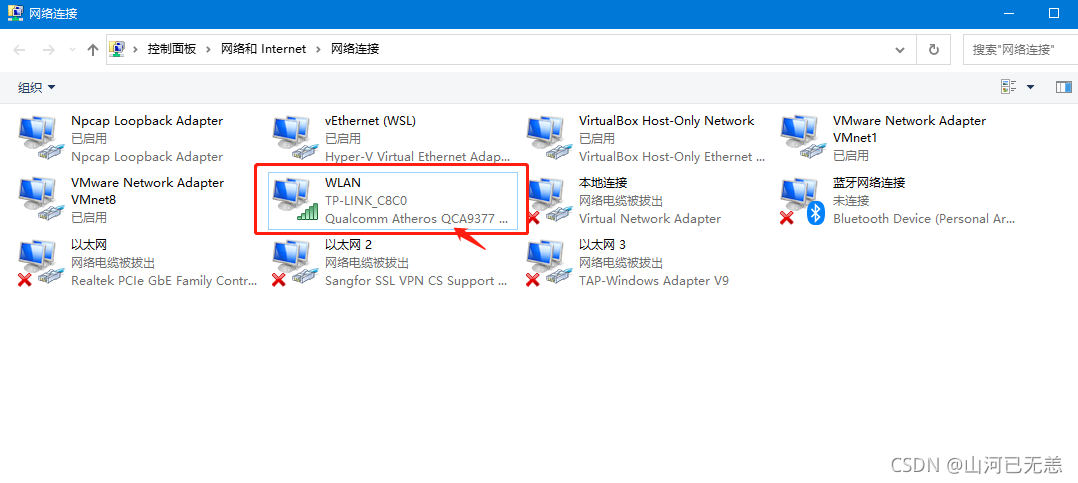

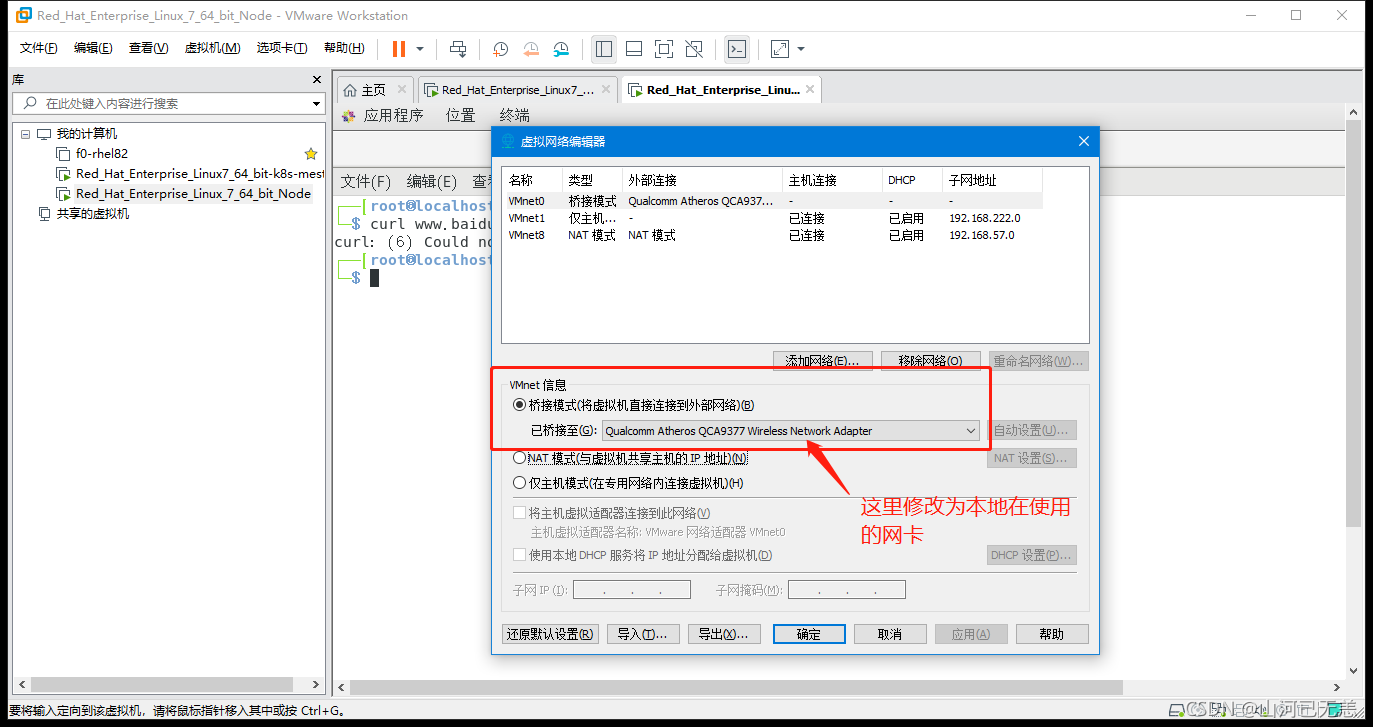

| In bridged mode you have to choose which network adapter to bridge to (the one actually used to get online), then confirm. |

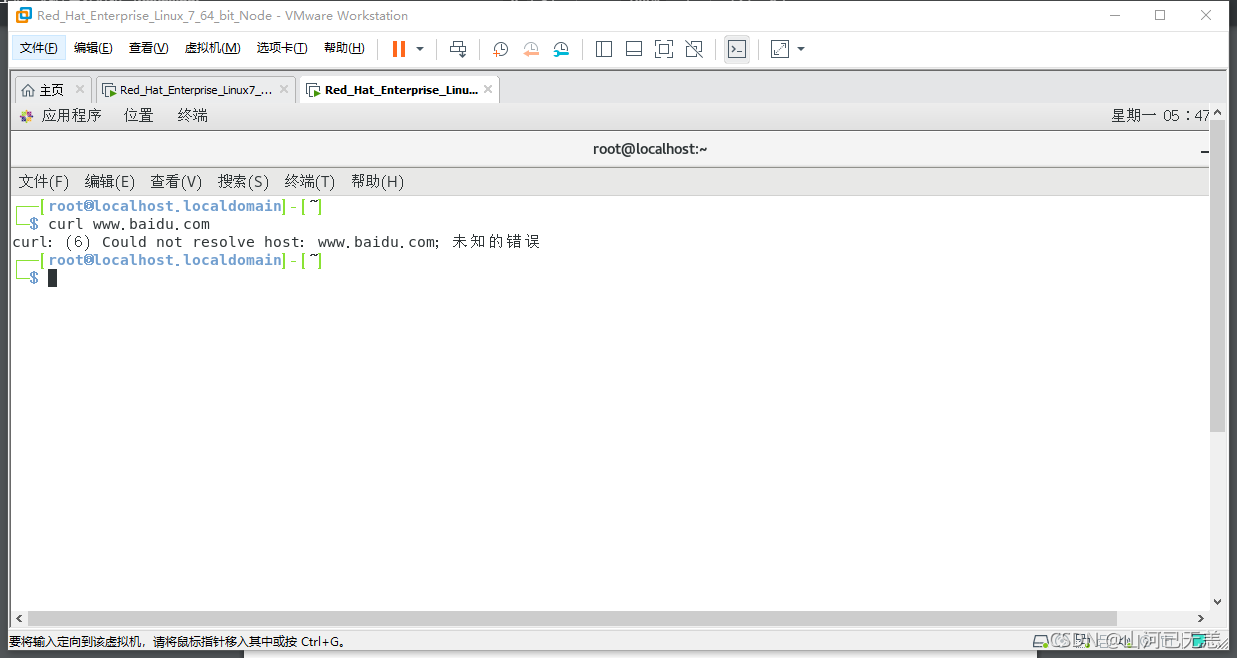

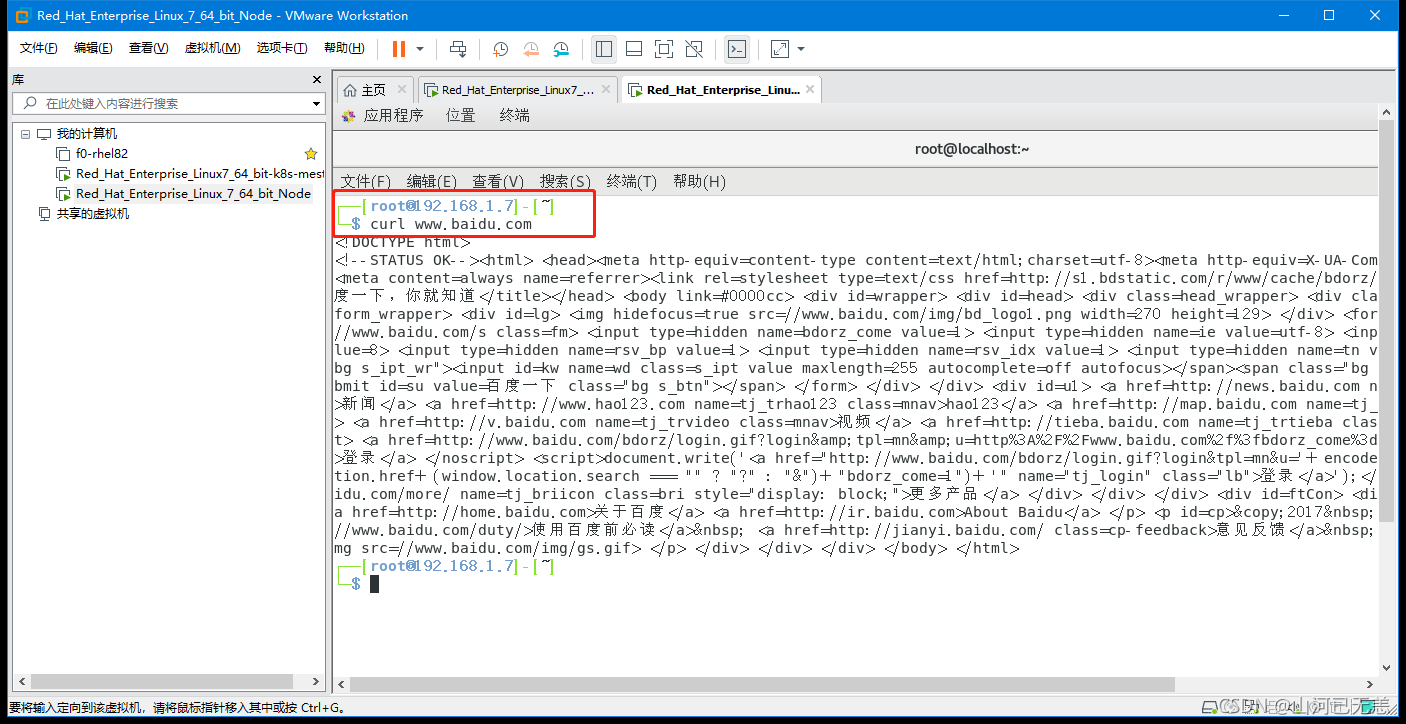

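| Configure the network interface for DHCP (automatic IP assignment); the commands follow. Note that if you switch to a different network, the IPs of all nodes change as well, because they are assigned dynamically, although they stay within one subnet. Host name resolution and passwordless SSH then stop working and have to be reconfigured. If you only ever connect to one network, this has no effect. |

nmcli connection modify 'ens33' ipv4.method auto connection.autoconnect yes   # switch the interface to DHCP (dynamic IP)

nmcli connection up 'ens33'

Configuring the network interface for DHCP (automatic IP assignment):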

┌──[root@localhost.localdomain]-[~]

└─$ nmcli connection modify 'ens33' ipv4.method auto connection.autoconnect yes

┌──[root@localhost.localdomain]-[~]

└─$ nmcli connection up 'ens33'

连接已成功激活(D-Bus 活动路径:/org/freedesktop/NetworkManager/ActiveConnection/4)

┌──[root@localhost.localdomain]-[~]

└─$ ifconfig | head -2

ens33: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.1.7 netmask 255.255.255.0 broadcast 192.168.1.255

┌──[root@localhost.localdomain]-[~]

└─$

┌──[root@192.168.1.7]-[~]

└─$ ifconfig

ens33: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.1.7 netmask 255.255.255.0 broadcast 192.168.1.255

inet6 fe80::8899:b0c7:4b50:73e0 prefixlen 64 scopeid 0x20<link>

inet6 240e:319:707:b800:2929:3ab2:f378:715a prefixlen 64 scopeid 0x0<global>

ether 00:0c:29:b6:a6:52 txqueuelen 1000 (Ethernet)

RX packets 535119 bytes 797946990 (760.9 MiB)

RX errors 0 dropped 96 overruns 0 frame 0

TX packets 59958 bytes 4119314 (3.9 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

inet6 ::1 prefixlen 128 scopeid 0x10<host>

loop txqueuelen 1000 (Local Loopback)

RX packets 616 bytes 53248 (52.0 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 616 bytes 53248 (52.0 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

virbr0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 192.168.122.1 netmask 255.255.255.0 broadcast 192.168.122.255

ether 52:54:00:2e:66:6d txqueuelen 1000 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

┌──[root@192.168.1.7]-[~]

└─$

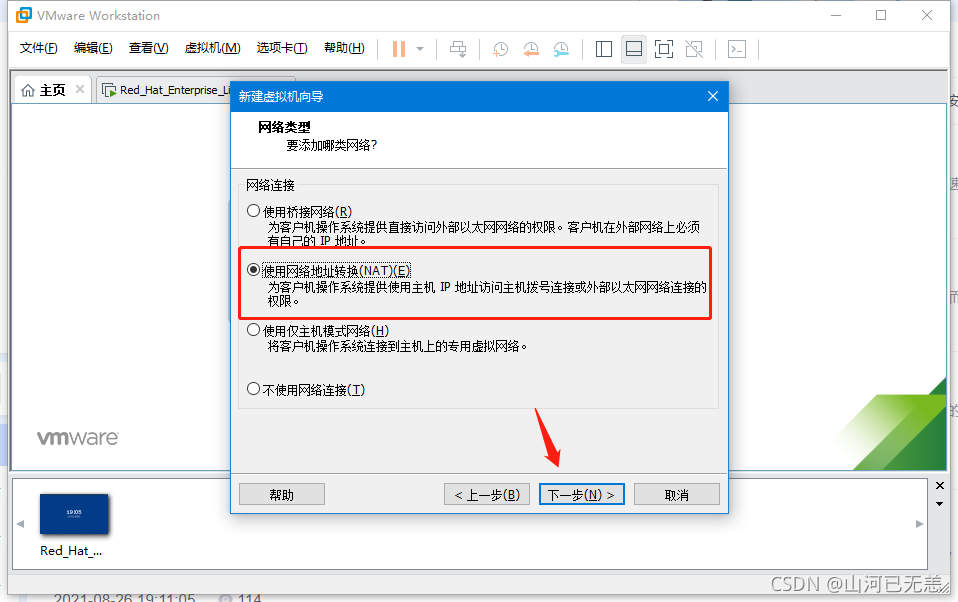

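If bridged networking is not convenient for you, NAT mode is an alternative: the VMs then sit behind a VMware virtual switch (VMnet8 is the default NAT network; VMnet1 is the host-only one), so you no longer have to worry about the IPs changing.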

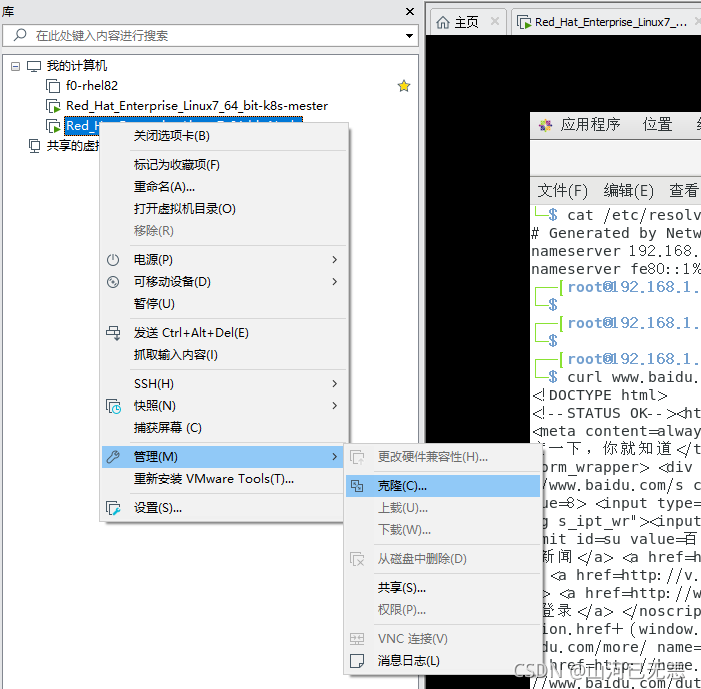

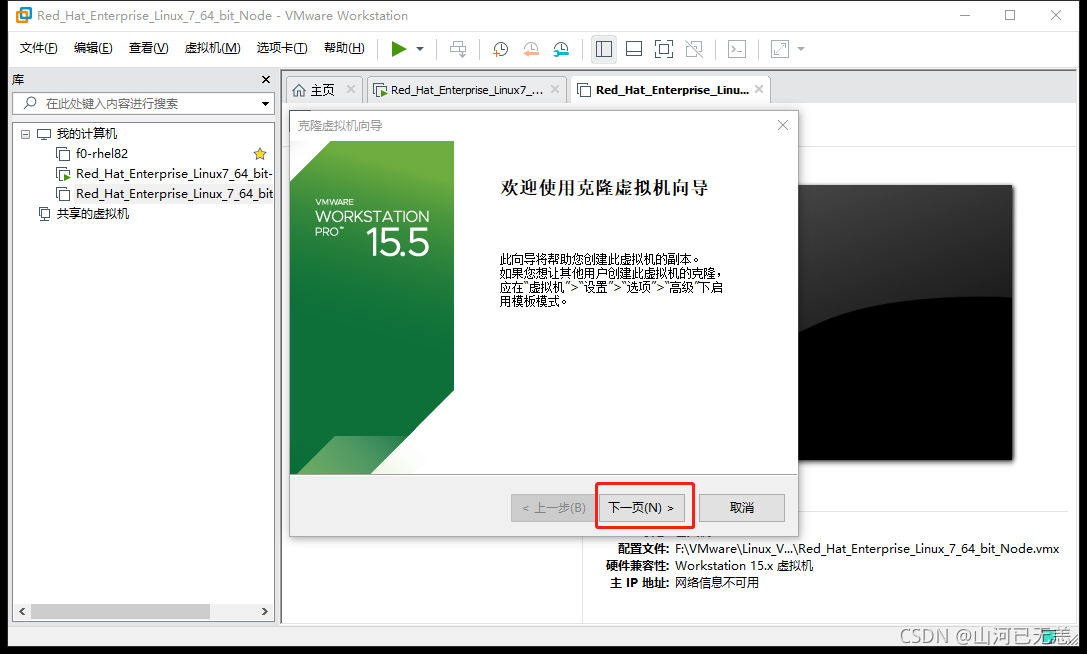

| Machine cloning steps |
|---|
| Shut down the virtual machine to be cloned. |

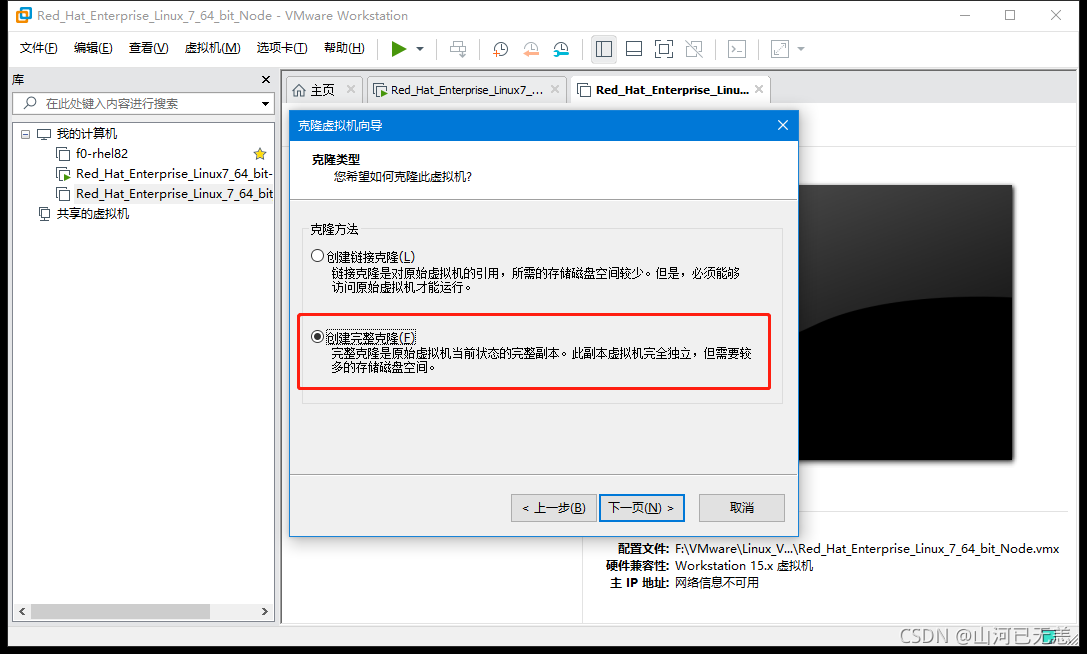

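| Difference between a linked clone and a full clone: |
| Linked clone: the clone takes up very little disk space, but the source virtual machine must remain usable, otherwise the clone cannot be used either. |
| Full clone: the new virtual machine is independent of the source; deleting the source does not affect the clone. |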

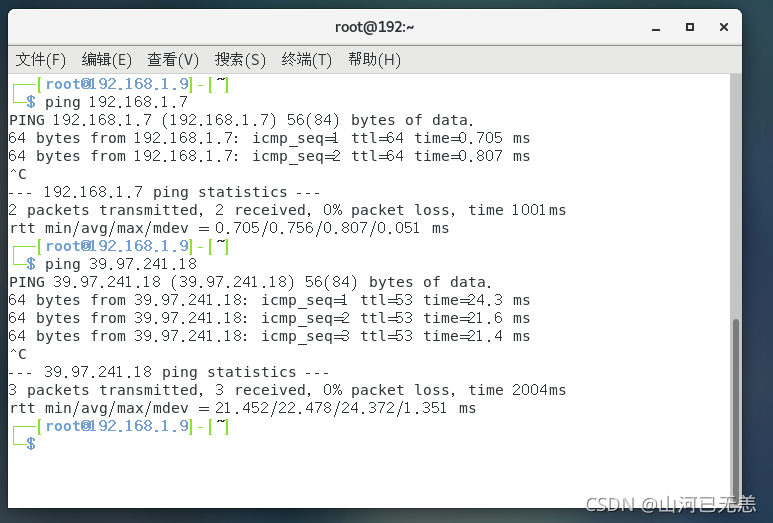

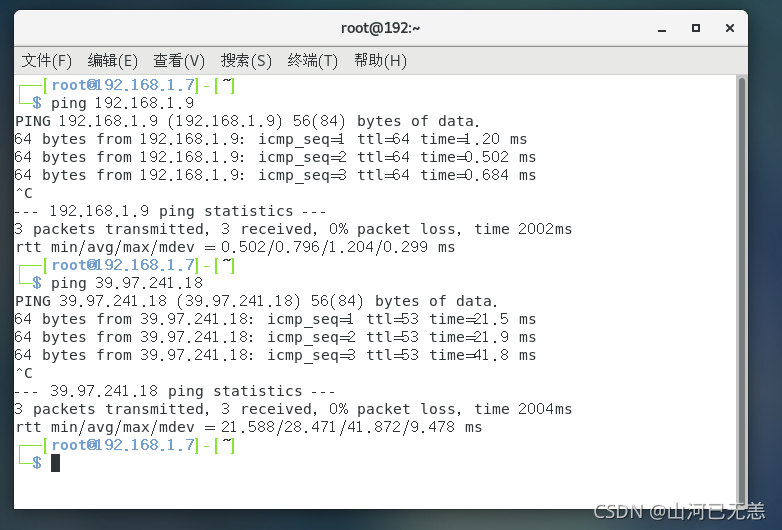

| Test it: the VM can reach the internet (39.97.241 is my Alibaba Cloud public IP), the physical machine, and the other node. |

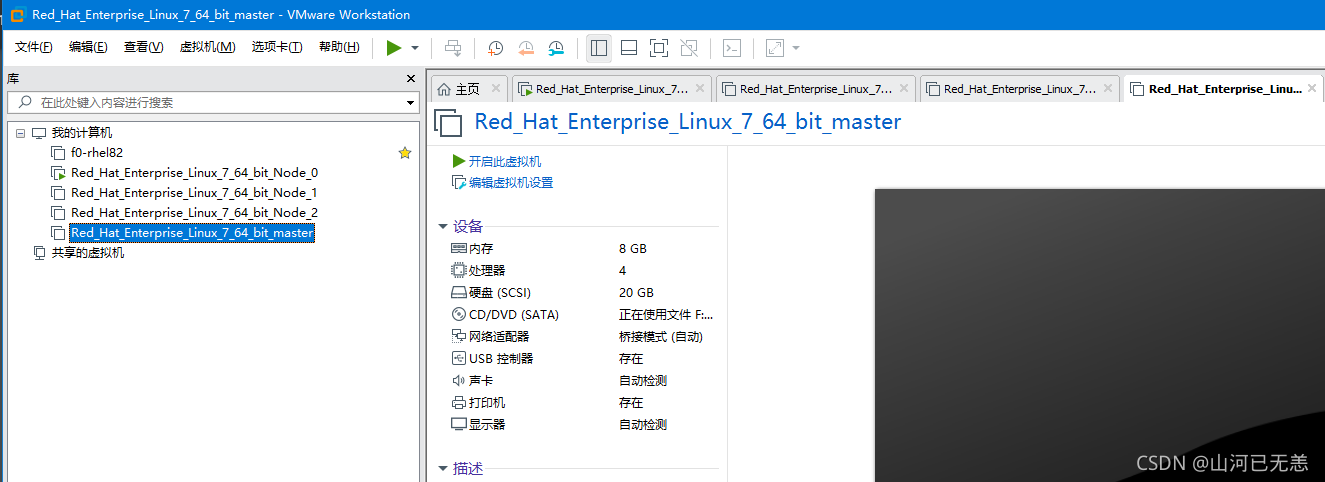

| Clone the remaining Node machine and the Master machine in the same way (not shown here). |
| While cloning the rest, if there is not enough memory at startup, shut the virtual machines down and adjust their memory. |

Remember to configure a static IP on each clone:

nmcli connection modify 'ens33' ipv4.method manual ipv4.addresses 192.168.1.9/24 ipv4.gateway 192.168.1.1 connection.autoconnect yes

nmcli connection up 'ens33'

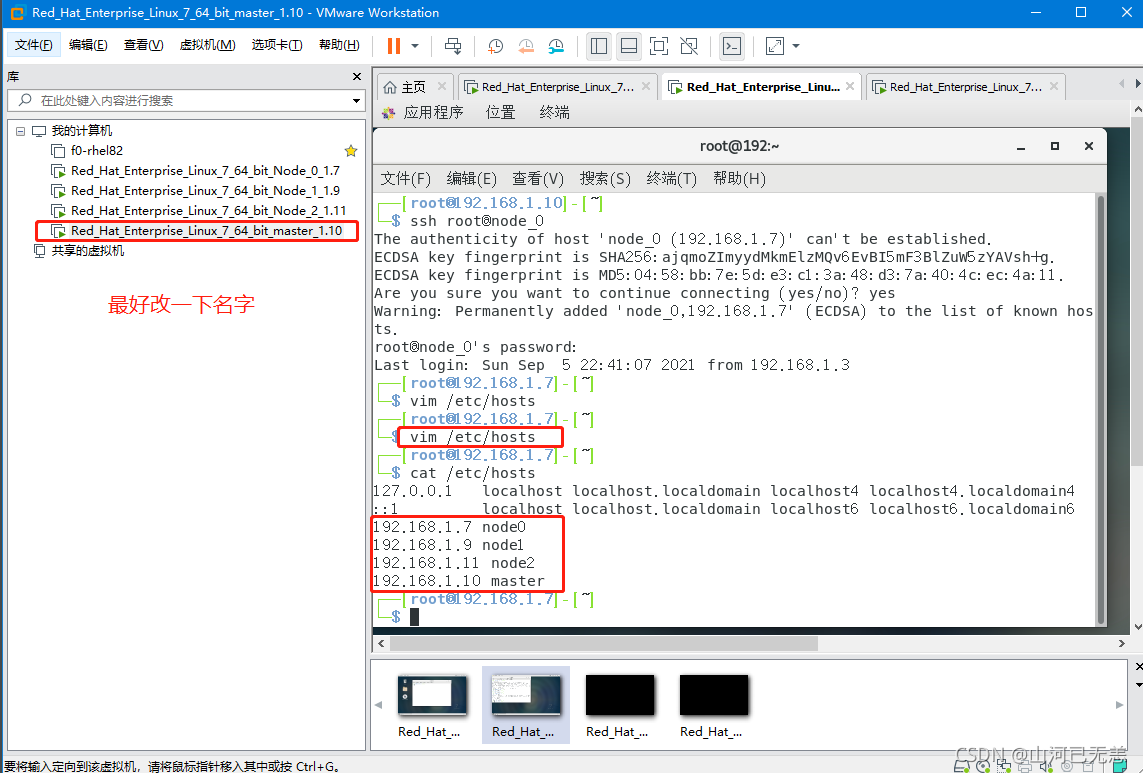

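| Name resolution (DNS) on the Master node |
|---|

Configure name resolution on the Master node in /etc/hosts so the machines can be reached by host name. For convenience you can also change the host name of each node machine.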

┌──[root@192.168.1.10]-[~]

└─$ vim /etc/hosts

┌──[root@192.168.1.10]-[~]

└─$ cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.1.7 node0

192.168.1.9 node1

192.168.1.11 node2

192.168.1.10 master

┌──[root@192.168.1.10]-[~]

└─$
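Hand-editing /etc/hosts with vim works, but the same entries can also be added by a small script that is safe to re-run. The following is a minimal sketch, not what was run in this post: `add_host` and `HOSTS_FILE` are made-up names, and by default the script writes to a scratch file so it can be tried without root.

```shell
#!/usr/bin/env bash
# Append "IP hostname" entries to a hosts file only when the host name is not there yet.
# HOSTS_FILE defaults to a scratch file; set HOSTS_FILE=/etc/hosts (as root) to apply it for real.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

add_host() {
    local ip="$1" name="$2"
    # Look for the name as a whole word after whitespace, at end of line or before more whitespace
    if ! grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$HOSTS_FILE"; then
        printf '%s %s\n' "$ip" "$name" >> "$HOSTS_FILE"
    fi
}

add_host 192.168.1.7  node0
add_host 192.168.1.9  node1
add_host 192.168.1.11 node2
add_host 192.168.1.10 master
```

Running the script a second time leaves the file unchanged, which matters once the same step is repeated on every node.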

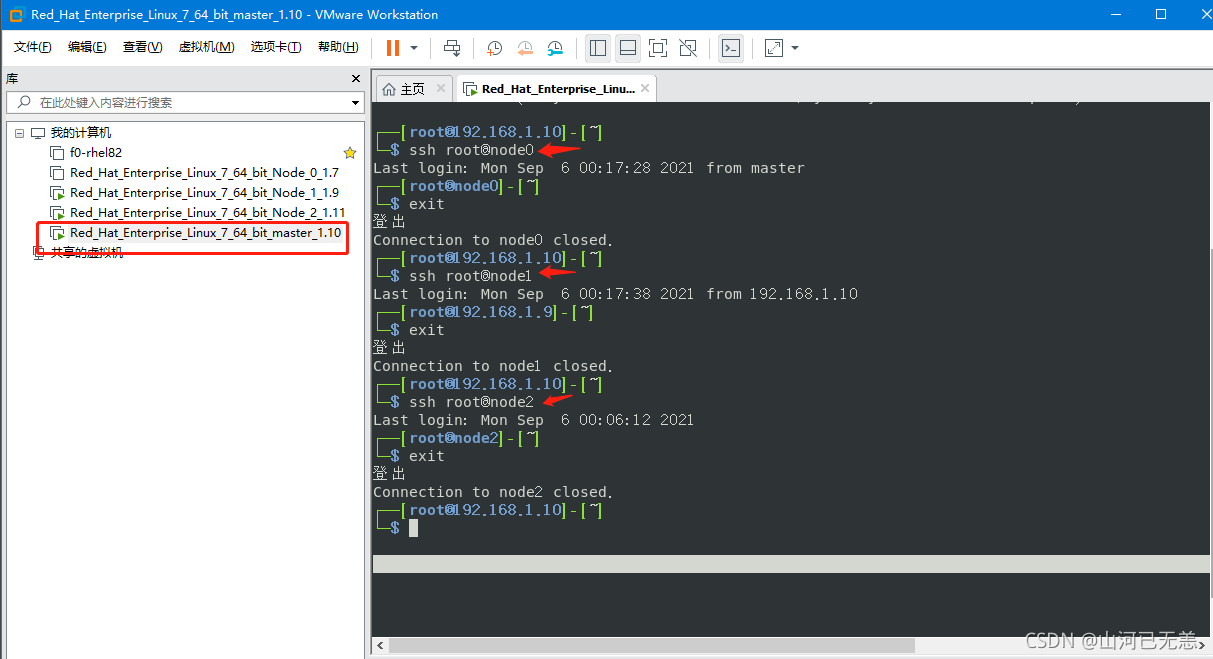

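| Passwordless SSH login from the Master node |
|---|
| Generate a key pair with ssh-keygen, pressing Enter at every prompt. |
| Distribute the public key with ssh-copy-id. |
| Test passwordless login. The host name of Node1 was not changed here, so its prompt shows the IP address. |

Generate the key pair with ssh-keygen, pressing Enter at every prompt: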

┌──[root@192.168.1.10]-[~]

└─$ ssh

usage: ssh [-1246AaCfGgKkMNnqsTtVvXxYy] [-b bind_address] [-c cipher_spec]

[-D [bind_address:]port] [-E log_file] [-e escape_char]

[-F configfile] [-I pkcs11] [-i identity_file]

[-J [user@]host[:port]] [-L address] [-l login_name] [-m mac_spec]

[-O ctl_cmd] [-o option] [-p port] [-Q query_option] [-R address]

[-S ctl_path] [-W host:port] [-w local_tun[:remote_tun]]

[user@]hostname [command]

┌──[root@192.168.1.10]-[~]

└─$ ls -ls ~/.ssh/

ls: 无法访问/root/.ssh/: 没有那个文件或目录

┌──[root@192.168.1.10]-[~]

└─$ ssh-keygen

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Created directory '/root/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:qHboVj/WfMTYCDFDZ5ISf3wEcmfsz0EXJH19U6SnxbY root@node0

The key's randomart image is:

+---[RSA 2048]----+

| .o+.=o+.o+*|

| ..=B +. o==|

| ..+o.....O|

| ... .. .=.|

| . S. = o.E |

| o. o + o |

| +... o . |

| o.. + o . |

| .. . . . |

+----[SHA256]-----+

Passwordless SSH configuration: distribute the public key with ssh-copy-id

ssh-copy-id root@node0

ssh-copy-id root@node1

ssh-copy-id root@node2

Passwordless login test

ssh root@node0

ssh root@node1

ssh root@node2
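With more than a couple of nodes, the three ssh-copy-id calls are easier to keep in a loop. This is only a sketch: `copy_keys`, `NODES`, and `DRY_RUN` are names invented here, and with the default `DRY_RUN=1` the script just prints the commands instead of contacting any host.

```shell
#!/usr/bin/env bash
# Distribute the current user's public key to every node in one loop.
# DRY_RUN=1 (the default here) only prints the commands; set DRY_RUN=0 to execute them.
DRY_RUN="${DRY_RUN:-1}"
NODES="node0 node1 node2"

copy_keys() {
    local node
    for node in $NODES; do
        if [ "$DRY_RUN" = "1" ]; then
            echo "ssh-copy-id root@${node}"
        else
            ssh-copy-id "root@${node}"
        fi
    done
}

copy_keys
```

Each real ssh-copy-id call still asks for the remote password once; after that, `ssh root@node1` should log in without a prompt.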

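At this point the Linux environment is ready, and anyone who wants to learn Linux can start from here. These are the notes I put together while learning Linux, with some hands-on exercises; take a look if you are interested.

For convenience we operate directly from the physical machine, since ssh is already configured. My machine does not have enough memory, so I can only run three VMs.

| Host name | IP | Role | Notes |
|---|---|---|---|
| master | 192.168.1.10 | controller | control node |
| node1 | 192.168.1.9 | node | managed node |
| node2 | 192.168.1.11 | node | managed node |

## 1. SSH to the control node (192.168.1.10), configure the yum repositories, and install ansible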

┌──(liruilong㉿Liruilong)-[/mnt/e/docker]

└─$ ssh root@192.168.1.10

Last login: Sat Sep 11 00:23:10 2021

┌──[root@master]-[~]

└─$ ls

anaconda-ks.cfg initial-setup-ks.cfg 下载 公共 图片 文档 桌面 模板 视频 音乐

┌──[root@master]-[~]

└─$ cd /etc/yum.repos.d/

┌──[root@master]-[/etc/yum.repos.d]

└─$ ls

CentOS-Base.repo CentOS-CR.repo CentOS-Debuginfo.repo CentOS-fasttrack.repo CentOS-Media.repo CentOS-Sources.repo CentOS-Vault.repo CentOS-x86_64-kernel.repo

┌──[root@master]-[/etc/yum.repos.d]

└─$ mv /etc/yum.repos.d/CentOS-Base.repo /etc/yum.repos.d/CentOS-Base.repo.backup

┌──[root@master]-[/etc/yum.repos.d]

└─$ wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

Search for the ansible package

┌──[root@master]-[/etc/yum.repos.d]

└─$ yum list | grep ansible

ansible-collection-microsoft-sql.noarch 1.1.0-1.el7_9 extras

centos-release-ansible-27.noarch 1-1.el7 extras

centos-release-ansible-28.noarch 1-1.el7 extras

centos-release-ansible-29.noarch 1-1.el7 extras

centos-release-ansible26.noarch 1-3.el7.centos extras

┌──[root@master]-[/etc/yum.repos.d]

The Alibaba Cloud base yum mirror does not carry the ansible package itself, so we install it from EPEL

┌──[root@master]-[/etc/yum.repos.d]

└─$ wget -O /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo

--2021-09-11 00:40:11-- http://mirrors.aliyun.com/repo/epel-7.repo

Resolving mirrors.aliyun.com (mirrors.aliyun.com)... 1.180.13.237, 1.180.13.236, 1.180.13.240, ...

Connecting to mirrors.aliyun.com (mirrors.aliyun.com)|1.180.13.237|:80... connected.

HTTP request sent, awaiting response... 200 OK

Length: 664 [application/octet-stream]

Saving to: ‘/etc/yum.repos.d/epel.repo’

100%[=======================================================================================================================================================================>] 664 --.-K/s in 0s

2021-09-11 00:40:12 (91.9 MB/s) - ‘/etc/yum.repos.d/epel.repo’ saved [664/664]

┌──[root@master]-[/etc/yum.repos.d]

└─$ yum install -y epel-release

Search for the ansible package again and install it

┌──[root@master]-[/etc/yum.repos.d]

└─$ yum list|grep ansible

Existing lock /var/run/yum.pid: another copy is running as pid 12522.

Another app is currently holding the yum lock; waiting for it to exit...

The other application is: PackageKit

Memory : 28 M RSS (373 MB VSZ)

Started: Sat Sep 11 00:40:41 2021 - 00:06 ago

State : Sleeping, pid: 12522

ansible.noarch 2.9.25-1.el7 epel

ansible-collection-microsoft-sql.noarch 1.1.0-1.el7_9 extras

ansible-doc.noarch 2.9.25-1.el7 epel

ansible-inventory-grapher.noarch 2.4.4-1.el7 epel

ansible-lint.noarch 3.5.1-1.el7 epel

ansible-openstack-modules.noarch 0-20140902git79d751a.el7 epel

ansible-python3.noarch 2.9.25-1.el7 epel

ansible-review.noarch 0.13.4-1.el7 epel

ansible-test.noarch 2.9.25-1.el7 epel

centos-release-ansible-27.noarch 1-1.el7 extras

centos-release-ansible-28.noarch 1-1.el7 extras

centos-release-ansible-29.noarch 1-1.el7 extras

centos-release-ansible26.noarch 1-3.el7.centos extras

kubernetes-ansible.noarch 0.6.0-0.1.gitd65ebd5.el7 epel

python2-ansible-runner.noarch 1.0.1-1.el7 epel

python2-ansible-tower-cli.noarch 3.3.9-1.el7 epel

vim-ansible.noarch 3.2-1.el7 epel

┌──[root@master]-[/etc/yum.repos.d]

└─$ yum install -y ansible

┌──[root@master]-[/etc/yum.repos.d]

└─$ ansible --version

ansible 2.9.25

config file = /etc/ansible/ansible.cfg

configured module search path = [u'/root/.ansible/plugins/modules', u'/usr/share/ansible/plugins/modules']

ansible python module location = /usr/lib/python2.7/site-packages/ansible

executable location = /usr/bin/ansible

python version = 2.7.5 (default, Oct 30 2018, 23:45:53) [GCC 4.8.5 20150623 (Red Hat 4.8.5-36)]

┌──[root@master]-[/etc/yum.repos.d]

└─$

Check the host inventory

┌──[root@master]-[/etc/yum.repos.d]

└─$ ansible 127.0.0.1 --list-hosts

hosts (1):

127.0.0.1

┌──[root@master]-[/etc/yum.repos.d]

Here we use the regular account liruilong, the user created during installation. In production a dedicated user is configured instead of using root.

┌──[root@master]-[/home/liruilong]

└─$ su liruilong

[liruilong@master ~]$ pwd

/home/liruilong

[liruilong@master ~]$ mkdir ansible;cd ansible;vim ansible.cfg

[liruilong@master ansible]$ cat ansible.cfg

[defaults]

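# Inventory file: the list of hosts to manage

inventory=inventory

# User name for connecting to the managed hosts

remote_user=liruilong

# Roles directory

roles_path=roles

# Privilege escalation settings (become root through sudo)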

[privilege_escalation]

become=True

become_method=sudo

become_user=root

become_ask_pass=False

[liruilong@master ansible]$

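The managed-host list (inventory) can contain domain names, IPs, groups ([group name]), and groups of groups ([group name:children]); user names and passwords can also be set explicitly.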

[liruilong@master ansible]$ vim inventory

[liruilong@master ansible]$ cat inventory

[nodes]

node1

node2

[liruilong@master ansible]$ ansible all --list-hosts

hosts (2):

node1

node2

[liruilong@master ansible]$ ansible nodes --list-hosts

hosts (2):

node1

node2

[liruilong@master ansible]$ ls

ansible.cfg inventory

[liruilong@master ansible]$
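The inventory above only uses a plain group. To show what the group-of-groups form mentioned earlier looks like, here is a small sketch that writes an example inventory with a here-document. The `masters` and `cluster` group names and the `INVENTORY` variable are illustrative assumptions, not part of the setup in this post, and the file goes to a scratch location by default.

```shell
#!/usr/bin/env bash
# Write an example INI inventory that contains two groups and one parent group.
# INVENTORY defaults to a scratch file so the real ./inventory is left untouched.
INVENTORY="${INVENTORY:-$(mktemp)}"

cat > "$INVENTORY" <<'EOF'
[masters]
master

[nodes]
node1
node2

# A group of groups: "cluster" contains every host in masters and nodes
[cluster:children]
masters
nodes
EOF

echo "inventory written to $INVENTORY"
```

With such a file, `ansible cluster -i <file> --list-hosts` would be expected to list all three hosts, while `ansible nodes` keeps addressing only the two compute nodes.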

On the master node, as the liruilong user, set up passwordless ssh to each of the three nodes in turn

[liruilong@master ansible]$ ssh-keygen

Generating public/private rsa key pair.

Enter file in which to save the key (/home/liruilong/.ssh/id_rsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/liruilong/.ssh/id_rsa.

Your public key has been saved in /home/liruilong/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:cJ+SHgfMk00X99oCwEVPi1Rjoep7Agfz8DTjvtQv0T0 liruilong@master

The key's randomart image is:

+---[RSA 2048]----+

| .oo*oB. |

| o +.+ B + |

| . B . + o .|

| o+=+o . o |

| SO=o .o..|

| ..==.. .E.|

| .+o .. .|

| .o.o. |

| o+ .. |

+----[SHA256]-----+

[liruilong@master ansible]$ ssh-copy-id node1

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/home/liruilong/.ssh/id_rsa.pub"

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

liruilong@node1's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'node1'"

and check to make sure that only the key(s) you wanted were added.

node2 and master need the same configuration

[liruilong@master ansible]$ ssh-copy-id node2

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/home/liruilong/.ssh/id_rsa.pub"

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

liruilong@node2's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'node2'"

and check to make sure that only the key(s) you wanted were added.

[liruilong@master ansible]$

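There was one problem here: I had configured passwordless sudo on my machines, but it did not take effect the first time and a password was still required. After entering it once it was no longer needed, yet ansible still failed. It turned out that creating an authorization file named after the regular user under /etc/sudoers.d fixes it. I do not know the exact cause.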

node1

┌──[root@node1]-[~]

└─$ visudo

┌──[root@node1]-[~]

└─$ cat /etc/sudoers | grep liruilong

liruilong ALL=(ALL) NOPASSWD:ALL

┌──[root@node1]-[/etc/sudoers.d]

└─$ cd /etc/sudoers.d/

┌──[root@node1]-[/etc/sudoers.d]

└─$ vim liruilong

┌──[root@node1]-[/etc/sudoers.d]

└─$ cat liruilong

liruilong ALL=(ALL) NOPASSWD:ALL

┌──[root@node1]-[/etc/sudoers.d]

└─$

┌──[root@node2]-[~]

└─$ vim /etc/sudoers.d/liruilong

Configure node2 and master in the same way
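One possible explanation for the sudo behaviour described earlier, offered as a guess rather than a diagnosis: sudoers applies the last matching rule, so a later group rule in /etc/sudoers that requires a password can override an earlier NOPASSWD line, while files in /etc/sudoers.d are typically included at the end. The sketch below generates the drop-in line and syntax-checks it with `visudo -c -f` before anything is installed; `sudoers_line` and `SUDO_USER_NAME` are hypothetical names.

```shell
#!/usr/bin/env bash
# Build a sudoers drop-in for one user and check its syntax before installing it.
SUDO_USER_NAME="${SUDO_USER_NAME:-liruilong}"

sudoers_line() {
    # Same rule as in the post: the user may run any command as any user without a password
    printf '%s ALL=(ALL) NOPASSWD:ALL\n' "$1"
}

tmp="$(mktemp)"
sudoers_line "$SUDO_USER_NAME" > "$tmp"

# visudo -c -f only checks the given file; it does not modify the live configuration
if command -v visudo >/dev/null 2>&1 && visudo -c -f "$tmp" >/dev/null 2>&1; then
    echo "syntax ok; as root, install with: install -m 0440 $tmp /etc/sudoers.d/$SUDO_USER_NAME"
else
    echo "visudo unavailable or check failed; nothing installed"
fi
```

Checking the file first avoids locking yourself out of sudo with a malformed drop-in.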

Ad-hoc command syntax: ansible <inventory host pattern> -m <module name> [-a '<task arguments>']

[liruilong@master ansible]$ ansible all -m ping

node2 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/bin/python"

},

"changed": false,

"ping": "pong"

}

node1 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/bin/python"

},

"changed": false,

"ping": "pong"

}

[liruilong@master ansible]$ ansible nodes -m command -a 'ip a list ens33'

node2 | CHANGED | rc=0 >>

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:de:77:f4 brd ff:ff:ff:ff:ff:ff

inet 192.168.1.11/24 brd 192.168.1.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet6 240e:319:735:db00:2b25:4eb1:f520:830c/64 scope global noprefixroute dynamic

valid_lft 208192sec preferred_lft 121792sec

inet6 fe80::8899:b0c7:4b50:73e0/64 scope link noprefixroute

valid_lft forever preferred_lft forever

node1 | CHANGED | rc=0 >>

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:94:35:31 brd ff:ff:ff:ff:ff:ff

inet 192.168.1.9/24 brd 192.168.1.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet6 fe80::8899:b0c7:4b50:73e0/64 scope link tentative noprefixroute dadfailed

valid_lft forever preferred_lft forever

inet6 fe80::2024:5b1c:1812:f4c0/64 scope link tentative noprefixroute dadfailed

valid_lft forever preferred_lft forever

inet6 fe80::d310:173d:7910:9571/64 scope link noprefixroute

valid_lft forever preferred_lft forever

[liruilong@master ansible]$

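At this point ansible is configured and this environment can be used to learn it. These are my ansible study notes, mainly for the RHCE exam, with some hands-on exercises; take a look if you are interested.

For installing docker and k8s we could use rhel-system-roles (role-based installation), write custom roles, or write playbooks directly. Here we write and run ansible playbooks directly and build things up step by step. For docker, interested readers can look at my notes on containerization; here we focus mainly on K8s.

There are two ways to deploy: run a ready-made script written by someone more experienced, or go through it step by step yourself. We take the second approach.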

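The machines we have now:

| Host name | IP | Role | Notes |
|---|---|---|---|
| master | 192.168.1.10 | kube-master | management node |
| node1 | 192.168.1.9 | kube-node | compute node |
| node2 | 192.168.1.11 | kube-node | compute node |

Because packages will be installed on the node machines, their yum repositories need to be configured. Ansible offers several ways to do this, for example the yum_repository module; for convenience we simply run shell commands.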

[liruilong@master ansible]$ ansible nodes -m shell -a 'mv /etc/yum.repos.d/CentOS-Base.repo /etc/yum.repos.d/CentOS-Base.repo.backup;wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo'

node2 | CHANGED | rc=0 >>

--2021-09-11 11:40:20-- http://mirrors.aliyun.com/repo/Centos-7.repo

Resolving mirrors.aliyun.com (mirrors.aliyun.com)... 1.180.13.241, 1.180.13.238, 1.180.13.237, ...

Connecting to mirrors.aliyun.com (mirrors.aliyun.com)|1.180.13.241|:80... connected.

HTTP request sent, awaiting response... 200 OK

Length: 2523 (2.5K) [application/octet-stream]

Saving to: ‘/etc/yum.repos.d/CentOS-Base.repo’

0K .. 100% 3.99M=0.001s

2021-09-11 11:40:20 (3.99 MB/s) - ‘/etc/yum.repos.d/CentOS-Base.repo’ saved [2523/2523]

node1 | CHANGED | rc=0 >>

--2021-09-11 11:40:20-- http://mirrors.aliyun.com/repo/Centos-7.repo

Resolving mirrors.aliyun.com (mirrors.aliyun.com)... 1.180.13.241, 1.180.13.238, 1.180.13.237, ...

Connecting to mirrors.aliyun.com (mirrors.aliyun.com)|1.180.13.241|:80... connected.

HTTP request sent, awaiting response... 200 OK

Length: 2523 (2.5K) [application/octet-stream]

Saving to: ‘/etc/yum.repos.d/CentOS-Base.repo’

0K .. 100% 346M=0s

2021-09-11 11:40:20 (346 MB/s) - ‘/etc/yum.repos.d/CentOS-Base.repo’ saved [2523/2523]

[liruilong@master ansible]$

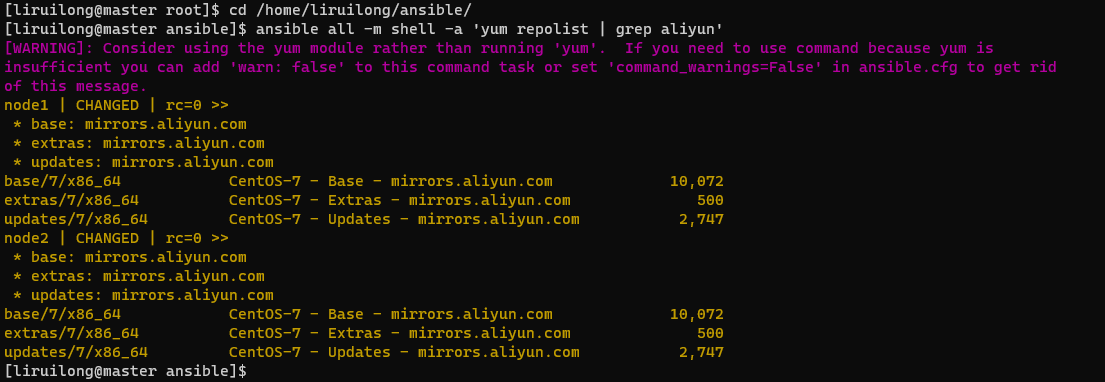

With the yum repositories configured, confirm that they took effect

[liruilong@master ansible]$ ansible all -m shell -a 'yum repolist | grep aliyun'

[liruilong@master ansible]$

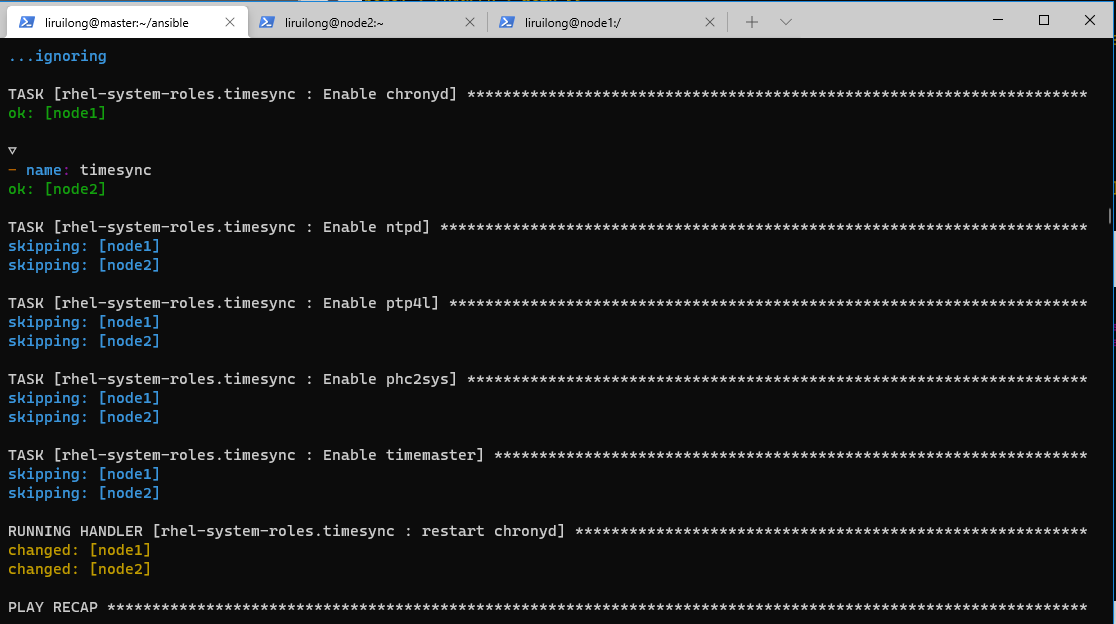

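For convenience we use an ansible role directly: install the RHEL system roles package, copy the timesync role into our roles directory, and create the playbook timesync.yml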

┌──[root@master]-[/home/liruilong/ansible]

└─$ yum -y install rhel-system-roles

已加载插件:fastestmirror, langpacks

Loading mirror speeds from cached hostfile

* base: mirrors.aliyun.com

* extras: mirrors.aliyun.com

* updates: mirrors.aliyun.com

base | 3.6 kB 00:00:00

epel | 4.7 kB 00:00:00

extras | 2.9 kB 00:00:00

updates | 2.9 kB 00:00:00

(1/2): epel/x86_64/updateinfo | 1.0 MB 00:00:00

(2/2): epel/x86_64/primary_db | 7.0 MB 00:00:01

正在解决依赖关系

There are unfinished transactions remaining. You might consider running yum-complete-transaction, or "yum-complete-transaction --cleanup-only" and "yum history redo last", first to finish them. If those don't work you'll have to try removing/installing packages by hand (maybe package-cleanup can help).

--> 正在检查事务

---> 软件包 rhel-system-roles.noarch.0.1.0.1-4.el7_9 将被 安装

--> 解决依赖关系完成

依赖关系解决

========================================================================================================================

Package 架构 版本 源 大小

========================================================================================================================

正在安装:

rhel-system-roles noarch 1.0.1-4.el7_9 extras 988 k

事务概要

========================================================================================================================

安装 1 软件包

总下载量:988 k

安装大小:4.8 M

Downloading packages:

rhel-system-roles-1.0.1-4.el7_9.noarch.rpm | 988 kB 00:00:00

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

正在安装 : rhel-system-roles-1.0.1-4.el7_9.noarch 1/1

验证中 : rhel-system-roles-1.0.1-4.el7_9.noarch 1/1

已安装:

rhel-system-roles.noarch 0:1.0.1-4.el7_9

完毕!

┌──[root@master]-[/home/liruilong/ansible]

└─$ su - liruilong

上一次登录:六 9月 11 13:16:23 CST 2021pts/2 上

[liruilong@master ~]$ cd /home/liruilong/ansible/

[liruilong@master ansible]$ ls

ansible.cfg inventory

[liruilong@master ansible]$ cp -r /usr/share/ansible/roles/rhel-system-roles.timesync roles/

[liruilong@master ansible]$ ls

ansible.cfg inventory roles timesync.yml

[liruilong@master ansible]$ cat timesync.yml

- name: timesync
  hosts: all
  vars:
    - timesync_ntp_servers:
        - hostname: 192.168.1.10
          iburst: yes
  roles:
    - rhel-system-roles.timesync

[liruilong@master ansible]$

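| Steps |
|---|
| Install docker |
| Remove the firewall (firewalld) |
| Enable IP forwarding |
| Work around the firewall bug in this docker version |
| Restart the docker service and enable it at boot |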

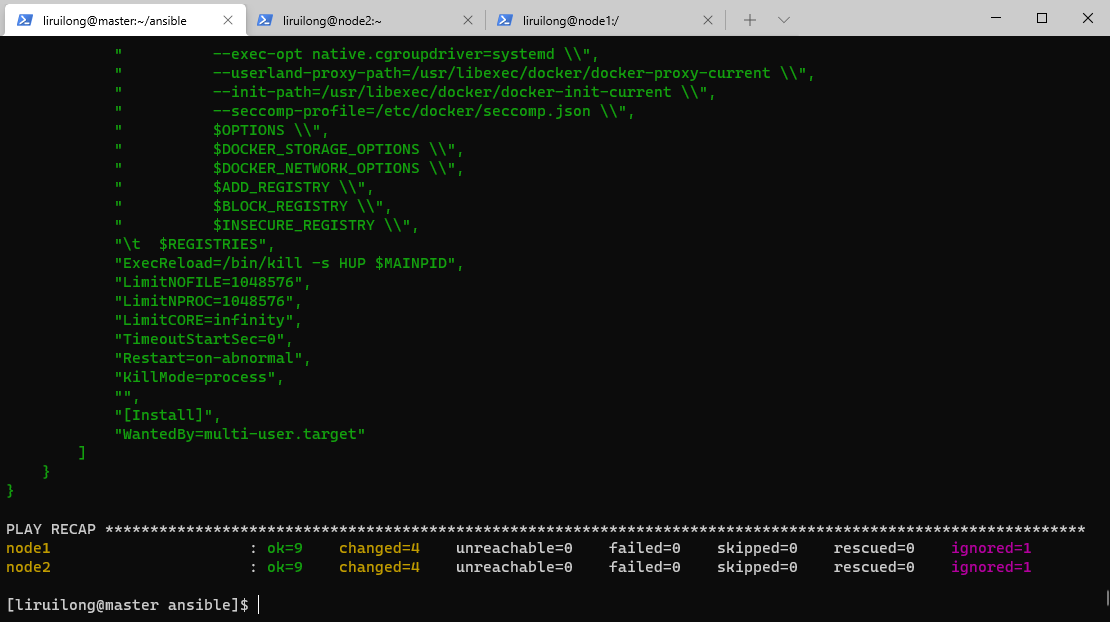

Write the docker initialization playbook install_docker_playbook.yml

- name: install docker on node1,node2
  hosts: node1,node2
  tasks:
    - yum: name=docker state=absent
    - yum: name=docker state=present
    - yum: name=firewalld state=absent
    - shell: echo "net.ipv4.ip_forward = 1" >> /etc/sysctl.conf
    - shell: sysctl -p
    # ExecStartPost runs after dockerd has started and sets the FORWARD chain policy to ACCEPT
    - shell: sed -i '18 i ExecStartPost=/sbin/iptables -P FORWARD ACCEPT' /lib/systemd/system/docker.service
    - shell: cat /lib/systemd/system/docker.service
    - shell: systemctl daemon-reload
    - service: name=docker state=restarted enabled=yes
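One thing to watch in this playbook: the `echo ... >> /etc/sysctl.conf` task appends the same line again on every run. A small guard avoids that. The sketch below shows the idea in plain shell with a hypothetical `ensure_line` helper; it writes to a scratch file unless `SYSCTL_FILE` is pointed at the real /etc/sysctl.conf.

```shell
#!/usr/bin/env bash
# Append a line to a file only when an identical line is not already present.
ensure_line() {
    local line="$1" file="$2"
    # -x matches whole lines, -F treats the pattern as a fixed string
    grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Scratch file by default; use SYSCTL_FILE=/etc/sysctl.conf (as root) on a real node
SYSCTL_FILE="${SYSCTL_FILE:-$(mktemp)}"

ensure_line "net.ipv4.ip_forward = 1" "$SYSCTL_FILE"
ensure_line "net.ipv4.ip_forward = 1" "$SYSCTL_FILE"   # second call is a no-op

cat "$SYSCTL_FILE"
```

The same guard could be used inside the shell task, which keeps re-running the playbook from growing the file.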

Run the playbook


[liruilong@master ansible]$ ls

ansible.cfg install_docker_check.yml install_docker_playbook.yml inventory roles timesync.yml

[liruilong@master ansible]$ ansible-playbook install_docker_playbook.yml

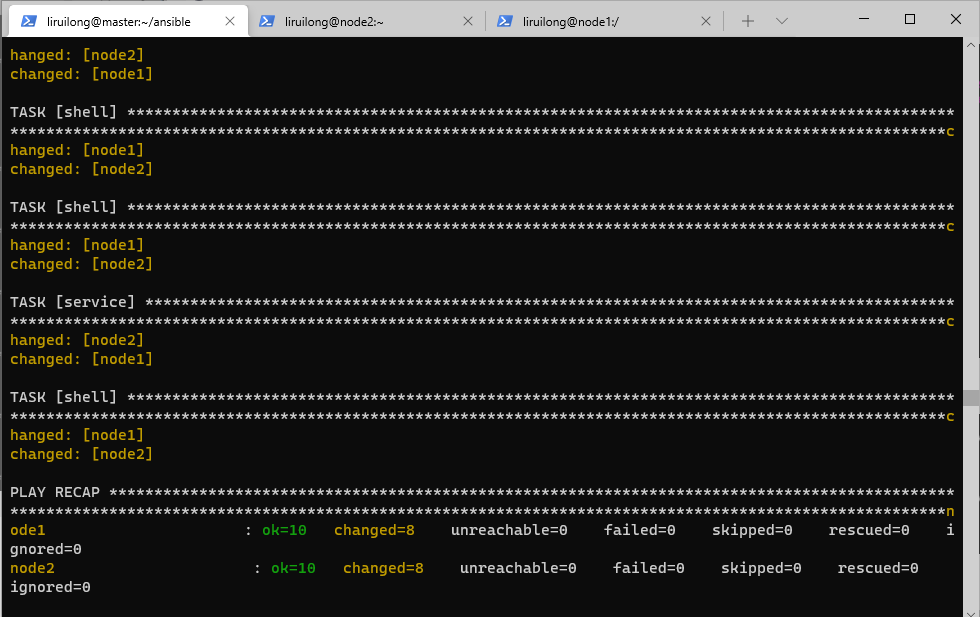

Running the docker initialization playbook install_docker_playbook.yml

Next, write a check playbook, install_docker_check.yml, to verify the docker installation

- name: install_docker-check
  hosts: node1,node2
  ignore_errors: yes
  tasks:
    - shell: docker info
      register: out
    - debug: msg="{{out}}"
    - shell: systemctl -all | grep firewalld
      register: out1
    - debug: msg="{{out1}}"
    - shell: cat /etc/sysctl.conf
      register: out2
    - debug: msg="{{out2}}"
    - shell: cat /lib/systemd/system/docker.service
      register: out3
    - debug: msg="{{out3}}"

[liruilong@master ansible]$ ls

ansible.cfg install_docker_check.yml install_docker_playbook.yml inventory roles timesync.yml


[liruilong@master ansible]$ ansible-playbook install_docker_check.yml

Running the check playbook install_docker_check.yml

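Install etcd (a key-value database) on kube-master and create the network configuration

| Steps |
|---|
| Install etcd with yum |
| Edit the etcd configuration file and change the client listen address; 0.0.0.0 means listen on all addresses |
| Enable IP forwarding |
| Start the service and enable it at boot |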

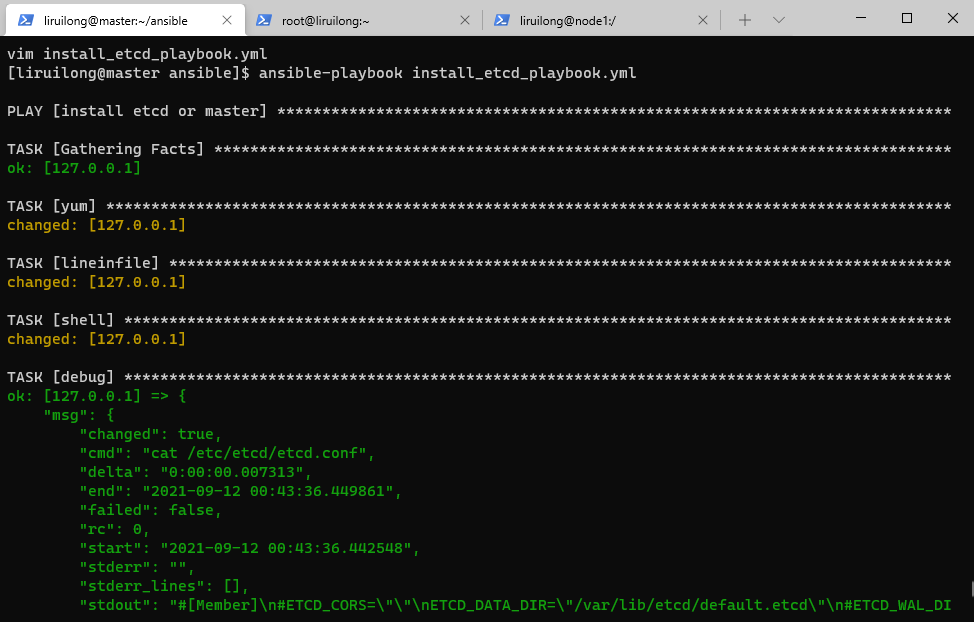

Write the ansible playbook install_etcd_playbook.yml

- name: install etcd on master
  hosts: 127.0.0.1
  tasks:
    - yum: name=etcd state=present
    - lineinfile: path=/etc/etcd/etcd.conf regexp=ETCD_LISTEN_CLIENT_URLS="http://localhost:2379" line=ETCD_LISTEN_CLIENT_URLS="http://0.0.0.0:2379"
    - shell: cat /etc/etcd/etcd.conf
      register: out
    - debug: msg="{{out}}"
    - service: name=etcd state=restarted enabled=yes

[liruilong@master ansible]$ ls

ansible.cfg install_docker_playbook.yml inventory timesync.yml

install_docker_check.yml install_etcd_playbook.yml roles


[liruilong@master ansible]$ ansible-playbook install_etcd_playbook.yml

Running the ansible playbook install_etcd_playbook.yml

| Create the network configuration: 10.254.0.0/16 |
|---|
| etcdctl ls / |
| etcdctl mk /atomic.io/network/config '{"Network": "10.254.0.0/16", "Backend": {"Type":"vxlan"}} ' |
| etcdctl get /atomic.io/network/config |

[liruilong@master ansible]$ etcdctl ls /

[liruilong@master ansible]$ etcdctl mk /atomic.io/network/config '{"Network": "10.254.0.0/16", "Backend": {"Type":"vxlan"}} '

{"Network": "10.254.0.0/16", "Backend": {"Type":"vxlan"}}

[liruilong@master ansible]$ etcdctl ls /

/atomic.io

[liruilong@master ansible]$ etcdctl ls /atomic.io

/atomic.io/network

[liruilong@master ansible]$ etcdctl ls /atomic.io/network

/atomic.io/network/config

[liruilong@master ansible]$ etcdctl get /atomic.io/network/config

{"Network": "10.254.0.0/16", "Backend": {"Type":"vxlan"}}

[liruilong@master ansible]$
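To get a feel for what this Network value allows: flannel gives each host its own slice of 10.254.0.0/16, and the flanneld log later in this post shows a /24 lease (10.254.68.0/24). Assuming every host receives a /24, the number of available host subnets is a quick piece of arithmetic. `subnet_count` is a hypothetical helper written for this illustration.

```shell
#!/usr/bin/env bash
# Number of per-host subnets of a given prefix length that fit into the overlay network.
# Usage: subnet_count <network_prefix_len> <host_subnet_prefix_len>
subnet_count() {
    local network_len="$1" subnet_len="$2"
    # Each extra prefix bit doubles the number of subnets
    echo $(( 1 << (subnet_len - network_len) ))
}

subnet_count 16 24   # prints 256
```

So a /16 overlay split into /24 leases leaves room for far more hosts than this three-machine lab will ever use.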

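flannel is a network planning service. Its job is to give the docker containers created on different node hosts in a k8s cluster virtual IP addresses that are unique across the cluster. flannel also builds an overlay network between these virtual IP addresses, through which containers on different hosts can reach each other; its role is similar to that of a VLAN.

The kube-master management host does not run docker, so it only needs flannel installed, its configuration edited, and the service started and enabled at boot.

Because packages also have to be installed and configured on the master machine, we added the master node to the host inventory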

[liruilong@master ansible]$ sudo cat /etc/hosts

192.168.1.11 node2

192.168.1.9 node1

192.168.1.10 master

[liruilong@master ansible]$ ls

ansible.cfg install_docker_playbook.yml inventory timesync.yml

install_docker_check.yml install_etcd_playbook.yml roles

[liruilong@master ansible]$ cat inventory

master

[nodes]

node1

node2

[liruilong@master ansible]$ ansible master -m ping

master | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/bin/python"

},

"changed": false,

"ping": "pong"

}

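| Steps |
|---|
| Install the flannel network package |
| Edit the configuration file /etc/sysconfig/flanneld |
| Start the services (flannel must start before docker); remember to open the required port on the master node, or turn its firewall off |
| Start flannel first, then docker |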

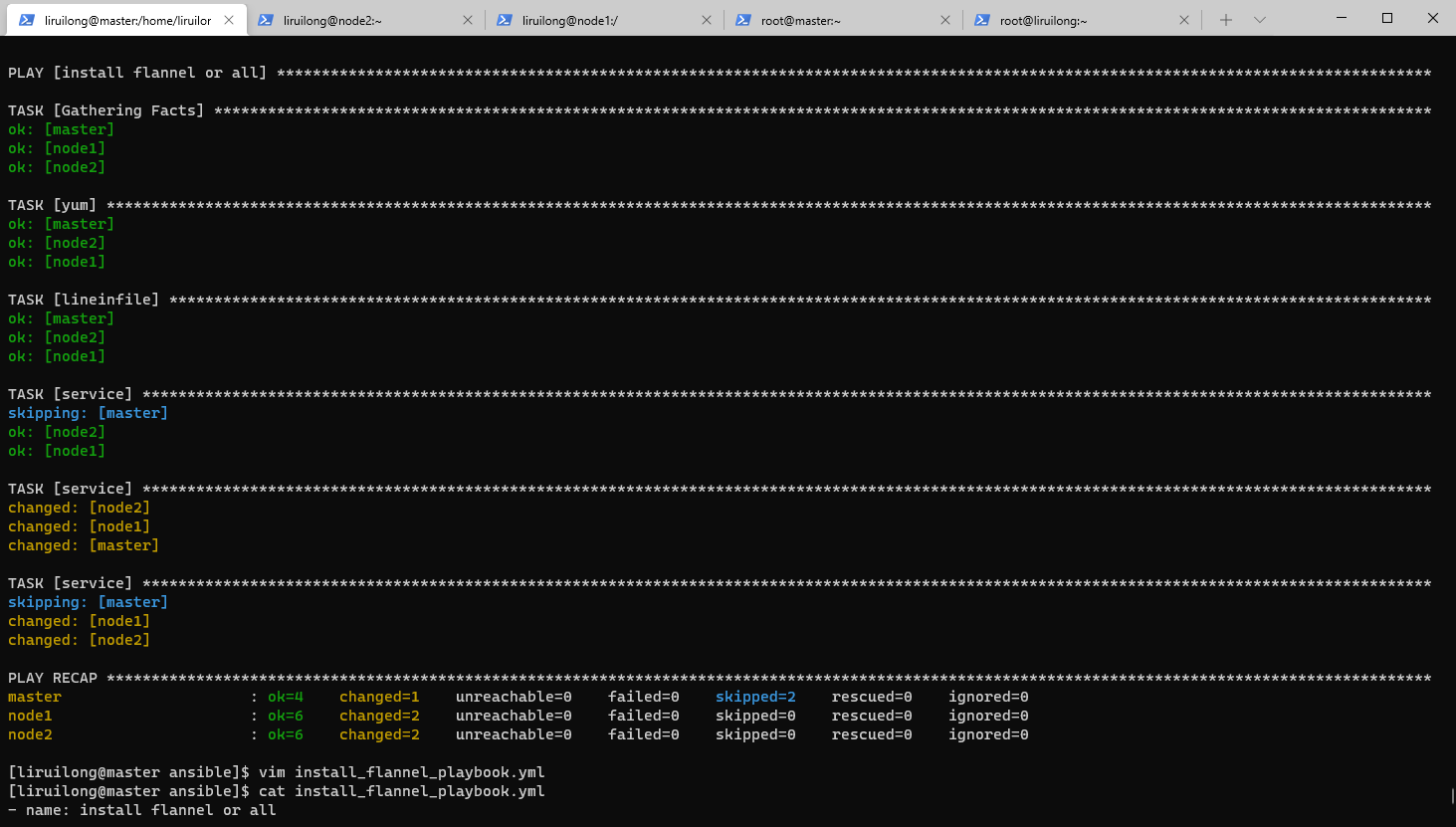

Write the playbook install_flannel_playbook.yml:

- name: install flannel on all
  hosts: all
  vars:
    group_node: nodes
  tasks:
    - yum:
        name: flannel
        state: present
    - lineinfile:
        path: /etc/sysconfig/flanneld
        regexp: FLANNEL_ETCD_ENDPOINTS="http://127.0.0.1:2379"
        line: FLANNEL_ETCD_ENDPOINTS="http://192.168.1.10:2379"
    - service:
        name: docker
        state: stopped
      when: group_node in group_names
    - service:
        name: flanneld
        state: restarted
        enabled: yes
    - service:
        name: docker
        state: restarted
      when: group_node in group_names

Before running the playbook, turn off firewalld on the master; alternatively, open port 2379.
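The two options in that sentence could look like the following on the master. This is a sketch under assumptions: the `run`, `open_etcd_port`, and `stop_firewalld` helpers and the `APPLY` switch are invented here, and by default the commands are only printed, since they need root and a running firewalld.

```shell
#!/usr/bin/env bash
# Either stop firewalld on the master entirely, or keep it and open only etcd's client port.
# Commands are printed unless APPLY=1 is set.
APPLY="${APPLY:-0}"

run() {
    if [ "$APPLY" = "1" ]; then "$@"; else echo "$*"; fi
}

open_etcd_port() {
    # 2379/tcp is the etcd client port that flanneld on the nodes connects to
    run firewall-cmd --permanent --add-port=2379/tcp
    run firewall-cmd --reload
}

stop_firewalld() {
    run systemctl disable --now firewalld
}

open_etcd_port
```

Opening just the one port keeps the firewall in place, which is closer to what a non-lab machine would want; calling `stop_firewalld` instead is the quicker route for a throwaway environment.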

[liruilong@master ansible]$ su root

密码:

┌──[root@master]-[/home/liruilong/ansible]

└─$ systemctl disable flanneld.service --now

Removed symlink /etc/systemd/system/multi-user.target.wants/flanneld.service.

Removed symlink /etc/systemd/system/docker.service.wants/flanneld.service.

┌──[root@master]-[/home/liruilong/ansible]

└─$ systemctl status flanneld.service

● flanneld.service - Flanneld overlay address etcd agent

Loaded: loaded (/usr/lib/systemd/system/flanneld.service; disabled; vendor preset: disabled)

Active: inactive (dead)

9月 12 18:34:24 master flanneld-start[50344]: I0912 18:34:24.046900 50344 manager.go:149] Using interface with name ens33 and address 192.168.1.10

9月 12 18:34:24 master flanneld-start[50344]: I0912 18:34:24.046958 50344 manager.go:166] Defaulting external address to interface address (192.168.1.10)

9月 12 18:34:24 master flanneld-start[50344]: I0912 18:34:24.056681 50344 local_manager.go:134] Found lease (10.254.68.0/24) for current IP (192..., reusing

9月 12 18:34:24 master flanneld-start[50344]: I0912 18:34:24.060343 50344 manager.go:250] Lease acquired: 10.254.68.0/24

9月 12 18:34:24 master flanneld-start[50344]: I0912 18:34:24.062427 50344 network.go:58] Watching for L3 misses

9月 12 18:34:24 master flanneld-start[50344]: I0912 18:34:24.062462 50344 network.go:66] Watching for new subnet leases

9月 12 18:34:24 master systemd[1]: Started Flanneld overlay address etcd agent.

9月 12 18:40:42 master systemd[1]: Stopping Flanneld overlay address etcd agent...

9月 12 18:40:42 master flanneld-start[50344]: I0912 18:40:42.194559 50344 main.go:172] Exiting...

9月 12 18:40:42 master systemd[1]: Stopped Flanneld overlay address etcd agent.

Hint: Some lines were ellipsized, use -l to show in full.

┌──[root@master]-[/home/liruilong/ansible]

└─$


┌──[root@master]-[/home/liruilong/ansible]

└─$ ansible-playbook install_flannel_playbook.yml

Running the playbook install_flannel_playbook.yml

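The ansible docker modules could also be used here; for convenience we simply use the shell module.

Write install_flannel_check.yml

| Steps |
|---|
| Print the docker bridge interface docker0 on the node machines |
| On each node machine, run a container from the centos image, named after the host |
| Print the container ID information |
| Print the flannel interface information on all nodes |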

- name: flannel config check
  hosts: all
  vars:
    nodes: nodes
  tasks:
    - block:
        - shell: ifconfig docker0 | head -2
          register: out
        - debug: msg="{{out}}"
        - shell: docker rm -f {{inventory_hostname}}
        - shell: docker run -itd --name {{inventory_hostname}} centos
          register: out1
        - debug: msg="{{out1}}"
      when: nodes in group_names
    - shell: ifconfig flannel.1 | head -2
      register: out
    - debug: msg="{{out}}"

Run the playbook


[liruilong@master ansible]$ ansible-playbook install_flannel_check.yml

PLAY [flannel config check] *************************************************************************************************************************************************************************************

TASK [Gathering Facts] ******************************************************************************************************************************************************************************************

ok: [master]

ok: [node2]

ok: [node1]

TASK [shell] ****************************************************************************************************************************************************************************************************

skipping: [master]

changed: [node2]

changed: [node1]

TASK [debug] ****************************************************************************************************************************************************************************************************

skipping: [master]

ok: [node1] => {

"msg": {

"changed": true,

"cmd": "ifconfig docker0 | head -2",

"delta": "0:00:00.021769",

"end": "2021-09-12 21:51:44.826682",

"failed": false,

"rc": 0,

"start": "2021-09-12 21:51:44.804913",

"stderr": "",

"stderr_lines": [],

"stdout": "docker0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450\n inet 10.254.97.1 netmask 255.255.255.0 broadcast 0.0.0.0",

"stdout_lines": [

"docker0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450",

" inet 10.254.97.1 netmask 255.255.255.0 broadcast 0.0.0.0"

]

}

}

ok: [node2] => {

"msg": {

"changed": true,

"cmd": "ifconfig docker0 | head -2",

"delta": "0:00:00.011223",

"end": "2021-09-12 21:51:44.807988",

"failed": false,

"rc": 0,

"start": "2021-09-12 21:51:44.796765",

"stderr": "",

"stderr_lines": [],

"stdout": "docker0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450\n inet 10.254.59.1 netmask 255.255.255.0 broadcast 0.0.0.0",

"stdout_lines": [

"docker0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450",

" inet 10.254.59.1 netmask 255.255.255.0 broadcast 0.0.0.0"

]

}

}

TASK [shell] ****************************************************************************************************************************************************************************************************

skipping: [master]

changed: [node1]

changed: [node2]

TASK [shell] ****************************************************************************************************************************************************************************************************

skipping: [master]

changed: [node1]

changed: [node2]

TASK [debug] ****************************************************************************************************************************************************************************************************

skipping: [master]

ok: [node1] => {

"msg": {

"changed": true,

"cmd": "docker run -itd --name node1 centos",

"delta": "0:00:00.795119",

"end": "2021-09-12 21:51:48.157221",

"failed": false,

"rc": 0,

"start": "2021-09-12 21:51:47.362102",

"stderr": "",

"stderr_lines": [],

"stdout": "1c0628dcb7e772640d9eb58179efc03533e796989f7a802e230f9ebc3012845a",

"stdout_lines": [

"1c0628dcb7e772640d9eb58179efc03533e796989f7a802e230f9ebc3012845a"

]

}

}

ok: [node2] => {

"msg": {

"changed": true,

"cmd": "docker run -itd --name node2 centos",

"delta": "0:00:00.787663",

"end": "2021-09-12 21:51:48.194065",

"failed": false,

"rc": 0,

"start": "2021-09-12 21:51:47.406402",

"stderr": "",

"stderr_lines": [],

"stdout": "1931d80f5bfffc23fef714a58ab5b009ed5e2182199b55038bb9b1ccc69ec271",

"stdout_lines": [

"1931d80f5bfffc23fef714a58ab5b009ed5e2182199b55038bb9b1ccc69ec271"

]

}

}

TASK [shell] ****************************************************************************************************************************************************************************************************

changed: [master]

changed: [node2]

changed: [node1]

TASK [debug] ****************************************************************************************************************************************************************************************************

ok: [master] => {

"msg": {

"changed": true,

"cmd": "ifconfig flannel.1 | head -2",

"delta": "0:00:00.011813",

"end": "2021-09-12 21:51:48.722196",

"failed": false,

"rc": 0,

"start": "2021-09-12 21:51:48.710383",

"stderr": "",

"stderr_lines": [],

"stdout": "flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450\n inet 10.254.68.0 netmask 255.255.255.255 broadcast 0.0.0.0",

"stdout_lines": [

"flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450",

" inet 10.254.68.0 netmask 255.255.255.255 broadcast 0.0.0.0"

]

}

}

ok: [node1] => {

"msg": {

"changed": true,

"cmd": "ifconfig flannel.1 | head -2",

"delta": "0:00:00.021717",

"end": "2021-09-12 21:51:49.443800",

"failed": false,

"rc": 0,

"start": "2021-09-12 21:51:49.422083",

"stderr": "",

"stderr_lines": [],

"stdout": "flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450\n inet 10.254.97.0 netmask 255.255.255.255 broadcast 0.0.0.0",

"stdout_lines": [

"flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450",

" inet 10.254.97.0 netmask 255.255.255.255 broadcast 0.0.0.0"

]

}

}

ok: [node2] => {

"msg": {

"changed": true,

"cmd": "ifconfig flannel.1 | head -2",

"delta": "0:00:00.012259",

"end": "2021-09-12 21:51:49.439005",

"failed": false,

"rc": 0,

"start": "2021-09-12 21:51:49.426746",

"stderr": "",

"stderr_lines": [],

"stdout": "flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450\n inet 10.254.59.0 netmask 255.255.255.255 broadcast 0.0.0.0",

"stdout_lines": [

"flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450",

" inet 10.254.59.0 netmask 255.255.255.255 broadcast 0.0.0.0"

]

}

}

PLAY RECAP ******************************************************************************************************************************************************************************************************

master : ok=3 changed=1 unreachable=0 failed=0 skipped=5 rescued=0 ignored=0

node1 : ok=8 changed=4 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

node2 : ok=8 changed=4 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

[liruilong@master ansible]$

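Verify that the centos containers on node1 and node2 can reach each other: look up the IP of the container on node1, then ping it from the container on node2.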

[liruilong@master ansible]$ ssh node1

Last login: Sun Sep 12 21:58:49 2021 from 192.168.1.10

[liruilong@node1 ~]$ sudo docker exec -it node1 /bin/bash

[root@1c0628dcb7e7 /]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

17: eth0@if18: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UP group default

link/ether 02:42:0a:fe:61:02 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 10.254.97.2/24 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::42:aff:fefe:6102/64 scope link

valid_lft forever preferred_lft forever

[root@1c0628dcb7e7 /]# exit

exit

[liruilong@node1 ~]$ exit

登出

Connection to node1 closed.

[liruilong@master ansible]$ ssh node2

Last login: Sun Sep 12 21:51:49 2021 from 192.168.1.10

[liruilong@node2 ~]$ sudo docker exec -it node2 /bin/bash

[root@1931d80f5bff /]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

15: eth0@if16: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UP group default

link/ether 02:42:0a:fe:3b:02 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 10.254.59.2/24 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::42:aff:fefe:3b02/64 scope link

valid_lft forever preferred_lft forever

[root@1931d80f5bff /]# ping 10.254.97.2

PING 10.254.97.2 (10.254.97.2) 56(84) bytes of data.

64 bytes from 10.254.97.2: icmp_seq=1 ttl=62 time=99.3 ms

64 bytes from 10.254.97.2: icmp_seq=2 ttl=62 time=0.693 ms

64 bytes from 10.254.97.2: icmp_seq=3 ttl=62 time=97.6 ms

^C

--- 10.254.97.2 ping statistics ---

3 packets transmitted, 3 received, 0% packet loss, time 5ms

rtt min/avg/max/mdev = 0.693/65.879/99.337/46.100 ms

[root@1931d80f5bff /]#

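The ping succeeds. At this point the flannel network is configured, and containers on different machines can reach each other.

With the network in place, the next step is to install and configure kube-master on the master (management) node. First check which packages are available: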
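The addresses in the output above follow a pattern: each host's `docker0` bridge and its containers share one /24 slice of the flannel network (10.254.97.x on node1, 10.254.59.x on node2). A minimal sketch for checking that pattern from captured `ifconfig` text is shown below. The helper names `inet_addr`, `prefix24` and `same_subnet24` are made up for this illustration, and the check assumes /24 per-host subnets as seen in this run.

```shell
#!/usr/bin/env bash
# Print the IPv4 address from ifconfig-style text read on stdin
# (the first "inet <addr>" pair found).
inet_addr() {
  awk '$1 == "inet" { print $2; exit }'
}

# Print the first three octets of an IPv4 address, i.e. its /24 prefix.
prefix24() {
  echo "${1%.*}"
}

# Succeed when two addresses share the same /24 prefix.
same_subnet24() {
  [ "$(prefix24 "$1")" = "$(prefix24 "$2")" ]
}

# Sample text copied from the node1 docker0 output above.
docker0_ip=$(printf '%s\n' \
  'docker0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450' \
  ' inet 10.254.97.1 netmask 255.255.255.0 broadcast 0.0.0.0' | inet_addr)

if same_subnet24 "$docker0_ip" 10.254.97.2; then
  echo "container 10.254.97.2 is on node1's docker0 subnet"
fi
```

On a real node the sample text would come from `ifconfig docker0 | head -2` instead of the hard-coded lines.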

[liruilong@master ansible]$ yum list kubernetes-*

已加载插件:fastestmirror, langpacks

Determining fastest mirrors

* base: mirrors.aliyun.com

* extras: mirrors.aliyun.com

* updates: mirrors.aliyun.com

可安装的软件包

kubernetes.x86_64 1.5.2-0.7.git269f928.el7 extras

kubernetes-ansible.noarch 0.6.0-0.1.gitd65ebd5.el7 epel

kubernetes-client.x86_64 1.5.2-0.7.git269f928.el7 extras

kubernetes-master.x86_64 1.5.2-0.7.git269f928.el7 extras

kubernetes-node.x86_64 1.5.2-0.7.git269f928.el7 extras

[liruilong@master ansible]$ ls /etc/yum.repos.d/

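If a 1.10 package is available, it is better to use that. The repositories here only offer 1.5, so we try 1.5 for now; I could not find a yum source for 1.10.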
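The "is the packaged version new enough" question can also be answered in a script. The sketch below is only an illustration: `version_ge` and `upstream_version` are hypothetical helper names, and the comparison relies on GNU `sort -V`, which is assumed to be available (it is part of coreutils on CentOS 7).

```shell
#!/usr/bin/env bash
# Succeed when version $1 is greater than or equal to version $2.
# Relies on GNU sort's -V (version sort).
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Strip the release suffix from a yum version string such as
# 1.5.2-0.7.git269f928.el7, leaving 1.5.2.
upstream_version() {
  echo "${1%%-*}"
}

# Version string copied from the yum list output above.
found=$(upstream_version "1.5.2-0.7.git269f928.el7")
if version_ge "$found" "1.10"; then
  echo "repo offers $found, new enough"
else
  echo "repo only offers $found, older than 1.10"
fi
```

A plain string comparison would get this wrong, because "1.5" sorts after "1.10" alphabetically; version sort compares the numeric fields.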

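| Steps |
|---|
| Disable swap and SELinux |
| Configure the k8s yum repository |
| Install the k8s packages |
| Edit the global config file /etc/kubernetes/config |
| Edit the master config file /etc/kubernetes/apiserver |
| Start the services |
| Verify the services with kubectl get cs |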
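For the first step, one thing worth watching is repeatability: a plain pattern replace on the swap device name prepends another `#` every time it runs. The sketch below shows one way to make both file edits safe to repeat. It is not the method used in this article; `comment_swap` and `set_selinux_mode` are hypothetical helpers, and they take the file path as an argument so they can be tried on a copy first.

```shell
#!/usr/bin/env bash
# Comment out active swap entries in an fstab-style file.
# Lines that are already commented are left alone, so a second run
# does not stack up extra '#' characters.
comment_swap() {
  local file=$1 tmp
  tmp=$(mktemp) || return 1
  if awk '!/^[ \t]*#/ && $3 == "swap" { print "#" $0; next } { print }' \
       "$file" > "$tmp"; then
    cat "$tmp" > "$file"
  fi
  rm -f "$tmp"
}

# Set SELINUX=<mode> in an /etc/selinux/config-style file, whatever the
# previous mode was (enforcing or permissive). SELINUXTYPE= is not touched.
set_selinux_mode() {
  local file=$1 mode=$2 tmp
  tmp=$(mktemp) || return 1
  if sed "s/^SELINUX=.*/SELINUX=${mode}/" "$file" > "$tmp"; then
    cat "$tmp" > "$file"
  fi
  rm -f "$tmp"
}

# Demo on a throwaway copy rather than the real /etc/fstab.
demo=$(mktemp)
printf '%s\n' \
  '/dev/mapper/centos-root / xfs defaults 0 0' \
  '/dev/mapper/centos-swap swap swap defaults 0 0' > "$demo"
comment_swap "$demo"
comment_swap "$demo"   # second run changes nothing
cat "$demo"
rm -f "$demo"
```

Matching on the filesystem type column rather than the device name also covers swap entries that are not named `centos-swap`.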

Write the install_kube-master_playbook.yml playbook:

    - name: install kube-master or master
      hosts: master
      tasks:
        - shell: swapoff -a
        - replace:
            path: /etc/fstab
            regexp: "/dev/mapper/centos-swap"
            replace: "#/dev/mapper/centos-swap"
        - shell: cat /etc/fstab
          register: out
        - debug: msg="{{out}}"
        - shell: getenforce
          register: out
        - debug: msg="{{out}}"
        - shell: setenforce 0
          when: out.stdout != "Disabled"
        - replace:
            path: /etc/selinux/config
            regexp: "SELINUX=enforcing"
            replace: "SELINUX=disabled"
        - shell: cat /etc/selinux/config
          register: out
        - debug: msg="{{out}}"
        - yum_repository:
            name: Kubernetes
            description: K8s aliyun yum
            baseurl: https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
            gpgcheck: yes
            gpgkey: https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
            repo_gpgcheck: yes
            enabled: yes
        - yum:
            name: kubernetes-master,kubernetes-client
            state: absent
        - yum:
            name: kubernetes-master
            state: present
        - yum:
            name: kubernetes-client
            state: present
        - lineinfile:
            path: /etc/kubernetes/config
            regexp: KUBE_MASTER="--master=http://127.0.0.1:8080"
            line: KUBE_MASTER="--master=http://192.168.1.10:8080"
        - lineinfile:
            path: /etc/kubernetes/apiserver
            regexp: KUBE_API_ADDRESS="--insecure-bind-address=127.0.0.1"
            line: KUBE_API_ADDRESS="--insecure-bind-address=0.0.0.0"
        - lineinfile:
            path: /etc/kubernetes/apiserver
            regexp: KUBE_ETCD_SERVERS="--etcd-servers=http://127.0.0.1:2379"
            line: KUBE_ETCD_SERVERS="--etcd-servers=http://192.168.1.10:2379"
        - lineinfile:
            path: /etc/kubernetes/apiserver
            regexp: KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.254.0.0/16"
            line: KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.254.0.0/16"
        - lineinfile:
            path: /etc/kubernetes/apiserver
            regexp: KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,SecurityContextDeny,ServiceAccount,ResourceQuota"
            line: KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,SecurityContextDeny,ResourceQuota"
        - service:
            name: kube-apiserver
            state: restarted
            enabled: yes
        - service:
            name: kube-controller-manager
            state: restarted
            enabled: yes
        - service:
            name: kube-scheduler
            state: restarted
            enabled: yes
        - shell: kubectl get cs
          register: out
        - debug: msg="{{out}}"

Run the playbook:

[liruilong@master ansible]$ ansible-playbook install_kube-master_playbook.yml

............

TASK [debug] **************************************************************************************************************************************************

ok: [master] => {

"msg": {

"changed": true,

"cmd": "kubectl get cs",

"delta": "0:00:05.653524",

"end": "2021-09-12 23:44:58.030756",

"failed": false,

"rc": 0,

"start": "2021-09-12 23:44:52.377232",

"stderr": "",

"stderr_lines": [],

"stdout": "NAME STATUS MESSAGE ERROR\nscheduler Healthy ok \ncontroller-manager Healthy ok \netcd-0 Healthy {\"health\":\"true\"} ",

"stdout_lines": [

"NAME STATUS MESSAGE ERROR",

"scheduler Healthy ok ",

"controller-manager Healthy ok ",

"etcd-0 Healthy {\"health\":\"true\"} "

]

}

}

PLAY RECAP ****************************************************************************************************************************************************

master : ok=13 changed=4 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

[liruilong@master ansible]$
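The `kubectl get cs` result in the run above is read by eye. As a rough sketch, the same check can be scripted so that it fails when any component is not `Healthy`. The function name `all_components_healthy` is made up for this example, and the parsing assumes the column layout shown in the output above (STATUS in the second column).

```shell
#!/usr/bin/env bash
# Read `kubectl get cs` style output on stdin and succeed only when at least
# one component is listed and every one reports Healthy in the STATUS column.
all_components_healthy() {
  awk 'NR > 1 && NF > 0 { seen = 1; if ($2 != "Healthy") bad = 1 }
       END { exit (seen && !bad) ? 0 : 1 }'
}

# Sample text copied from the playbook output above.
sample='NAME STATUS MESSAGE ERROR
scheduler Healthy ok
controller-manager Healthy ok
etcd-0 Healthy {"health":"true"}'

if printf '%s\n' "$sample" | all_components_healthy; then
  echo "control plane healthy"
fi
# On the master itself this would be:
#   kubectl get cs | all_components_healthy
```

An empty listing is treated as a failure on purpose, so a kubectl call that prints only the header does not pass silently.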

Once the management node is installed, deploy the compute nodes: kube-node is installed on every node server.

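| Steps |
|---|
| Disable swap and SELinux |
| Configure the k8s yum repository |
| Install the k8s node packages |
| Edit the kube-node global config file /etc/kubernetes/config |
| Edit the node config file /etc/kubernetes/kubelet. Note that it contains a base (pod infrastructure) image setting; if you have your own image registry, it is best to point it there |
| Generate the kubelet.kubeconfig file |
| Set up the cluster: write the generated information into kubelet.kubeconfig |
| Pull the Pod infrastructure image |
| Start the services and verify |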
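Most of these steps come down to changing one `KEY="value"` line in a file under /etc/kubernetes. The sketch below shows that edit keyed on the variable name alone, so it still works if the packaged default value is different from the one expected. `set_kv` is a hypothetical helper written for this illustration, not part of any package, and the demo runs against a temporary file.

```shell
#!/usr/bin/env bash
# Replace the whole KEY="..." line in a sysconfig-style file, or append it
# when the key is missing. Only the key is matched, not the old value.
set_kv() {
  local file=$1 key=$2 value=$3 tmp
  tmp=$(mktemp) || return 1
  if awk -v k="$key" -v v="$value" '
       index($0, k "=") == 1 { print k "=\"" v "\""; done = 1; next }
       { print }
       END { if (!done) print k "=\"" v "\"" }
     ' "$file" > "$tmp"; then
    cat "$tmp" > "$file"
  fi
  rm -f "$tmp"
}

# Demo on a throwaway file shaped like /etc/kubernetes/kubelet.
conf=$(mktemp)
printf '%s\n' 'KUBELET_ADDRESS="--address=127.0.0.1"' 'KUBELET_ARGS=""' > "$conf"
set_kv "$conf" KUBELET_ADDRESS "--address=0.0.0.0"
set_kv "$conf" KUBELET_HOSTNAME "--hostname-override=node1"
cat "$conf"
rm -f "$conf"
```

The same idea applies to an Ansible `lineinfile` task: a pattern anchored on the key, such as `^KUBELET_ADDRESS=`, keeps matching after the value has been changed once.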

Write the playbook install_kube-node_playbook.yml:

[liruilong@master ansible]$ cat install_kube-node_playbook.yml

    - name: install kube-node or nodes
      hosts: nodes
      tasks:
        - shell: swapoff -a
        - replace:
            path: /etc/fstab
            regexp: "/dev/mapper/centos-swap"
            replace: "#/dev/mapper/centos-swap"
        - shell: cat /etc/fstab
          register: out
        - debug: msg="{{out}}"
        - shell: getenforce
          register: out
        - debug: msg="{{out}}"
        - shell: setenforce 0
          when: out.stdout != "Disabled"
        - replace:
            path: /etc/selinux/config
            regexp: "SELINUX=enforcing"
            replace: "SELINUX=disabled"
        - shell: cat /etc/selinux/config
          register: out
        - debug: msg="{{out}}"
        - yum_repository:
            name: Kubernetes
            description: K8s aliyun yum
            baseurl: https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
            gpgcheck: yes
            gpgkey: https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
            repo_gpgcheck: yes
            enabled: yes
        - yum:
            name: kubernetes-node
            state: absent
        - yum:
            name: kubernetes-node
            state: present
        - lineinfile:
            path: /etc/kubernetes/config
            regexp: KUBE_MASTER="--master=http://127.0.0.1:8080"
            line: KUBE_MASTER="--master=http://192.168.1.10:8080"
        - lineinfile:
            path: /etc/kubernetes/kubelet
            regexp: KUBELET_ADDRESS="--address=127.0.0.1"
            line: KUBELET_ADDRESS="--address=0.0.0.0"
        - lineinfile:
            path: /etc/kubernetes/kubelet
            regexp: KUBELET_HOSTNAME="--hostname-override=127.0.0.1"
            line: KUBELET_HOSTNAME="--hostname-override={{inventory_hostname}}"
        - lineinfile:
            path: /etc/kubernetes/kubelet
            regexp: KUBELET_API_SERVER="--api-servers=http://127.0.0.1:8080"
            line: KUBELET_API_SERVER="--api-servers=http://192.168.1.10:8080"
        - lineinfile:
            path: /etc/kubernetes/kubelet
            regexp: KUBELET_ARGS=""
            line: KUBELET_ARGS="--cgroup-driver=systemd --kubeconfig=/etc/kubernetes/kubelet.kubeconfig"
        - shell: kubectl config set-cluster local --server="http://192.168.1.10:8080"
        - shell: kubectl config set-context --cluster="local" local
        - shell: kubectl config set current-context local
        - shell: kubectl config view
          register: out
        - debug: msg="{{out}}"
        - copy:
            dest: /etc/kubernetes/kubelet.kubeconfig
            content: "{{out.stdout}}"
            force: yes
        - shell: docker pull tianyebj/pod-infrastructure:latest
        - service:
            name: kubelet
            state: restarted
            enabled: yes
        - service:
            name: kube-proxy
            state: restarted
            enabled: yes
    - name: service check
      hosts: master
      tasks:
        - shell: sleep 10
          async: 11
        - shell: kubectl get node
          register: out
        - debug: msg="{{out}}"

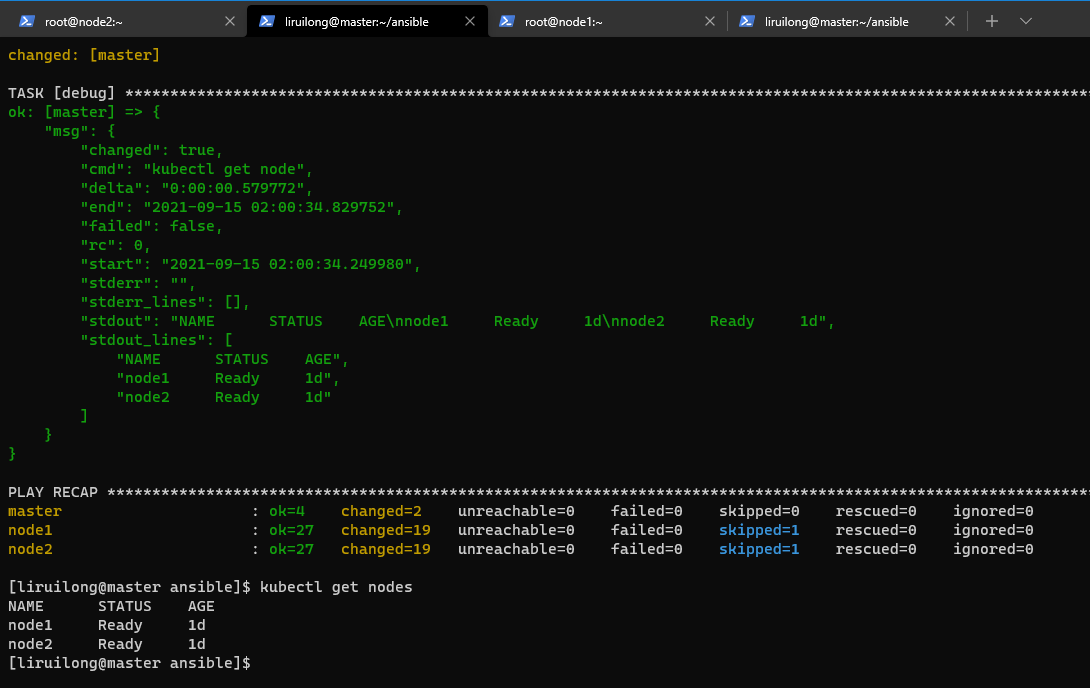

Run the playbook install_kube-node_playbook.yml:

[liruilong@master ansible]$ ansible-playbook install_kube-node_playbook.yml

........

...

TASK [debug] **************************************************************************************************************************************************************************************************

ok: [master] => {

"msg": {

"changed": true,

"cmd": "kubectl get node",

"delta": "0:00:00.579772",

"end": "2021-09-15 02:00:34.829752",

"failed": false,

"rc": 0,

"start": "2021-09-15 02:00:34.249980",

"stderr": "",

"stderr_lines": [],

"stdout": "NAME STATUS AGE\nnode1 Ready 1d\nnode2 Ready 1d",

"stdout_lines": [

"NAME STATUS AGE",

"node1 Ready 1d",

"node2 Ready 1d"

]

}

}

PLAY RECAP ****************************************************************************************************************************************************************************************************

master : ok=4 changed=2 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

node1 : ok=27 changed=19 unreachable=0 failed=0 skipped=1 rescued=0 ignored=0

node2 : ok=27 changed=19 unreachable=0 failed=0 skipped=1 rescued=0 ignored=0

[liruilong@master ansible]$
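The check play waits a fixed 10 seconds before calling `kubectl get node`. An alternative, shown here only as a sketch, is to poll until the expected number of nodes report `Ready`. `retry` and `ready_count` are hypothetical helpers written for this illustration; the parsing assumes the three-column output shown above.

```shell
#!/usr/bin/env bash
# Run a command up to <attempts> times, sleeping <delay> seconds between
# failed attempts. Returns 0 as soon as the command succeeds.
retry() {
  local attempts=$1 delay=$2 i=1
  shift 2
  while [ "$i" -le "$attempts" ]; do
    "$@" && return 0
    [ "$i" -lt "$attempts" ] && sleep "$delay"
    i=$((i + 1))
  done
  return 1
}

# Count the nodes whose STATUS column is exactly "Ready" in
# `kubectl get node` style output read on stdin.
ready_count() {
  awk 'NR > 1 && $2 == "Ready" { n++ } END { print n + 0 }'
}

# Sample text copied from the playbook output above.
printf 'NAME STATUS AGE\nnode1 Ready 1d\nnode2 Ready 1d\n' | ready_count

# On the master this could be combined as:
#   two_nodes_ready() { [ "$(kubectl get node | ready_count)" -eq 2 ]; }
#   retry 10 3 two_nodes_ready
```

Polling returns as soon as the nodes have registered and gives a clear failure if they never do, which a fixed sleep cannot.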

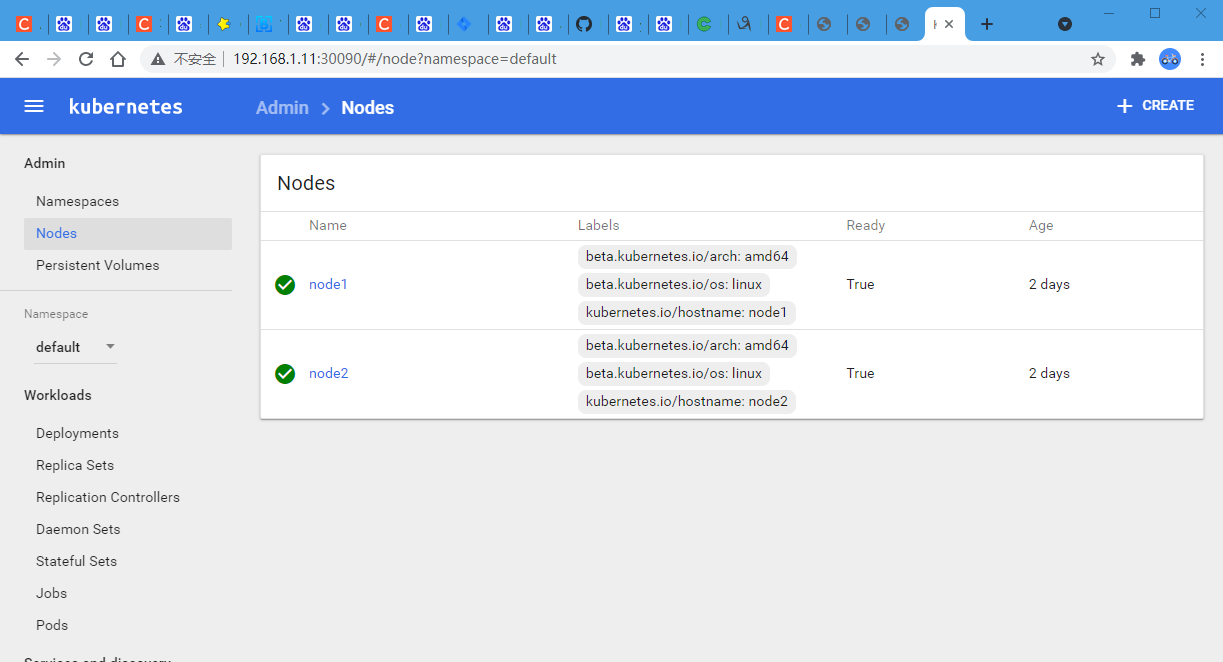

Installing the dashboard image: kubernetes-dashboard is the web management panel for Kubernetes. Its version, including the config file, must match the K8s version.

[liruilong@master ansible]$ ansible node1 -m shell -a 'docker search kubernetes-dashboard'

[liruilong@master ansible]$ ansible node1 -m shell -a 'docker pull docker.io/rainf/kubernetes-dashboard-amd64'

Edit the dashboard manifest kube-dashboard.yaml on kube-master:

    kind: Deployment
    apiVersion: extensions/v1beta1
    metadata:
      labels:
        app: kubernetes-dashboard
      name: kubernetes-dashboard
      namespace: kube-system
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: kubernetes-dashboard
      template:
        metadata:
          labels:
            app: kubernetes-dashboard
          # Comment the following annotation if Dashboard must not be deployed on master
          annotations:
            scheduler.alpha.kubernetes.io/tolerations: |
              [
                {
                  "key": "dedicated",
                  "operator": "Equal",
                  "value": "master",
                  "effect": "NoSchedule"
                }
              ]
        spec:
          containers:
          - name: kubernetes-dashboard
            image: docker.io/rainf/kubernetes-dashboard-amd64 # the default image is hosted by Google; changed here to one from the Docker registry
            imagePullPolicy: Always
            ports:
            - containerPort: 9090
              protocol: TCP
            args:
              # Uncomment the following line to manually specify Kubernetes API server Host
              # If not specified, Dashboard will attempt to auto discover the API server and connect
              # to it. Uncomment only if the default does not work.
              - --apiserver-host=http://192.168.1.10:8080 # note: this is the API server address
            livenessProbe:
              httpGet:
                path: /
                port: 9090
              initialDelaySeconds: 30
              timeoutSeconds: 30
    ---
    kind: Service
    apiVersion: v1
    metadata:
      labels:
        app: kubernetes-dashboard
      name: kubernetes-dashboard
      namespace: kube-system
    spec:
      type: NodePort
      ports:
      - port: 80
        targetPort: 9090
        nodePort: 30090
      selector:
        app: kubernetes-dashboard

Create the dashboard from the YAML file, on kube-master:

[liruilong@master ansible]$ vim kube-dashboard.yaml

[liruilong@master ansible]$ kubectl create -f kube-dashboard.yaml

deployment "kubernetes-dashboard" created

service "kubernetes-dashboard" created

[liruilong@master ansible]$ kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system kubernetes-dashboard-1953799730-jjdfj 1/1 Running 0 6s

[liruilong@master ansible]$

Check which node it landed on, then try to access it:

[liruilong@master ansible]$ ansible nodes -a "docker ps"

node2 | CHANGED | rc=0 >>

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

14433d421746 docker.io/rainf/kubernetes-dashboard-amd64 "/dashboard --port..." 10 minutes ago Up 10 minutes k8s_kubernetes-dashboard.c82dac6b_kubernetes-dashboard-1953799730-jjdfj_kube-system_ea2ec370-1594-11ec-bbb1-000c294efe34_9c65bb2a

afc4d4a56eab registry.access.redhat.com/rhel7/pod-infrastructure:latest "/usr/bin/pod" 10 minutes ago Up 10 minutes k8s_POD.28c50bab_kubernetes-dashboard-1953799730-jjdfj_kube-system_ea2ec370-1594-11ec-bbb1-000c294efe34_6851b7ee

node1 | CHANGED | rc=0 >>

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

[liruilong@master ansible]$

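It is running on node2, so it can be reached at http://192.168.1.11:30090/. Let's test it.

That completes the whole Linux+Docker+Ansible+K8S learning environment. This way of setting up k8s is somewhat dated, but it is fine for getting started; take it one step at a time. Next comes the fun part: learning K8S.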
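The port in that URL comes from the `nodePort: 30090` field of the Service. Kubernetes only accepts NodePort values inside a configured range, 30000-32767 by default, and a NodePort Service is normally exposed through kube-proxy on every node, so the address of any node should work, not only the one running the pod. The sketch below checks a port against the default range and builds the URL; `valid_nodeport` and `dashboard_url` are hypothetical helper names, and the range is an assumption that holds only if the API server's node port range has not been changed.

```shell
#!/usr/bin/env bash
# Succeed when the argument is an integer inside the default NodePort range.
valid_nodeport() {
  case $1 in
    ''|*[!0-9]*) return 1 ;;
  esac
  [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

# Build the dashboard URL for a node address and NodePort.
dashboard_url() {
  printf 'http://%s:%s/\n' "$1" "$2"
}

if valid_nodeport 30090; then
  dashboard_url 192.168.1.11 30090
fi
# A quick reachability check from the master could then be:
#   curl -sS -o /dev/null -w '%{http_code}\n' "$(dashboard_url 192.168.1.11 30090)"
```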
