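Contents

Abstract

Creating the Project

Importing the Required Libraries

Setting Global Parameters

Image Preprocessing

Loading the Data

Setting Up the Model

Setting Up Training and Validation

Testing

Full Code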

摘要

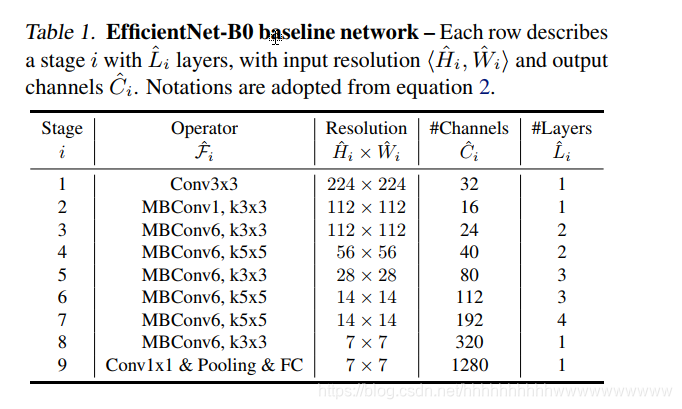

EfficientNet是谷歌2019年提出的分类模型,自从提出以后这个模型,各大竞赛平台常常能看到他的身影,成了霸榜的神器。下图是EfficientNet—B0模型的网络结构。

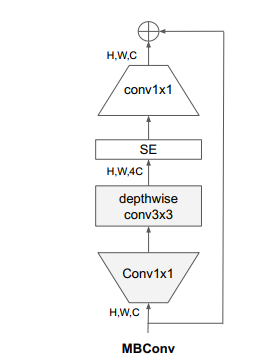

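As the network structure shows, the authors build the model from MBConv blocks, whose structure is shown in the figure below:

Here k is the kernel size. The input first passes through a 1×1 convolution that expands the number of channels (by a factor of 6 in the MBConv6 blocks; MBConv1 skips the expansion), then through a k×k depthwise convolution (3×3 or 5×5), then through an SE (squeeze-and-excitation) module, and finally through a 1×1 convolution that projects the channels down to the block's output width. When the stride is 1 and the output shape matches the input, the result is added to the block's input through a skip connection.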
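To make the block structure concrete, here is a minimal PyTorch sketch of an MBConv-style block. It is my own simplified illustration, not the code used inside efficientnet_pytorch: the class names `SqueezeExcite` and `MBConvSketch` are hypothetical, `expand_ratio` defaults to 6 (the value used by MBConv6 blocks), and drop-connect and TensorFlow-style "same" padding are left out.

```python
import torch
import torch.nn as nn


class SqueezeExcite(nn.Module):
    """Channel attention: global pool -> reduce -> expand -> sigmoid gate."""

    def __init__(self, channels: int, reduced: int):
        super().__init__()
        self.pool = nn.AdaptiveAvgPool2d(1)
        self.reduce = nn.Conv2d(channels, reduced, kernel_size=1)
        self.expand = nn.Conv2d(reduced, channels, kernel_size=1)
        self.act = nn.SiLU()

    def forward(self, x):
        scale = self.pool(x)
        scale = self.act(self.reduce(scale))
        scale = torch.sigmoid(self.expand(scale))
        return x * scale


class MBConvSketch(nn.Module):
    """Simplified MBConv: expand (1x1) -> depthwise (kxk) -> SE -> project (1x1)."""

    def __init__(self, in_ch, out_ch, kernel_size=3, stride=1,
                 expand_ratio=6, se_ratio=0.25):
        super().__init__()
        mid_ch = in_ch * expand_ratio
        # The skip connection is only valid when the shapes match.
        self.use_residual = stride == 1 and in_ch == out_ch
        layers = []
        if expand_ratio != 1:
            # 1x1 expansion convolution
            layers += [nn.Conv2d(in_ch, mid_ch, 1, bias=False),
                       nn.BatchNorm2d(mid_ch), nn.SiLU()]
        layers += [
            # depthwise convolution: groups == channels
            nn.Conv2d(mid_ch, mid_ch, kernel_size, stride=stride,
                      padding=kernel_size // 2, groups=mid_ch, bias=False),
            nn.BatchNorm2d(mid_ch),
            nn.SiLU(),
            SqueezeExcite(mid_ch, max(1, int(in_ch * se_ratio))),
            # 1x1 projection convolution, no activation afterwards
            nn.Conv2d(mid_ch, out_ch, 1, bias=False),
            nn.BatchNorm2d(out_ch),
        ]
        self.block = nn.Sequential(*layers)

    def forward(self, x):
        out = self.block(x)
        return x + out if self.use_residual else out


block = MBConvSketch(16, 16)
print(block(torch.randn(2, 16, 32, 32)).shape)
```

With equal input and output channels and stride 1 the block keeps the tensor shape and uses the skip connection; with `stride=2` it halves the spatial size and drops the skip.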

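This article covers the EfficientNet architecture only briefly and focuses on practice: below we use EfficientNet to classify cats and dogs. Because the loss function used here is CrossEntropyLoss, switching to a multi-class problem only requires changing the number of classes.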

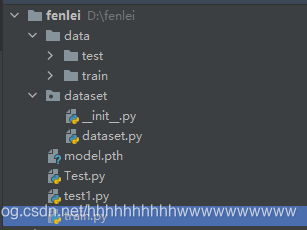

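Creating the Project

Create an image classification project. Put the dataset in data, and put the custom data-loading code in the dataset folder. This time I don't use the default loading method, since it is too simple to be interesting. Then create train.py and test.py.

Create train.py in the project root and write the training code in it.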

Importing the Required Libraries

First check whether the EfficientNet library is installed. If it is not, run pip install efficientnet_pytorch to install it, and import it afterwards.
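One way to do this check from Python is sketched below. The helper name `is_installed` is my own; it relies only on the standard library's `importlib.util.find_spec`, which locates a top-level module without importing it.

```python
import importlib.util


def is_installed(module_name: str) -> bool:
    """Return True if a top-level module can be located without importing it."""
    return importlib.util.find_spec(module_name) is not None


if not is_installed("efficientnet_pytorch"):
    print("efficientnet_pytorch not found; run: pip install efficientnet_pytorch")
```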

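Setting Global Parameters

Set the batch size, learning rate, and number of epochs, and check whether CUDA is available; if it is not, fall back to the CPU.

# Global parameters
modellr = 1e-4
BATCH_SIZE = 64
EPOCHS = 20
DEVICE = torch.device('cuda' if torch.cuda.is_available() else 'cpu')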

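Image Preprocessing

When preprocessing images, define the transform for the training set and the transform for the validation set separately. Besides resizing and normalization, the training transform can also apply augmentation such as rotation and random erasing, whereas the validation set needs no augmentation. Don't augment blindly, though: unsuitable augmentation can easily hurt performance and may even keep the loss from converging.

# Data preprocessing
transform = transforms.Compose([
    transforms.Resize((224, 224)),
    transforms.ToTensor(),
    transforms.Normalize([0.5, 0.5, 0.5], [0.5, 0.5, 0.5])
])
transform_test = transforms.Compose([
    transforms.Resize((224, 224)),
    transforms.ToTensor(),
    transforms.Normalize([0.5, 0.5, 0.5], [0.5, 0.5, 0.5])
])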

读取数据

数据集地址:链接:https://pan.baidu.com/s/1ZM8vDWEzgscJMnBrZfvQGw 提取码:48c3

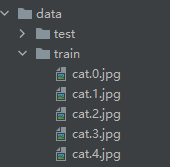

将其下载后解压放到data文件夹中。数据的目录如下图:

然后我们在dataset文件夹下面新建 __init__.py和dataset.py,在dataset.py文件夹写入下面的代码:
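As a rough idea of what such a class can look like, here is a minimal sketch. It is not the original dataset.py. It assumes that the images sit directly in one folder and are named like cat.0.jpg and dog.0.jpg, that the train flag selects between a 70/30 split of that folder, and that test mode returns the file name instead of a label; the `test`, `split`, and `seed` parameters are hypothetical. Only the call shape `DogCat(root, transforms=..., train=...)`, the `imgs` attribute, and the labels cat = 0, dog = 1 come from this article.

```python
import os
import random

from PIL import Image
from torch.utils import data


class DogCat(data.Dataset):
    """Sketch of a cat/dog dataset; labels are derived from file names."""

    def __init__(self, root, transforms=None, train=True, test=False,
                 split=0.7, seed=0):
        self.test = test
        self.transforms = transforms
        imgs = sorted(
            os.path.join(root, name)
            for name in os.listdir(root)
            if name.lower().endswith((".jpg", ".jpeg", ".png"))
        )
        if self.test:
            self.imgs = imgs
        else:
            # Shuffle with a fixed seed so the train and validation
            # instances see the same order and never overlap.
            random.Random(seed).shuffle(imgs)
            cut = int(split * len(imgs))
            self.imgs = imgs[:cut] if train else imgs[cut:]

    def __getitem__(self, index):
        path = self.imgs[index]
        image = Image.open(path).convert("RGB")
        if self.transforms is not None:
            image = self.transforms(image)
        if self.test:
            return image, os.path.basename(path)
        # cat -> 0, dog -> 1, matching the class order used in this article
        label = 1 if os.path.basename(path).lower().startswith("dog") else 0
        return image, label

    def __len__(self):
        return len(self.imgs)
```

The fixed-seed shuffle matters here: sorting alone would put every cat before every dog, so a plain index split would leave the validation part with dogs only.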

Then, in train.py, we call DogCat to load the data.

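Setting Up the Model

Use CrossEntropyLoss as the loss and EfficientNet-B3 as the model. Replace the final fully connected layer so that it outputs 2 classes, then move the model to DEVICE. Adam is used as the optimizer.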
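The sketch below shows, independently of EfficientNet, what the replaced head and CrossEntropyLoss expect: the head outputs one raw score (logit) per class, and the targets are integer class indices. `FEATURES` is a stand-in value for illustration; in the real model the width is read from `model_ft._fc.in_features`.

```python
import math

import torch
import torch.nn as nn

NUM_CLASSES = 2   # cat, dog; raise this for a multi-class problem
FEATURES = 1536   # stand-in feature width, for illustration only

head = nn.Linear(FEATURES, NUM_CLASSES)
criterion = nn.CrossEntropyLoss()

features = torch.randn(4, FEATURES)
logits = head(features)                # shape (4, NUM_CLASSES), raw scores
targets = torch.tensor([0, 1, 1, 0])   # integer class indices (int64)
loss = criterion(logits, targets)      # scalar tensor

# Sanity check: with all-zero logits every class is equally likely,
# so the loss equals ln(NUM_CLASSES).
uniform_loss = criterion(torch.zeros(4, NUM_CLASSES), targets)
print(loss.item(), uniform_loss.item(), math.log(NUM_CLASSES))
```

Since CrossEntropyLoss applies log-softmax internally, no softmax layer goes after the head. That is also why going multi-class only means changing `NUM_CLASSES`.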

设置训练和验证

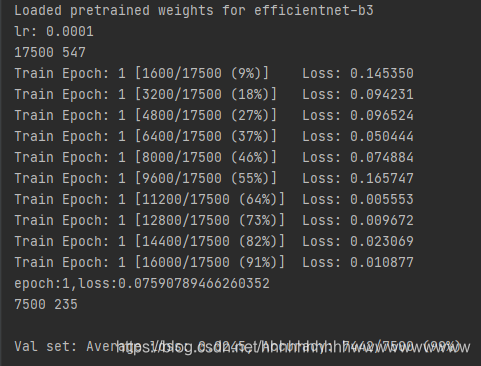

完成上面的代码后就可以开始训练,点击run开始训练,如下图:

由于我们使用了预训练模型,所以收敛速度很快。

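Testing

I'll introduce two common ways to test. The first is general-purpose: load the images manually and predict them one by one. The steps are as follows:

The test set is stored in the directory shown in the figure below:

Step 1: Define the classes. Their order must match the class order used during training, so never change it! During training, cat is class 0 and dog is class 1, so I define classes as (cat, dog).

Step 2: Define the transforms. Use the same transforms as for the validation set, with no data augmentation.

Step 3: Load the model and move it to DEVICE.

Step 4: Read each image and predict its class. Note that the image should be read with PIL's Image. Don't use cv2, because these transforms expect a PIL image rather than the NumPy array that cv2 returns.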

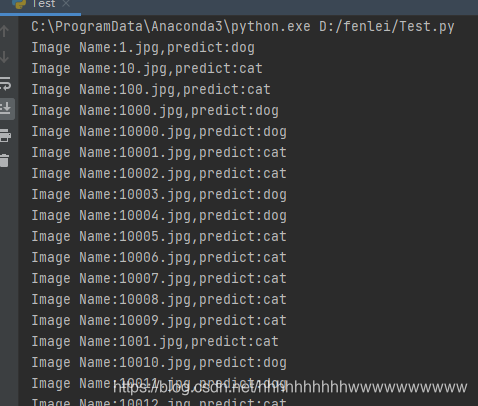

Result:

The second way loads the test set with the DogCat class from the dataset.py we defined earlier.
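A rough sketch of this second approach is shown below. It is not the original test2.py. It assumes a test-mode dataset that yields (image tensor, file name) pairs; so that the snippet is self-contained, a hypothetical `FakeTestSet` with random tensors and a tiny stand-in model take the place of the real dataset and the trained network.

```python
import torch
import torch.nn as nn
from torch.utils.data import DataLoader, Dataset


class FakeTestSet(Dataset):
    """Hypothetical stand-in that yields (image_tensor, file_name) pairs."""

    def __init__(self, n=5):
        self.names = [f"{i}.jpg" for i in range(n)]

    def __len__(self):
        return len(self.names)

    def __getitem__(self, index):
        return torch.randn(3, 32, 32), self.names[index]


def predict_loader(model, loader, device, classes=("cat", "dog")):
    """Predict every batch in the loader; return (file_name, class_name) pairs."""
    model.eval()
    results = []
    with torch.no_grad():
        for images, names in loader:
            preds = model(images.to(device)).argmax(dim=1).cpu().tolist()
            results.extend((name, classes[p]) for name, p in zip(names, preds))
    return results


device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
stand_in = nn.Sequential(nn.Flatten(), nn.Linear(3 * 32 * 32, 2)).to(device)
loader = DataLoader(FakeTestSet(), batch_size=2, shuffle=False)
results = predict_loader(stand_in, loader, device)
print(results)
```

Keeping `shuffle=False` preserves the file order, and batching makes this approach faster than predicting images one at a time.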

Full Code:

train.py

# pip install efficientnet_pytorch
import torch
import torch.nn as nn
import torch.optim as optim
import torch.utils.data
import torchvision.transforms as transforms
from efficientnet_pytorch import EfficientNet

from dataset.dataset import DogCat

# Global parameters
modellr = 1e-4
BATCH_SIZE = 32
EPOCHS = 10
DEVICE = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

# Data preprocessing
transform = transforms.Compose([
    transforms.Resize((224, 224)),
    transforms.ToTensor(),
    transforms.Normalize([0.5, 0.5, 0.5], [0.5, 0.5, 0.5])
])
transform_test = transforms.Compose([
    transforms.Resize((224, 224)),
    transforms.ToTensor(),
    transforms.Normalize([0.5, 0.5, 0.5], [0.5, 0.5, 0.5])
])

# Read the data
dataset_train = DogCat('data/train', transforms=transform, train=True)
dataset_test = DogCat('data/train', transforms=transform_test, train=False)
print(dataset_train.imgs)

# Wrap the datasets in data loaders
train_loader = torch.utils.data.DataLoader(dataset_train, batch_size=BATCH_SIZE, shuffle=True)
test_loader = torch.utils.data.DataLoader(dataset_test, batch_size=BATCH_SIZE, shuffle=False)

# Instantiate the model, replace the final layer, and move it to DEVICE
criterion = nn.CrossEntropyLoss()
model_ft = EfficientNet.from_pretrained('efficientnet-b3')
num_ftrs = model_ft._fc.in_features
model_ft._fc = nn.Linear(num_ftrs, 2)
model_ft.to(DEVICE)

# Use the Adam optimizer with a low learning rate
optimizer = optim.Adam(model_ft.parameters(), lr=modellr)


def adjust_learning_rate(optimizer, epoch):
    """Sets the learning rate to the initial LR decayed by 10 every 50 epochs"""
    modellrnew = modellr * (0.1 ** (epoch // 50))
    print("lr:", modellrnew)
    for param_group in optimizer.param_groups:
        param_group['lr'] = modellrnew


# Training loop for one epoch
def train(model, device, train_loader, optimizer, epoch):
    model.train()
    sum_loss = 0
    total_num = len(train_loader.dataset)
    print(total_num, len(train_loader))
    for batch_idx, (data, target) in enumerate(train_loader):
        data, target = data.to(device), target.to(device)
        output = model(data)
        loss = criterion(output, target)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        print_loss = loss.item()
        sum_loss += print_loss
        if (batch_idx + 1) % 50 == 0:
            print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(
                epoch, (batch_idx + 1) * len(data), len(train_loader.dataset),
                100. * (batch_idx + 1) / len(train_loader), loss.item()))
    ave_loss = sum_loss / len(train_loader)
    print('epoch:{},loss:{}'.format(epoch, ave_loss))


# Validation
def val(model, device, test_loader):
    model.eval()
    test_loss = 0
    correct = 0
    total_num = len(test_loader.dataset)
    print(total_num, len(test_loader))
    with torch.no_grad():
        for data, target in test_loader:
            data, target = data.to(device), target.to(device)
            output = model(data)
            loss = criterion(output, target)
            _, pred = torch.max(output, 1)
            # Accumulate as a Python int so no tensor conversion is needed later
            correct += torch.sum(pred == target).item()
            test_loss += loss.item()
    acc = correct / total_num
    avgloss = test_loss / len(test_loader)
    print('\nVal set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(
        avgloss, correct, len(test_loader.dataset), 100 * acc))


# Train
for epoch in range(1, EPOCHS + 1):
    adjust_learning_rate(optimizer, epoch)
    train(model_ft, DEVICE, train_loader, optimizer, epoch)
    val(model_ft, DEVICE, test_loader)

# Save the whole model object after training
torch.save(model_ft, 'model.pth')
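A note on the learning-rate helper in train.py: it multiplies the base rate by 0.1 for every 50 completed epochs. The pure-Python sketch below reproduces that formula so it can be inspected without running training. The function name `step_decay_lr` and its `step` and `gamma` parameters are my own naming.

```python
def step_decay_lr(base_lr: float, epoch: int, step: int = 50, gamma: float = 0.1) -> float:
    """Learning rate after step decay: base_lr * gamma ** (epoch // step)."""
    return base_lr * (gamma ** (epoch // step))


# With the EPOCHS = 10 used in train.py, the rate stays at its initial value.
for epoch in (1, 10, 49, 50, 100):
    print(epoch, step_decay_lr(1e-4, epoch))
```

With only 10 epochs the decay never triggers, so in this setup the schedule matters only if EPOCHS is raised to 50 or more. PyTorch's built-in `torch.optim.lr_scheduler.StepLR` offers the same kind of step decay.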

test1.py

test2.py

