强化学习笔记5-Python/OpenAI/TensorFlow/ROS-阶段复习

【摘要】 到目前为止,已经完成了4节课程的学习,侧重OpenAI,分别如下:

基础知识:https://blog.csdn.net/zhangrelay/article/details/91361113程序指令:https://blog.csdn.net/zhangrelay/article/details/91414600规划博弈:https://blog.csdn.net/zha...

到目前为止,已经完成了4节课程的学习,侧重OpenAI,分别如下:

- 基础知识:https://blog.csdn.net/zhangrelay/article/details/91361113

- 程序指令:https://blog.csdn.net/zhangrelay/article/details/91414600

- 规划博弈:https://blog.csdn.net/zhangrelay/article/details/91867331

- 时间差分:https://blog.csdn.net/zhangrelay/article/details/92012795

这时候,再重新看之前博文,侧重ROS,分别如下:

- 安装配置:https://blog.csdn.net/zhangrelay/article/details/89702997

- 环境构建:https://blog.csdn.net/zhangrelay/article/details/89817010

- 深度学习:https://blog.csdn.net/zhangrelay/article/details/90177162

通过上面一系列探索学习,就能够完全掌握人工智能学工具(OpenAI)和机器人学工具(ROS)。

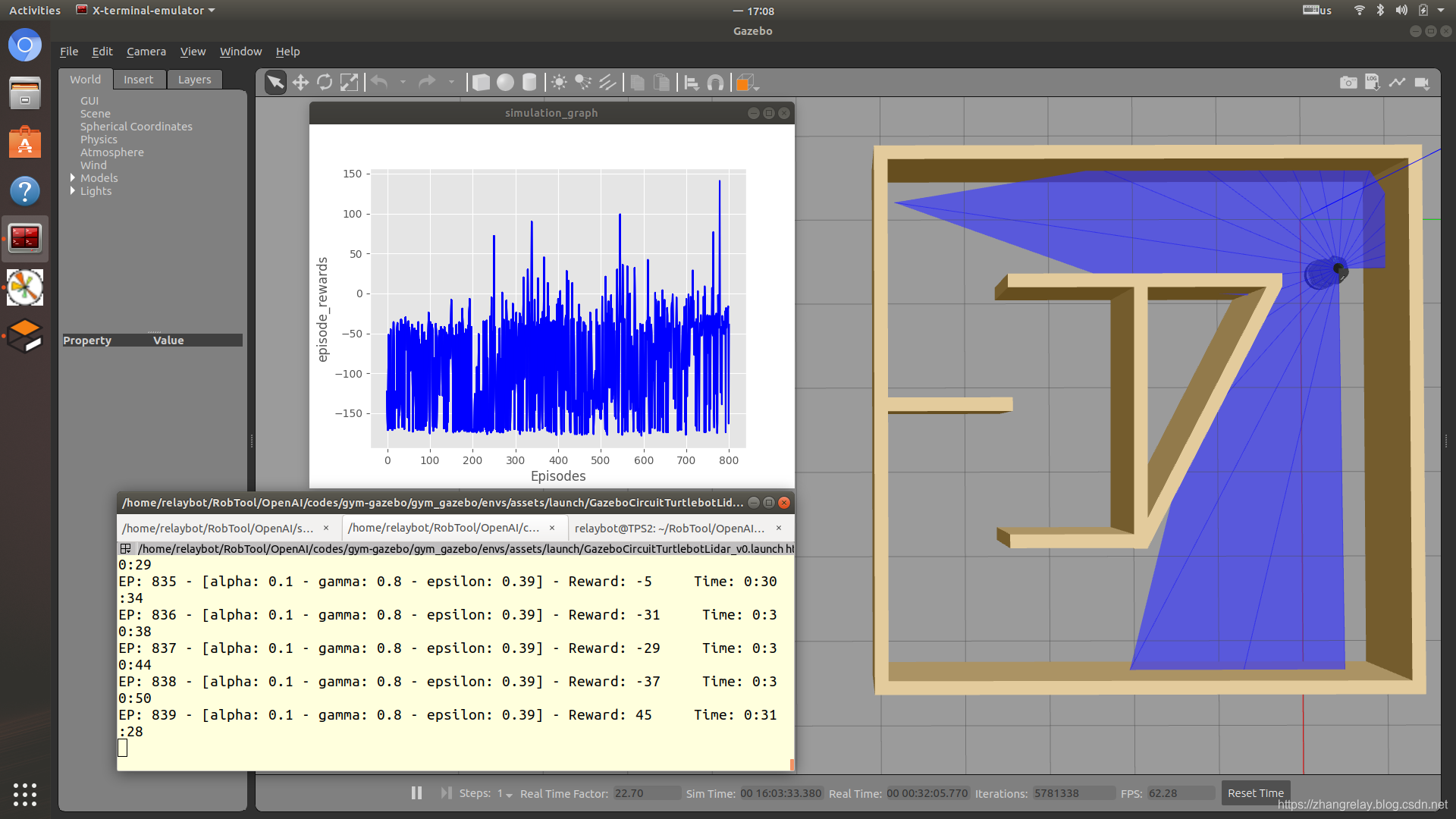

理解如下环境中,Q学习和SARSA差异:

Q学习-circuit2_turtlebot_lidar_qlearn.py:

-

#!/usr/bin/env python

-

import gym

-

from gym import wrappers

-

import gym_gazebo

-

import time

-

import numpy

-

import random

-

import time

-

-

import qlearn

-

import liveplot

-

-

def render():

-

render_skip = 0 #Skip first X episodes.

-

render_interval = 50 #Show render Every Y episodes.

-

render_episodes = 10 #Show Z episodes every rendering.

-

-

if (x%render_interval == 0) and (x != 0) and (x > render_skip):

-

env.render()

-

elif ((x-render_episodes)%render_interval == 0) and (x != 0) and (x > render_skip) and (render_episodes < x):

-

env.render(close=True)

-

-

if __name__ == '__main__':

-

-

env = gym.make('GazeboCircuit2TurtlebotLidar-v0')

-

-

outdir = '/tmp/gazebo_gym_experiments'

-

env = gym.wrappers.Monitor(env, outdir, force=True)

-

plotter = liveplot.LivePlot(outdir)

-

-

last_time_steps = numpy.ndarray(0)

-

-

qlearn = qlearn.QLearn(actions=range(env.action_space.n),

-

alpha=0.2, gamma=0.8, epsilon=0.9)

-

-

initial_epsilon = qlearn.epsilon

-

-

epsilon_discount = 0.9986

-

-

start_time = time.time()

-

total_episodes = 10000

-

highest_reward = 0

-

-

for x in range(total_episodes):

-

done = False

-

-

cumulated_reward = 0 #Should going forward give more reward then L/R ?

-

-

observation = env.reset()

-

-

if qlearn.epsilon > 0.05:

-

qlearn.epsilon *= epsilon_discount

-

-

#render() #defined above, not env.render()

-

-

state = ''.join(map(str, observation))

-

-

for i in range(1500):

-

-

# Pick an action based on the current state

-

action = qlearn.chooseAction(state)

-

-

# Execute the action and get feedback

-

observation, reward, done, info = env.step(action)

-

cumulated_reward += reward

-

-

if highest_reward < cumulated_reward:

-

highest_reward = cumulated_reward

-

-

nextState = ''.join(map(str, observation))

-

-

qlearn.learn(state, action, reward, nextState)

-

-

env._flush(force=True)

-

-

if not(done):

-

state = nextState

-

else:

-

last_time_steps = numpy.append(last_time_steps, [int(i + 1)])

-

break

-

-

if x%100==0:

-

plotter.plot(env)

-

-

m, s = divmod(int(time.time() - start_time), 60)

-

h, m = divmod(m, 60)

-

print ("EP: "+str(x+1)+" - [alpha: "+str(round(qlearn.alpha,2))+" - gamma: "+str(round(qlearn.gamma,2))+" - epsilon: "+str(round(qlearn.epsilon,2))+"] - Reward: "+str(cumulated_reward)+" Time: %d:%02d:%02d" % (h, m, s))

-

-

#Github table content

-

print ("\n|"+str(total_episodes)+"|"+str(qlearn.alpha)+"|"+str(qlearn.gamma)+"|"+str(initial_epsilon)+"*"+str(epsilon_discount)+"|"+str(highest_reward)+"| PICTURE |")

-

-

l = last_time_steps.tolist()

-

l.sort()

-

-

#print("Parameters: a="+str)

-

print("Overall score: {:0.2f}".format(last_time_steps.mean()))

-

print("Best 100 score: {:0.2f}".format(reduce(lambda x, y: x + y, l[-100:]) / len(l[-100:])))

-

-

env.close()

SARSA-circuit2_turtlebot_lidar_sarsa.py:

-

#!/usr/bin/env python

-

import gym

-

from gym import wrappers

-

import gym_gazebo

-

import time

-

import numpy

-

import random

-

import time

-

-

import liveplot

-

import sarsa

-

-

-

if __name__ == '__main__':

-

-

env = gym.make('GazeboCircuit2TurtlebotLidar-v0')

-

-

outdir = '/tmp/gazebo_gym_experiments'

-

env = gym.wrappers.Monitor(env, outdir, force=True)

-

plotter = liveplot.LivePlot(outdir)

-

-

last_time_steps = numpy.ndarray(0)

-

-

sarsa = sarsa.Sarsa(actions=range(env.action_space.n),

-

epsilon=0.9, alpha=0.2, gamma=0.9)

-

-

initial_epsilon = sarsa.epsilon

-

-

epsilon_discount = 0.9986

-

-

start_time = time.time()

-

total_episodes = 10000

-

highest_reward = 0

-

-

for x in range(total_episodes):

-

done = False

-

-

cumulated_reward = 0 #Should going forward give more reward then L/R ?

-

-

observation = env.reset()

-

-

if sarsa.epsilon > 0.05:

-

sarsa.epsilon *= epsilon_discount

-

-

#render() #defined above, not env.render()

-

-

state = ''.join(map(str, observation))

-

-

for i in range(1500):

-

-

# Pick an action based on the current state

-

action = sarsa.chooseAction(state)

-

-

# Execute the action and get feedback

-

observation, reward, done, info = env.step(action)

-

cumulated_reward += reward

-

-

if highest_reward < cumulated_reward:

-

highest_reward = cumulated_reward

-

-

nextState = ''.join(map(str, observation))

-

nextAction = sarsa.chooseAction(nextState)

-

-

#sarsa.learn(state, action, reward, nextState)

-

sarsa.learn(state, action, reward, nextState, nextAction)

-

-

env._flush(force=True)

-

-

if not(done):

-

state = nextState

-

else:

-

last_time_steps = numpy.append(last_time_steps, [int(i + 1)])

-

break

-

-

if x%100==0:

-

plotter.plot(env)

-

-

m, s = divmod(int(time.time() - start_time), 60)

-

h, m = divmod(m, 60)

-

print ("EP: "+str(x+1)+" - [alpha: "+str(round(sarsa.alpha,2))+" - gamma: "+str(round(sarsa.gamma,2))+" - epsilon: "+str(round(sarsa.epsilon,2))+"] - Reward: "+str(cumulated_reward)+" Time: %d:%02d:%02d" % (h, m, s))

-

-

#Github table content

-

print ("\n|"+str(total_episodes)+"|"+str(sarsa.alpha)+"|"+str(sarsa.gamma)+"|"+str(initial_epsilon)+"*"+str(epsilon_discount)+"|"+str(highest_reward)+"| PICTURE |")

-

-

l = last_time_steps.tolist()

-

l.sort()

-

-

#print("Parameters: a="+str)

-

print("Overall score: {:0.2f}".format(last_time_steps.mean()))

-

print("Best 100 score: {:0.2f}".format(reduce(lambda x, y: x + y, l[-100:]) / len(l[-100:])))

-

-

env.close()

复习:时间差分https://blog.csdn.net/zhangrelay/article/details/92012795

其中案例出租车demo与上面turtlebot-demo,理解并掌握ROS和OpenAI这两大工具最基本的应用。

文章来源: zhangrelay.blog.csdn.net,作者:zhangrelay,版权归原作者所有,如需转载,请联系作者。

原文链接:zhangrelay.blog.csdn.net/article/details/92050001

【版权声明】本文为华为云社区用户转载文章,如果您发现本社区中有涉嫌抄袭的内容,欢迎发送邮件进行举报,并提供相关证据,一经查实,本社区将立刻删除涉嫌侵权内容,举报邮箱:

cloudbbs@huaweicloud.com

- 点赞

- 收藏

- 关注作者

评论(0)