refineFace 笔记

原文地址:

RetinaFace: Single-stage Dense Face Localisation in the Wildarxiv.org

源码地址:

https://github.com/deepinsight/insightface/tree/master/RetinaFace

参考代码

https://github.com/biubug6/Pytorch_Retinaface

RefineFace是基于人脸检测器RetinaNet改进的,论文的主要贡献有以下几点:

- 设计了STR模块,在high level层粗略地调整anchors的定位和尺寸,为接下来的回归提供更好的初始值。

- 设计了STC模块,过滤掉low level层大部分简单的负样本,以减少分类器的搜索空间。

- 引入了SML模块,更好的从背景中区分不同尺度的人脸。

- 提出了FSM模块,使分类任务学习到更有区分性的特征。

- 构造了RFE模块,为检测极端姿态下的人脸提供不同的感受野。

- 在AFW、PASCAL face、FDDB、MAFA和WIDER FACE数据集上取得state-of-the-art的性能表现。

RefineFace采用的有6-level的特征金字塔结构的ResNet作为backbone,从这四个residual blocks提取的特征图即是上图中的C2,C3,C4,C5,而C6和C7则是直接从两个接在C5之后下采样的3 x 3卷积层提取。P2,P3,P4,P5特征图是从C2,C3,C4,C5引出的旁路分支中提取,P6,P7从P5之后接两个下采样的3 x 3卷积层提取的。

- STR:由C5,C6,C7,P5,P6和P7进行two-step的回归。

- STC:由C2,C3,C4,P2,P3和P4进行two-step的分类。

- SML:分类损失添加了scale-aware margin来更好的区分背景中不同尺度的人脸。

- FSM:包含一个RoIAlign层,4个3 x 3的卷积层和一个全局平均池化层,采用focal loss,以使backbone分类任务学习到更有区分性的特征。

- RFE:丰富了用于预测分类和定位目标特征的感受野。

1.论文框架

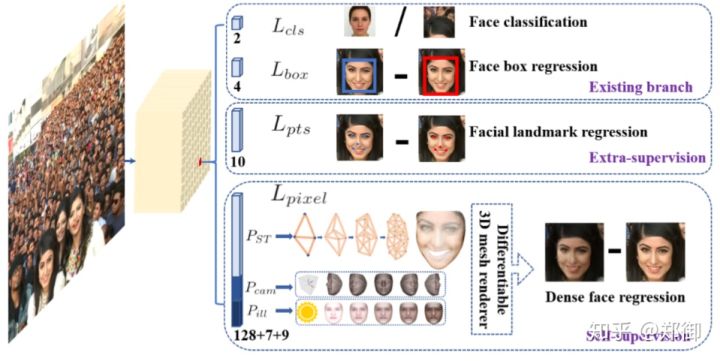

思路如下:大部分人脸检测重点关注人脸分类和人脸框定位回归分支这两部分,retinaface单级逐像素人脸定位方法加入了face landmark 回归(外监督)以及3d相关的dense face regression(自监督)的多任务学习。这样每个positive anchor输出:人脸得分,人脸框,5个人脸关键点,投射的图像平面上的3D人脸顶点

2.相关工作

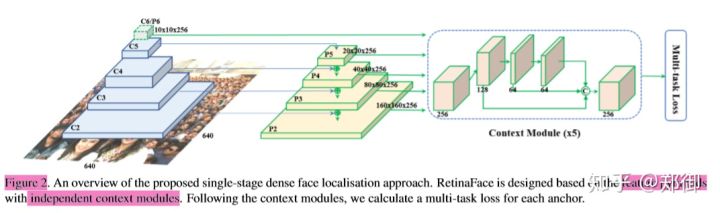

RetinaFace主要有四个特点:结构特点有FPN、单阶段、上下文建模、多任务学习

a.FPN

类似于retinanet,作者采用 FPN 中的 Feature Pyramid 结构,并以 ResNet-152 作为 Backbone,其中, C2/C3/C4/C5 为 ResNet 中各个 Residual Block 所生成的 Feature Map,而 C6 由 C5 经过 3*3 的卷积层生成(步长为 2)

-

def get_sym_by_name(name, sym_buffer):

-

if name in sym_buffer:

-

return sym_buffer[name]

-

ret = None

-

name_key = name[0:1]

-

name_num = int(name[1:])

-

#print('getting', name, name_key, name_num)

-

if name_key=='C':

-

assert name_num%2==0

-

bottom = get_sym_by_name('C%d'%(name_num//2), sym_buffer)

-

ret = conv_act_layer(bottom, '%s_C%d'(PREFIX, name_num),

-

F1, kernel=(3, 3), pad=(1, 1), stride=(2, 2), act_type='relu', bias_wd_mult=_bwm)

-

elif name_key=='P':

-

assert name_num%2==0

-

assert name_num<=max(config.RPN_FEAT_STRIDE)

-

lateral = get_sym_by_name('L%d'%(name_num), sym_buffer)

-

if name_num==max(config.RPN_FEAT_STRIDE) or name_num>32:

-

ret = mx.sym.identity(lateral, name='%s_P%d'%(PREFIX, name_num))

-

else:

-

bottom = get_sym_by_name('L%d'%(name_num*2), sym_buffer)

-

bottom_up = upsampling(bottom, F1, '%s_U%d'%(PREFIX, name_num))

-

if config.USE_CROP:

-

bottom_up = mx.symbol.Crop(*[bottom_up, lateral])

-

aggr = lateral + bottom_up

-

aggr = conv_act_layer(aggr, '%s_A%d'%(PREFIX, name_num),

-

F1, kernel=(3, 3), pad=(1, 1), stride=(1, 1), act_type='relu', bias_wd_mult=_bwm)

-

ret = mx.sym.identity(aggr, name='%s_P%d'%(PREFIX, name_num))

-

elif name_key=='L':

-

c = get_sym_by_name('C%d'%(name_num), sym_buffer)

-

#print('L', name, F1)

-

ret = conv_act_layer(c, '%s_L%d'%(PREFIX, name_num),

-

F1, kernel=(1, 1), pad=(0, 0), stride=(1, 1), act_type='relu', bias_wd_mult=_bwm)

-

else:

-

raise RuntimeError('%s is not a valid sym key name'%name)

-

sym_buffer[name] = ret

-

return ret

-

for stride in [4,8,16,32]:

-

sym_buffer['C%d'%stride] = stride2layer[stride]

-

if not config.USE_FPN:

-

for stride in config.RPN_FEAT_STRIDE:

-

name = 'L%d'%stride

-

ret[stride] = get_sym_by_name(name, sym_buffer)

-

else:

-

for stride in config.RPN_FEAT_STRIDE:

-

name = 'P%d'%stride

-

ret[stride] = get_sym_by_name(name, sym_buffer)

在mxnet框架中可能不太明确,下面参考pytorch代码更清晰了解FPN,但是pytorch代码尚未完善,只使用3层FPN。

-

class FPN(nn.Module):

-

def __init__(self,in_channels_list,out_channels):

-

super(FPN,self).__init__()

-

leaky = 0

-

if (out_channels <= 64):

-

leaky = 0.1

-

self.output1 = conv_bn1X1(in_channels_list[0], out_channels, stride = 1, leaky = leaky)

-

self.output2 = conv_bn1X1(in_channels_list[1], out_channels, stride = 1, leaky = leaky)

-

self.output3 = conv_bn1X1(in_channels_list[2], out_channels, stride = 1, leaky = leaky)

-

-

self.merge1 = conv_bn(out_channels, out_channels, leaky = leaky)

-

self.merge2 = conv_bn(out_channels, out_channels, leaky = leaky)

-

-

def forward(self, input):

-

# names = list(input.keys())

-

input = list(input.values())

-

-

output1 = self.output1(input[0])

-

output2 = self.output2(input[1])

-

output3 = self.output3(input[2])

-

-

up3 = F.interpolate(output3, size=[output2.size(2), output2.size(3)], mode="nearest")

-

output2 = output2 + up3

-

output2 = self.merge2(output2)

-

-

up2 = F.interpolate(output2, size=[output1.size(2), output1.size(3)], mode="nearest")

-

output1 = output1 + up2

-

output1 = self.merge1(output1)

-

-

out = [output1, output2, output3]

-

return out

b.单阶段,快捷高效,用mobile-net时在arm上可以实时

当前人脸检测方法继承了一些通用检测方法的成果,主要分为两类:两阶方法如Faster RCNN和单阶方法如SSD和RetinaNet。两阶方法应用一个“proposal and refinement”机制提取高精度定位。而单阶方法密集采样人脸位置和尺度,导致训练过程中极度不平衡的正样本和负样本。为了处理这种不平衡,采样和re-weighting方法被广泛使用。相比两阶方法,单阶方法更高效并且有更高的召回率,但是有获取更高误报率的风险,影响定位精度

c.上下文:为了增强模型对小人脸的上下文推理能力

为了增强模型对小人脸的上下文推理能力,SSH和PyramidBox在特征金字塔上采用了上下文模块来增强欧几里得网格中获取的感受野。为了增强CNN的非严格变换模拟能力,形变卷积网络(DCN)利用一个新的形变层来模拟几何形变。WIDER Face挑战2018的冠军方案说明对于人脸检测来说,严格(扩大)和非严格(变形)上下文建模是互补并且正交

SSH就是把输入送入三个分支,第一个分支是一个3的卷积,第二个分支是kernel为3(感受野为5),第三个分支是感受野为7(连续三个3*3卷积),然后三个分支concat到一块,送入relu输出。

-

def ssh_context_module(body, num_filter, filter_in, name):

-

conv_dimred = conv_act_layer(body, name+'_conv1',

-

num_filter, kernel=(3, 3), pad=(1, 1), stride=(1, 1), act_type='relu', separable=False, filter_in = filter_in)

-

conv5x5 = conv_act_layer(conv_dimred, name+'_conv2',

-

num_filter, kernel=(3, 3), pad=(1, 1), stride=(1, 1), act_type='', separable=False)

-

conv7x7_1 = conv_act_layer(conv_dimred, name+'_conv3_1',

-

num_filter, kernel=(3, 3), pad=(1, 1), stride=(1, 1), act_type='relu', separable=False)

-

conv7x7 = conv_act_layer(conv7x7_1, name+'_conv3_2',

-

num_filter, kernel=(3, 3), pad=(1, 1), stride=(1, 1), act_type='', separable=False)

-

return (conv5x5, conv7x7)

-

-

-

def ssh_detection_module(body, num_filter, filter_in, name):

-

assert num_filter%4==0

-

conv3x3 = conv_act_layer(body, name+'_conv1',

-

num_filter//2, kernel=(3, 3), pad=(1, 1), stride=(1, 1), act_type='', separable=False, filter_in=filter_in)

-

#_filter = max(num_filter//4, 16)

-

_filter = num_filter//4

-

conv5x5, conv7x7 = ssh_context_module(body, _filter, filter_in, name+'_context')

-

ret = mx.sym.concat(*[conv3x3, conv5x5, conv7x7], dim=1, name = name+'_concat')

-

ret = mx.symbol.Activation(data=ret, act_type='relu', name=name+'_concat_relu')

-

out_filter = num_filter//2+_filter*2

-

if config.USE_DCN>0:

-

ret = conv_deformable(ret, num_filter = out_filter, name = name+'_concat_dcn')

-

return ret

d.多任务学习:额外监督信息

联合人脸检测和对齐被广泛应用以提供更适用于提取人脸特征的人脸形状。在Mask R-CNN中,检测性能通过增加一个预测目标掩模的并行分支,检测性能得到显著提升。Densepose利用Mask-RCNN的架构,来获取每个选择区域的密集标签和位置。尽管如此,密集回归标签是通过监督学习训练的。此外,dense分支是一个很小的FCN,应用于每一个RoI上来预测像素到像素的密集映射。

以下为多任务学习损失计算:loc和landmark都是smoothl1 loss,confidence是cross entropy loss。如果不关注那个3D的mesh coder,这篇文章就是引入了landmark的一个多任务学习

-

def get_out(conv_fpn_feat, prefix, stride, landmark=False, lr_mult=1.0, gt_boxes=None):

-

A = config.NUM_ANCHORS

-

bbox_pred_len = 4

-

landmark_pred_len = 10

-

if config.USE_BLUR:

-

bbox_pred_len = 5

-

if config.USE_OCCLUSION:

-

landmark_pred_len = 15

-

ret_group = []

-

num_anchors = config.RPN_ANCHOR_CFG[str(stride)]['NUM_ANCHORS']

-

cls_label = mx.symbol.Variable(name='%s_label_stride%d'%(prefix,stride))

-

bbox_target = mx.symbol.Variable(name='%s_bbox_target_stride%d'%(prefix,stride))

-

bbox_weight = mx.symbol.Variable(name='%s_bbox_weight_stride%d'%(prefix,stride))

-

if landmark:

-

landmark_target = mx.symbol.Variable(name='%s_landmark_target_stride%d'%(prefix,stride))

-

landmark_weight = mx.symbol.Variable(name='%s_landmark_weight_stride%d'%(prefix,stride))

-

conv_feat = conv_fpn_feat[stride]

-

rpn_relu = head_module(conv_feat, F2*config.CONTEXT_FILTER_RATIO, F1, 'rf_head_stride%d'%stride)

-

-

rpn_cls_score = conv_only(rpn_relu, '%s_rpn_cls_score_stride%d'%(prefix, stride), 2*num_anchors,

-

kernel=(1,1), pad=(0,0), stride=(1, 1))

-

-

rpn_bbox_pred = conv_only(rpn_relu, '%s_rpn_bbox_pred_stride%d'%(prefix,stride), bbox_pred_len*num_anchors,

-

kernel=(1,1), pad=(0,0), stride=(1, 1))

-

-

# prepare rpn data

-

rpn_cls_score_reshape = mx.symbol.Reshape(data=rpn_cls_score,

-

shape=(0, 2, -1),

-

name="%s_rpn_cls_score_reshape_stride%s" % (prefix,stride))

-

-

rpn_bbox_pred_reshape = mx.symbol.Reshape(data=rpn_bbox_pred,

-

shape=(0, 0, -1),

-

name="%s_rpn_bbox_pred_reshape_stride%s" % (prefix,stride))

-

if landmark:

-

rpn_landmark_pred = conv_only(rpn_relu, '%s_rpn_landmark_pred_stride%d'%(prefix,stride), landmark_pred_len*num_anchors,

-

kernel=(1,1), pad=(0,0), stride=(1, 1))

-

rpn_landmark_pred_reshape = mx.symbol.Reshape(data=rpn_landmark_pred,

-

shape=(0, 0, -1),

-

name="%s_rpn_landmark_pred_reshape_stride%s" % (prefix,stride))

-

-

if config.TRAIN.RPN_ENABLE_OHEM>=2:

-

label, anchor_weight, pos_count = mx.sym.Custom(op_type='rpn_fpn_ohem3', stride=int(stride), network=config.network, dataset=config.dataset, prefix=prefix, cls_score=rpn_cls_score_reshape, labels = cls_label)

-

-

_bbox_weight = mx.sym.tile(anchor_weight, (1,1,bbox_pred_len))

-

_bbox_weight = _bbox_weight.reshape((0, -1, A * bbox_pred_len)).transpose((0,2,1))

-

bbox_weight = mx.sym.elemwise_mul(bbox_weight, _bbox_weight, name='%s_bbox_weight_mul_stride%s'%(prefix,stride))

-

-

if landmark:

-

_landmark_weight = mx.sym.tile(anchor_weight, (1,1,landmark_pred_len))

-

_landmark_weight = _landmark_weight.reshape((0, -1, A * landmark_pred_len)).transpose((0,2,1))

-

landmark_weight = mx.sym.elemwise_mul(landmark_weight, _landmark_weight, name='%s_landmark_weight_mul_stride%s'%(prefix,stride))

-

else:

-

label = cls_label

-

#if not config.FACE_LANDMARK:

-

# label, bbox_weight = mx.sym.Custom(op_type='rpn_fpn_ohem', stride=int(stride), cls_score=rpn_cls_score_reshape, bbox_weight = bbox_weight , labels = label)

-

#else:

-

# label, bbox_weight, landmark_weight = mx.sym.Custom(op_type='rpn_fpn_ohem2', stride=int(stride), cls_score=rpn_cls_score_reshape, bbox_weight = bbox_weight, landmark_weight=landmark_weight, labels = label)

-

#cls loss

-

rpn_cls_prob = mx.symbol.SoftmaxOutput(data=rpn_cls_score_reshape,

-

label=label,

-

multi_output=True,

-

normalization='valid', use_ignore=True, ignore_label=-1,

-

grad_scale = lr_mult,

-

name='%s_rpn_cls_prob_stride%d'%(prefix,stride))

-

ret_group.append(rpn_cls_prob)

-

ret_group.append(mx.sym.BlockGrad(label))

-

-

pos_count = mx.symbol.sum(pos_count)

-

pos_count = pos_count + 0.001 #avoid zero

-

-

#bbox loss

-

bbox_diff = rpn_bbox_pred_reshape-bbox_target

-

bbox_diff = bbox_diff * bbox_weight

-

rpn_bbox_loss_ = mx.symbol.smooth_l1(name='%s_rpn_bbox_loss_stride%d_'%(prefix,stride), scalar=3.0, data=bbox_diff)

-

bbox_lr_mode0 = 0.25*lr_mult*config.TRAIN.BATCH_IMAGES / config.TRAIN.RPN_BATCH_SIZE

-

landmark_lr_mode0 = 0.4*config.LANDMARK_LR_MULT*bbox_lr_mode0

-

if config.LR_MODE==0:

-

rpn_bbox_loss = mx.sym.MakeLoss(name='%s_rpn_bbox_loss_stride%d'%(prefix,stride), data=rpn_bbox_loss_, grad_scale=bbox_lr_mode0)

-

else:

-

rpn_bbox_loss_ = mx.symbol.broadcast_div(rpn_bbox_loss_, pos_count)

-

rpn_bbox_loss = mx.sym.MakeLoss(name='%s_rpn_bbox_loss_stride%d'%(prefix,stride), data=rpn_bbox_loss_, grad_scale=0.5*lr_mult)

-

ret_group.append(rpn_bbox_loss)

-

ret_group.append(mx.sym.BlockGrad(bbox_weight))

-

-

#landmark loss

-

if landmark:

-

landmark_diff = rpn_landmark_pred_reshape-landmark_target

-

landmark_diff = landmark_diff * landmark_weight

-

rpn_landmark_loss_ = mx.symbol.smooth_l1(name='%s_rpn_landmark_loss_stride%d_'%(prefix,stride), scalar=3.0, data=landmark_diff)

-

if config.LR_MODE==0:

-

rpn_landmark_loss = mx.sym.MakeLoss(name='%s_rpn_landmark_loss_stride%d'%(prefix,stride), data=rpn_landmark_loss_, grad_scale=landmark_lr_mode0)

-

else:

-

rpn_landmark_loss_ = mx.symbol.broadcast_div(rpn_landmark_loss_, pos_count)

-

rpn_landmark_loss = mx.sym.MakeLoss(name='%s_rpn_landmark_loss_stride%d'%(prefix,stride), data=rpn_landmark_loss_, grad_scale=0.2*config.LANDMARK_LR_MULT*lr_mult)

-

ret_group.append(rpn_landmark_loss)

-

ret_group.append(mx.sym.BlockGrad(landmark_weight))

-

if config.USE_3D:

-

from rcnn.PY_OP import rpn_3d_mesh

-

pass

-

if config.CASCADE>0:

-

if config.CASCADE_MODE==0:

-

body = rpn_relu

-

elif config.CASCADE_MODE==1:

-

body = head_module(conv_feat, F2*config.CONTEXT_FILTER_RATIO, F1, '%s_head_stride%d_cas'%(PREFIX, stride))

-

elif config.CASCADE_MODE==2:

-

body = conv_feat + rpn_relu

-

body = head_module(body, F2*config.CONTEXT_FILTER_RATIO, F1, '%s_head_stride%d_cas'%(PREFIX,stride))

-

else:

-

body = head_module(conv_feat, F2*config.CONTEXT_FILTER_RATIO, F1, '%s_head_stride%d_cas'%(PREFIX, stride))

-

body = mx.sym.concat(body, rpn_cls_score, rpn_bbox_pred, rpn_landmark_pred, dim=1)

-

-

#cls_pred = rpn_cls_prob

-

cls_pred_t0 = rpn_cls_score_reshape

-

cls_label_raw = cls_label

-

cls_label_t0 = label

-

bbox_pred_t0 = rpn_bbox_pred_reshape

-

#bbox_pred = rpn_bbox_pred

-

#bbox_pred = mx.sym.transpose(bbox_pred, (0, 2, 3, 1))

-

#bbox_pred_len = 4

-

#bbox_pred = mx.sym.reshape(bbox_pred, (0, -1, bbox_pred_len))

-

bbox_label_t0 = bbox_target

-

#prefix = prefix+'2'

-

for casid in range(config.CASCADE):

-

#pseudo-code

-

#anchor_label = GENANCHOR(bbox_label, bbox_pred, stride)

-

#bbox_label = F(anchor_label, bbox_pred)

-

#bbox_label = bbox_label - bbox_pred

-

cls_pred = conv_only(body, '%s_rpn_cls_score_stride%d_cas%d'%(prefix, stride, casid), 2*num_anchors,

-

kernel=(1,1), pad=(0,0), stride=(1, 1))

-

rpn_cls_score_reshape = mx.symbol.Reshape(data=cls_pred,

-

shape=(0, 2, -1),

-

name="%s_rpn_cls_score_reshape_stride%s_cas%d" % (prefix,stride, casid))

-

-

#bbox_label equals to bbox_target

-

#cls_pred, cls_label, bbox_pred, bbox_label, bbox_weight, pos_count = mx.sym.Custom(op_type='cascade_refine', stride=int(stride), network=config.network, dataset=config.dataset, prefix=prefix, cls_pred=cls_pred, cls_label = cls_label, bbox_pred = bbox_pred, bbox_label = bbox_label)

-

#cls_label, bbox_label, anchor_weight, pos_count = mx.sym.Custom(op_type='cascade_refine', stride=int(stride), network=config.network, dataset=config.dataset, prefix=prefix, cls_pred_t0=cls_pred_t0, cls_label_t0 = cls_label_t0, cls_pred = rpn_cls_score_reshape, bbox_pred_t0 = bbox_pred_t0, bbox_label_t0 = bbox_label_t0)

-

cls_label, bbox_label, anchor_weight, pos_count = mx.sym.Custom(op_type='cascade_refine', stride=int(stride), network=config.network,

-

dataset=config.dataset, prefix=prefix,

-

cls_label_t0 = cls_label_t0, cls_pred_t0=cls_pred_t0, cls_pred = rpn_cls_score_reshape,

-

bbox_pred_t0 = bbox_pred_t0, bbox_label_t0 = bbox_label_t0,

-

cls_label_raw = cls_label_raw, cas_gt_boxes = gt_boxes)

-

if stride in config.CASCADE_CLS_STRIDES:

-

rpn_cls_prob = mx.symbol.SoftmaxOutput(data=rpn_cls_score_reshape,

-

label=cls_label,

-

multi_output=True,

-

normalization='valid', use_ignore=True, ignore_label=-1,

-

grad_scale = lr_mult,

-

name='%s_rpn_cls_prob_stride%d_cas%d'%(prefix,stride,casid))

-

ret_group.append(rpn_cls_prob)

-

ret_group.append(mx.sym.BlockGrad(cls_label))

-

if stride in config.CASCADE_BBOX_STRIDES:

-

bbox_pred = conv_only(body, '%s_rpn_bbox_pred_stride%d_cas%d'%(prefix,stride,casid), bbox_pred_len*num_anchors,

-

kernel=(1,1), pad=(0,0), stride=(1, 1))

-

-

rpn_bbox_pred_reshape = mx.symbol.Reshape(data=bbox_pred,

-

shape=(0, 0, -1),

-

name="%s_rpn_bbox_pred_reshape_stride%s_cas%d" % (prefix,stride,casid))

-

_bbox_weight = mx.sym.tile(anchor_weight, (1,1,bbox_pred_len))

-

_bbox_weight = _bbox_weight.reshape((0, -1, A * bbox_pred_len)).transpose((0,2,1))

-

bbox_weight = _bbox_weight

-

pos_count = mx.symbol.sum(pos_count)

-

pos_count = pos_count + 0.01 #avoid zero

-

#bbox_weight = mx.sym.elemwise_mul(bbox_weight, _bbox_weight, name='%s_bbox_weight_mul_stride%s'%(prefix,stride))

-

#bbox loss

-

bbox_diff = rpn_bbox_pred_reshape-bbox_label

-

bbox_diff = bbox_diff * bbox_weight

-

rpn_bbox_loss_ = mx.symbol.smooth_l1(name='%s_rpn_bbox_loss_stride%d_cas%d'%(prefix,stride,casid), scalar=3.0, data=bbox_diff)

-

if config.LR_MODE==0:

-

rpn_bbox_loss = mx.sym.MakeLoss(name='%s_rpn_bbox_loss_stride%d_cas%d'%(prefix,stride,casid), data=rpn_bbox_loss_, grad_scale=bbox_lr_mode0)

-

else:

-

rpn_bbox_loss_ = mx.symbol.broadcast_div(rpn_bbox_loss_, pos_count)

-

rpn_bbox_loss = mx.sym.MakeLoss(name='%s_rpn_bbox_loss_stride%d_cas%d'%(prefix,stride,casid), data=rpn_bbox_loss_, grad_scale=0.5*lr_mult)

-

ret_group.append(rpn_bbox_loss)

-

ret_group.append(mx.sym.BlockGrad(bbox_weight))

-

#bbox_pred = rpn_bbox_pred_reshape

-

-

return ret_group

3.细节

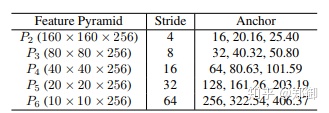

anchor设置

在从P2到P6的特征金字塔上使用特定尺度anchor。P2用于抓取小脸,通过使用更小的anchor,当然,计算代价会变大同事误报会增多。设置尺度步长为![[公式]](https://img-blog.csdnimg.cn/img_convert/aaa0f162b58058cec2a8c51ff81640d8.png) ,宽高比1:1。输入图像640x640,anchor从16x16到406x406在特征金字塔上。总共有102,300个anchors,75%来自P2层。给大家解释一下这个102300怎么来的:(160*160+80*80+40*40+20*20+10)*3 = 102300。

,宽高比1:1。输入图像640x640,anchor从16x16到406x406在特征金字塔上。总共有102,300个anchors,75%来自P2层。给大家解释一下这个102300怎么来的:(160*160+80*80+40*40+20*20+10)*3 = 102300。

正负样本设置:在训练阶段,ground-truth的IOU大于0.5的anchor被任务是正样本,小于0.3的anchor认为是背景。anchors中有大于99%的都是负样本,使用标准OHEM避免正负样本的不均衡。通过loss值选择负样本,正负样本比例1:3

训练:使用SGD优化器训练RetinaFace(momentum=0.9,weight decay = 0.0005,batchsize=8x4),Nvidia Tesla P40(24G) GPUs.起始学习率0.001,5个epoch后变为0.01,然后在第55和第68个epoch时除以10.

测试细节:在WIDER FACE上测试,利用了flip和多尺度(500,800,1100,1400,1700)策略。使用IoU 阈值0.4,预测人脸框的集合使用投票策略。

文章来源: blog.csdn.net,作者:网奇,版权归原作者所有,如需转载,请联系作者。

原文链接:blog.csdn.net/jacke121/article/details/108744653

- 点赞

- 收藏

- 关注作者

评论(0)