【Big Data】One-Click Hadoop Installation (Pseudo-Distributed Cluster)

【Summary】 One-click installation of a Hadoop test environment

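Many big-data stacks depend on Hadoop, and at work we often need to build a Hadoop test environment quickly. The script below installs a single-node, pseudo-distributed Hadoop environment in one run.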
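
The script below assumes it is run as root (it appends to /etc/profile and starts the HDFS daemons as root) and that wget, tar, ssh, ssh-keygen and sed are installed. A quick preflight check can catch a missing prerequisite before anything is downloaded. This is a minimal sketch, not part of the original script; the helper names `require_cmds` and `require_root` are hypothetical.

```shell
#!/bin/bash
# Preflight sketch (hypothetical helpers, not part of the original script).

# Report every command from the argument list that is not on PATH;
# return non-zero if at least one is missing.
require_cmds() {
  local missing=0 cmd
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "missing command: $cmd" >&2
      missing=1
    fi
  done
  return "$missing"
}

# The installer appends to /etc/profile and runs the HDFS daemons as root,
# so it must be started by root (UID 0).
require_root() {
  if [ "$(id -u)" -ne 0 ]; then
    echo "please run the installer as root" >&2
    return 1
  fi
}
```

For example, a line such as `require_root && require_cmds wget tar ssh ssh-keygen sed || exit 1` near the top of install_hadoop.sh would stop the run early instead of failing halfway through.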

#!/bin/bash
#################################################################################
# Author: cxy@toc-2022-12-05
# Purpose: automatically set up Hadoop in pseudo-distributed mode (for testing)
# Reference (see the "Pseudo-Distributed Operation" section of this page):
# https://hadoop.apache.org/docs/stable/hadoop-project-dist/hadoop-common/SingleCluster.html#Standalone_Operation
#
#################################################################################

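proj_dir=/cxy
proj_bao_dir="${proj_dir}/bao"                  # downloaded packages
proj_jdk_dir="${proj_dir}/jdk"                  # JDK install location
proj_bd_dir="${proj_dir}/bigdata"               # Hadoop install location
proj_bd_data_dir="${proj_bd_dir}/data/hadoop"   # HDFS metadata, block and tmp data
# -p: create parent directories and do not fail if a directory already exists
mkdir -p "${proj_bao_dir}" "${proj_jdk_dir}" "${proj_bd_dir}"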

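install_jdk(){
  cd ${proj_bao_dir}
  # Download JDK 8u151 from the Huawei Cloud mirror and unpack it
  wget https://repo.huaweicloud.com/java/jdk/8u151-b12/jdk-8u151-linux-x64.tar.gz
  tar xf ${proj_bao_dir}/jdk-8u151-linux-x64.tar.gz -C ${proj_jdk_dir}
  # Append the Java variables to /etc/profile. The escaped \$ references are written
  # literally and expanded at login; ${proj_jdk_dir} is expanded when the script runs.
  cat >> /etc/profile <<EOF
export JAVA_HOME=${proj_jdk_dir}/jdk1.8.0_151
export JRE_HOME=\${JAVA_HOME}/jre
export CLASSPATH=.:\${JAVA_HOME}/lib:\${JRE_HOME}/lib
export PATH=.:\${JAVA_HOME}/bin:\$PATH
EOF
  source /etc/profile
}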

echo "0. Install the JDK (comment out the next line if a JDK is already installed)"
install_jdk

echo "1. Download and extract the Hadoop tarball"
cd ${proj_bd_dir}
wget https://repo.huaweicloud.com/apache/hadoop/common/hadoop-3.3.2/hadoop-3.3.2.tar.gz --no-check-certificate
tar zxvf hadoop-3.3.2.tar.gz

echo "2. Write the environment variables"
cat >> /etc/profile <<EOF
#Hadoop
export HADOOP_HOME=${proj_bd_dir}/hadoop-3.3.2
export PATH=\$PATH:\$HADOOP_HOME/bin
export PATH=\$PATH:\$HADOOP_HOME/sbin
# Hadoop 3 start scripts refuse to run daemons as root unless these users are set
export HDFS_NAMENODE_USER=root
export HDFS_DATANODE_USER=root
export HDFS_SECONDARYNAMENODE_USER=root
export YARN_RESOURCEMANAGER_USER=root
export YARN_NODEMANAGER_USER=root
EOF
source /etc/profile
hadoop version

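echo "3. Modify the Hadoop configuration files"
cd ${proj_bd_dir}/hadoop-3.3.2
# Back up the files that will be changed
cp etc/hadoop/hadoop-env.sh etc/hadoop/hadoop-env.sh.bak
cp etc/hadoop/core-site.xml etc/hadoop/core-site.xml.bak
cp etc/hadoop/hdfs-site.xml etc/hadoop/hdfs-site.xml.bak
# Set JAVA_HOME in hadoop-env.sh as well: start-dfs.sh launches the daemons over SSH
# in a non-login shell, which normally does not read /etc/profile.
# Appending is more robust than inserting after a fixed line number.
echo "export JAVA_HOME=${proj_jdk_dir}/jdk1.8.0_151" >> etc/hadoop/hadoop-env.sh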

# Overwrite core-site.xml; the XML declaration must be on the very first line
cat > etc/hadoop/core-site.xml <<EOF
<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
  <!-- Data storage directory -->
  <property>
    <name>hadoop.tmp.dir</name>
    <value>${proj_bd_data_dir}/tmp</value>
  </property>
  <!-- User the web UI acts as; for test environments only -->
  <property>
    <name>hadoop.http.staticuser.user</name>
    <value>root</value>
  </property>
</configuration>
EOF

# Overwrite hdfs-site.xml; the XML declaration must be on the very first line
cat > etc/hadoop/hdfs-site.xml <<EOF
<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file:${proj_bd_data_dir}/hdfs/name</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file:${proj_bd_data_dir}/hdfs/data</value>
  </property>
  <!-- Single node, so keep one replica per block -->
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
</configuration>
EOF

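echo "4. Configure passwordless SSH to localhost"
# start-dfs.sh connects to localhost over SSH; keep an existing key instead of prompting to overwrite it
[ -f ~/.ssh/id_rsa ] || ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa
cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys
chmod 0600 ~/.ssh/authorized_keys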

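echo "5. Format the file system"
# Format the filesystem (one-time step; re-formatting an existing installation discards the HDFS namespace)
bin/hdfs namenode -format
echo "6. Start the services"
sbin/start-dfs.sh
echo "7. Verify"
jps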

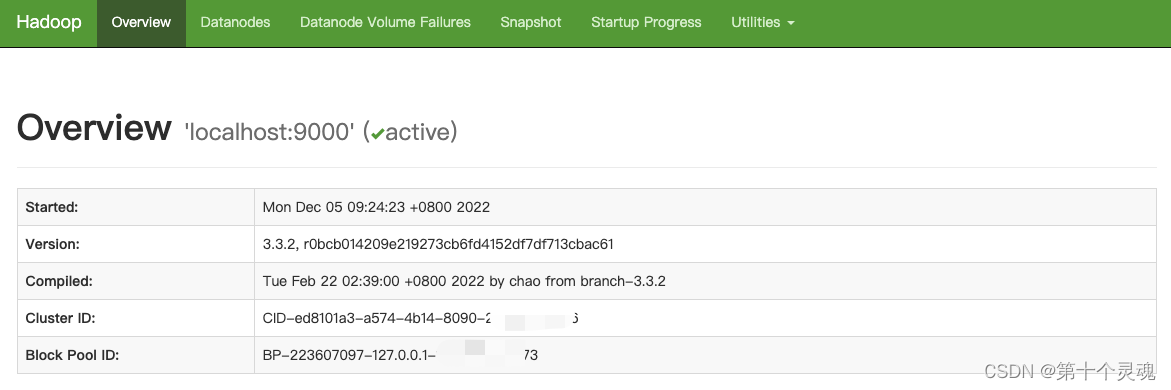

echo "Installation complete. Open the NameNode web UI at http://<ip>:9870/"

# Upload test: hadoop fs -put ~/install_hadoop.sh /aaa
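
Because steps 0 and 2 use `cat >> /etc/profile`, running the script a second time appends the JAVA_HOME and Hadoop export lines again. One way to make such appends idempotent is to guard each block with a marker line and skip the append when the marker is already present. This is a sketch under that assumption, not the author's method; the function name `append_once` is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: append a block of text to a file only once.
# The marker line identifies the block, so running the installer again
# does not duplicate the export lines in /etc/profile.
append_once() {
  local file="$1" marker="$2" content="$3"
  # -q quiet, -x whole-line match, -F fixed string (no regex)
  if [ -f "$file" ] && grep -qxF -- "$marker" "$file"; then
    return 0
  fi
  printf '%s\n%s\n' "$marker" "$content" >> "$file"
}
```

For example, step 2 could call `append_once /etc/profile '#Hadoop' "$exports"`, where `$exports` (a hypothetical variable) holds the export lines; the `#Hadoop` marker matches the comment the original heredoc already writes, so an earlier run is detected too.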

Save the installation script as install_hadoop.sh.

Then run it as root:

chmod +x install_hadoop.sh

./install_hadoop.sh
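
Step 7 only prints the `jps` listing and leaves it to the reader to check. In pseudo-distributed mode, `sbin/start-dfs.sh` should leave three HDFS daemons running: NameNode, DataNode and SecondaryNameNode. The sketch below checks for them, assuming the usual `jps` output of one `<pid> <ClassName>` pair per line; `check_hdfs_daemons` is a hypothetical helper, not part of the original script.

```shell
#!/bin/bash
# Hypothetical helper: read `jps` output on stdin and check that the HDFS
# daemons launched by sbin/start-dfs.sh are all running.
check_hdfs_daemons() {
  local output daemon missing=0
  output="$(cat)"
  for daemon in NameNode DataNode SecondaryNameNode; do
    # jps prints "<pid> <MainClass>"; compare the second field exactly so that
    # "SecondaryNameNode" is not mistaken for "NameNode".
    if ! printf '%s\n' "$output" | awk '{print $2}' | grep -qx "$daemon"; then
      echo "not running: $daemon" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

For example, `jps | check_hdfs_daemons && hadoop fs -ls /` confirms the daemons and then lists the HDFS root directory.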

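【Copyright notice】 This article is original content published by a Huawei Cloud community user and may not be reproduced without permission; to republish it, contact the original author for authorization. If you find content in this community that you suspect is plagiarized, please report it by email with supporting evidence; once verified, the community will remove the infringing content immediately. Report email: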

cloudbbs@huaweicloud.com
