[CNN Regression Prediction] Data Regression Prediction with a Convolutional Neural Network (CNN) in MATLAB [MATLAB Source Code Included, Issue 2003]

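I. Introduction to CNNs

1 Definition of a Convolutional Neural Network (CNN)

A convolutional neural network (CNN) is a neural network specialized for processing data with a grid-like structure. A convolutional network is any neural network that uses the convolution operation in place of general matrix multiplication in at least one of its layers.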

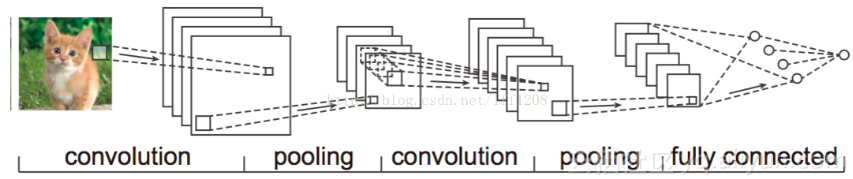

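2 CNN Network Diagram

A CNN is a feedforward neural network that computes with convolutions. It was inspired by the receptive-field mechanism in biology and has translation invariance. By using convolution kernels, it makes the most of local information and preserves the planar (spatial) structure of the input.

3 The Five Structural Components of a CNN

3.1 Input Layer

In a CNN that processes images, the input layer generally represents the pixel matrix of one image, which can be expressed as a three-dimensional matrix. The length and width of the matrix give the image size, and its depth gives the number of color channels. For example, a black-and-white (grayscale) image has depth 1, while an image in RGB color mode has depth 3.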
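As a small illustration of this layout, the sketch below builds placeholder grayscale and RGB arrays in MATLAB and inspects their depth. The 28x28 size, the batch of 10 images, and all variable names are assumptions chosen for the example, not values from the source code. The four-dimensional batch mirrors the [X, Y, nchannel, nslice] ordering that the source code later in this article expects.

```matlab
% Illustrative sizes only (28x28 is an assumption for this example).
gray_img = zeros(28, 28, 1);      % grayscale image: depth 1
rgb_img  = zeros(28, 28, 3);      % RGB image: depth 3

depth_gray = size(gray_img, 3);   % number of channels of the grayscale image
depth_rgb  = size(rgb_img, 3);    % number of channels of the RGB image

% A batch of 10 RGB images stored as [height, width, channels, images]
batch = zeros(28, 28, 3, 10);
n_images_in_batch = size(batch, 4);

fprintf('gray depth = %d, RGB depth = %d, batch size = %d\n', ...
    depth_gray, depth_rgb, n_images_in_batch);
```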

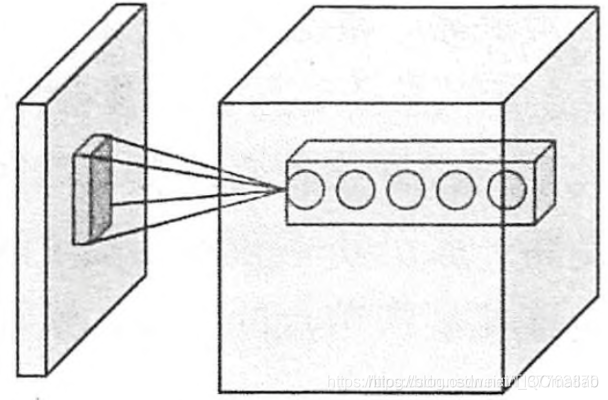

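3.2 Convolution Layer

The convolution layer is the most important part of a CNN. Unlike a traditional fully connected layer, each node in a convolution layer takes as input only a small patch of the previous layer. The small weight matrix that is slid over the input is called a filter or a kernel; the official TensorFlow documentation calls it a filter.

[Note] In a convolution layer, the length and width of the node matrix processed by a filter are specified manually, and this size is also called the filter size. Common sizes are 3x3 and 5x5. The depth of the filter is the same as the depth of the node matrix of the layer currently being processed.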
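Because each filter spans the full depth of its input, the number of learnable weights in a convolution layer follows directly from the filter size, the input depth, and the number of filters. The sketch below works this out for a 3x3 filter size with 16 filters, the values used for the first convolution layer in the source code later in this article. The single-channel input depth is an assumption made for illustration.

```matlab
filter_size = 3;    % filter height and width (3x3)
depth_in    = 1;    % input depth; assumed to be 1 channel for this example
n_filters   = 16;   % number of filters = output depth of the layer

weights_per_filter = filter_size * filter_size * depth_in;  % filter spans the full input depth
n_params = (weights_per_filter + 1) * n_filters;            % +1 for each filter's bias

fprintf('Learnable parameters: %d\n', n_params);
```

The count does not depend on the image size, which is one reason convolution layers need far fewer parameters than fully connected layers on the same input.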

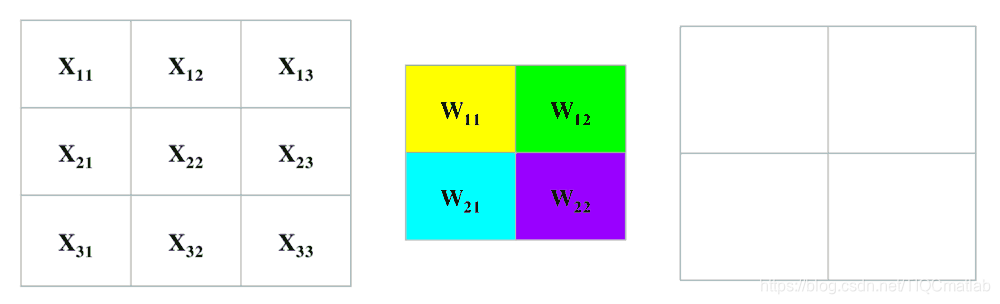

The figure below is a schematic of the filter structure in a convolution layer.

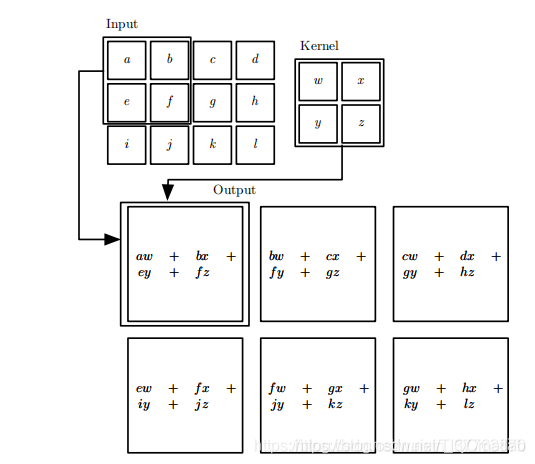

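The figure below shows the convolution process.

The detailed process is as follows, where the Input matrix is the pixel matrix and the Kernel matrix is the filter.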
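The sliding-window computation can be sketched in a few lines of MATLAB. The matrix values below are made up for the example, and the sketch assumes a stride of 1 with no padding. It also follows the usual CNN convention of multiplying the window by the filter without flipping the filter (strictly speaking a cross-correlation).

```matlab
Input  = [1 2 3 0; 0 1 2 3; 3 0 1 2; 2 3 0 1];   % 4x4 pixel matrix (assumed values)
Kernel = [2 0 1; 0 1 2; 1 0 2];                  % 3x3 filter (assumed values)

[in_h, in_w] = size(Input);
[k_h, k_w]   = size(Kernel);
out_h = in_h - k_h + 1;          % output height for stride 1, no padding
out_w = in_w - k_w + 1;          % output width for stride 1, no padding
Output = zeros(out_h, out_w);

for r = 1:out_h
    for c = 1:out_w
        patch = Input(r:r+k_h-1, c:c+k_w-1);       % window currently under the filter
        Output(r, c) = sum(sum(patch .* Kernel));  % weighted sum of the window
    end
end

disp(Output)
```

For these values the result is the 2x2 matrix [15 16; 6 15]. The same result can be obtained with the built-in call `conv2(Input, rot90(Kernel, 2), 'valid')`, where the 180-degree rotation cancels the kernel flip that `conv2` performs.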

3.3 Pooling Layer

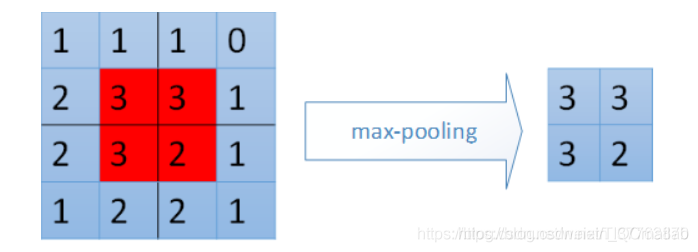

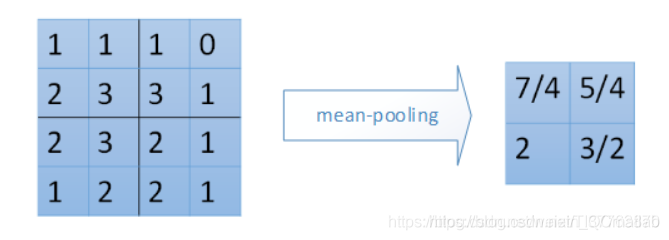

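A pooling layer does not change the depth of the three-dimensional matrix, but it can reduce its length and width. Pooling therefore further reduces the number of nodes feeding the final fully connected layers, which reduces the number of parameters in the whole network. Using pooling layers both speeds up computation and helps prevent overfitting. A pooling filter does not compute a weighted sum of nodes; it takes the maximum or the average instead. A pooling layer that takes the maximum is called a max pooling layer, which is the most widely used pooling structure. A pooling layer that takes the average is called an average pooling layer (mean pooling).

The figure below shows max pooling and average pooling (mean pooling) over four non-overlapping 2x2 regions.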
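The same four-region case can be reproduced numerically. The sketch below applies max pooling and average pooling to a 4x4 matrix with a 2x2 window and a stride of 2, so the regions do not overlap. The matrix values and variable names are assumptions for illustration only.

```matlab
A = [1 3 2 4; 5 7 6 8; 9 2 1 3; 4 6 5 7];   % 4x4 feature map (assumed values)
pool_size = 2;
stride    = 2;                              % stride equal to pool size: non-overlapping regions

out_n = floor((size(A, 1) - pool_size) / stride) + 1;   % output height/width (A is square)
max_pooled  = zeros(out_n);
mean_pooled = zeros(out_n);

for r = 1:out_n
    for c = 1:out_n
        rows = (r-1)*stride + (1:pool_size);   % row indices of the current region
        cols = (c-1)*stride + (1:pool_size);   % column indices of the current region
        region = A(rows, cols);
        max_pooled(r, c)  = max(region(:));    % max pooling
        mean_pooled(r, c) = mean(region(:));   % average (mean) pooling
    end
end

disp(max_pooled)
disp(mean_pooled)
```

Here max pooling gives [7 8; 9 7] and average pooling gives [4 5; 5.25 4]. With a stride of 1 and a pool size of 2, as in the source code later in this article, the regions overlap and the same size formula gives a 3x3 output for a 4x4 input, so each such pooling layer shrinks the feature map by only one pixel per dimension.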

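3.4 Fully Connected Layer

After several rounds of convolution and pooling layers, a CNN usually ends with one or two fully connected layers that produce the final classification result. After these rounds, the information in the image can be regarded as having been abstracted into features with higher information content. The convolution and pooling layers can thus be viewed as an automatic image feature extraction process. Once feature extraction is complete, fully connected layers are still needed to carry out the classification task. In a regression network such as the one in the source code below, the final fully connected layer has a single output node that gives the predicted value.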
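A fully connected layer amounts to flattening the extracted features into a vector and computing a weighted sum plus a bias for each output node. The sketch below shows this for a single output node, which is the shape a regression output takes. The feature values, weights, and bias are assumed values for illustration, not trained parameters.

```matlab
features = cat(3, [1 2; 3 4], [0 1; 1 0]);  % 2x2x2 feature map (assumed values)
x = features(:);                             % flatten to an 8x1 column vector

W = 0.5 * ones(1, numel(x));                 % 1x8 weight matrix: one output node (assumed values)
b = -1;                                      % bias of the output node (assumed value)

y = W * x + b;                               % weighted sum of all inputs plus bias
fprintf('Fully connected output: %g\n', y);
```

For a classification network, W would instead have one row per class, giving one score per class.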

3.5 Softmax Layer

The Softmax layer gives the probability distribution of the current sample over the different classes.
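Concretely, softmax exponentiates each class score and divides by the sum of the exponentials, so the outputs are positive and sum to 1. The sketch below is a minimal version with assumed scores for three classes. Subtracting the maximum score first is a common numerical-stability step and does not change the result.

```matlab
scores  = [2.0; 1.0; 0.1];                   % raw class scores (assumed values)
shifted = scores - max(scores);              % subtract the maximum for numerical stability
probs   = exp(shifted) / sum(exp(shifted));  % softmax: exponentiate, then normalize

[~, predicted_class] = max(probs);           % index of the most probable class
disp(probs')
fprintf('Predicted class: %d\n', predicted_class);
```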

II. Partial Source Code

load([ 'test_input_xdata.mat'])

load([ 'test_input_ydata.mat'])

save_directory = '';

summary_file_name = 'Crossval_results_summary_table';

%Network settings:

augment_training_data = 1; %Randomly augment training dataset

normalize_training_input = 1; %Normalize training data based on predictor distribution

overwrite_crossval_results_table = 1; %1: create new cross validation results table, 0: add lines to existing table

%Input data pre-processing:

xdata = permute(xdata,[1,2,4,3]); %input needs to be [X, Y, nchannel, nslice]

n_images = size(ydata,1); %For random splitting of input into training/testing groups

img_number = 1:n_images;

rng(20211013);

idx = randperm(n_images);

%Training/testing data split:

n_test_images = 28; %Number of images to keep for the independent test set

n_crossval_folds = 8; %Number of training cross-validation folds

n_train_images = n_images-n_test_images;

idx_test = find(ismember(img_number, idx(end-(n_test_images-1):end)));

XTest = xdata(:,:,:,idx_test);

YTest = ydata(idx_test);

xdata(:,:,:,idx_test) = [];

ydata(idx_test) = [];

img_number(idx_test) = [];

idx(end-(n_test_images-1):end) = [];

%Network parameters (can iterate over by moving into for loop):

%params.optimizer = {'sgdm','adam'}; %'sgdm' | 'adam'

params.batch_size = 8;

%params.max_epochs = [4,6,8];

params.learn_rate = 0.001;

params.learn_rate_drop_factor = 0.1;

params.learn_rate_drop_period = 20;

params.learn_rate_schedule = 'none'; %'none', 'piecewise'

params.shuffle = 'every-epoch';

params.momentum = 0.9; %for sgdm optimizer

params.L2_reg = 0.01;

params.conv_features = [16, 16, 32]; %Number of feature channels in convolutional layers

params.conv_filter_size = 3;

params.conv_padding = 'same';

params.pooling_size = 2;

params.pooling_stride = 1;

params.dropout_factor = 0.2;

params.duplication_factor = 3; %Duplicate training set by N times

show_plots = 0; %1: show plots of training progress

tic

iter = 1;

disp('Performing cross validation evaluation over all network iterations:')

for var1 = {'sgdm','adam'}

params.optimizer = var1; %var1 is a 1x1 cell here (e.g. {'sgdm'}), because for iterates over the columns of the cell array

for var2 = [4,6,8]

params.max_epochs = var2;

%for var3 = X:Y

%params.example = var3;

%etc.

%Splitting training data into k-folds

for k = 1:n_crossval_folds

images_per_fold = floor(n_train_images/n_crossval_folds);

idx_val = find(ismember(img_number, idx(1+(k-1)*images_per_fold:images_per_fold+(k-1)*images_per_fold)));

YValidation = ydata(idx_val);

XValidation = xdata(:,:,:,idx_val);

XTrain = xdata;

XTrain(:,:,:,idx_val) = [];

YTrain = ydata;

YTrain(idx_val) = [];

%ROS input normalization:

if normalize_training_input == 1

[XTrain, YTrain] = ROS(XTrain, YTrain, params.duplication_factor);

else

XTrain = repmat(XTrain,1,1,1,params.duplication_factor);

YTrain = repmat(YTrain,params.duplication_factor,1);

end

%Random geometric image augmentation:

%Augmentation parameters

aug_params.rot = [-90,90]; %Image rotation range

aug_params.trans_x = [-5 5]; %Image translation in X direction range

aug_params.trans_y = [-5 5]; %Image translation in Y direction range

aug_params.refl_x = 1; %Image reflection across X axis

aug_params.refl_y = 1; %Image reflection across Y axis

aug_params.scale = [0.7,1.3]; %Image scaling range

aug_params.shear_x = [-30,50]; %Image shearing in X direction range

aug_params.shear_y = [-30,50]; %Image shearing in Y direction range

aug_params.add_gauss_noise = 0; %Add Gaussian noise

aug_params.gauss_noise_var = 0.0005; %Gaussian noise variance

if augment_training_data == 1

XTrain = image_augmentation(XTrain,aug_params);

else

aug_params = structfun(@(x) [], aug_params, 'UniformOutput', false);

end

%Network structure:

layers = [

imageInputLayer([size(XTrain,1),size(XTrain,2),size(XTrain,3)])

convolution2dLayer(params.conv_filter_size,params.conv_features(1),'Padding',params.conv_padding)

%batchNormalizationLayer

reluLayer

averagePooling2dLayer(params.pooling_size,'Stride',params.pooling_stride)

convolution2dLayer(params.conv_filter_size,params.conv_features(2),'Padding',params.conv_padding)

%batchNormalizationLayer

reluLayer

averagePooling2dLayer(params.pooling_size,'Stride',params.pooling_stride)

convolution2dLayer(params.conv_filter_size,params.conv_features(3),'Padding',params.conv_padding)

%batchNormalizationLayer

reluLayer

dropoutLayer(params.dropout_factor)

fullyConnectedLayer(1)

regressionLayer];

params.validationFrequency = floor(numel(YTrain)/params.batch_size);

options = network_options(params,XValidation,YValidation,show_plots);

net = trainNetwork(XTrain,YTrain,layers,options);

%Network results:

accuracy_threshold = 0.1; %Predictions with absolute error below 0.1 will be considered 'accurate'

predicted_train = predict(net,XTrain);

predictionError_train = YTrain - predicted_train;

numCorrect_train = sum(abs(predictionError_train) < accuracy_threshold);

accuracy_train(k) = numCorrect_train/numel(YTrain);

error_abs_train(k) = mean(abs(predictionError_train));

rmse_train(k) = sqrt(mean(predictionError_train.^2));

predicted_val = predict(net,XValidation);

predictionError_val = YValidation - predicted_val;

numCorrect_val = sum(abs(predictionError_val) < accuracy_threshold);

accuracy_val(k) = numCorrect_val/numel(YValidation);

error_abs_val(k) = mean(abs(predictionError_val));

rmse_val(k) = sqrt(mean(predictionError_val.^2));

if k == 1

predicted_val_table(1:numel(YValidation),1) = predict(net,XValidation);

if iter == 1

YValidation_table(1:numel(YValidation),1) = YValidation;

end
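The source code above is an excerpt and stops partway through the loop. To make its fold selection easier to follow, the sketch below reproduces only the index arithmetic of the cross-validation split on a toy problem. The image numbers, the shuffled order, and the fold count are assumed values, and `val_positions` and `never_validated` are names introduced here for illustration.

```matlab
% Toy setup (assumed values): 13 images, of which 3, 6 and 10 were held out for testing
img_number = [1 2 4 5 7 8 9 11 12 13];   % image numbers left for training
idx        = [7 2 12 4 1 13 5 11 8 9];   % shuffled order of those image numbers
n_train_images   = numel(img_number);
n_crossval_folds = 3;

images_per_fold = floor(n_train_images / n_crossval_folds);
val_positions = cell(1, n_crossval_folds);

for k = 1:n_crossval_folds
    first = 1 + (k-1)*images_per_fold;   % first entry of idx used by fold k
    last  = k*images_per_fold;           % last entry of idx used by fold k
    % Positions (within the remaining data) of the images validated in fold k
    val_positions{k} = find(ismember(img_number, idx(first:last)));
    fprintf('Fold %d validates positions: %s\n', k, mat2str(val_positions{k}));
end

% Positions that never serve as validation data because of the floor() above
never_validated = setdiff(1:n_train_images, [val_positions{:}]);
fprintf('Never used for validation: %s\n', mat2str(never_validated));
```

In this toy case the folds validate positions [2 5 9], [1 3 10] and [4 6 8], and position 7 is never validated. The same effect occurs in the source code whenever n_train_images is not a multiple of n_crossval_folds: the leftover images are used for training in every fold but never for validation.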

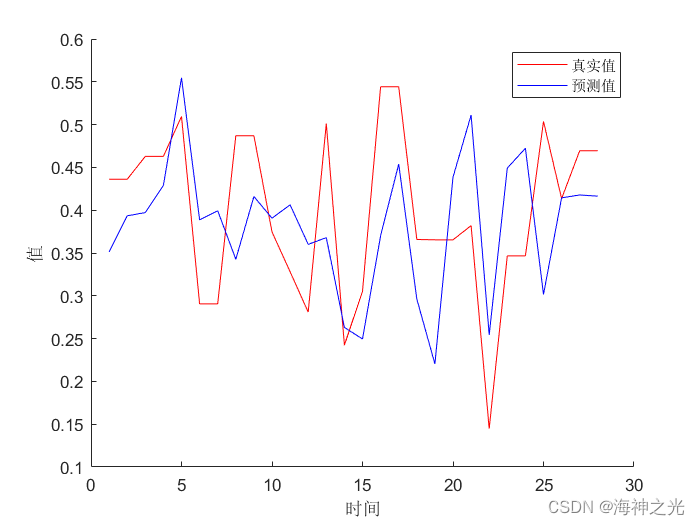

III. Results

IV. MATLAB Version and Notes

1 MATLAB Version

2014a

2 Notes

The introduction section is excerpted from the internet and is for reference only. If it infringes any rights, please contact the author to have it removed.

Source: qq912100926.blog.csdn.net. Author: 海神之光. Copyright belongs to the original author; please contact the author before reposting.

Original link: qq912100926.blog.csdn.net/article/details/126077464
