Efficient Data Analysis with Spark 03: Spark SQL

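📋Preface📋

💝Blog: 【】💝

✍This article is an original work by 【红目香薰】, first published on CSDN✍

🤗Biggest wish for 2022: 【serve one million technical readers】🤗

💝Spark initial environment setup: 【】💝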

Environment requirements

OS: Windows 10

Development tool: IntelliJ IDEA 2020.1.3 x64

Maven version: 3.0.5

1. Modify pom.xml

After editing the file, be sure to reload the Maven project so that the changes take effect:

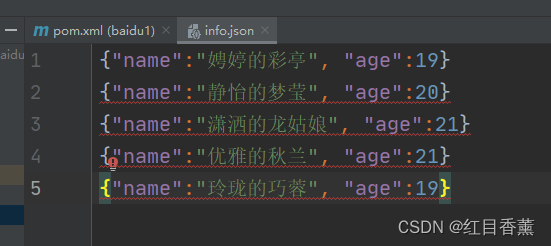

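2. Create the test file 【info.json】. This is not a standard JSON document but a line-oriented 【json】 file, with one JSON object per line.

Don't worry if the file is flagged as an error; Spark reads it without problems.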

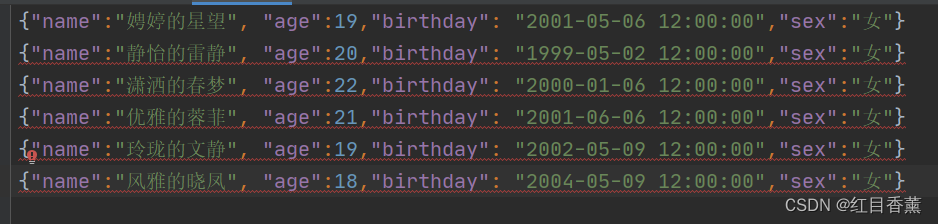
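As a rough illustration of what "line-oriented JSON" means, the sketch below parses a few JSON lines straight from memory. The records are hypothetical stand-ins modeled on the names and ages that appear in the Demo1 output, not the actual contents of info.json, and `JsonLinesSketch`, `sampleLines`, and `parse` are illustrative names.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}

object JsonLinesSketch {
  // Hypothetical records: each line is one complete JSON object.
  // The lines are not wrapped in [ ] and not separated by commas.
  val sampleLines: Seq[String] = Seq(
    """{"name":"静怡的梦莹","age":20}""",
    """{"name":"潇洒的龙姑娘","age":21}""",
    """{"name":"优雅的秋兰","age":21}"""
  )

  // spark.read.json treats every element (or every line of a file) as one record.
  def parse(spark: SparkSession): DataFrame = {
    import spark.implicits._
    spark.read.json(sampleLines.toDS())
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("JsonLinesSketch")
      .master("local[*]") // assumption: local mode
      .getOrCreate()
    val df = parse(spark)
    df.printSchema() // columns are inferred from the JSON keys
    df.show()
    spark.stop()
  }
}
```

A regular JSON file whose records span several lines, or that holds one top-level array, would instead need `.option("multiLine", true)` on the reader.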

Data 2

3. SparkSession

SparkSession is the entry point for SQL queries in Spark 2.0 and later. In essence it combines SQLContext and HiveContext.
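A minimal sketch of obtaining a SparkSession, assuming local mode. The application name is the one that appears in the Demo1 log; `SparkSessionSketch` and `build` are illustrative names.

```scala
import org.apache.spark.sql.SparkSession

object SparkSessionSketch {
  // Build a new session, or reuse one that already exists in this JVM.
  def build(): SparkSession =
    SparkSession.builder()
      .appName("Spark SQL") // application name seen in the Demo1 log
      .master("local[*]")   // assumption: run locally on all cores
      .getOrCreate()

  def main(args: Array[String]): Unit = {
    val spark = build()
    // The older entry points stay reachable through the session.
    val sc = spark.sparkContext
    val sqlContext = spark.sqlContext
    println(s"Spark version: ${spark.version}, app: ${sc.appName}")
    spark.stop()
  }
}
```

Hive support is opt-in: calling `enableHiveSupport()` on the builder turns it on, provided the Hive classes are on the classpath.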

4. Demo1
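A minimal sketch of a program consistent with the log below, which shows a JSON read followed by a `show` with the filter `age >= 20` pushed down. The view name `info`, the helper `ageAtLeast20`, and the use of SQL rather than the DataFrame API are assumptions, not the original DemoSparkSQL source.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}

object DemoSparkSQLSketch {
  // Read a line-oriented JSON file and keep the rows with age >= 20.
  def ageAtLeast20(spark: SparkSession, path: String): DataFrame = {
    val df = spark.read.json(path)     // schema is inferred from the file
    df.createOrReplaceTempView("info") // hypothetical view name
    spark.sql("SELECT * FROM info WHERE age >= 20")
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("Spark SQL")
      .master("local[*]") // assumption: local mode
      .getOrCreate()
    // "src/info.json" is the project-relative form of the path in the log.
    val path = args.headOption.getOrElse("src/info.json")
    ageAtLeast20(spark, path).show()
    spark.stop()
  }
}
```

The same filter can be written without SQL as `df.filter("age >= 20")`; both forms go through the same optimizer.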

Console output (the `-classpath` argument is abridged as `...`; it lists the JDK 1.8.0_152 runtime jars, Scala 2.13.8, and the Spark 3.3.0 artifacts built for Scala 2.13, including spark-core, spark-sql, spark-streaming, spark-mllib, spark-graphx, and spark-hive):

C:\java\jdk\jdk1.8.0_152\bin\java.exe "-javaagent:C:\java\IDEA\IntelliJ IDEA 2020.1.3\lib\idea_rt.jar=57086:C:\java\IDEA\IntelliJ IDEA 2020.1.3\bin" -Dfile.encoding=UTF-8 -classpath ... com.item.action.DemoSparkSQL

Using Spark's default log4j profile: org/apache/spark/log4j2-defaults.properties

22/07/18 19:58:22 INFO SparkContext: Running Spark version 3.3.0

22/07/18 19:58:23 INFO ResourceUtils: ==============================================================

22/07/18 19:58:23 INFO ResourceUtils: No custom resources configured for spark.driver.

22/07/18 19:58:23 INFO ResourceUtils: ==============================================================

22/07/18 19:58:23 INFO SparkContext: Submitted application: Spark SQL

22/07/18 19:58:23 INFO ResourceProfile: Default ResourceProfile created, executor resources: Map(cores -> name: cores, amount: 1, script: , vendor: , memory -> name: memory, amount: 1024, script: , vendor: , offHeap -> name: offHeap, amount: 0, script: , vendor: ), task resources: Map(cpus -> name: cpus, amount: 1.0)

22/07/18 19:58:23 INFO ResourceProfile: Limiting resource is cpu

22/07/18 19:58:23 INFO ResourceProfileManager: Added ResourceProfile id: 0

22/07/18 19:58:23 INFO SecurityManager: Changing view acls to: Administrator

22/07/18 19:58:23 INFO SecurityManager: Changing modify acls to: Administrator

22/07/18 19:58:23 INFO SecurityManager: Changing view acls groups to:

22/07/18 19:58:23 INFO SecurityManager: Changing modify acls groups to:

22/07/18 19:58:23 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(Administrator); groups with view permissions: Set(); users with modify permissions: Set(Administrator); groups with modify permissions: Set()

22/07/18 19:58:23 INFO Utils: Successfully started service 'sparkDriver' on port 57125.

22/07/18 19:58:23 INFO SparkEnv: Registering MapOutputTracker

22/07/18 19:58:23 INFO SparkEnv: Registering BlockManagerMaster

22/07/18 19:58:23 INFO BlockManagerMasterEndpoint: Using org.apache.spark.storage.DefaultTopologyMapper for getting topology information

22/07/18 19:58:23 INFO BlockManagerMasterEndpoint: BlockManagerMasterEndpoint up

22/07/18 19:58:23 INFO SparkEnv: Registering BlockManagerMasterHeartbeat

22/07/18 19:58:23 INFO DiskBlockManager: Created local directory at C:\Users\Administrator\AppData\Local\Temp\blockmgr-bb1c5f1a-9493-481d-b6a5-7bb2105634d2

22/07/18 19:58:23 INFO MemoryStore: MemoryStore started with capacity 898.5 MiB

22/07/18 19:58:23 INFO SparkEnv: Registering OutputCommitCoordinator

22/07/18 19:58:24 INFO Utils: Successfully started service 'SparkUI' on port 4040.

22/07/18 19:58:24 INFO Executor: Starting executor ID driver on host 192.168.15.19

22/07/18 19:58:24 INFO Executor: Starting executor with user classpath (userClassPathFirst = false): ''

22/07/18 19:58:24 INFO Utils: Successfully started service 'org.apache.spark.network.netty.NettyBlockTransferService' on port 57168.

22/07/18 19:58:24 INFO NettyBlockTransferService: Server created on 192.168.15.19:57168

22/07/18 19:58:24 INFO BlockManager: Using org.apache.spark.storage.RandomBlockReplicationPolicy for block replication policy

22/07/18 19:58:24 INFO BlockManagerMaster: Registering BlockManager BlockManagerId(driver, 192.168.15.19, 57168, None)

22/07/18 19:58:24 INFO BlockManagerMasterEndpoint: Registering block manager 192.168.15.19:57168 with 898.5 MiB RAM, BlockManagerId(driver, 192.168.15.19, 57168, None)

22/07/18 19:58:24 INFO BlockManagerMaster: Registered BlockManager BlockManagerId(driver, 192.168.15.19, 57168, None)

22/07/18 19:58:24 INFO BlockManager: Initialized BlockManager: BlockManagerId(driver, 192.168.15.19, 57168, None)

22/07/18 19:58:24 INFO SharedState: Setting hive.metastore.warehouse.dir ('null') to the value of spark.sql.warehouse.dir.

22/07/18 19:58:24 INFO SharedState: Warehouse path is 'file:/C:/Users/Administrator/IdeaProjects/baidu1/spark-warehouse'.

22/07/18 19:58:25 INFO InMemoryFileIndex: It took 25 ms to list leaf files for 1 paths.

22/07/18 19:58:25 INFO InMemoryFileIndex: It took 1 ms to list leaf files for 1 paths.

22/07/18 19:58:27 INFO FileSourceStrategy: Pushed Filters:

22/07/18 19:58:27 INFO FileSourceStrategy: Post-Scan Filters:

22/07/18 19:58:27 INFO FileSourceStrategy: Output Data Schema: struct<value: string>

22/07/18 19:58:27 INFO MemoryStore: Block broadcast_0 stored as values in memory (estimated size 348.6 KiB, free 898.2 MiB)

22/07/18 19:58:27 INFO MemoryStore: Block broadcast_0_piece0 stored as bytes in memory (estimated size 33.7 KiB, free 898.1 MiB)

22/07/18 19:58:27 INFO BlockManagerInfo: Added broadcast_0_piece0 in memory on 192.168.15.19:57168 (size: 33.7 KiB, free: 898.5 MiB)

22/07/18 19:58:27 INFO SparkContext: Created broadcast 0 from json at DemoSparkSQL.scala:10

22/07/18 19:58:27 INFO FileSourceScanExec: Planning scan with bin packing, max size: 4194304 bytes, open cost is considered as scanning 4194304 bytes.

22/07/18 19:58:27 INFO SparkContext: Starting job: json at DemoSparkSQL.scala:10

22/07/18 19:58:27 INFO DAGScheduler: Got job 0 (json at DemoSparkSQL.scala:10) with 1 output partitions

22/07/18 19:58:27 INFO DAGScheduler: Final stage: ResultStage 0 (json at DemoSparkSQL.scala:10)

22/07/18 19:58:27 INFO DAGScheduler: Parents of final stage: List()

22/07/18 19:58:27 INFO DAGScheduler: Missing parents: List()

22/07/18 19:58:27 INFO DAGScheduler: Submitting ResultStage 0 (MapPartitionsRDD[3] at json at DemoSparkSQL.scala:10), which has no missing parents

22/07/18 19:58:27 INFO MemoryStore: Block broadcast_1 stored as values in memory (estimated size 14.2 KiB, free 898.1 MiB)

22/07/18 19:58:27 INFO MemoryStore: Block broadcast_1_piece0 stored as bytes in memory (estimated size 6.7 KiB, free 898.1 MiB)

22/07/18 19:58:27 INFO BlockManagerInfo: Added broadcast_1_piece0 in memory on 192.168.15.19:57168 (size: 6.7 KiB, free: 898.5 MiB)

22/07/18 19:58:27 INFO SparkContext: Created broadcast 1 from broadcast at DAGScheduler.scala:1513

22/07/18 19:58:27 INFO DAGScheduler: Submitting 1 missing tasks from ResultStage 0 (MapPartitionsRDD[3] at json at DemoSparkSQL.scala:10) (first 15 tasks are for partitions Vector(0))

22/07/18 19:58:27 INFO TaskSchedulerImpl: Adding task set 0.0 with 1 tasks resource profile 0

22/07/18 19:58:27 INFO TaskSetManager: Starting task 0.0 in stage 0.0 (TID 0) (192.168.15.19, executor driver, partition 0, PROCESS_LOCAL, 7881 bytes) taskResourceAssignments Map()

22/07/18 19:58:27 INFO Executor: Running task 0.0 in stage 0.0 (TID 0)

22/07/18 19:58:27 INFO FileScanRDD: Reading File path: file:///C:/Users/Administrator/IdeaProjects/baidu1/src/info.json, range: 0-191, partition values: [empty row]

22/07/18 19:58:28 INFO CodeGenerator: Code generated in 193.9552 ms

22/07/18 19:58:28 INFO Executor: Finished task 0.0 in stage 0.0 (TID 0). 2167 bytes result sent to driver

22/07/18 19:58:28 INFO TaskSetManager: Finished task 0.0 in stage 0.0 (TID 0) in 553 ms on 192.168.15.19 (executor driver) (1/1)

22/07/18 19:58:28 INFO TaskSchedulerImpl: Removed TaskSet 0.0, whose tasks have all completed, from pool

22/07/18 19:58:28 INFO DAGScheduler: ResultStage 0 (json at DemoSparkSQL.scala:10) finished in 0.728 s

22/07/18 19:58:28 INFO DAGScheduler: Job 0 is finished. Cancelling potential speculative or zombie tasks for this job

22/07/18 19:58:28 INFO TaskSchedulerImpl: Killing all running tasks in stage 0: Stage finished

22/07/18 19:58:28 INFO DAGScheduler: Job 0 finished: json at DemoSparkSQL.scala:10, took 0.766775 s

22/07/18 19:58:28 INFO FileSourceStrategy: Pushed Filters: IsNotNull(age),GreaterThanOrEqual(age,20)

22/07/18 19:58:28 INFO FileSourceStrategy: Post-Scan Filters: isnotnull(age#8L),(age#8L >= 20)

22/07/18 19:58:28 INFO FileSourceStrategy: Output Data Schema: struct<age: bigint, name: string>

22/07/18 19:58:28 INFO CodeGenerator: Code generated in 19.7363 ms

22/07/18 19:58:28 INFO MemoryStore: Block broadcast_2 stored as values in memory (estimated size 348.5 KiB, free 897.8 MiB)

22/07/18 19:58:28 INFO MemoryStore: Block broadcast_2_piece0 stored as bytes in memory (estimated size 33.7 KiB, free 897.7 MiB)

22/07/18 19:58:28 INFO BlockManagerInfo: Added broadcast_2_piece0 in memory on 192.168.15.19:57168 (size: 33.7 KiB, free: 898.4 MiB)

22/07/18 19:58:28 INFO SparkContext: Created broadcast 2 from show at DemoSparkSQL.scala:14

22/07/18 19:58:28 INFO FileSourceScanExec: Planning scan with bin packing, max size: 4194304 bytes, open cost is considered as scanning 4194304 bytes.

22/07/18 19:58:28 INFO SparkContext: Starting job: show at DemoSparkSQL.scala:14

22/07/18 19:58:28 INFO DAGScheduler: Got job 1 (show at DemoSparkSQL.scala:14) with 1 output partitions

22/07/18 19:58:28 INFO DAGScheduler: Final stage: ResultStage 1 (show at DemoSparkSQL.scala:14)

22/07/18 19:58:28 INFO DAGScheduler: Parents of final stage: List()

22/07/18 19:58:28 INFO DAGScheduler: Missing parents: List()

22/07/18 19:58:28 INFO DAGScheduler: Submitting ResultStage 1 (MapPartitionsRDD[7] at show at DemoSparkSQL.scala:14), which has no missing parents

22/07/18 19:58:28 INFO MemoryStore: Block broadcast_3 stored as values in memory (estimated size 13.8 KiB, free 897.7 MiB)

22/07/18 19:58:28 INFO MemoryStore: Block broadcast_3_piece0 stored as bytes in memory (estimated size 6.9 KiB, free 897.7 MiB)

22/07/18 19:58:28 INFO BlockManagerInfo: Added broadcast_3_piece0 in memory on 192.168.15.19:57168 (size: 6.9 KiB, free: 898.4 MiB)

22/07/18 19:58:28 INFO SparkContext: Created broadcast 3 from broadcast at DAGScheduler.scala:1513

22/07/18 19:58:28 INFO DAGScheduler: Submitting 1 missing tasks from ResultStage 1 (MapPartitionsRDD[7] at show at DemoSparkSQL.scala:14) (first 15 tasks are for partitions Vector(0))

22/07/18 19:58:28 INFO TaskSchedulerImpl: Adding task set 1.0 with 1 tasks resource profile 0

22/07/18 19:58:28 INFO TaskSetManager: Starting task 0.0 in stage 1.0 (TID 1) (192.168.15.19, executor driver, partition 0, PROCESS_LOCAL, 7881 bytes) taskResourceAssignments Map()

22/07/18 19:58:28 INFO Executor: Running task 0.0 in stage 1.0 (TID 1)

22/07/18 19:58:28 INFO FileScanRDD: Reading File path: file:///C:/Users/Administrator/IdeaProjects/baidu1/src/info.json, range: 0-191, partition values: [empty row]

22/07/18 19:58:28 INFO CodeGenerator: Code generated in 13.8558 ms

22/07/18 19:58:28 INFO CodeGenerator: Code generated in 6.469 ms

22/07/18 19:58:28 INFO Executor: Finished task 0.0 in stage 1.0 (TID 1). 1755 bytes result sent to driver

22/07/18 19:58:28 INFO TaskSetManager: Finished task 0.0 in stage 1.0 (TID 1) in 128 ms on 192.168.15.19 (executor driver) (1/1)

22/07/18 19:58:28 INFO TaskSchedulerImpl: Removed TaskSet 1.0, whose tasks have all completed, from pool

22/07/18 19:58:28 INFO DAGScheduler: ResultStage 1 (show at DemoSparkSQL.scala:14) finished in 0.147 s

22/07/18 19:58:28 INFO DAGScheduler: Job 1 is finished. Cancelling potential speculative or zombie tasks for this job

22/07/18 19:58:28 INFO TaskSchedulerImpl: Killing all running tasks in stage 1: Stage finished

22/07/18 19:58:28 INFO DAGScheduler: Job 1 finished: show at DemoSparkSQL.scala:14, took 0.152033 s

22/07/18 19:58:28 INFO CodeGenerator: Code generated in 13.3474 ms

+---+------------+

|age| name|

+---+------------+

| 20| 静怡的梦莹|

| 21|潇洒的龙姑娘|

| 21| 优雅的秋兰|

+---+------------+

22/07/18 19:58:28 INFO SparkUI: Stopped Spark web UI at http://192.168.15.19:4040

22/07/18 19:58:28 INFO MapOutputTrackerMasterEndpoint: MapOutputTrackerMasterEndpoint stopped!

22/07/18 19:58:28 INFO MemoryStore: MemoryStore cleared

22/07/18 19:58:28 INFO BlockManager: BlockManager stopped

22/07/18 19:58:28 INFO BlockManagerMaster: BlockManagerMaster stopped

22/07/18 19:58:28 INFO OutputCommitCoordinator$OutputCommitCoordinatorEndpoint: OutputCommitCoordinator stopped!

22/07/18 19:58:28 INFO SparkContext: Successfully stopped SparkContext

22/07/18 19:58:28 INFO ShutdownHookManager: Shutdown hook called

22/07/18 19:58:28 INFO ShutdownHookManager: Deleting directory C:\Users\Administrator\AppData\Local\Temp\spark-04b6bffe-345b-4fc2-aaa2-a02c88e0dea4

Process finished with exit code 0

5. Demo2
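A minimal sketch of queries that would produce several of the tables below, taking the six rows of the first table as input. The view name `users`, the helper `register`, the in-memory data source, and the exact SQL text are assumptions; they are merely consistent with the output shown, and the remaining tables are not covered.

```scala
import org.apache.spark.sql.SparkSession

object DemoSparkSQL2Sketch {
  // Register the six rows of the first result table as a temporary view.
  def register(spark: SparkSession): Unit = {
    import spark.implicits._
    Seq(
      (19L, "2001-05-06 12:00:00", "娉婷的星望", "女"),
      (20L, "1999-05-02 12:00:00", "静怡的雷静", "女"),
      (22L, "2000-01-06 12:00:00", "潇洒的春梦", "女"),
      (21L, "2001-06-06 12:00:00", "优雅的蓉菲", "女"),
      (19L, "2002-05-09 12:00:00", "玲珑的文静", "女"),
      (18L, "2004-05-09 12:00:00", "风雅的晓凤", "女")
    ).toDF("age", "birthday", "name", "sex")
      .createOrReplaceTempView("users") // hypothetical view name
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("Spark SQL")
      .master("local[*]") // assumption: local mode
      .getOrCreate()
    register(spark)

    spark.sql("SELECT * FROM users").show()                    // all rows
    spark.sql("SELECT * FROM users WHERE age > 20").show()     // comparison filter
    spark.sql("SELECT * FROM users WHERE name LIKE '%雅%'").show() // pattern match
    spark.sql("SELECT name, age FROM users ORDER BY age DESC LIMIT 3").show()
    spark.sql("SELECT sex, count(1) FROM users GROUP BY sex").show()
    spark.sql("SELECT * FROM users ORDER BY birthday").show()  // oldest first
    spark.sql(
      "SELECT sum(age), count(1), round(avg(age), 2), max(age), min(age) FROM users"
    ).show()

    spark.stop()
  }
}
```

Registering a temporary view is what lets plain SQL strings refer to the DataFrame by name; the view lives only as long as the session.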

+---+-------------------+----------+---+

|age| birthday| name|sex|

+---+-------------------+----------+---+

| 19|2001-05-06 12:00:00|娉婷的星望| 女|

| 20|1999-05-02 12:00:00|静怡的雷静| 女|

| 22|2000-01-06 12:00:00|潇洒的春梦| 女|

| 21|2001-06-06 12:00:00|优雅的蓉菲| 女|

| 19|2002-05-09 12:00:00|玲珑的文静| 女|

| 18|2004-05-09 12:00:00|风雅的晓凤| 女|

+---+-------------------+----------+---+

+---+-------------------+----------+---+

|age| birthday| name|sex|

+---+-------------------+----------+---+

| 22|2000-01-06 12:00:00|潇洒的春梦| 女|

| 21|2001-06-06 12:00:00|优雅的蓉菲| 女|

+---+-------------------+----------+---+

+---+-------------------+----------+---+

|age| birthday| name|sex|

+---+-------------------+----------+---+

| 22|2000-01-06 12:00:00|潇洒的春梦| 女|

| 21|2001-06-06 12:00:00|优雅的蓉菲| 女|

+---+-------------------+----------+---+

+---+-------------------+----------+---+

|age| birthday| name|sex|

+---+-------------------+----------+---+

| 21|2001-06-06 12:00:00|优雅的蓉菲| 女|

| 18|2004-05-09 12:00:00|风雅的晓凤| 女|

+---+-------------------+----------+---+

+----------+---+

| name|age|

+----------+---+

|娉婷的星望| 19|

|静怡的雷静| 20|

|潇洒的春梦| 22|

|优雅的蓉菲| 21|

|玲珑的文静| 19|

|风雅的晓凤| 18|

+----------+---+

+----------+---+

| name|age|

+----------+---+

|优雅的蓉菲| 21|

+----------+---+

+----------+---+

| name|age|

+----------+---+

|潇洒的春梦| 22|

|优雅的蓉菲| 21|

|静怡的雷静| 20|

+----------+---+

+---+-------------------+----------+---+

|age| birthday| name|sex|

+---+-------------------+----------+---+

| 19|2001-05-06 12:00:00|娉婷的星望| 女|

| 22|2000-01-06 12:00:00|潇洒的春梦| 女|

| 21|2001-06-06 12:00:00|优雅的蓉菲| 女|

| 19|2002-05-09 12:00:00|玲珑的文静| 女|

| 18|2004-05-09 12:00:00|风雅的晓凤| 女|

+---+-------------------+----------+---+

+---+-------------------+----------+---+

|age| birthday| name|sex|

+---+-------------------+----------+---+

| 19|2001-05-06 12:00:00|娉婷的星望| 女|

| 21|2001-06-06 12:00:00|优雅的蓉菲| 女|

| 19|2002-05-09 12:00:00|玲珑的文静| 女|

+---+-------------------+----------+---+

+---+--------+

|sex|count(1)|

+---+--------+

| 女| 6|

+---+--------+

+---+-------------------+----------+---+

|age| birthday| name|sex|

+---+-------------------+----------+---+

| 20|1999-05-02 12:00:00|静怡的雷静| 女|

| 22|2000-01-06 12:00:00|潇洒的春梦| 女|

| 19|2001-05-06 12:00:00|娉婷的星望| 女|

| 21|2001-06-06 12:00:00|优雅的蓉菲| 女|

| 19|2002-05-09 12:00:00|玲珑的文静| 女|

| 18|2004-05-09 12:00:00|风雅的晓凤| 女|

+---+-------------------+----------+---+

+--------+--------+------------------+--------+--------+

|sum(age)|count(1)|round(avg(age), 2)|max(age)|min(age)|

+--------+--------+------------------+--------+--------+

| 119| 6| 19.83| 22| 18|

+--------+--------+------------------+--------+--------+
