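2020 Artificial Neural Networks, Assignment 1: Reference Answers, Part 9

This article is Part 9 of the reference answers for Assignment 1 of 2020 Artificial Neural Networks.

➤09 Reference Answer to Problem 9

1. Data preparation

The training samples (21 characters) and their target outputs in char7data.txt are converted into two matrices: chardata and targetdata.

The conversion code is given in the hw19data part of the programs at the end of this article: the data preparation program.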
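As a quick sanity check on the data layout, the sketch below renders one 63-element input vector as a character bitmap. It assumes (the article does not state this explicitly) that each vector is a 9-row by 7-column bitmap stored row by row; `render_glyph` is a hypothetical helper name. The vector is the first sample listed in the data preparation program, whose target is `[1, 0, 0, 0, 0, 0, 0]`.

```python
# First 63-element sample copied from the data preparation program.
SAMPLE = [0, 0, 1, 1, 0, 0, 0,
          0, 0, 0, 1, 0, 0, 0,
          0, 0, 0, 1, 0, 0, 0,
          0, 0, 1, 0, 1, 0, 0,
          0, 0, 1, 0, 1, 0, 0,
          0, 1, 1, 1, 1, 1, 0,
          0, 1, 0, 0, 0, 1, 0,
          0, 1, 0, 0, 0, 1, 0,
          1, 1, 1, 0, 1, 1, 1]


def render_glyph(vec, rows=9, cols=7):
    """Return the bitmap as a list of strings, '#' for 1 and '.' for 0.

    Assumption: row-major 9 x 7 layout (inferred from the vector length 63).
    """
    if len(vec) != rows * cols:
        raise ValueError("expected %d values, got %d" % (rows * cols, len(vec)))
    return ["".join("#" if vec[r * cols + c] else "." for c in range(cols))
            for r in range(rows)]


if __name__ == "__main__":
    print("\n".join(render_glyph(SAMPLE)))
```

Under that layout assumption the printed pattern resembles the letter A, which suggests the first target position encodes that letter class.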

2. Building the networks

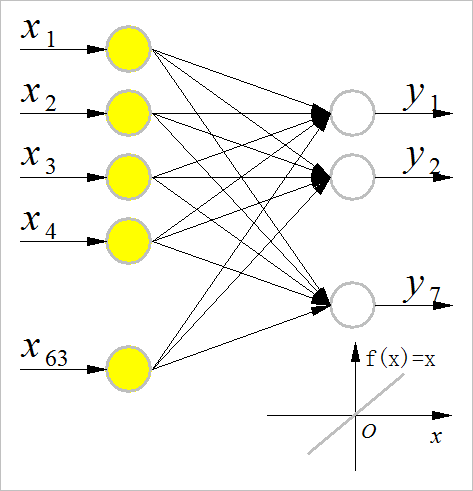

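(1) Single-layer network

The number of input nodes equals the length of a training sample's input vector, and the number of output nodes equals the length of its output vector. The output nodes use a linear transfer function.

The structure is shown in the figure below:

▲ Single-layer network structure

The input vector $\bar x$ and the network output vector $\bar y$ are related by $\bar y = W \cdot \bar x$.

All sample input vectors form the matrix: $X = \left\{ {\bar x_1 ,\bar x_2 , \cdots ,\bar x_{21} } \right\}$

All sample output vectors form the matrix: $Y = \left\{ {\bar y_1 ,\bar y_2 , \cdots ,\bar y_{21} } \right\}$

Then: $Y = W \cdot X$.

By the least-squares method, $W$ can be obtained as: $W^* = Y \cdot \left( {X^T \cdot X + \lambda \cdot I} \right)^{-1} \cdot X^T$

where $\lambda = 0.00001$.

With the solved network weights $W^*$, the network outputs for all samples are: $Y^* = W^* \cdot X$

The network outputs are then converted back to binary values (0, 1) with a thresholding function (a threshold of 0.5 is used in the appendix program): $f(y) = 1$ if $y > 0.5$, and $f(y) = 0$ otherwise. Comparing the binarized outputs with the training targets shows that all samples are classified correctly.

The Python implementation of the algorithm is given in the appendix: single-layer neural network program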
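The closed-form solution above can be tried on small synthetic data. The sketch below is not the author's program: it uses random matrices with the same shapes as the problem (63 inputs, 7 outputs, 21 samples), solves the regularized system with `numpy.linalg.solve` instead of forming an explicit inverse, and thresholds at 0.5. The names `fit_single_layer` and `binarize` are illustrative.

```python
import numpy as np


def fit_single_layer(X, Y, lam=1e-5):
    """Return W = Y (X^T X + lam I)^-1 X^T for column-sample matrices X and Y."""
    n = X.shape[1]                      # number of samples (columns)
    G = X.T @ X + lam * np.eye(n)       # n x n regularized Gram matrix
    # G is symmetric, so solve(G, Y.T).T equals Y @ inv(G).
    return np.linalg.solve(G, Y.T).T @ X.T


def binarize(Y, threshold=0.5):
    """Map values above the threshold to 1 and all others to 0."""
    return (Y > threshold).astype(int)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.integers(0, 2, size=(63, 21)).astype(float)   # random stand-in inputs
    Y = np.eye(7)[:, np.arange(21) % 7]                    # 7 x 21 one-hot targets
    W = fit_single_layer(X, Y)
    errors = int(np.sum(binarize(W @ X) != Y))
    print(W.shape, errors)
```

Because there are more inputs (63) than samples (21), the linear system is underdetermined whenever the sample vectors are linearly independent, which is why an exact fit of the training set is possible with a single linear layer.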

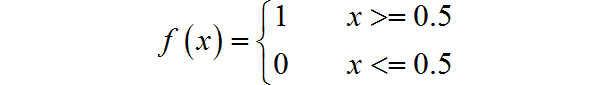

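(2) BP network with a single hidden layer

The network structure is shown in the figure below:

- The numbers of input and output nodes equal the lengths of the training samples' input and output vectors, respectively;

- Number of hidden nodes: chosen from 4 to 10.

▲ Network structure

The Python program for the single-hidden-layer BP network is given in the appendix: 3. Single-hidden-layer BP network Python program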

-

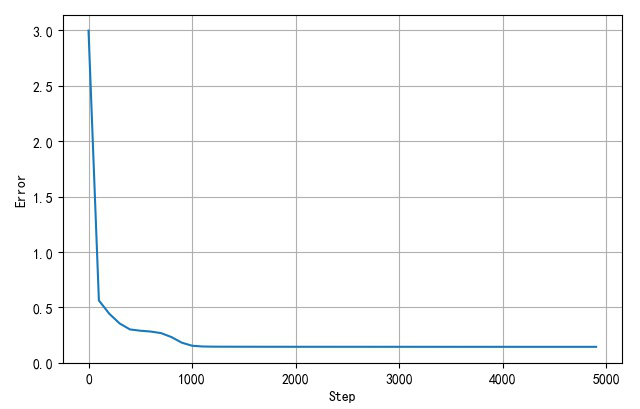

学习速率: η = 0.5 \eta = 0.5 η=0.5

-

训练训练次数:5000

-

网络误差训练收敛过程如下图所示:

▲ 网络训练收敛过程 -

网络训练样本错误个数:0

| Number of hidden nodes | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |
|---|---|---|---|---|---|---|---|---|---|
| Training-sample errors | 15 | 9 | 4 | 0 | 0 | 0 | 0 | 0 | 0 |
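A sweep over hidden-layer sizes like the one in the table could be scripted as follows. This is a minimal sketch, not the author's code: it re-implements a sigmoid-hidden, linear-output network in plain NumPy and uses random stand-in data with the same shapes as chardata and targetdata, so its error counts will not reproduce the table. The function name `train_and_count_errors` is hypothetical; the default learning rate and iteration count follow the values quoted in the text.

```python
import numpy as np


def train_and_count_errors(X, Y, n_hidden, lr=0.5, iters=5000, seed=0):
    """Train by full-batch gradient descent and count wrong output bits.

    X: samples x inputs, Y: outputs x samples (same layout as the appendix code).
    Outputs are thresholded at 0.5 before comparison with Y.
    """
    rng = np.random.default_rng(seed)
    m = X.shape[0]
    W1 = rng.standard_normal((n_hidden, X.shape[1])) * 0.5
    b1 = np.zeros((n_hidden, 1))
    W2 = rng.standard_normal((Y.shape[0], n_hidden)) * 0.5
    b2 = np.zeros((Y.shape[0], 1))
    for _ in range(iters):
        A1 = 1.0 / (1.0 + np.exp(-(W1 @ X.T + b1)))   # hidden activations
        A2 = W2 @ A1 + b2                              # linear output layer
        dZ2 = A2 - Y                                   # output error
        dZ1 = (W2.T @ dZ2) * A1 * (1.0 - A1)           # back-propagated error
        W2 -= lr * (dZ2 @ A1.T) / m
        b2 -= lr * dZ2.sum(axis=1, keepdims=True) / m
        W1 -= lr * (dZ1 @ X) / m
        b1 -= lr * dZ1.sum(axis=1, keepdims=True) / m
    A1 = 1.0 / (1.0 + np.exp(-(W1 @ X.T + b1)))
    out = W2 @ A1 + b2
    return int(np.sum((out > 0.5) != (Y > 0.5)))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.integers(0, 2, size=(21, 63)).astype(float)   # stand-in for chardata
    Y = np.eye(7)[:, np.arange(21) % 7]                    # stand-in for targetdata
    for n_hidden in range(2, 11):
        print(n_hidden, train_and_count_errors(X, Y, n_hidden))
```

Since the weights are initialized randomly, repeated runs with different seeds can give different error counts for the same hidden-layer size, so a single row of counts is best read as one realization rather than a fixed property of the size.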

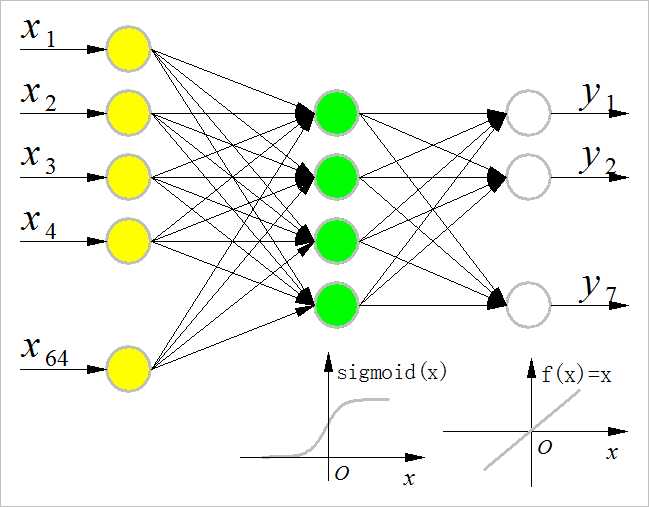

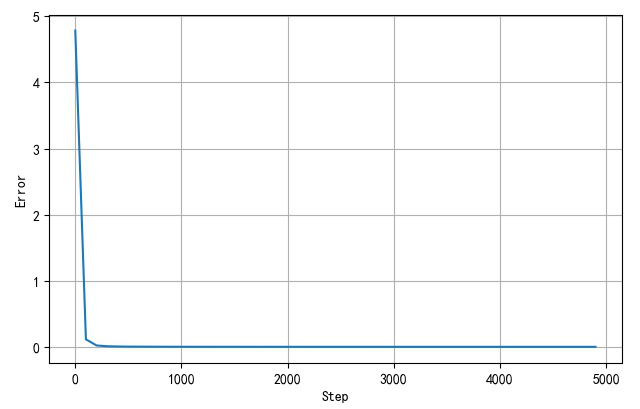

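(3) BP network with two hidden layers

The network structure is shown in the figure below:

- Node counts of the two hidden layers: 4 and 5

▲ Two-hidden-layer network structure

The Python implementation of the network is given in the appendix: 4. Two-hidden-layer BP network program

- Training learning rate: $\eta = 0.8$

- Number of training iterations: 5000

- Error rate of the network after training: 0

▲ Network training error convergence

If the number of hidden-layer nodes is 4 or fewer, the trained network still misclassifies some of the 21 training samples.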
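For reference, the forward and backward passes implemented by the two-hidden-layer program in the appendix can be written compactly as below. The notation is introduced here for illustration and is a transcription of that code rather than an additional derivation by the author: $\sigma$ is the sigmoid function, $\odot$ is the element-wise product, $X$ holds one sample per column (the program stores samples in rows and transposes them), $m$ is the number of samples, and $\eta$ is the learning rate.

$$
\begin{aligned}
A_1 &= \sigma(W_1 X + b_1), & A_2 &= \sigma(W_2 A_1 + b_2), & A_3 &= W_3 A_2 + b_3 \\
\delta_3 &= A_3 - Y, & \delta_2 &= (W_3^T \delta_3) \odot A_2 \odot (1 - A_2), & \delta_1 &= (W_2^T \delta_2) \odot A_1 \odot (1 - A_1) \\
\Delta W_3 &= -\frac{\eta}{m}\,\delta_3 A_2^T, & \Delta W_2 &= -\frac{\eta}{m}\,\delta_2 A_1^T, & \Delta W_1 &= -\frac{\eta}{m}\,\delta_1 X^T
\end{aligned}
$$

Each bias update has the same form, $\Delta b_k = -\frac{\eta}{m}\sum_{i} \delta_k^{(i)}$, where the sum runs over the sample columns of $\delta_k$.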

➤※ Python programs for Assignment 1-9

1. Data preparation program: hw19data.py

```python
#!/usr/local/bin/python
# -*- coding: gbk -*-
#============================================================
# HW19DATA.PY -- by Dr. ZhuoQing 2020-11-24
#
# Note:
#============================================================

# headm is the author's own helper module (not listed in this article);
# it appears to supply printf/printff and NumPy names such as array.
from headm import *

# Alternating entries: a 63-element character bitmap, then its 7-element target.
sdata = ('[0, 0, 1, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 1, 1, 1, 1, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 1, 1, 1, 0, 1, 1, 1]',\
         '[1, 0, 0, 0, 0, 0, 0]',\
         '[1, 1, 1, 1, 1, 1, 0, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 1, 1, 1, 1, 0, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1, 0]',\
         '[0, 1, 0, 0, 0, 0, 0]',\
         '[0, 0, 1, 1, 1, 0, 0, 0, 1, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, 0, 1, 1, 1, 1, 0]',\
         '[0, 0, 1, 0, 0, 0, 0]',\
         '[1, 1, 1, 0, 0, 1, 1, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 1, 1, 1, 0, 0, 1, 1]',\
         '[0, 0, 0, 0, 0, 0, 1]',\
         '[0, 0, 0, 1, 1, 1, 1, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 1, 1, 1, 0, 0]',\
         '[0, 0, 0, 0, 0, 1, 0]',\
         '[1, 1, 1, 1, 1, 1, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 1, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1, 1]',\
         '[0, 0, 0, 0, 1, 0, 0]',\
         '[1, 1, 1, 1, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 1, 0, 1, 1, 1, 1, 1, 0, 0]',\
         '[0, 0, 0, 1, 0, 0, 0]',\
         '[0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 0, 1, 1, 1, 1, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0]',\
         '[1, 0, 0, 0, 0, 0, 0]',\
         '[0, 0, 1, 1, 1, 1, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1, 0, 1, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1, 0]',\
         '[0, 1, 0, 0, 0, 0, 0]',\
         '[0, 0, 1, 1, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 1, 0, 0, 0, 1, 1, 1, 0, 0]',\
         '[0, 0, 1, 0, 0, 0, 0]',\
         '[1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 0, 1, 0]',\
         '[0, 0, 0, 0, 0, 0, 1]',\
         '[0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 1, 1, 1, 0, 0]',\
         '[0, 0, 0, 0, 0, 1, 0]',\
         '[0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1]',\
         '[0, 0, 0, 0, 1, 0, 0]',\
         '[0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 1, 0, 1, 1, 1, 1, 1, 0, 0]',\
         '[0, 0, 0, 1, 0, 0, 0]',\
         '[0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 0, 1, 1, 1, 1, 1, 0, 1, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 1, 1, 1, 0, 0, 0, 1, 1]',\
         '[1, 0, 0, 0, 0, 0, 0]',\
         '[1, 1, 1, 1, 1, 1, 0, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 1, 1, 1, 1, 0, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1, 0]',\
         '[0, 1, 0, 0, 0, 0, 0]',\
         '[0, 0, 1, 1, 1, 0, 1, 0, 1, 0, 0, 0, 1, 1, 1, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 1, 0, 0, 0, 1, 1, 1, 0, 0]',\
         '[0, 0, 1, 0, 0, 0, 0]',\
         '[1, 1, 1, 0, 0, 1, 1, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 1, 1, 1, 0, 0, 1, 1]',\
         '[0, 0, 0, 0, 0, 0, 1]',\
         '[0, 0, 0, 0, 1, 1, 1, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 1, 1, 1, 0, 0]',\
         '[0, 0, 0, 0, 0, 1, 0]',\
         '[1, 1, 1, 1, 1, 1, 0, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 1, 0, 0, 0, 1, 1, 1, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1, 1]',\
         '[0, 0, 0, 0, 1, 0, 0]',\
         '[1, 1, 1, 1, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 1, 0, 1, 1, 1, 1, 1, 0, 0]',\
         '[0, 0, 0, 1, 0, 0, 0]',)

#------------------------------------------------------------
chardata = []
targetdata = []

# Entries longer than 7 are input bitmaps; the rest are target vectors.
for s in sdata:
    data = eval(s)
    if len(data) > 7: chardata.append(data)
    else: targetdata.append(data)

printf(chardata, targetdata)

chardata = array(chardata)              # 21 x 63, one sample per row
targetdata = array(targetdata).T        # 7 x 21, one sample per column

if __name__ == "__main__":
    printff(chardata, targetdata)

#------------------------------------------------------------
# END OF FILE : HW19DATA.PY
#============================================================
```
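Because `eval` executes arbitrary expressions, a more defensive variant of the parsing loop might use `ast.literal_eval` from the standard library, which only accepts Python literals. The following is a sketch under that assumption, not the author's code; `split_samples` and the short `demo` tuple are illustrative, and the length test against 7 mirrors the original loop.

```python
import ast

import numpy as np


def split_samples(lines, target_len=7):
    """Split list-literal strings into (inputs, targets) arrays.

    Lists longer than target_len are treated as input vectors, the rest as
    target vectors. Inputs are returned with one sample per row, targets
    with one sample per column.
    """
    chars, targets = [], []
    for s in lines:
        data = ast.literal_eval(s)      # parses literals only, runs no code
        (chars if len(data) > target_len else targets).append(data)
    return np.array(chars), np.array(targets).T


if __name__ == "__main__":
    # Shortened stand-in data: 9-element inputs instead of 63-element bitmaps.
    demo = ('[0, 1, 1, 0, 1, 0, 0, 1, 1]', '[1, 0, 0, 0, 0, 0, 0]',
            '[1, 0, 0, 1, 0, 1, 1, 0, 0]', '[0, 1, 0, 0, 0, 0, 0]')
    X, Y = split_samples(demo)
    print(X.shape, Y.shape)   # (2, 9) (7, 2)
```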


2. Single-layer neural network program

```python
#!/usr/local/bin/python
# -*- coding: gbk -*-
#============================================================
# HW19CONCEPTION.PY -- by Dr. ZhuoQing 2020-11-24
#
# Note:
#============================================================

from headm import *
import hw19data

#------------------------------------------------------------
x_train = hw19data.chardata.T       # 63 x 21, one sample per column
y_train = hw19data.targetdata       # 7 x 21, one sample per column

printf(x_train.shape)

hn = x_train.shape[1]               # number of samples
printf(hn)

# Regularized pseudo-inverse (X^T X + lambda I)^-1 X^T with lambda = 0.00001
x_inv = dot(linalg.inv(eye(hn) * 0.00001 + dot(x_train.T, x_train)), x_train.T)
W = dot(y_train, x_inv)
printf(W.shape)

y_net = dot(W, x_train)

# Threshold the linear outputs at 0.5 to obtain binary values
y_net[y_net <= 0.5] = 0
y_net[y_net > 0.5] = 1

printf(y_net)
printf(y_train)

error = sum(y_net != y_train)       # number of mismatched output bits
printf(error)

#------------------------------------------------------------
# END OF FILE : HW19CONCEPTION.PY
#============================================================
```


3. Single-hidden-layer BP network Python program

(1) Single-hidden-layer BP network

```python
#!/usr/local/bin/python
# -*- coding: gbk -*-
#============================================================
# HW19BPSS.PY -- by Dr. ZhuoQing 2020-11-24
#
# Note:
#============================================================

from headm import *
from bp1ss import *
import hw19data

#------------------------------------------------------------
x_train = hw19data.chardata         # 21 x 63, one sample per row
y_train = hw19data.targetdata       # 7 x 21, one sample per column

#------------------------------------------------------------
# Define the training
DISP_STEP = 100

#------------------------------------------------------------
pltgif = PlotGIF()

#------------------------------------------------------------
def train(X, Y, num_iterations, learning_rate, print_cost=False, Hn=10):
    n_x = X.shape[1]
    n_y = Y.shape[0]
    n_h = Hn

    lr = learning_rate

    parameters = initialize_parameters(n_x, n_h, n_y)

    XX,YY = X, Y                    # full-batch training on the data passed in

    costdim = []

    for i in range(0, num_iterations):
        A2, cache = forward_propagate(XX, parameters)
        cost = calculate_cost(A2, YY, parameters)
        grads = backward_propagate(parameters, cache, XX, YY)
        parameters = update_parameters(parameters, grads, lr)

        # Record the cost once every DISP_STEP iterations
        if print_cost and i % DISP_STEP == 0:
            printf('Cost after iteration:%i: %f'%(i, cost))
            costdim.append(cost)

    return parameters, costdim

#------------------------------------------------------------
parameters,costdim = train(x_train, y_train, 5000, 0.5, True, 6)

A2, cache = forward_propagate(x_train, parameters)

# Threshold the linear outputs at 0.5 and count mismatched output bits
A2[A2 > 0.5] = 1
A2[A2 <= 0.5] = 0

error = sum(A2!=y_train)
printf(error)

stepdim = array(range(len(costdim))) * DISP_STEP
plt.plot(stepdim, costdim)
plt.xlabel("Step")
plt.ylabel("Error")
plt.grid(True)
plt.tight_layout()
plt.show()

#------------------------------------------------------------
# END OF FILE : HW19BPSS.PY
#============================================================
```


(2) Python implementation of the BP network

```python
#!/usr/local/bin/python
# -*- coding: gbk -*-
#============================================================
# BP1SS.PY -- by Dr. ZhuoQing 2020-11-17
#
# Note:
#============================================================

from headm import *

#------------------------------------------------------------
# Samples data construction
random.seed(int(time.time()))

#------------------------------------------------------------
def shuffledata(X, Y):
    id = list(range(X.shape[0]))
    random.shuffle(id)
    return X[id], (Y.T[id]).T

#------------------------------------------------------------
# Define and initialize the NN
def initialize_parameters(n_x, n_h, n_y):
    W1 = random.randn(n_h, n_x) * 0.5       # dot(W1,X.T)
    W2 = random.randn(n_y, n_h) * 0.5       # dot(W2,Z1)
    b1 = zeros((n_h, 1))                    # Column vector
    b2 = zeros((n_y, 1))                    # Column vector

    parameters = {'W1':W1,
                  'b1':b1,
                  'W2':W2,
                  'b2':b2}

    return parameters

#------------------------------------------------------------
# Forward propagation
# X:row->sample;
# Z2:col->sample
def forward_propagate(X, parameters):
    W1 = parameters['W1']
    b1 = parameters['b1']
    W2 = parameters['W2']
    b2 = parameters['b2']

    Z1 = dot(W1, X.T) + b1                  # X:row-->sample; Z1:col-->sample
    A1 = 1/(1+exp(-Z1))                     # Sigmoid hidden layer

    Z2 = dot(W2, A1) + b2                   # Z2:col-->sample
    A2 = Z2                                 # Linear output
#   A2 = 1/(1+exp(-Z2))

    cache = {'Z1':Z1,
             'A1':A1,
             'Z2':Z2,
             'A2':A2}

    return Z2, cache

#------------------------------------------------------------
# Calculate the cost
# A2,Y: col->sample
def calculate_cost(A2, Y, parameters):
    err = [x1-x2 for x1,x2 in zip(A2.T, Y.T)]
    cost = [dot(e,e) for e in err]          # Squared error of each sample
    return mean(cost)

#------------------------------------------------------------
# Backward propagation
def backward_propagate(parameters, cache, X, Y):
    m = X.shape[0]                          # Number of the samples

    W1 = parameters['W1']
    W2 = parameters['W2']
    A1 = cache['A1']
    A2 = cache['A2']

    dZ2 = (A2 - Y)

    dW2 = dot(dZ2, A1.T) / m
    db2 = sum(dZ2, axis=1, keepdims=True) / m

    dZ1 = dot(W2.T, dZ2) * (A1 * (1-A1))    # Sigmoid derivative: A1*(1-A1)

    dW1 = dot(dZ1, X) / m
    db1 = sum(dZ1, axis=1, keepdims=True) / m

    grads = {'dW1':dW1,
             'db1':db1,
             'dW2':dW2,
             'db2':db2}

    return grads

#------------------------------------------------------------
# Update the parameters
def update_parameters(parameters, grads, learning_rate):
    W1 = parameters['W1']
    b1 = parameters['b1']
    W2 = parameters['W2']
    b2 = parameters['b2']

    dW1 = grads['dW1']
    db1 = grads['db1']
    dW2 = grads['dW2']
    db2 = grads['db2']

    W1 = W1 - learning_rate * dW1
    W2 = W2 - learning_rate * dW2
    b1 = b1 - learning_rate * db1
    b2 = b2 - learning_rate * db2

    parameters = {'W1':W1,
                  'b1':b1,
                  'W2':W2,
                  'b2':b2}

    return parameters

#------------------------------------------------------------
# END OF FILE : BP1SS.PY
#============================================================
```
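The backward pass of BP1SS.PY can be checked numerically. Note that `calculate_cost` returns the mean squared error per sample while `backward_propagate` starts from `dZ2 = A2 - Y`, so the gradients it computes correspond to half of that cost, with the factor 2 effectively absorbed into the learning rate. The sketch below is an illustration on small random data with hypothetical helper names (`half_cost`, `analytic_dW1`, `numeric_dW1`); it compares the analytic gradient for `W1` against a central-difference estimate.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def half_cost(W1, b1, W2, b2, X, Y):
    """0.5 * mean over samples of the squared output error."""
    A2 = W2 @ sigmoid(W1 @ X.T + b1) + b2
    return 0.5 * np.sum((A2 - Y) ** 2) / X.shape[0]


def analytic_dW1(W1, b1, W2, b2, X, Y):
    """dW1 computed with the same formulas as backward_propagate."""
    m = X.shape[0]
    A1 = sigmoid(W1 @ X.T + b1)
    dZ2 = (W2 @ A1 + b2) - Y
    dZ1 = (W2.T @ dZ2) * (A1 * (1 - A1))
    return dZ1 @ X / m


def numeric_dW1(W1, b1, W2, b2, X, Y, eps=1e-6):
    """Central-difference estimate of d(half_cost)/dW1."""
    grad = np.zeros_like(W1)
    for idx in np.ndindex(*W1.shape):
        Wp, Wm = W1.copy(), W1.copy()
        Wp[idx] += eps
        Wm[idx] -= eps
        grad[idx] = (half_cost(Wp, b1, W2, b2, X, Y)
                     - half_cost(Wm, b1, W2, b2, X, Y)) / (2 * eps)
    return grad


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.integers(0, 2, size=(5, 8)).astype(float)   # 5 samples, 8 inputs
    Y = rng.integers(0, 2, size=(3, 5)).astype(float)   # 3 outputs, 5 samples
    W1, b1 = rng.standard_normal((4, 8)) * 0.5, np.zeros((4, 1))
    W2, b2 = rng.standard_normal((3, 4)) * 0.5, np.zeros((3, 1))
    diff = np.max(np.abs(analytic_dW1(W1, b1, W2, b2, X, Y)
                         - numeric_dW1(W1, b1, W2, b2, X, Y)))
    print("max abs difference:", diff)
```

A difference close to floating-point noise indicates that the analytic formulas and the cost definition agree; a difference by a constant factor would point to a scaling mismatch such as the factor 2 mentioned above.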


4. Two-hidden-layer BP network program

```python
#!/usr/local/bin/python
# -*- coding: gbk -*-
#============================================================
# HW19BP2.PY -- by Dr. ZhuoQing 2020-11-24
#
# Note:
#============================================================

from headm import *
from bp2sigmoid import *
import hw19data

#------------------------------------------------------------
random.seed(int(time.time()))

x_train = hw19data.chardata         # 21 x 63, one sample per row
y_train = hw19data.targetdata       # 7 x 21, one sample per column

#------------------------------------------------------------
# Define the training
DISP_STEP = 100

#------------------------------------------------------------
pltgif = PlotGIF()

#------------------------------------------------------------
def train(X, Y, num_iterations, learning_rate, print_cost=False, Hn=4, Hn1=4):
    n_x = X.shape[1]
    n_y = Y.shape[0]
    n_h = Hn                        # nodes in the first hidden layer
    n_h1 = Hn1                      # nodes in the second hidden layer

    lr = learning_rate

    parameters = initialize_parameters(n_x, n_h, n_h1, n_y)

    XX,YY = X, Y                    # full-batch training on the data passed in

    costdim = []

    for i in range(0, num_iterations):
        A2, cache = forward_propagate(XX, parameters)
        cost = calculate_cost(A2, YY, parameters)
        grads = backward_propagate(parameters, cache, XX, YY)
        parameters = update_parameters(parameters, grads, lr)

        # Record the cost once every DISP_STEP iterations
        if print_cost and i % DISP_STEP == 0:
            printf('Cost after iteration:%i: %f'%(i, cost))
            costdim.append(cost)

    return parameters, costdim

#------------------------------------------------------------
parameters,costdim = train(x_train, y_train, 5000, 0.8, True, 4, 5)

A2, cache = forward_propagate(x_train, parameters)

# Threshold the linear outputs at 0.5 and count mismatched output bits
A2[A2 > 0.5] = 1
A2[A2 <= 0.5] = 0

error = sum(A2!=y_train)
printf(error)

stepdim = array(range(len(costdim))) * DISP_STEP
plt.plot(stepdim, costdim)
plt.xlabel("Step")
plt.ylabel("Error")
plt.grid(True)
plt.tight_layout()
plt.show()

#------------------------------------------------------------
# END OF FILE : HW19BP2.PY
#============================================================
```


```python
#!/usr/local/bin/python
# -*- coding: gbk -*-
#============================================================
# BP2SIGMOID.PY -- by Dr. ZhuoQing 2020-11-17
#
# Note:
#============================================================

from headm import *

#------------------------------------------------------------
# Samples data construction
random.seed(int(time.time()))

#------------------------------------------------------------
def shuffledata(X, Y):
    id = list(range(X.shape[0]))
    random.shuffle(id)
    return X[id], (Y.T[id]).T

#------------------------------------------------------------
# Define and initialize the NN
def initialize_parameters(n_x, n_h, n_h1, n_y):
    W1 = random.randn(n_h, n_x) * 0.5       # dot(W1,X.T)
    W2 = random.randn(n_h1, n_h) * 0.5      # dot(W2,Z1)
    W3 = random.randn(n_y, n_h1) * 0.5      # dot(W3,Z2)
    b1 = zeros((n_h, 1))                    # Column vector
    b2 = zeros((n_h1, 1))
    b3 = zeros((n_y, 1))                    # Column vector

    parameters = {'W1':W1,
                  'b1':b1,
                  'W2':W2,
                  'b2':b2,
                  'W3':W3,
                  'b3':b3}

    return parameters

#------------------------------------------------------------
# Forward propagation
# X:row->sample;
# Z3:col->sample
def forward_propagate(X, parameters):
    W1 = parameters['W1']
    b1 = parameters['b1']
    W2 = parameters['W2']
    b2 = parameters['b2']
    W3 = parameters['W3']
    b3 = parameters['b3']

    Z1 = dot(W1, X.T) + b1                  # X:row-->sample; Z1:col-->sample
    A1 = 1/(1+exp(-Z1))

    Z2 = dot(W2, A1) + b2                   # Z2:col-->sample
    A2 = 1/(1+exp(-Z2))

    Z3 = dot(W3, A2) + b3
    A3 = Z3                                 # Linear output

    cache = {'Z1':Z1,
             'A1':A1,
             'Z2':Z2,
             'A2':A2,
             'Z3':Z3,
             'A3':A3}

    return Z3, cache

#------------------------------------------------------------
# Calculate the cost
# A3,Y: col->sample
def calculate_cost(A3, Y, parameters):
    err = [x1-x2 for x1,x2 in zip(A3.T, Y.T)]
    cost = [dot(e,e) for e in err]          # Squared error of each sample
    return mean(cost)

#------------------------------------------------------------
# Backward propagation
def backward_propagate(parameters, cache, X, Y):
    m = X.shape[0]                          # Number of the samples

    W1 = parameters['W1']
    W2 = parameters['W2']
    W3 = parameters['W3']
    A1 = cache['A1']
    A2 = cache['A2']
    A3 = cache['A3']

    dZ3 = (A3 - Y)
    dW3 = dot(dZ3, A2.T) / m
    db3 = sum(dZ3, axis=1, keepdims=True) / m

    dZ2 = dot(W3.T, dZ3) * (A2 * (1-A2))    # Sigmoid derivative: A2*(1-A2)
    dW2 = dot(dZ2, A1.T) / m
    db2 = sum(dZ2, axis=1, keepdims=True) / m

    dZ1 = dot(W2.T, dZ2) * (A1 * (1-A1))    # Sigmoid derivative: A1*(1-A1)
    dW1 = dot(dZ1, X) / m
    db1 = sum(dZ1, axis=1, keepdims=True) / m

    grads = {'dW1':dW1,
             'db1':db1,
             'dW2':dW2,
             'db2':db2,
             'dW3':dW3,
             'db3':db3}

    return grads

#------------------------------------------------------------
# Update the parameters
def update_parameters(parameters, grads, learning_rate):
    W1 = parameters['W1']
    b1 = parameters['b1']
    W2 = parameters['W2']
    b2 = parameters['b2']
    W3 = parameters['W3']
    b3 = parameters['b3']

    dW1 = grads['dW1']
    db1 = grads['db1']
    dW2 = grads['dW2']
    db2 = grads['db2']
    dW3 = grads['dW3']
    db3 = grads['db3']

    W1 = W1 - learning_rate * dW1
    W2 = W2 - learning_rate * dW2
    W3 = W3 - learning_rate * dW3
    b1 = b1 - learning_rate * db1
    b2 = b2 - learning_rate * db2
    b3 = b3 - learning_rate * db3

    parameters = {'W1':W1,
                  'b1':b1,
                  'W2':W2,
                  'b2':b2,
                  'W3':W3,
                  'b3':b3}

    return parameters

#------------------------------------------------------------
# END OF FILE : BP2SIGMOID.PY
#============================================================
```


Source: zhuoqing.blog.csdn.net. Author: 卓晴 (Zhuo Qing). Copyright belongs to the original author; please contact the author before reposting.

Original link: zhuoqing.blog.csdn.net/article/details/109830516
