Installing a single-master Kubernetes cluster with kubeadm (manual version)

Node information

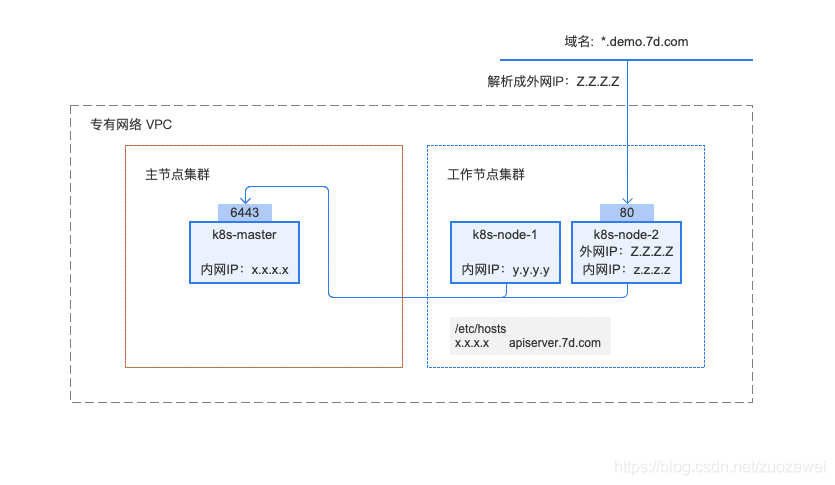

The topology after installation is as follows:

Change the hostnames

#On the master node:

hostnamectl set-hostname k8s-master

#On node1:

hostnamectl set-hostname k8s-node1

#On node2:

hostnamectl set-hostname k8s-node2

#On node3:

hostnamectl set-hostname k8s-node3

Basic configuration

#Step 1: Edit /etc/hosts and add the following entries

172.16.106.226 k8s-master

172.16.106.209 k8s-node1

172.16.106.239 k8s-node2

172.16.106.205 k8s-node3

#Step 2: Copy the file to the other hosts (repeat for k8s-node2 and k8s-node3)

scp /etc/hosts root@k8s-node1:/etc/
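The scp line above copies the file only to k8s-node1, and the same copy step comes up several more times below. The following is a minimal sketch of a loop that pushes a file to all three worker nodes. It assumes the master has root SSH access to each node and that the hostnames are the ones defined in /etc/hosts above. The names push_to_nodes and DRY_RUN are hypothetical. With DRY_RUN=1 the function only prints the scp commands instead of running them.

```shell
# Hypothetical helper: copy a local file to the same directory on every worker node.
# Assumes root SSH access to k8s-node1..k8s-node3 (names from /etc/hosts above).
# With DRY_RUN=1 the scp commands are only printed, not executed.
NODES="k8s-node1 k8s-node2 k8s-node3"

push_to_nodes() {
    file="$1"
    dest_dir=$(dirname "$file")
    for node in $NODES; do
        if [ "${DRY_RUN:-0}" = "1" ]; then
            echo "scp $file root@$node:$dest_dir/"
        else
            scp "$file" "root@$node:$dest_dir/" || return 1
        fi
    done
}
```

For example, DRY_RUN=1 push_to_nodes /etc/hosts previews the three copies. The same helper could also be used for the later "copy to the other hosts" steps.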

#Step 3: Disable the firewall and SELinux

systemctl stop firewalld && systemctl disable firewalld

sed -i 's/^SELINUX=enforcing$/SELINUX=disabled/' /etc/selinux/config && setenforce 0

#Step 4: Disable swap

swapoff -a

yes | cp /etc/fstab /etc/fstab_bak

cat /etc/fstab_bak |grep -v swap > /etc/fstab
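The grep -v swap command removes every line that contains the word "swap", including comments or other entries that merely mention it. Below is a slightly more conservative sketch that comments out only the entries whose filesystem type (the third fstab field) is swap. It assumes a standard fstab layout. The function name disable_swap_entries is hypothetical, and it takes any file path, so it can be tried on a copy first.

```shell
# Hypothetical helper: comment out fstab entries whose filesystem type (field 3) is "swap".
# A backup is written to <file>.bak; lines that are already comments are kept unchanged.
disable_swap_entries() {
    fstab="$1"
    cp "$fstab" "$fstab.bak" || return 1
    awk '!/^[ \t]*#/ && $3 == "swap" { print "#" $0; next } { print }' \
        "$fstab.bak" > "$fstab"
}
```

On a real node this would be run as swapoff -a && disable_swap_entries /etc/fstab.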

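Configure time synchronization

Use chrony for time synchronization: the master node synchronizes with public NTP servers on the Internet, and all worker nodes synchronize with the master node.

Configure the master node:

#Step 1: Install chrony: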

yum install -y chrony

#Step 2: Comment out the default NTP servers

sed -i 's/^server/#&/' /etc/chrony.conf

#Step 3: Specify upstream public NTP servers and allow other nodes to sync time from this host

vi /etc/chrony.conf

server 0.asia.pool.ntp.org iburst

server 1.asia.pool.ntp.org iburst

server 2.asia.pool.ntp.org iburst

server 3.asia.pool.ntp.org iburst

allow all

#Step 4: Restart chronyd and enable it at boot:

systemctl enable chronyd && systemctl restart chronyd

#Step 5: Enable network time synchronization

timedatectl set-ntp true

Configure all worker nodes:

#Step 1: Install chrony:

yum install -y chrony

#Step 2: Comment out the default servers

sed -i 's/^server/#&/' /etc/chrony.conf

#Step 3: Use the master node on the internal network as the upstream NTP server

echo server 172.16.106.226 iburst >> /etc/chrony.conf

#Step 4: Restart the service and enable it at boot:

systemctl enable chronyd && systemctl restart chronyd

Run the chronyc sources command on every node. A line starting with ^* means the node is synchronized with that server:

[root@k8s-node1 ~]# chronyc sources

210 Number of sources = 1

MS Name/IP address Stratum Poll Reach LastRx Last sample

===============================================================================

^* k8s-master 3 7 377 53 -51us[ -147us] +/- 22ms
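When checking many nodes, the ^* test can be scripted. The following is a minimal sketch. It assumes the chronyc sources output format shown above, where the line for the server the node is currently synchronized to begins with ^*. The function name chrony_synced is hypothetical.

```shell
# Hypothetical helper: succeed (exit status 0) if chronyc sources output read from
# stdin contains a line starting with "^*", i.e. a selected, synchronized server.
chrony_synced() {
    grep -q '^\^\*'
}
```

Usage: chronyc sources | chrony_synced && echo synced || echo "not synced yet".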

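Adjust iptables-related kernel parameters on the nodes

Some users on RHEL / CentOS 7 have reported traffic being routed incorrectly because iptables is bypassed (bridged traffic does not pass through iptables). Create the /etc/sysctl.d/k8s.conf file with the following content:

#Step 1: Create the configuration file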

vi /etc/sysctl.d/k8s.conf

vm.swappiness = 0

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

#Step 2: Apply the configuration

modprobe br_netfilter

sysctl -p /etc/sysctl.d/k8s.conf

#Step 3: Copy the file to the other hosts (then run Step 2 on each of them)

scp /etc/sysctl.d/k8s.conf root@k8s-node1:/etc/sysctl.d/
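Instead of editing the file interactively with vi, the same settings can be written non-interactively, which makes the step easier to repeat on every node. The following is a sketch that uses heredocs. The function name write_k8s_sysctl is hypothetical. The target paths are parameters, so the function can be tried on scratch files first.

The function also writes br_netfilter to a file under /etc/modules-load.d/. This assumes systemd-modules-load is in use, as it is on CentOS 7. That way the module is loaded again after a reboot; without it, the net.bridge.* settings cannot be applied at boot. Applying the settings immediately still requires modprobe br_netfilter and sysctl -p, as in Step 2.

```shell
# Hypothetical helper: write the Kubernetes sysctl settings shown above.
# $1: sysctl file       (default /etc/sysctl.d/k8s.conf)
# $2: modules-load file (default /etc/modules-load.d/k8s.conf), read by
#     systemd-modules-load so br_netfilter is loaded again after a reboot
write_k8s_sysctl() {
    sysctl_file="${1:-/etc/sysctl.d/k8s.conf}"
    modules_file="${2:-/etc/modules-load.d/k8s.conf}"
    cat > "$sysctl_file" <<'EOF'
vm.swappiness = 0
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
    echo br_netfilter > "$modules_file"
}
```

On a node: write_k8s_sysctl && modprobe br_netfilter && sysctl -p /etc/sysctl.d/k8s.conf.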

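Load the IPVS kernel modules

Because IPVS has been merged into the mainline kernel, enabling IPVS mode for kube-proxy only requires loading the following kernel modules.

Run the following script on all Kubernetes nodes:

#Step 1: Create the script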

vi /etc/sysconfig/modules/ipvs.modules

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

#Step 2: Copy the script to the other hosts

scp /etc/sysconfig/modules/ipvs.modules root@k8s-node1:/etc/sysconfig/modules/

#Step 3: Run the script (on every node)

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4

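The script is saved as /etc/sysconfig/modules/ipvs.modules so that the required modules are loaded automatically after a node reboots. Use lsmod | grep -e ip_vs -e nf_conntrack_ipv4 to check that the required kernel modules have been loaded.

Next, make sure the ipset package is installed on every node. To make IPVS proxy rules easier to inspect, it is also a good idea to install the ipvsadm management tool.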

yum install ipset ipvsadm -y
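One caveat about the script above: nf_conntrack_ipv4 exists only on older kernels, such as the 3.10 kernel in CentOS 7. Since Linux 4.19 it has been merged into nf_conntrack, so modprobe -- nf_conntrack_ipv4 fails on newer kernels. The following sketch asks modinfo which module the running kernel actually provides. It does this instead of comparing version numbers, because distribution kernels may backport the change. The function name conntrack_module is hypothetical.

```shell
# Hypothetical helper: print the conntrack module name to load on this kernel.
# nf_conntrack_ipv4 was merged into nf_conntrack in Linux 4.19.
conntrack_module() {
    if modinfo nf_conntrack_ipv4 >/dev/null 2>&1; then
        echo nf_conntrack_ipv4    # older kernels, e.g. CentOS 7 (3.10)
    else
        echo nf_conntrack         # newer kernels
    fi
}
```

With the function defined inside ipvs.modules, the last line of the script would become modprobe -- "$(conntrack_module)". The check would then be lsmod | grep -e ip_vs -e nf_conntrack.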

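Install Docker

Docker is still the default container runtime for Kubernetes (as of v1.18); the kubelet uses it through its built-in dockershim CRI implementation.

# Step 1: Install the required system tools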

sudo yum install -y yum-utils device-mapper-persistent-data lvm2

# Step 2: Add the repository

sudo yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Step 3: Refresh the cache and install Docker CE

sudo yum makecache fast

sudo yum -y install docker-ce docker-ce-selinux

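# Note:

# The official repository enables only the latest stable packages by default; other versions can be obtained by editing the repository file. For example, the test channel is not enabled by default and can be enabled as follows. Other channels can be enabled the same way.

# vim /etc/yum.repos.d/docker-ce.repo

# Change enabled=0 to enabled=1 under [docker-ce-test]

#

# Install a specific version of Docker CE:

# Step 3.1: List the available Docker CE versions: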

# yum list docker-ce.x86_64 --showduplicates | sort -r

# Loading mirror speeds from cached hostfile

# Loaded plugins: branch, fastestmirror, langpacks

# docker-ce.x86_64 18.03.1.ce-1.el7.centos docker-ce-stable

# docker-ce.x86_64 18.03.1.ce-1.el7.centos @docker-ce-stable

# docker-ce.x86_64 18.03.0.ce-1.el7.centos docker-ce-stable

# Available Packages

# Step 3.2: Install the specified Docker CE version (VERSION is, for example, 18.03.0.ce-1.el7.centos from the list above)

sudo yum -y --setopt=obsoletes=0 install docker-ce-[VERSION] \
docker-ce-selinux-[VERSION]

# Step 4: Start the Docker service

sudo systemctl enable docker && sudo systemctl start docker

Uninstall an old version of Docker (do this before installing Docker CE if an old version is present):

yum remove docker \
docker-common \
docker-selinux \
docker-engine

Verify the installation (output of docker version):

Client: Docker Engine - Community

Version: 19.03.11

API version: 1.40

Go version: go1.13.10

Git commit: 42e35e61f3

Built: Mon Jun 1 09:13:48 2020

OS/Arch: linux/amd64

Experimental: false

Server: Docker Engine - Community

Engine:

Version: 19.03.11

API version: 1.40 (minimum version 1.12)

Go version: go1.13.10

Git commit: 42e35e61f3

Built: Mon Jun 1 09:12:26 2020

OS/Arch: linux/amd64

Experimental: false

containerd:

Version: 1.2.13

GitCommit: 7ad184331fa3e55e52b890ea95e65ba581ae3429

runc:

Version: 1.0.0-rc10

GitCommit: dc9208a3303feef5b3839f4323d9beb36df0a9dd

docker-init:

Version: 0.18.0

GitCommit: fec3683
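The kubeadm init output below warns that Docker uses the "cgroupfs" cgroup driver, while "systemd" is recommended. One common way to follow that recommendation is to set the native.cgroupdriver exec option and restart Docker, before running kubeadm init or kubeadm join. This sketch assumes Docker reads /etc/docker/daemon.json, its default configuration file. The function name and the path parameter are hypothetical. Note that the function overwrites any existing daemon.json.

```shell
# Hypothetical helper: configure Docker to use the systemd cgroup driver.
# Overwrites the target file; merge by hand if you already have a daemon.json.
write_docker_daemon_json() {
    target="${1:-/etc/docker/daemon.json}"
    mkdir -p "$(dirname "$target")" || return 1
    cat > "$target" <<'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}
```

On a node: write_docker_daemon_json && systemctl restart docker. Afterwards, docker info | grep -i 'cgroup driver' should report systemd.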

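Install kubeadm, kubelet and kubectl

Install kubeadm, kubelet and kubectl on every node:

- kubelet: the core component that runs on every node in the cluster and performs operations such as starting Pods and containers.

- kubeadm: the command-line tool that bootstraps a Kubernetes cluster; it is used to initialize the cluster.

- kubectl: the Kubernetes command-line tool. With kubectl you can deploy and manage applications, view resources, and create, delete and update components.

#Step 1: Configure the kubernetes.repo source. The official repository is not reachable from mainland China, so the Aliyun yum mirror is used here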

vi /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

#Step 2: Copy the repository file to the other hosts

scp /etc/yum.repos.d/kubernetes.repo root@k8s-node1:/etc/yum.repos.d/

#Step 3: Refresh the yum cache

sudo yum makecache fast

#Step 4: Install on all nodes

yum install -y kubelet kubeadm kubectl

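#Step 5: Enable and start the kubelet service (it restarts in a loop until kubeadm init or kubeadm join gives it a configuration)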

systemctl enable kubelet && systemctl start kubelet
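yum install -y kubelet kubeadm kubectl installs the newest packages in the repository. That is why the nodes later report v1.18.4 even though kubeadm init is run with --kubernetes-version v1.18.1. If you want the packages to match the control-plane version, the following sketch builds a pinned package list. It assumes the name-version form that yum accepts (for example, kubelet-1.18.1). The function name k8s_packages is hypothetical.

```shell
# Hypothetical helper: print kubelet/kubeadm/kubectl package names pinned to one version.
# Accepts "v1.18.1" or "1.18.1".
k8s_packages() {
    version="${1#v}"
    case "$version" in
        [0-9]*.[0-9]*.[0-9]*) ;;
        *) echo "usage: k8s_packages 1.18.1" >&2; return 1 ;;
    esac
    echo "kubelet-$version kubeadm-$version kubectl-$version"
}
```

Usage: yum install -y $(k8s_packages v1.18.1). The substitution is left unquoted on purpose, so that it splits into three package names.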

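For the official installation documentation, see:

Deploy the master node

Initialize the master node:

kubeadm init \
--apiserver-advertise-address=172.16.106.226 \
--image-repository registry.aliyuncs.com/google_containers \
--kubernetes-version v1.18.1 \
--pod-network-cidr=10.244.0.0/16

Note that the --image-repository option is used here so that the images required for initialization are pulled from the Aliyun image registry.

Explanation of the init options:

- --apiserver-advertise-address: specifies which interface on the master is used to communicate with the other nodes in the cluster. If the master has several interfaces, specify it explicitly; otherwise kubeadm picks the interface that has the default gateway.

- --pod-network-cidr: specifies the range of the Pod network. Kubernetes supports several network solutions, and each has its own requirements for --pod-network-cidr. It is set to 10.244.0.0/16 here because we use flannel, and this CIDR matches the Network value in flannel's default manifest.

- --image-repository: the default Kubernetes registry is k8s.gcr.io, which is not reachable from mainland China. With kubeadm 1.18 you can pass --image-repository (default k8s.gcr.io) and point it at the Aliyun mirror registry.aliyuncs.com/google_containers.

- --kubernetes-version=v1.18.1: disables version detection. The default value is stable-1, which makes kubeadm download the latest version number from https://dl.k8s.io/release/stable-1.txt. Pinning a fixed version (v1.18.1 here) skips this network request.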
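The same options can also be kept in a configuration file and passed with kubeadm init --config. The following sketch assumes the kubeadm.k8s.io/v1beta2 configuration API used by kubeadm 1.18. It also sets kube-proxy's mode to ipvs. Without that setting, the IPVS modules loaded earlier go unused, because kube-proxy's default mode is iptables. The file name kubeadm-config.yaml and the function name are hypothetical.

```shell
# Hypothetical helper: write a kubeadm config equivalent to the kubeadm init flags above,
# plus kube-proxy in IPVS mode. Uses the kubeadm.k8s.io/v1beta2 API (kubeadm 1.18).
write_kubeadm_config() {
    target="${1:-kubeadm-config.yaml}"
    cat > "$target" <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta2
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 172.16.106.226
---
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.18.1
imageRepository: registry.aliyuncs.com/google_containers
networking:
  podSubnet: 10.244.0.0/16
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs
EOF
}
```

Usage: write_kubeadm_config && kubeadm init --config kubeadm-config.yaml. Once the cluster is up, ipvsadm -Ln should list the Service virtual servers.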

The initialization output is as follows:

W0620 11:53:21.635124 21454 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]

[init] Using Kubernetes version: v1.18.1

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [k8s-master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 172.16.106.226]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [k8s-master localhost] and IPs [172.16.106.226 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [k8s-master localhost] and IPs [172.16.106.226 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

W0620 11:54:02.402998 21454 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[control-plane] Creating static Pod manifest for "kube-scheduler"

W0620 11:54:02.404297 21454 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 17.504415 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.18" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node k8s-master as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node k8s-master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: ztz3qu.ee9gdjh32g228l4k

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 172.16.106.226:6443 --token ztz3qu.ee9gdjh32g228l4k \
--discovery-token-ca-cert-hash sha256:24411c65811afb54501be97ad0cf28c87dc9f51ca0ee5c49f71e58b535d91a43

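(Make a note of the kubeadm join command in the output; you will need it when deploying the worker nodes.)

What the initialization does:

- [preflight]: kubeadm runs pre-flight checks before initializing.

- [kubelet-start]: generates the kubelet configuration file "/var/lib/kubelet/config.yaml".

- [certs]: generates the various certificates and keys.

- [kubeconfig]: generates the kubeconfig files, including kubelet.conf, which the kubelet uses to communicate with the master.

- [control-plane]: installs the master components, pulling their Docker images from the specified registry.

- [bootstrap-token]: generates the bootstrap token; write it down, because it is needed later when adding nodes with kubeadm join.

- [addons]: installs the CoreDNS and kube-proxy add-ons.

- The Kubernetes master has been initialized successfully, and the output explains how to configure kubectl access for a regular user.

- It explains how to install a Pod network.

- It explains how to register other nodes with the cluster.

For the complete official documentation, see:

- https://kubernetes.io/docs/setup/independent/create-cluster-kubeadm/

- https://kubernetes.io/docs/reference/setup-tools/kubeadm/kubeadm-init/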

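Configure kubectl

kubectl is the command-line tool for managing a Kubernetes cluster, and it has already been installed on all nodes. After the master is initialized, a little configuration is needed before kubectl can be used.

Following the hint at the end of the kubeadm init output, it is recommended to run kubectl as a regular Linux user.

#Step 1: Create a regular user 7d and set its password to 123456

useradd 7d && echo "7d:123456" | chpasswd

#Step 2: Grant the user sudo privileges without a password prompt

sed -i '/^root/a\7d ALL=(ALL) NOPASSWD:ALL' /etc/sudoers

#Step 3: Save the cluster's security configuration file to the current user's .kube directory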

su - 7d

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

#Step 4: Enable kubectl command completion (requires the bash-completion package; takes effect after logging out and back in)

echo "source <(kubectl completion bash)" >> ~/.bashrc

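These commands are needed because access to a Kubernetes cluster is authenticated and encrypted by default. They save the security configuration file generated for the new cluster into the current user's .kube directory, and kubectl uses the credentials in that directory by default when accessing the cluster.

Without this, you would have to tell kubectl where the file is every time by exporting the KUBECONFIG environment variable.

Once this is done, the 7d user can manage the cluster with kubectl.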
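Step 2 above appends a line to /etc/sudoers with sed. That works, but a syntax error in that file can lock you out of sudo. An alternative sketch writes a separate drop-in file with mode 0440 and checks it with visudo -cf, if visudo is available. It assumes the stock "#includedir /etc/sudoers.d" line is present in /etc/sudoers, as it is on CentOS 7. The function name grant_nopasswd_sudo is hypothetical.

```shell
# Hypothetical helper: grant passwordless sudo to a user via a sudoers drop-in file.
# $1: user name; $2: drop-in directory (default /etc/sudoers.d)
grant_nopasswd_sudo() {
    user="$1"
    dir="${2:-/etc/sudoers.d}"
    file="$dir/$user"
    printf '%s ALL=(ALL) NOPASSWD:ALL\n' "$user" > "$file" || return 1
    chmod 0440 "$file"
    # Validate the syntax when visudo is available; remove the file if it is invalid.
    if command -v visudo >/dev/null 2>&1 && ! visudo -cf "$file" >/dev/null; then
        rm -f "$file"
        return 1
    fi
}
```

Usage, as root: grant_nopasswd_sudo 7d.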

Check the cluster status and confirm that every component is Healthy:

[7d@k8s-master ~]$ kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy {"health":"true"}

Check the node status:

[7d@k8s-master ~]$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master NotReady master 159m v1.18.4

There is currently only one node, the master, and its status is NotReady.

Use kubectl describe to view the details, status and events of this Node object:

[7d@k8s-master ~]$ kubectl describe node k8s-master

......

Conditions:

Type Status LastHeartbeatTime LastTransitionTime Reason Message

---- ------ ----------------- ------------------ ------ -------

MemoryPressure False Sat, 20 Jun 2020 14:30:30 +0800 Sat, 20 Jun 2020 11:54:13 +0800 KubeletHasSufficientMemory kubelet has sufficient memory available

DiskPressure False Sat, 20 Jun 2020 14:30:30 +0800 Sat, 20 Jun 2020 11:54:13 +0800 KubeletHasNoDiskPressure kubelet has no disk pressure

PIDPressure False Sat, 20 Jun 2020 14:30:30 +0800 Sat, 20 Jun 2020 11:54:13 +0800 KubeletHasSufficientPID kubelet has sufficient PID available

Ready False Sat, 20 Jun 2020 14:30:30 +0800 Sat, 20 Jun 2020 11:54:13 +0800 KubeletNotReady runtime network not ready: NetworkReady=false reason:NetworkPluginNotReady message:docker: network plugin is not ready: cni config uninitialized

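The kubectl describe output shows that the node is NotReady because no network plugin has been deployed yet ("cni config uninitialized").

We can also use kubectl to check the status of the system Pods on this node. kube-system is the namespace that Kubernetes reserves for system Pods. (A Kubernetes Namespace is not a Linux namespace; it is simply the unit Kubernetes uses to divide workspaces.)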

[7d@k8s-master ~]$ kubectl get pod -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

coredns-7ff77c879f-24n99 0/1 Pending 0 162m <none> <none> <none> <none>

coredns-7ff77c879f-jdqkz 0/1 Pending 0 162m <none> <none> <none> <none>

etcd-k8s-master 1/1 Running 0 162m 172.16.106.226 k8s-master <none> <none>

kube-apiserver-k8s-master 1/1 Running 0 162m 172.16.106.226 k8s-master <none> <none>

kube-controller-manager-k8s-master 1/1 Running 0 162m 172.16.106.226 k8s-master <none> <none>

kube-proxy-56qcc 1/1 Running 0 162m 172.16.106.226 k8s-master <none> <none>

kube-scheduler-k8s-master 1/1 Running 0 162m 172.16.106.226 k8s-master <none> <none>

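The CoreDNS Pods, which depend on the Pod network, are Pending, which means they cannot be scheduled. This is expected, because the network on the master node is not ready yet.

If initialization runs into problems, clean up with kubeadm reset and then run the initialization again.

Deploy the network plugin

The Kubernetes cluster cannot work until a Pod network is installed; without it, Pods cannot communicate with each other.

Kubernetes supports several network solutions; here we use flannel.

#Step 1: Download the manifest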

[7d@k8s-master ~]$ wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml
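Before applying the manifest, confirm that the Network value in its net-conf.json section matches the --pod-network-cidr passed to kubeadm init (10.244.0.0/16 here). If you used a different CIDR, edit the manifest to match. The following minimal sketch assumes the manifest contains a line of the form "Network": "10.244.0.0/16", as the upstream kube-flannel.yml does. The function name flannel_network is hypothetical.

```shell
# Hypothetical helper: print the "Network" value from a flannel manifest's net-conf.json.
flannel_network() {
    sed -n 's/.*"Network": *"\([^"]*\)".*/\1/p' "$1" | head -n 1
}
```

Usage: [ "$(flannel_network kube-flannel.yml)" = "10.244.0.0/16" ] && echo "CIDR matches".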

#Step 2: Install flannel

[7d@k8s-master ~]$ kubectl apply -f kube-flannel.yml

podsecuritypolicy.policy/psp.flannel.unprivileged created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds-amd64 created

daemonset.apps/kube-flannel-ds-arm64 created

daemonset.apps/kube-flannel-ds-arm created

daemonset.apps/kube-flannel-ds-ppc64le created

daemonset.apps/kube-flannel-ds-s390x created

#Step 3: Check the Pod status again

[7d@k8s-master ~]$ kubectl get pod -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

coredns-7ff77c879f-24n99 1/1 Running 0 167m 10.244.0.2 k8s-master <none> <none>

coredns-7ff77c879f-jdqkz 1/1 Running 0 167m 10.244.0.3 k8s-master <none> <none>

etcd-k8s-master 1/1 Running 0 167m 172.16.106.226 k8s-master <none> <none>

kube-apiserver-k8s-master 1/1 Running 0 167m 172.16.106.226 k8s-master <none> <none>

kube-controller-manager-k8s-master 1/1 Running 0 167m 172.16.106.226 k8s-master <none> <none>

kube-flannel-ds-amd64-5xhp5 1/1 Running 0 69s 172.16.106.226 k8s-master <none> <none>

kube-proxy-56qcc 1/1 Running 0 167m 172.16.106.226 k8s-master <none> <none>

kube-scheduler-k8s-master 1/1 Running 0 167m 172.16.106.226 k8s-master <none> <none>

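All system Pods are now running. The flannel network plugin just deployed has created a Pod named kube-flannel-ds-amd64-5xhp5 in kube-system. Pods like this are generally the control components of the container network plugin on each node.

Kubernetes supports container network plugins through a common interface called CNI, which is also the de facto standard for container networking today. Most open-source container networking projects, such as Flannel, Calico, Canal and Romana, plug into Kubernetes through CNI, and they are all deployed in a similar "one-command" way.

Checking again, the master node is now Ready: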

[7d@k8s-master ~]$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready master 167m v1.18.4

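At this point the Kubernetes master node is deployed. If you only need a single-node Kubernetes cluster, you can start using it now. By default, however, the master node does not run user Pods, because kubeadm init added the node-role.kubernetes.io/master:NoSchedule taint to it.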
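If you do want the single master to run ordinary Pods (for a test cluster, for example), you can remove that taint with kubectl taint. The trailing "-" after the taint deletes it. The wrapper below is hypothetical. With DRY_RUN=1 it only prints the command.

```shell
# Hypothetical wrapper: remove the master NoSchedule taint so user Pods can be scheduled there.
# With DRY_RUN=1 the command is printed instead of executed.
allow_pods_on_master() {
    node="${1:-k8s-master}"
    set -- kubectl taint nodes "$node" node-role.kubernetes.io/master:NoSchedule-
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$@"
    else
        "$@"
    fi
}
```

Run it as the 7d user on the master: allow_pods_on_master.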

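Deploy the worker nodes

A Kubernetes worker node is almost identical to the master node: both run the kubelet. The only difference is that during kubeadm init, once the kubelet is up, the master also automatically runs the kube-apiserver, kube-scheduler and kube-controller-manager system Pods.

Run the following command on each worker node to register it with the cluster:

#Run the following command to join the node to the cluster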

kubeadm join 172.16.106.226:6443 --token ztz3qu.ee9gdjh32g228l4k \
--discovery-token-ca-cert-hash sha256:24411c65811afb54501be97ad0cf28c87dc9f51ca0ee5c49f71e58b535d91a43

#If you did not record the join command printed by kubeadm init, generate a new one on the master (bootstrap tokens expire after 24 hours by default)

kubeadm token create --print-join-command
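If only the token is at hand, you can recompute the --discovery-token-ca-cert-hash value on the master from the cluster CA certificate. The sketch below wraps the openssl pipeline given in the kubeadm documentation in a hypothetical function, ca_cert_hash. It assumes the default CA path /etc/kubernetes/pki/ca.crt and an RSA CA key, which is the kubeadm default.

```shell
# Hypothetical helper: compute the sha256 hash of the CA public key, as used by
# kubeadm join --discovery-token-ca-cert-hash sha256:<hash>.
# Assumes an RSA CA key (kubeadm's default).
ca_cert_hash() {
    ca="${1:-/etc/kubernetes/pki/ca.crt}"
    openssl x509 -pubkey -in "$ca" \
        | openssl rsa -pubin -outform der 2>/dev/null \
        | openssl dgst -sha256 -hex \
        | sed 's/^.* //'
}
```

Usage: kubeadm join 172.16.106.226:6443 --token <token> --discovery-token-ca-cert-hash sha256:$(ca_cert_hash).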

Run kubeadm join on k8s-node1:

[root@k8s-node1 ~]# kubeadm join 172.16.106.226:6443 --token ztz3qu.ee9gdjh32g228l4k \
> --discovery-token-ca-cert-hash sha256:24411c65811afb54501be97ad0cf28c87dc9f51ca0ee5c49f71e58b535d91a43 ;

W0620 14:44:55.729260 32211 join.go:346] [preflight] WARNING: JoinControlPane.controlPlane settings will be ignored when control-plane flag is not set.

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[kubelet-start] Downloading configuration for the kubelet from the "kubelet-config-1.18" ConfigMap in the kube-system namespace

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

Repeat the steps above to add the other nodes.

Then, as the output suggests, check the node status with kubectl get nodes:

[7d@k8s-master ~]$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready master 4h43m v1.18.4

k8s-node1 Ready <none> 112m v1.18.4

k8s-node2 Ready <none> 2m14s v1.18.4

k8s-node3 Ready <none> 4m57s v1.18.4

All nodes are Ready. Because every node has to start several components, if a node stays NotReady, check the Pods on all nodes and make sure every Pod has pulled its images and is Running:

[7d@k8s-master ~]$ kubectl get pod --all-namespaces -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

kube-system coredns-7ff77c879f-24n99 1/1 Running 0 4h46m 10.244.0.2 k8s-master <none> <none>

kube-system coredns-7ff77c879f-jdqkz 1/1 Running 0 4h46m 10.244.0.3 k8s-master <none> <none>

kube-system etcd-k8s-master 1/1 Running 0 4h46m 172.16.106.226 k8s-master <none> <none>

kube-system kube-apiserver-k8s-master 1/1 Running 0 4h46m 172.16.106.226 k8s-master <none> <none>

kube-system kube-controller-manager-k8s-master 1/1 Running 0 4h46m 172.16.106.226 k8s-master <none> <none>

kube-system kube-flannel-ds-amd64-5xhp5 1/1 Running 0 120m 172.16.106.226 k8s-master <none> <none>

kube-system kube-flannel-ds-amd64-hbq9m 1/1 Running 0 116m 172.16.106.209 k8s-node1 <none> <none>

kube-system kube-flannel-ds-amd64-j8986 1/1 Running 0 5m25s 172.16.106.239 k8s-node2 <none> <none>

kube-system kube-flannel-ds-amd64-mrhgl 1/1 Running 0 8m8s 172.16.106.205 k8s-node3 <none> <none>

kube-system kube-proxy-56qcc 1/1 Running 0 4h46m 172.16.106.226 k8s-master <none> <none>

kube-system kube-proxy-lw72s 1/1 Running 0 8m8s 172.16.106.205 k8s-node3 <none> <none>

kube-system kube-proxy-q4gcp 1/1 Running 0 5m25s 172.16.106.239 k8s-node2 <none> <none>

kube-system kube-proxy-q4qnn 1/1 Running 0 116m 172.16.106.209 k8s-node1 <none> <none>

kube-system kube-scheduler-k8s-master 1/1 Running 0 4h46m 172.16.106.226 k8s-master <none> <none>

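⚠️ Note:

At this point all nodes are Ready and the Kubernetes cluster has been created successfully. A Pod status of Pending, ContainerCreating or ImagePullBackOff means the Pod is not ready; only Running means it is ready. If a Pod shows Init:ImagePullBackOff, an image for one of its init containers failed to pull on that node. Use kubectl describe pod to see the details and identify which image failed to pull.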
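With many Pods, filtering out the ones that are not ready saves scanning the whole table. The following sketch reads kubectl get pod --all-namespaces output on stdin. It assumes the column layout shown above (NAMESPACE, NAME, READY, STATUS, ...). It reports Pods whose STATUS is not Running, as well as Pods whose READY count is incomplete. The function name pods_not_running is hypothetical.

```shell
# Hypothetical helper: print namespace, name, READY and STATUS of every Pod that is not
# Running or whose READY count (e.g. 0/1) shows containers that are not ready yet.
# Expects the output of: kubectl get pod --all-namespaces [-o wide]
pods_not_running() {
    awk 'NR > 1 {
        split($3, ready, "/")
        if ($4 != "Running" || ready[1] != ready[2]) print $1, $2, $3, $4
    }'
}
```

Usage: kubectl get pod --all-namespaces | pods_not_running. Empty output means every Pod is running and ready. For any Pod it lists, follow up with kubectl describe pod <name> -n <namespace>.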

Images pulled on the master node:

[7d@k8s-master ~]$ sudo docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

registry.aliyuncs.com/google_containers/kube-proxy v1.18.1 4e68534e24f6 2 months ago 117MB

registry.aliyuncs.com/google_containers/kube-apiserver v1.18.1 a595af0107f9 2 months ago 173MB

registry.aliyuncs.com/google_containers/kube-controller-manager v1.18.1 d1ccdd18e6ed 2 months ago 162MB

registry.aliyuncs.com/google_containers/kube-scheduler v1.18.1 6c9320041a7b 2 months ago 95.3MB

quay.io/coreos/flannel v0.12.0-amd64 4e9f801d2217 3 months ago 52.8MB

registry.aliyuncs.com/google_containers/pause 3.2 80d28bedfe5d 4 months ago 683kB

registry.aliyuncs.com/google_containers/coredns 1.6.7 67da37a9a360 4 months ago 43.8MB

registry.aliyuncs.com/google_containers/etcd 3.4.3-0 303ce5db0e90 7 months ago 288MB

Images pulled on a worker node:

[root@k8s-node1 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

registry.aliyuncs.com/google_containers/kube-proxy v1.18.1 4e68534e24f6 2 months ago 117MB

quay.io/coreos/flannel v0.12.0-amd64 4e9f801d2217 3 months ago 52.8MB

registry.aliyuncs.com/google_containers/pause 3.2 80d28bedfe5d 4 months ago 683kB

Install the Ingress Controller

Quick setup

Run on the master node:

# Run only on the master node

kubectl apply -f https://kuboard.cn/install-script/v1.18.x/nginx-ingress.yaml

Uninstall the Ingress Controller

Run on the master node.

Uninstall it only if you want to use a different Ingress Controller.

# Run only on the master node

kubectl delete -f https://kuboard.cn/install-script/v1.18.x/nginx-ingress.yaml

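Configure DNS resolution

Resolve the wildcard domain *.demo.yourdomain.com to the IP address of k8s-node1, z.z.z.z (or to the address of k8s-node2, y.y.y.y).

Verify the configuration

Open a.demo.yourdomain.com in a browser; you should get a 404 Not Found error page.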

WARNING

If you plan to use Kubernetes in production, refer to the document Installing Ingress Controller to complete the Ingress configuration.
