Using spaCy

[Summary] Official documentation: https://spacy.io/usage

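spaCy is a natural language processing toolkit for Python. It dates from mid-2014 and bills itself as "Industrial-Strength Natural Language Processing in Python". spaCy relies heavily on Cython to speed up its core modules. That sets it apart from the more academically oriented NLTK and makes it practical for production use.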

Loading the model

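# Import the toolkit and load the English model.
# Download the model first (on Windows, open CMD as administrator):
#   python -m spacy download en_core_web_sm
# The 'en' shortcut used in older tutorials was removed in spaCy v3.
import spacy
nlp = spacy.load('en_core_web_sm')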

Text processing

doc = nlp('Weather is good, very windy and sunny. We have no classes in the afternoon.')

# Tokenization
for token in doc:
    print(token)

OUT:

Weather

is

good

,

very

windy

and

sunny

.

We

have

no

classes

in

the

afternoon

.

---------------------------------

# Sentence segmentation
for sent in doc.sents:
    print(sent)

OUT:

Weather is good, very windy and sunny.

We have no classes in the afternoon.
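Sentence boundaries above come from the loaded model. As a minimal sketch, assuming spaCy v3 is installed, the same split can be obtained without downloading a model by adding the rule-based `sentencizer` component to a blank English pipeline. The names `nlp_rules`, `doc_rules` and `rule_sentences` are illustrative and not part of the original article.

```python
import spacy

# Blank English pipeline: tokenizer only, no trained model required.
nlp_rules = spacy.blank("en")

# spaCy v3 syntax (assumption: v3 is installed).
# In v2 the equivalent was nlp.add_pipe(nlp.create_pipe("sentencizer")).
nlp_rules.add_pipe("sentencizer")

doc_rules = nlp_rules(
    "Weather is good, very windy and sunny. We have no classes in the afternoon."
)

# Collect each sentence as plain text.
rule_sentences = [sent.text for sent in doc_rules.sents]
for sentence in rule_sentences:
    print(sentence)
```

The sentencizer splits on punctuation only, so it is fast but can differ from the model's boundaries on harder text such as abbreviations.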

Part-of-speech tags (reference: https://www.winwaed.com/blog/2011/11/08/part-of-speech-tags/)

for token in doc:
    print('{}-{}'.format(token, token.pos_))

OUT:

Weather-PROPN

is-VERB

good-ADJ

,-PUNCT

very-ADV

windy-ADJ

and-CCONJ

sunny-ADJ

.-PUNCT

We-PRON

have-VERB

no-DET

classes-NOUN

in-ADP

the-DET

afternoon-NOUN

.-PUNCT
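Once every token carries a tag, a common next step is to keep only the words of one category. The sketch below works on the (token, tag) pairs copied from the output above so that it runs without a model. With a loaded `doc`, the equivalent filter would be `[t.text for t in doc if t.pos_ == 'ADJ']`. The helper `words_with_tag` is a hypothetical name introduced for illustration.

```python
# (token, coarse POS tag) pairs copied from the output above.
tagged = [
    ('Weather', 'PROPN'), ('is', 'VERB'), ('good', 'ADJ'), (',', 'PUNCT'),
    ('very', 'ADV'), ('windy', 'ADJ'), ('and', 'CCONJ'), ('sunny', 'ADJ'),
    ('.', 'PUNCT'), ('We', 'PRON'), ('have', 'VERB'), ('no', 'DET'),
    ('classes', 'NOUN'), ('in', 'ADP'), ('the', 'DET'), ('afternoon', 'NOUN'),
    ('.', 'PUNCT'),
]


def words_with_tag(pairs, tag):
    """Return the words whose POS tag equals `tag`, in document order."""
    return [word for word, pos in pairs if pos == tag]


adjectives = words_with_tag(tagged, 'ADJ')
print(adjectives)  # ['good', 'windy', 'sunny']
```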

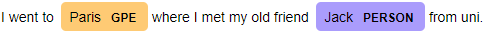

Named entity recognition

doc_2 = nlp("I went to Paris where I met my old friend Jack from uni.")

for ent in doc_2.ents:
    print('{}-{}'.format(ent, ent.label_))

OUT:

Paris-GPE

Jack-PERSON

----

from spacy import displacy

doc = nlp('I went to Paris where I met my old friend Jack from uni.')

displacy.render(doc,style='ent',jupyter=True)
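`jupyter=True` only displays output inside a notebook. Outside Jupyter, a rough sketch (assuming spaCy v3) is to pass `jupyter=False`, so that `displacy.render` returns the markup as a string. To keep the example runnable without a downloaded model, the two entities from the output above are annotated by hand on a blank pipeline. The names `nlp_blank`, `doc_manual` and `html` are illustrative assumptions.

```python
import spacy
from spacy import displacy

# Blank pipeline: tokenizer only, so the entities are set by hand below.
nlp_blank = spacy.blank("en")
text = "I went to Paris where I met my old friend Jack from uni."
doc_manual = nlp_blank(text)

# Build entity spans from character offsets; labels copied from the output above.
paris_start = text.index("Paris")
jack_start = text.index("Jack")
doc_manual.ents = [
    doc_manual.char_span(paris_start, paris_start + len("Paris"), label="GPE"),
    doc_manual.char_span(jack_start, jack_start + len("Jack"), label="PERSON"),
]

# jupyter=False returns the HTML as a string; page=True wraps it in a full page.
html = displacy.render(doc_manual, style="ent", jupyter=False, page=True)
print(len(html))
```

The returned string can be written to an `.html` file and opened in a browser.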

Exercise: find the names of all the characters in the book

def read_file(file_name):
    # Read the whole file into a single string.
    with open(file_name, 'r') as file:
        return file.read()

# Load the text data
text = read_file('./data/pride_and_prejudice.txt')
processed_text = nlp(text)
sentences = [s for s in processed_text.sents]
print(len(sentences))

OUT:

6469

The book contains 6469 sentences in total.

from collections import Counter

def find_person(doc):
    # Count PERSON entities by lemma and return the ten most frequent.
    c = Counter()
    for ent in doc.ents:
        if ent.label_ == 'PERSON':
            c[ent.lemma_] += 1
    return c.most_common(10)

print(find_person(processed_text))

OUT:

[('elizabeth', 604), ('darcy', 276), ('jane', 274), ('bennet', 233), ('bingley', 189), ('collins', 179), ('wickham', 170), ('gardiner', 95), ('lizzy', 94), ('lady catherine', 77)]
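In the output above, 'elizabeth' and 'lizzy' are counted separately although they refer to the same character. One possible follow-up, sketched here with a hand-written alias table, is to fold nicknames into a canonical name before ranking. `ALIASES` and `merge_aliases` are hypothetical names introduced for illustration, and the counts are copied from the output above.

```python
from collections import Counter

# Counts copied from the output above.
person_counts = Counter({
    'elizabeth': 604, 'darcy': 276, 'jane': 274, 'bennet': 233,
    'bingley': 189, 'collins': 179, 'wickham': 170, 'gardiner': 95,
    'lizzy': 94, 'lady catherine': 77,
})

# Hypothetical alias table: nickname -> canonical name.
ALIASES = {'lizzy': 'elizabeth'}


def merge_aliases(counts, aliases):
    """Add each alias count to its canonical name; other names pass through."""
    merged = Counter()
    for name, n in counts.items():
        merged[aliases.get(name, name)] += n
    return merged


merged_counts = merge_aliases(person_counts, ALIASES)
print(merged_counts.most_common(3))
# [('elizabeth', 698), ('darcy', 276), ('jane', 274)]
```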

Done.

Source: maoli.blog.csdn.net. Author: 刘润森!. Copyright belongs to the original author; please contact the author before reposting.

Original link: maoli.blog.csdn.net/article/details/88931036
