MapReduce快速入门系列(15) | MapReduce之数据清洗进阶版本

【摘要】

此片博文是上篇博文的拓展进阶部分。

目录

1. 需求2. 代码实现3. 运行及结果

1. 需求

对Web访问日志中的各字段识别切分,去除日志中不合法的记录。根据清洗规则,输出过滤后的数据。

1. 输入数据 2. 期望输出数据 都是合法的数据

2. 代码实现

1. 定义一个bean,用来记录日志数据中的各数据字段

pack...

此片博文是上篇博文的拓展进阶部分。

1. 需求

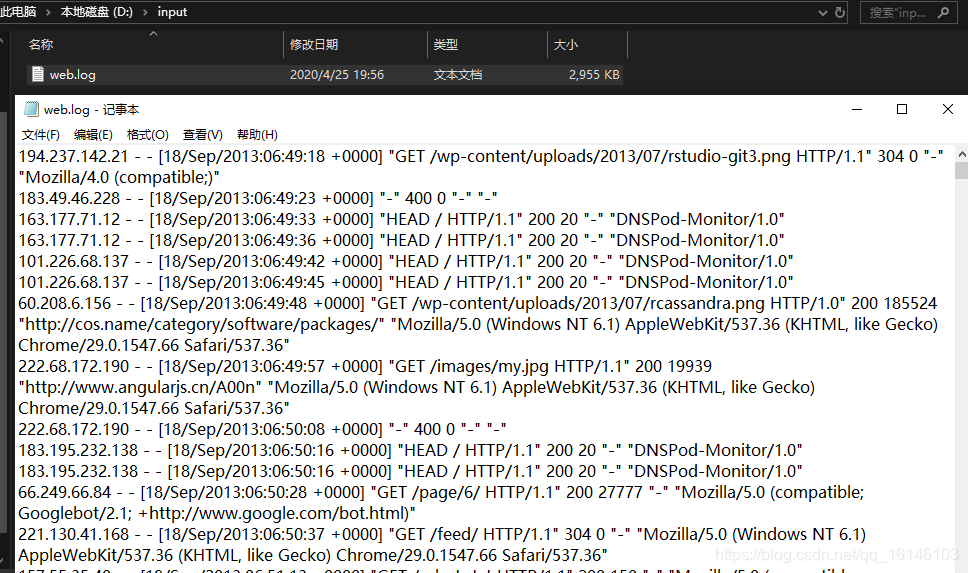

对Web访问日志中的各字段识别切分,去除日志中不合法的记录。根据清洗规则,输出过滤后的数据。

- 1. 输入数据

- 2. 期望输出数据

都是合法的数据

2. 代码实现

- 1. 定义一个bean,用来记录日志数据中的各数据字段

package com.buwenbuhuo.ETLcomplex;

/**

* @author 卜温不火

* @create 2020-04-25 20:08

* com.buwenbuhuo.ETL - the name of the target package where the new class or interface will be created.

* mapreduce0422 - the name of the current project.

*/

public class LogBean { private String remote_addr;// 记录客户端的ip地址 private String remote_user;// 记录客户端用户名称,忽略属性"-" private String time_local;// 记录访问时间与时区 private String request;// 记录请求的url与http协议 private String status;// 记录请求状态;成功是200 private String body_bytes_sent;// 记录发送给客户端文件主体内容大小 private String http_referer;// 用来记录从那个页面链接访问过来的 private String http_user_agent;// 记录客户浏览器的相关信息 private boolean valid = true;// 判断数据是否合法 @Override public String toString() { StringBuilder sb = new StringBuilder(); sb.append(this.valid); sb.append("\001").append(this.remote_addr); sb.append("\001").append(this.remote_user); sb.append("\001").append(this.time_local); sb.append("\001").append(this.request); sb.append("\001").append(this.status); sb.append("\001").append(this.body_bytes_sent); sb.append("\001").append(this.http_referer); sb.append("\001").append(this.http_user_agent); return sb.toString(); } public String getRemote_addr() { return remote_addr; } public void setRemote_addr(String remote_addr) { this.remote_addr = remote_addr; } public String getRemote_user() { return remote_user; } public void setRemote_user(String remote_user) { this.remote_user = remote_user; } public String getTime_local() { return time_local; } public void setTime_local(String time_local) { this.time_local = time_local; } public String getRequest() { return request; } public void setRequest(String request) { this.request = request; } public String getStatus() { return status; } public void setStatus(String status) { this.status = status; } public String getBody_bytes_sent() { return body_bytes_sent; } public void setBody_bytes_sent(String body_bytes_sent) { this.body_bytes_sent = body_bytes_sent; } public String getHttp_referer() { return http_referer; } public void setHttp_referer(String http_referer) { this.http_referer = http_referer; } public String getHttp_user_agent() { return http_user_agent; } public void setHttp_user_agent(String http_user_agent) { this.http_user_agent = http_user_agent; } public boolean isValid() { return valid; } public void setValid(boolean valid) { this.valid = valid; }

}

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

- 87

- 88

- 89

- 90

- 91

- 92

- 93

- 94

- 95

- 96

- 97

- 98

- 99

- 100

- 101

- 102

- 103

- 104

- 105

- 106

- 107

- 108

- 109

- 2. 编写LogMapper类

package com.buwenbuhuo.ETLcomplex;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

/**

* @author 卜温不火

* @create 2020-04-25 20:08

* com.buwenbuhuo.ETL - the name of the target package where the new class or interface will be created.

* mapreduce0422 - the name of the current project.

*/

public class LogMapper extends Mapper<LongWritable, Text, Text, NullWritable> { private LogBean logBean = new LogBean(); private Text k = new Text(); @Override protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException { // 1 获取1行 String line = value.toString(); parseLog(line); if (logBean.isValid()) { k.set(logBean.toString()); context.write(k, NullWritable.get()); context.getCounter("ETL", "True").increment(1); } else { context.getCounter("ETL", "False").increment(1); } } private void parseLog(String line) { String[] fields = line.split(" "); if (fields.length > 11) { // 2封装数据 logBean.setRemote_addr(fields[0]); logBean.setRemote_user(fields[1]); logBean.setTime_local(fields[3].substring(1)); logBean.setRequest(fields[6]); logBean.setStatus(fields[8]); logBean.setBody_bytes_sent(fields[9]); logBean.setHttp_referer(fields[10]); if (fields.length > 12) { logBean.setHttp_user_agent(fields[11] + " "+ fields[12]); }else { logBean.setHttp_user_agent(fields[11]); } // 大于400,HTTP错误 if (Integer.parseInt(logBean.getStatus()) >= 400) { logBean.setValid(false); } else { logBean.setValid(true); } }else { logBean.setValid(false); } }

}

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

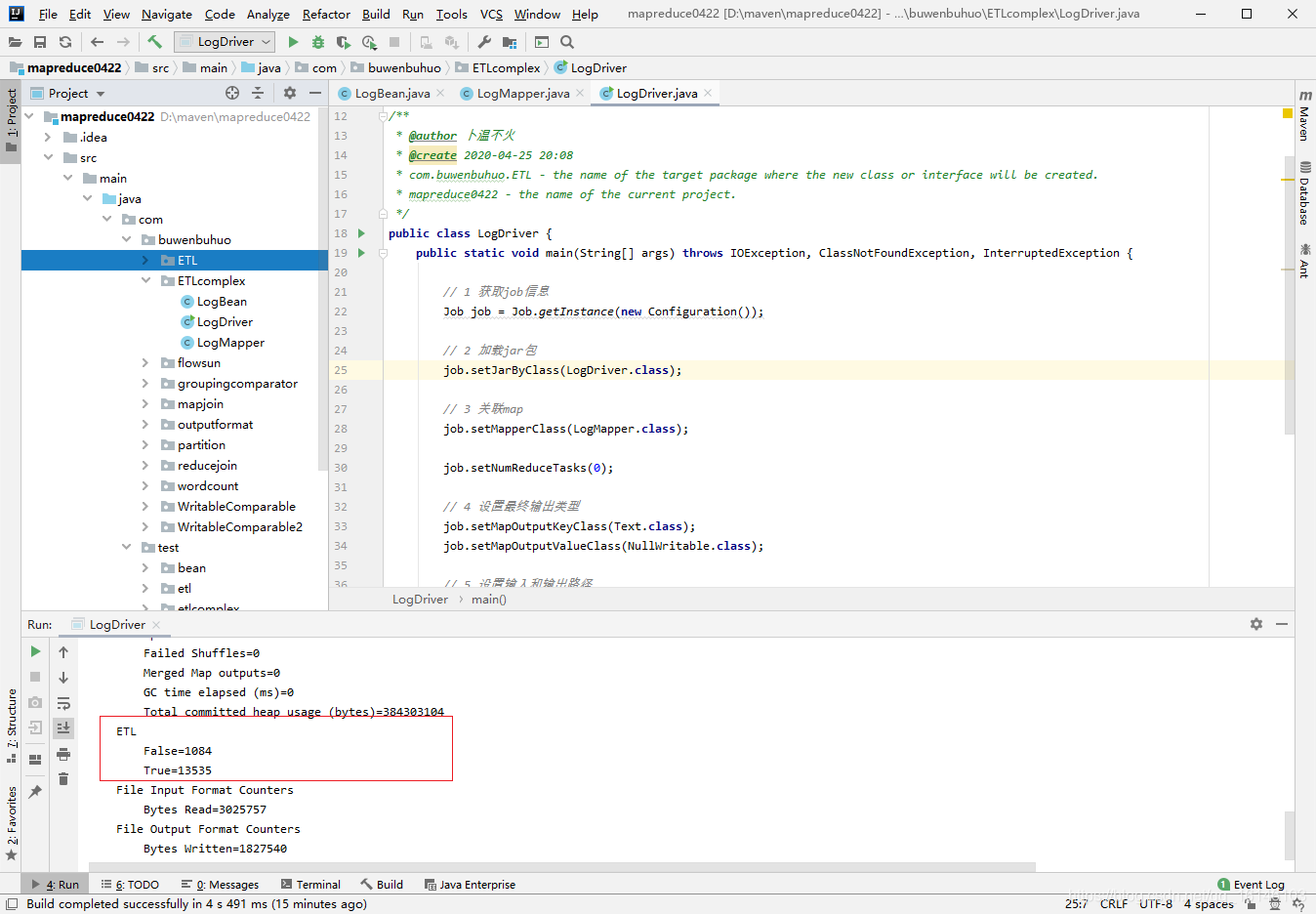

- 3. 编写LogDriver类

package com.buwenbuhuo.ETLcomplex;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

/**

* @author 卜温不火

* @create 2020-04-25 20:08

* com.buwenbuhuo.ETL - the name of the target package where the new class or interface will be created.

* mapreduce0422 - the name of the current project.

*/

public class LogDriver { public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException { // 1 获取job信息 Job job = Job.getInstance(new Configuration()); // 2 加载jar包 job.setJarByClass(LogDriver.class); // 3 关联map job.setMapperClass(LogMapper.class); job.setNumReduceTasks(0); // 4 设置最终输出类型 job.setMapOutputKeyClass(Text.class); job.setMapOutputValueClass(NullWritable.class); // 5 设置输入和输出路径 FileInputFormat.setInputPaths(job, new Path("d:\\input")); FileOutputFormat.setOutputPath(job, new Path("d:\\output")); boolean b = job.waitForCompletion(true); System.exit(b ? 0 : 1); }

}

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

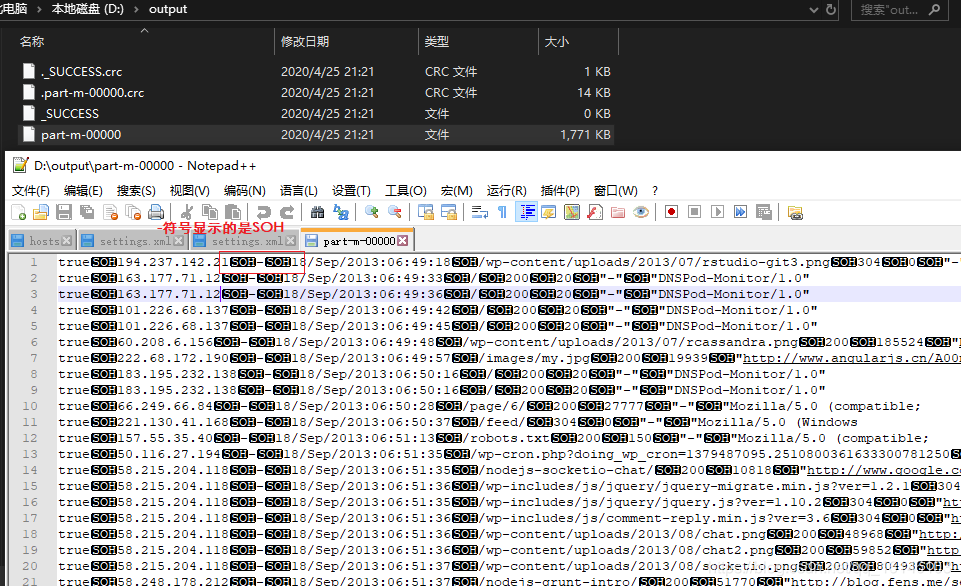

3. 运行及结果

- 1. 运行

- 2. 结果

到了这里说明我们的数据清洗(ETL)算是成功了。那本期的分享到这里也就该结束了,小伙伴们有什么疑惑或好的建议可以在评论区留言或者私信博主都是可以的。

文章来源: buwenbuhuo.blog.csdn.net,作者:不温卜火,版权归原作者所有,如需转载,请联系作者。

原文链接:buwenbuhuo.blog.csdn.net/article/details/105756139

【版权声明】本文为华为云社区用户转载文章,如果您发现本社区中有涉嫌抄袭的内容,欢迎发送邮件进行举报,并提供相关证据,一经查实,本社区将立刻删除涉嫌侵权内容,举报邮箱:

cloudbbs@huaweicloud.com

- 点赞

- 收藏

- 关注作者

评论(0)